For most of the past decade, finding the right creator for a brand campaign was a process that combined two of the worst possible inputs: vanity metrics and gut feeling. A brand manager would open a spreadsheet, pull up a list of influencers ranked by follower count, scan a few recent posts, and make a call. If the creator’s aesthetic looked right and their numbers seemed impressive, they got the deal.

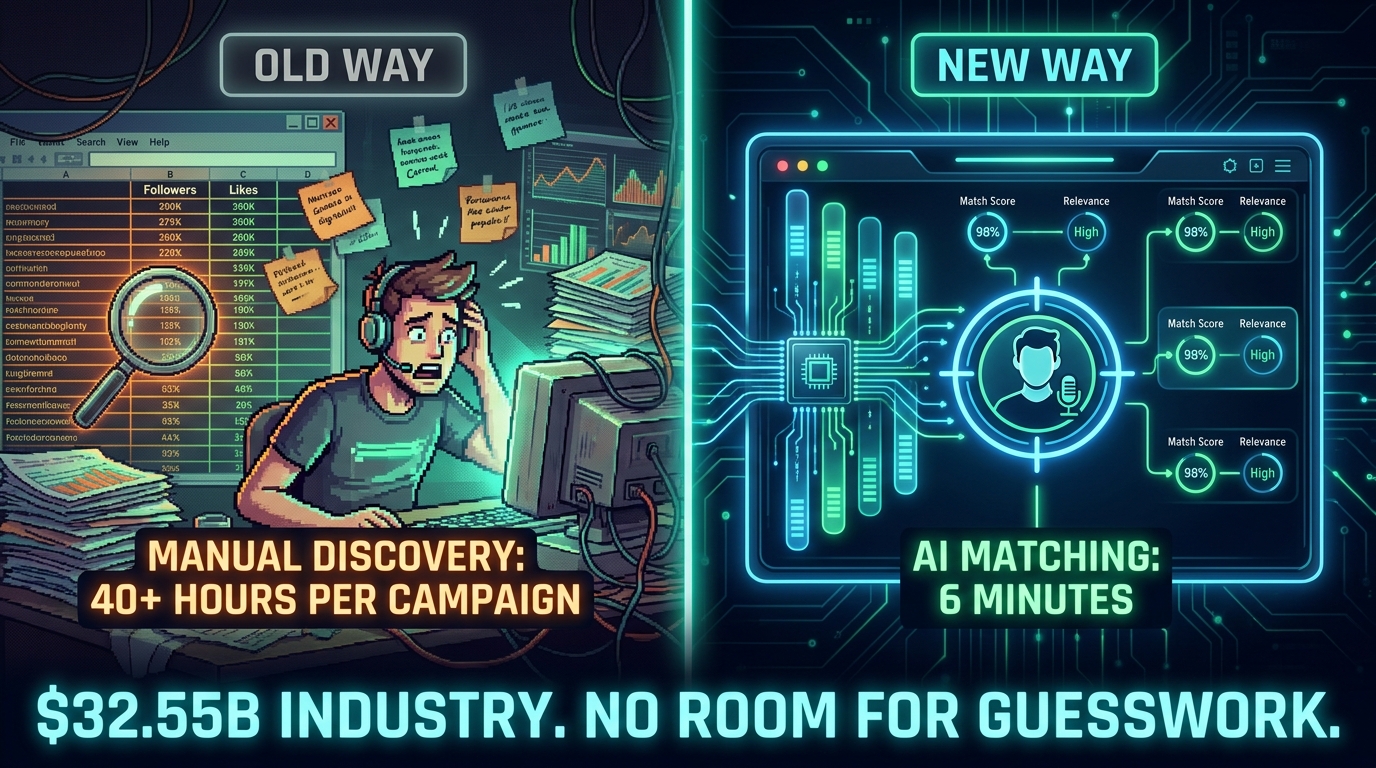

This approach worked — barely — when the creator economy was small enough to manage manually. It does not work now. The influencer marketing industry has crossed $32.55 billion in 2026, with 86% of US marketers actively running creator partnerships. There are millions of creators across TikTok, Instagram, YouTube, and emerging platforms. No spreadsheet in the world can process that volume accurately, and no human gut can reliably predict which creator will actually convert an audience into customers.

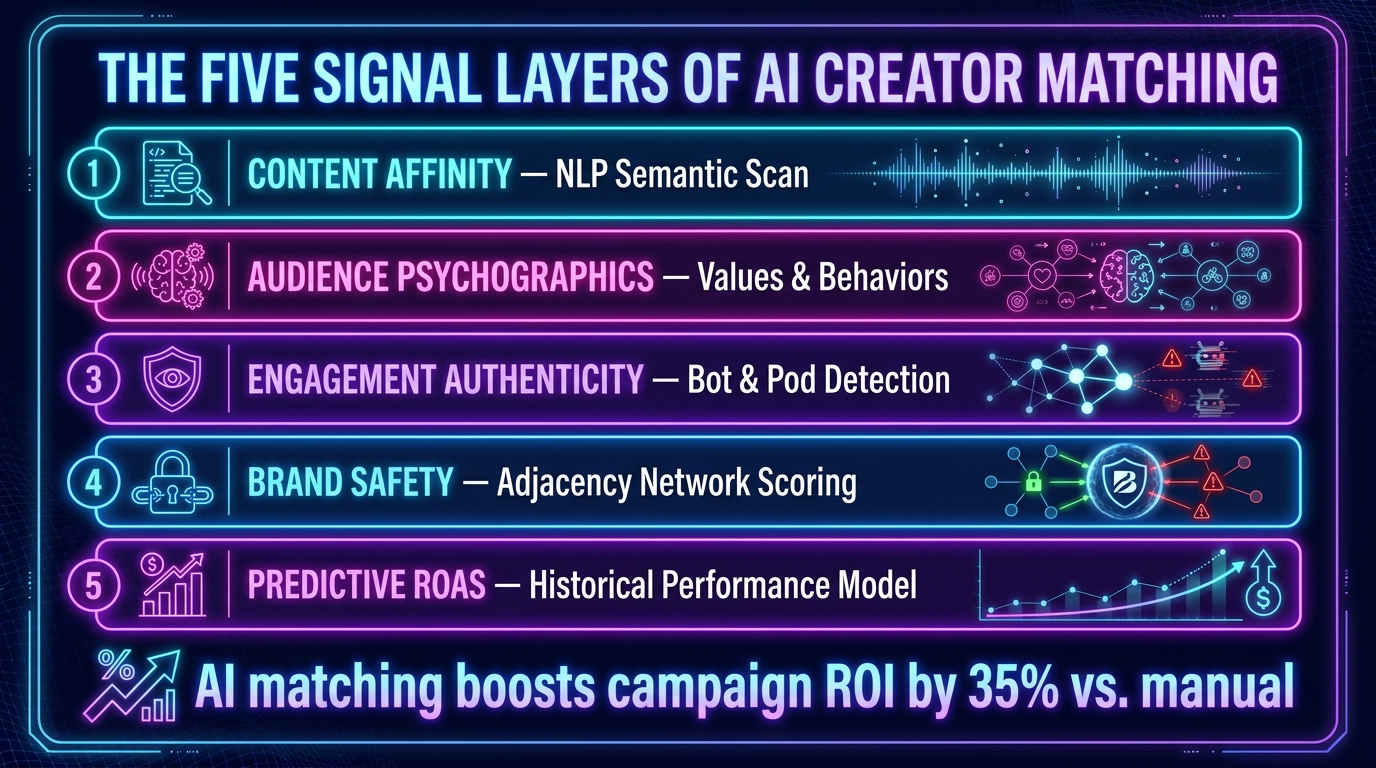

What’s replacing guesswork is a science — a layered, signal-based approach to creator matching that has more in common with programmatic advertising than with the old influencer-agency relationship model. Brands that understand how this science works are seeing automated AI matching deliver roughly 35% higher campaign ROI versus manual discovery. Brands that don’t understand it are still paying for audiences that don’t exist, creators whose content drifts into brand-unsafe territory, and partnerships that look great in a deck but produce almost nothing in sales.

This post is not about which platform has the best dashboard. It’s about understanding the underlying mechanics of AI creator matching — the signal layers, the prediction models, the failure modes, and the architecture decisions that separate campaigns that perform from campaigns that merely happened.

Why Creator Discovery Became a Data Problem

The creator economy didn’t just grow. It atomized. What was once a manageable pool of a few thousand “influencers” with recognizable names has become an ocean of tens of millions of content producers — many of whom have smaller but far more targeted, engaged followings than the celebrities who defined the first generation of influencer marketing.

The Scale Problem Is Fundamental

Consider what manual creator evaluation actually requires: reviewing profile content, scanning comment sections for authenticity, checking follower growth curves for artificial spikes, evaluating whether a creator’s audience overlaps meaningfully with a brand’s customer profile, assessing past partnership content for tone and fit, and repeating that process for every candidate in the shortlist.

Even a moderately thorough manual review takes 40 or more hours per campaign. For a brand running six to twelve creator campaigns per year across multiple product lines, that is less a workflow than a full-time job, and one that still produces inconsistent results because humans evaluating profiles carry their own biases about what “looks right.”

The Explosion of Creator Data Changed the Calculus

What made AI matching viable wasn’t just the existence of large language models or machine learning. It was the sheer density of data that platforms began generating about creator behavior. Every post, every comment, every save, every share, every affiliate click carries a signal about who a creator’s audience actually is and what they actually do after watching content.

This data infrastructure made it possible to move from subjective evaluation (“this creator’s vibe matches our brand”) to quantitative matching (“this creator’s audience has a 74% overlap with our customer psychographic profile, a 94% authentic engagement rate, and a predicted conversion rate of 3.2% for product-adjacent content in this category”).

That shift — from aesthetic judgment to signal-based prediction — is the core of what AI creator matching actually does. And understanding it requires understanding the signal layers themselves.

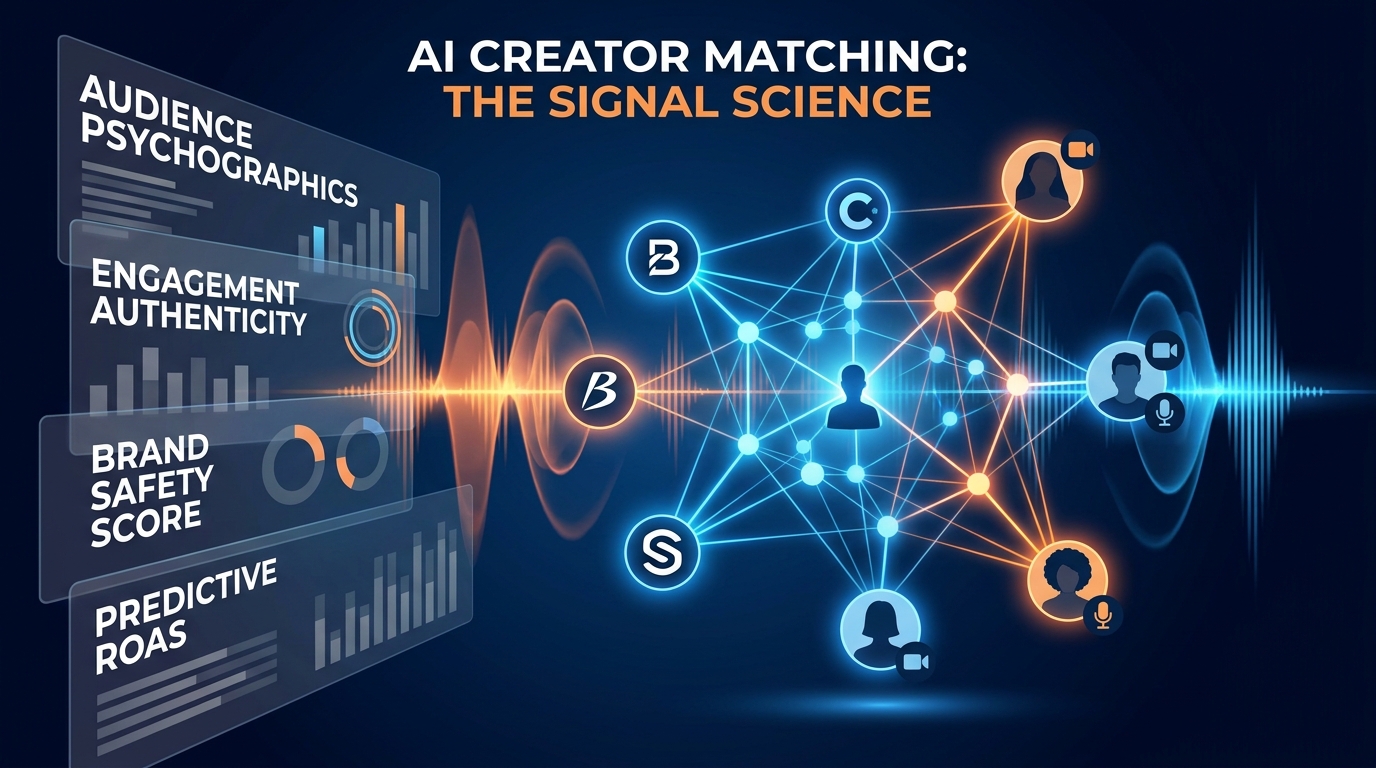

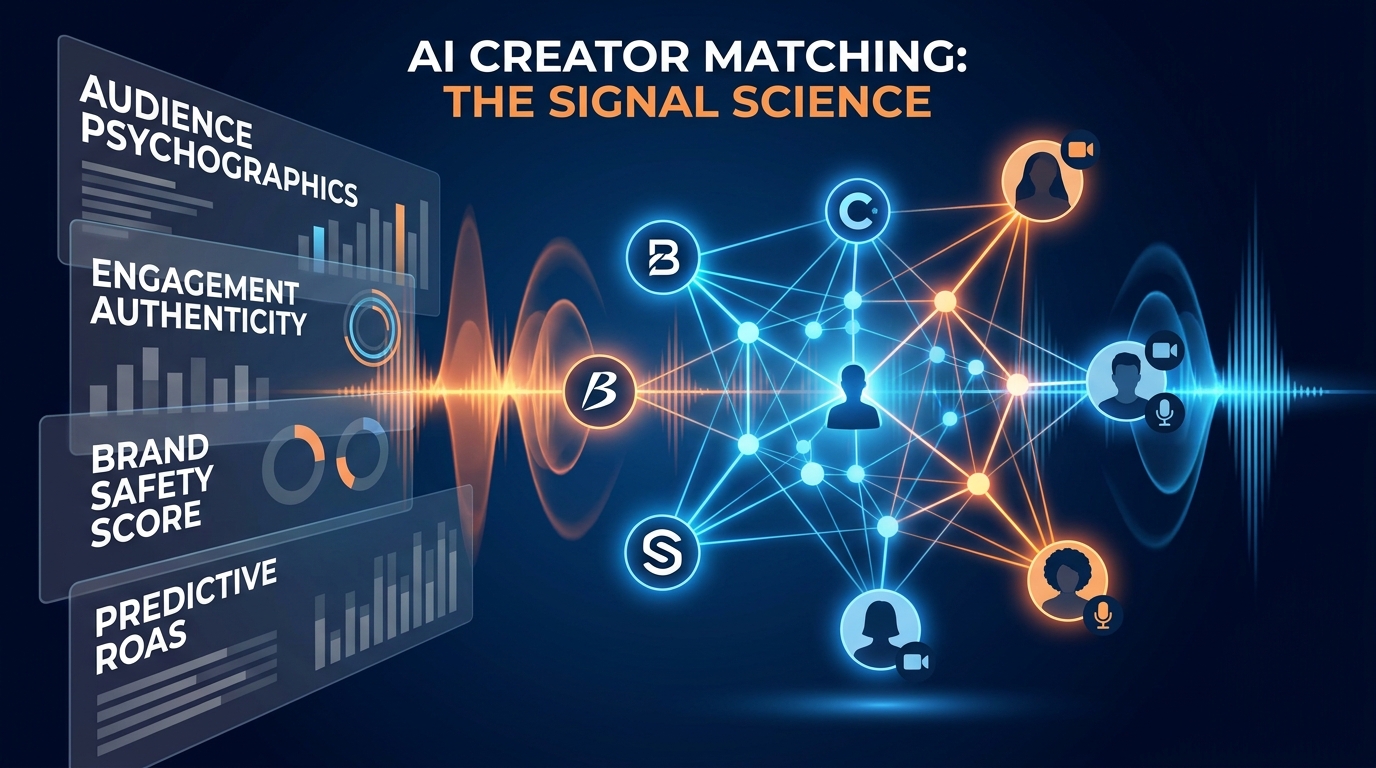

The Five Signal Layers AI Matching Actually Uses

Not all AI matching systems are built the same, but the serious platforms share a common architecture: they evaluate creators across multiple distinct signal layers, weight those signals against campaign objectives, and produce a match score that accounts for far more than follower counts and engagement rates.

Layer 1: Content Affinity via NLP Semantic Scanning

The first layer is content analysis — what does this creator actually talk about, and how do they talk about it? Modern AI matching platforms use Natural Language Processing (NLP) to semantically scan a creator’s full content library: captions, spoken words in video transcripts, hashtag patterns, and even visual composition through computer vision models.

This goes beyond “does this creator post about fitness?” to granular category mapping. A fitness creator who primarily posts about marathon running has a fundamentally different semantic profile — and a fundamentally different audience — than one who posts about home workouts for parents of young children. Both show up when you search “fitness” manually. Only the second one is useful if you’re selling a product designed for time-poor adults with domestic routines.

Semantic scanning also detects content cadence, posting consistency, and how a creator frames sponsored versus organic content — all signals that affect how an audience receives a partnership post.
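To make the marathon-versus-home-workout distinction concrete, here is a minimal sketch of content affinity scoring. It uses plain word counts and cosine similarity as a stand-in for the semantic models a real platform would use; the brief text, creator names, and captions are all invented for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase word counts; a crude stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical campaign brief and creator caption samples.
brief = "quick home workouts for busy parents with young children"
creators = {
    "marathon_runner": "marathon training long runs race day pacing plans",
    "home_workout_parent": "quick home workouts for parents while the children nap",
}

brief_vec = tokenize(brief)
scores = {name: cosine_similarity(brief_vec, tokenize(text))
          for name, text in creators.items()}
best = max(scores, key=scores.get)
print(best, scores)
```

Both creators would surface under a manual “fitness” search, but only one shares vocabulary with the brief. Production systems would replace the word counts with learned embeddings over captions and transcripts.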

Layer 2: Audience Psychographics Beyond Demographics

The second layer is where things get genuinely interesting — and where the gap between basic and sophisticated platforms is most visible. Every platform tracks audience demographics: age, gender, geography. The platforms worth using go further into audience psychographics: what does this audience value, what motivates their purchasing behavior, how do they describe their identity, and what emotional triggers drive their content engagement?

AI tools now analyze audience comment behavior, follow graph patterns, and behavioral signals to map not just who follows a creator, but why they follow, and what category of decisions they’re actually in the market for. A lifestyle creator with an audience that values sustainability, minimalism, and ethical consumption is a completely different match from one with an equally sized audience that prioritizes convenience, price, and aspiration — even if the demographic data looks identical on paper.

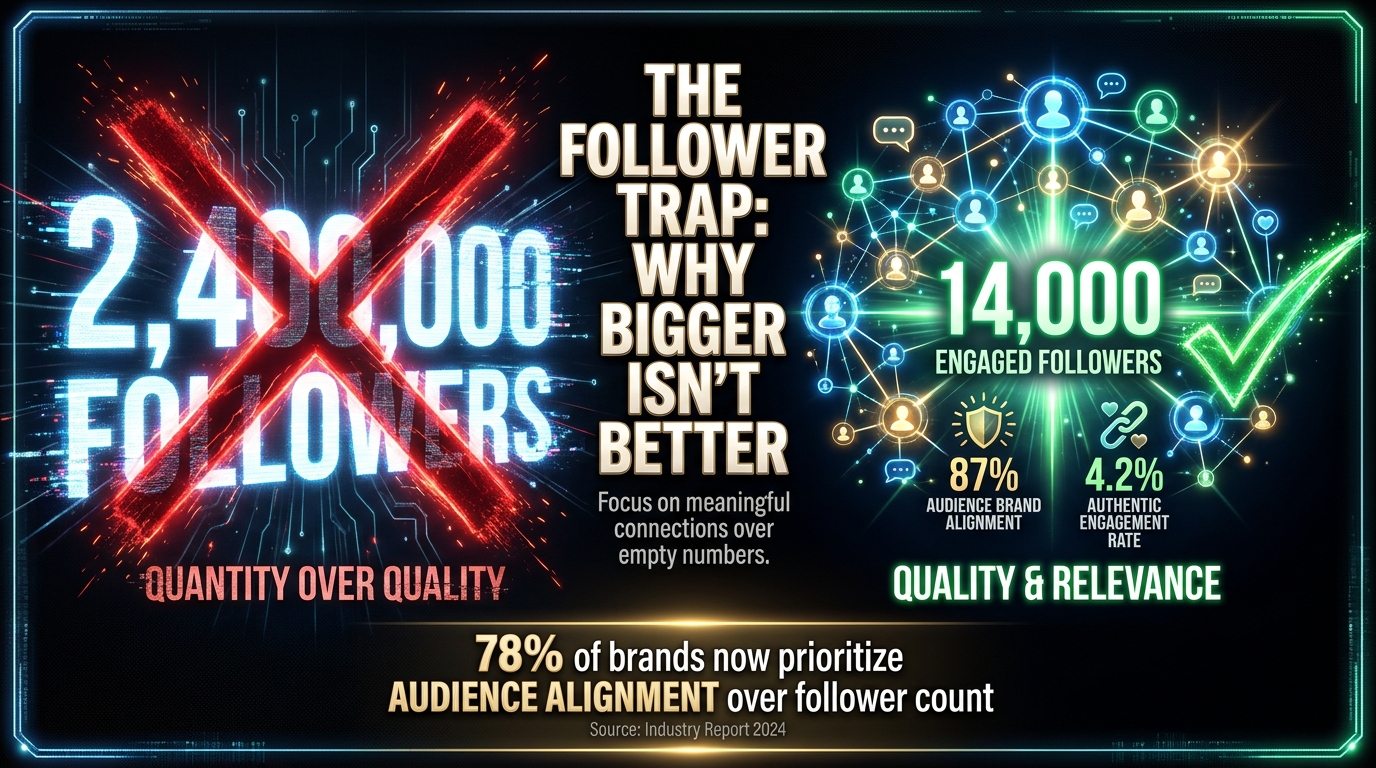

In 2026, 87% of brands require detailed psychographic data as part of their creator vetting process, up from just 62% in 2024. That’s not a trend — it’s a structural change in how creator partnerships are evaluated.
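One simple way to picture a psychographic overlap score is histogram intersection between two value profiles. The sketch below assumes each profile is a set of shares summing to 1.0; the value labels and numbers are hypothetical, and real platforms derive such profiles from far richer behavioral data.

```python
def profile_overlap(audience, customers):
    """Histogram intersection: the mass two normalized value profiles share."""
    keys = audience.keys() | customers.keys()
    return sum(min(audience.get(k, 0.0), customers.get(k, 0.0)) for k in keys)


# Hypothetical value profiles (shares sum to 1.0 in each).
brand_customers = {"sustainability": 0.5, "minimalism": 0.3, "price": 0.2}
creator_a = {"sustainability": 0.4, "minimalism": 0.4, "price": 0.2}
creator_b = {"convenience": 0.5, "price": 0.4, "aspiration": 0.1}

overlap_a = profile_overlap(creator_a, brand_customers)
overlap_b = profile_overlap(creator_b, brand_customers)
print(round(overlap_a, 2), round(overlap_b, 2))
```

The two creators could have identical age, gender, and geography breakdowns and still land at opposite ends of this score, which is the point of looking past demographics.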

Layer 3: Engagement Authenticity Scoring

The third layer is fraud detection — arguably the most consequential signal layer, given the scale of the problem. The numbers are stark: roughly 50% of influencers have detectable fake followers, and about 25% have actively purchased them at some point. The annual cost of influencer fraud is estimated at $1.3 billion industry-wide.

AI authenticity scoring doesn’t just look at whether followers are real accounts. It evaluates the quality and distribution of engagement: Are comments generic one-word responses that suggest engagement pod activity? Does the engagement rate spike unnaturally on certain posts and collapse on others? Does the follower growth curve show organic patterns or artificial bumps?

Advanced platforms achieve 94% accuracy in detecting fake followers — significantly better than any manual audit process. But this layer still has a meaningful failure mode: engagement pods, where groups of real accounts artificially boost each other’s content, remain genuinely difficult for algorithms to distinguish from organic community behavior.
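Two of the checks described above, generic comments and unnatural growth bumps, can be sketched with very simple heuristics. The word list, the 5x threshold, and the sample data are assumptions chosen for illustration, not values any platform is known to use.

```python
from statistics import median

# Hypothetical list of low-effort comments often associated with inflated engagement.
GENERIC = {"nice", "wow", "cool", "love it", "great", "amazing"}


def generic_comment_ratio(comments):
    """Share of comments that are short generic phrases."""
    if not comments:
        return 0.0
    generic = sum(1 for c in comments if c.strip().lower().rstrip("!.") in GENERIC)
    return generic / len(comments)


def growth_spikes(daily_followers, factor=5.0):
    """Indices of days whose follower gain exceeds `factor` times the median gain."""
    gains = [b - a for a, b in zip(daily_followers, daily_followers[1:])]
    if not gains:
        return []
    typical = median(gains)
    if typical <= 0:
        return []
    return [i + 1 for i, g in enumerate(gains) if g > factor * typical]


comments = ["Nice!", "wow", "Where did you get those resistance bands?", "Great"]
followers = [1000, 1010, 1022, 1030, 3030, 3041]

ratio = generic_comment_ratio(comments)
spikes = growth_spikes(followers)
print(ratio, spikes)
```

A spike is not proof of purchased followers, since a viral post produces the same shape. Heuristics like these are better treated as flags for review than as verdicts.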

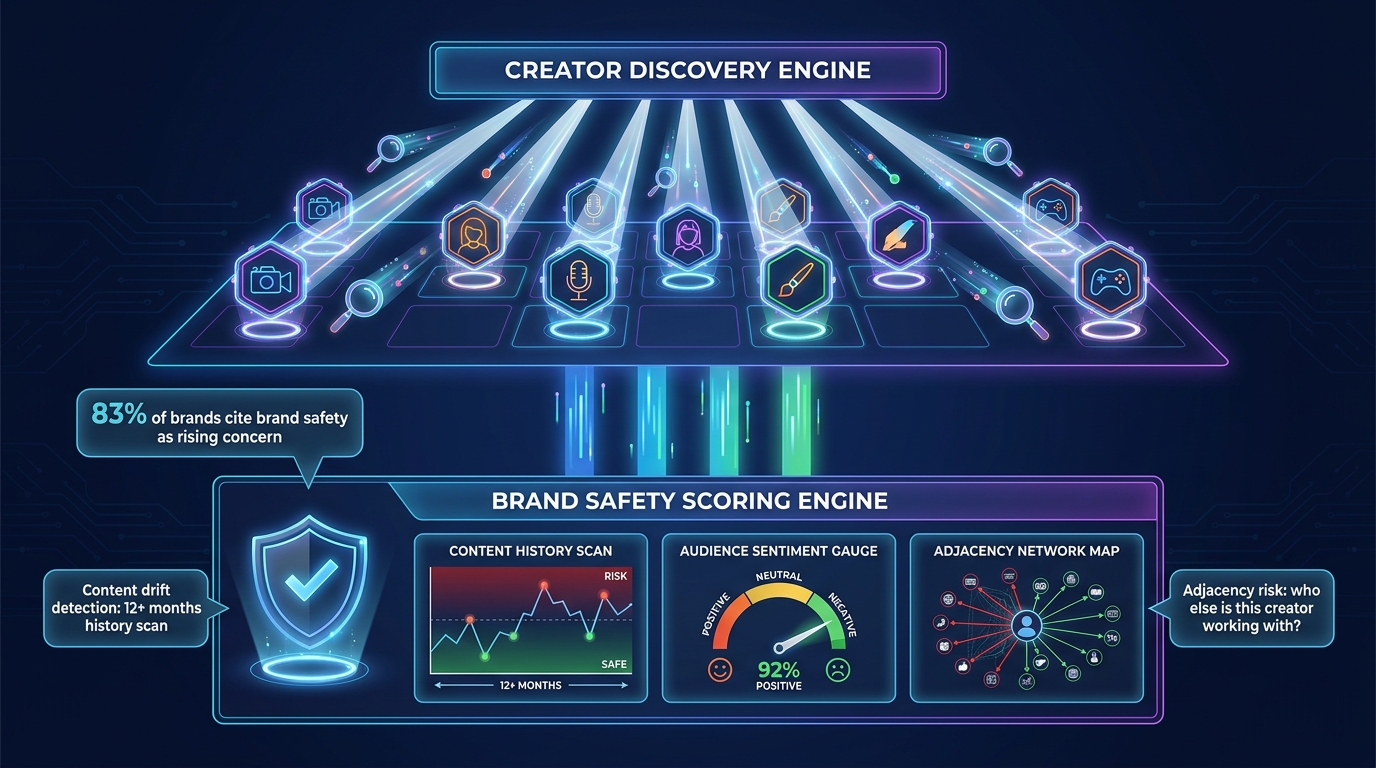

Layer 4: Brand Safety History and Adjacency Network Scoring

The fourth layer addresses a risk that brands consistently underestimate: a creator’s historical content and their collaboration network. A creator who posted brand-safe content for the last six months might have a two-year-old archive full of material that creates reputational risk. More subtly, a creator who regularly amplifies or collaborates with other creators whose content is problematic carries adjacency risk — their audience may include communities that don’t align with a brand’s values, even if the creator’s own content passes review.

Leading platforms have moved to what’s called a paired discovery-safety architecture, where brand safety scoring runs simultaneously with creator discovery rather than as a sequential review step. This allows the system to surface diverse creator pools while continuously flagging risk — replacing the blunt instrument of static block lists with a dynamic, real-time risk layer.

Layer 5: Predictive Performance Modeling

The fifth layer is the most forward-looking: using a creator’s historical collaboration performance data to forecast how a new partnership is likely to perform. This includes prior conversion rates on sponsored content, click-through rates on affiliate links, audience sentiment after sponsorship disclosures, and how engagement changes when a creator posts about different product categories.

Predictive performance modeling is what turns creator matching from pattern recognition into genuine forecasting — and it’s what makes the AI approach closest in spirit to programmatic advertising. When a platform can say “based on this creator’s last 14 brand partnerships in adjacent categories, we project a 3.4% conversion rate and a 7:1 ROAS for a product in this price tier,” it’s doing something qualitatively different from a keyword search with demographic filters.
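A forecast of this kind can be sketched as a pooled historical conversion rate, shrunk toward a category average so that creators with little history are not over-trusted. The prior rate, prior weight, and campaign numbers below are hypothetical; real platforms presumably use richer models.

```python
from dataclasses import dataclass


@dataclass
class PastCampaign:
    clicks: int
    conversions: int


def projected_conversion_rate(history, prior_rate=0.02, prior_weight=500):
    """Pooled conversion rate, pulled toward a category prior when data is thin."""
    clicks = sum(c.clicks for c in history)
    conversions = sum(c.conversions for c in history)
    return (conversions + prior_rate * prior_weight) / (clicks + prior_weight)


def projected_roas(rate, expected_clicks, avg_order_value, spend):
    """Projected revenue divided by planned spend."""
    return rate * expected_clicks * avg_order_value / spend


# Hypothetical history for one creator in an adjacent category.
history = [PastCampaign(clicks=1000, conversions=40),
           PastCampaign(clicks=1500, conversions=45)]

rate = projected_conversion_rate(history)
roas = projected_roas(rate, expected_clicks=10_000, avg_order_value=50.0, spend=5_000.0)
print(f"{rate:.2%}", f"{roas:.1f}:1")
```

With no history at all, the projection falls back to the category prior, which is a reasonable default for a first-time partner.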

The Follower Count Trap — and Why Brands Are Finally Moving On

The persistence of follower count as a primary selection criterion is one of the most expensive habits in marketing. It’s not just that big accounts don’t always deliver — it’s that the mechanism producing their apparent reach has often been structurally disconnected from purchasing intent for years.

The Real Unit Economics of Creator Tier

Consider what the data actually shows about creator tier performance. Nano-creators (1,000–10,000 followers) and micro-creators (10,000–100,000 followers) consistently outperform macro-creators and celebrities on the metrics that actually matter for most brands: engagement rate, comment authenticity, audience-topic alignment, and conversion rate per impression.

A macro-creator with 2 million followers who built their audience through viral entertainment content carries enormous reach, but that audience may be demographically broad, geographically scattered, and psychographically unrelated to any specific purchase category. A micro-creator with 22,000 followers who built their audience through deep expertise in skincare formulation ingredients has an audience that is actively researching, actively spending, and actively trusting the creator’s recommendations in that category.

The conversion economics are not even close. Yet for years, brands defaulted to follower count because it was the only metric that was easily visible and intuitively legible. AI matching changes the input set entirely.

The Shift to Watch Time, Saves, and Shares

Forward-thinking brands and agencies have been shifting their primary evaluation metrics to engagement signals that correlate with genuine interest: watch-through rate on video content, saves (which indicate audience intent to return or act), shares (which indicate audience confidence in the recommendation to their own network), and click patterns on links in bio or swipe-up features.

These signals are harder to fake than raw follower count and likes, and they’re more directly correlated with content that creates purchasing behavior rather than just scrolling behavior. AI matching platforms that weight these behavioral signals over vanity metrics tend to produce substantially more actionable shortlists — and substantially fewer post-campaign disappointments.

How the Fraud Problem Reshaped the Entire Matching Architecture

The $1.3 billion annual cost of influencer fraud isn’t just a financial problem. It’s an architectural one. The existence of sophisticated fraud at scale — fake followers, engagement pods, purchased metrics — means that any matching system built on surface-level data is fundamentally compromised from the start.

The Layers of Fraud AI Has to Navigate

Fraud in the creator space operates at multiple levels of sophistication. The bluntest form — buying bulk fake followers — is relatively easy for AI to detect through follower quality analysis and growth curve pattern recognition. More sophisticated fraud involves engagement pods: organized groups of real accounts that systematically like, comment on, and share each other’s content to artificially inflate engagement rates.

Pods are harder to detect because the individual accounts are real people making real choices to engage — it’s the coordination that’s artificial. AI systems address this by looking for statistical anomalies in engagement distribution: if a creator’s posts consistently receive engagement from the same rotating cluster of accounts regardless of content topic, that pattern suggests organized amplification rather than organic community interest.
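The “same rotating cluster of accounts” pattern can be approximated by measuring how much the set of engaging accounts overlaps from post to post. The sketch below uses mean pairwise Jaccard similarity; the account IDs are invented, and a real system would also control for topic and for the size of a genuine core fan base.

```python
from itertools import combinations


def jaccard(a, b):
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def mean_engager_overlap(posts):
    """Average pairwise Jaccard overlap of engager sets across posts."""
    pairs = list(combinations(posts, 2))
    if not pairs:
        return 0.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical engager sets for three posts on unrelated topics.
pod_like = [{"a", "b", "c", "d"}, {"a", "b", "c", "e"}, {"a", "b", "c", "d"}]
organic = [{"a", "b"}, {"c", "d"}, {"a", "e"}]

pod_score = mean_engager_overlap(pod_like)
organic_score = mean_engager_overlap(organic)
print(round(pod_score, 2), round(organic_score, 2))
```

High overlap across unrelated topics is the suspicious case. High overlap within one niche may simply be a loyal community, which is why this signal remains hard to interpret.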

Even more advanced is what’s called audience quality drift: a creator who built a genuine, relevant audience two years ago but whose follower base has since been diluted by viral moments attracting a completely different demographic. Their metrics look strong; their audience has simply evolved away from the category they were originally valuable for.

Why AI Detection at 94% Still Isn’t Enough

The 94% accuracy figure for AI-based fake follower detection sounds impressive until you think about what a 6% error rate means at scale. In a database of 10 million creators, that is as many as 600,000 misjudged profiles, including accounts with fraudulent metrics that still pass automated screening. The responsible use of AI matching isn’t to replace human judgment entirely. It’s to dramatically narrow the field so that human review is applied to a smaller, more pre-qualified shortlist.
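How many fraudulent profiles actually slip through depends on how common fraud is, not only on the headline accuracy number. Here is a back-of-envelope sketch, assuming the 6% is read as a miss rate on fraudulent profiles (the figure does not say which kind of error it measures) and using the roughly 50% prevalence cited earlier.

```python
def missed_fraud_profiles(total_creators, fraud_prevalence, miss_rate):
    """Expected number of fraudulent profiles that pass screening."""
    return round(total_creators * fraud_prevalence * miss_rate)


# Roughly half of creators having detectable fake followers (figure cited earlier).
at_half = missed_fraud_profiles(10_000_000, fraud_prevalence=0.5, miss_rate=0.06)

# Upper bound: the 6% applied to every profile in the database.
upper_bound = missed_fraud_profiles(10_000_000, fraud_prevalence=1.0, miss_rate=0.06)

print(at_half, upper_bound)
```

Under either reading the count runs into the hundreds of thousands, which is the practical argument for keeping a human review stage.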

The brands seeing the best outcomes from AI matching treat automated scoring as a first-stage filter, not a final verdict. They use the system to eliminate obvious mismatches and flag obvious fraud, then apply a layer of qualitative review to the top candidates before committing budget.

Platform Deep Dive: What the Major AI Matching Systems Actually Do

The AI creator matching market has consolidated around several major platforms, each with distinct methodological approaches and use-case strengths. Understanding what differentiates them is critical for brands choosing a system that fits their specific campaign architecture.

CreatorIQ: Enterprise-Grade Signal Depth

CreatorIQ positions itself as the enterprise standard, and its matching architecture reflects that orientation. Its core differentiator is semantic content analysis combined with what it calls SafeIQ — a brand safety scoring engine that evaluates full content history, audience sentiment, and adjacency networks simultaneously with discovery. For brands with complex brand safety requirements (financial services, pharma, regulated categories), this paired architecture matters.

CreatorIQ also integrates deeply with Salesforce and enterprise data environments, which means matching decisions can be informed by a brand’s own first-party customer data — aligning creator audience profiles directly against actual customer psychographics rather than generic category benchmarks. It’s the most expensive option in the market (custom enterprise pricing only), and it’s primarily optimized for complex, multi-creator global programs rather than small-scale campaigns.

GRIN: D2C and E-Commerce CRM Integration

GRIN takes a fundamentally different approach, positioning creator partnerships as an extension of customer relationship management rather than a media buying process. Its matching system is designed specifically for direct-to-consumer and e-commerce brands, and its core integration is with Shopify and major e-commerce stacks.

The matching logic emphasizes historical conversion signals — specifically, it surfaces creators whose audience behavior patterns correlate with the brand’s own customer purchase data. Where CreatorIQ asks “does this creator’s audience align with our target psychographic?”, GRIN asks “has this creator’s audience historically bought products like ours?” GRIN averages a 5.2x ROI for its D2C clients, and its creator CRM capabilities for managing long-term relationships and seeding programs are genuinely differentiated. Starting pricing around $1,000/month makes it accessible for growing brands rather than just enterprise teams.

Aspire (AspireIQ): Global Scale with Fraud-First Architecture

Aspire’s matching system is built on a combination of collaborative filtering and content-based filtering — an approach borrowed from recommendation system design. Collaborative filtering essentially asks “what creators have worked well for brands with similar profiles and objectives to yours?”, while content-based filtering matches creator content directly against campaign briefs and brand identity parameters.

Aspire’s particular strength is fraud detection depth and global scale — it operates across platforms and geographies in ways that smaller, more focused platforms don’t. For brands running multinational campaigns across diverse creator pools, the fraud detection investment Aspire has made pays dividends in reducing the manual review burden.

Impact.com: Affiliate-First Creator Tracking

Impact.com Creator sits at the intersection of affiliate marketing infrastructure and creator partnership management. Its matching logic is distinctly performance-oriented: it evaluates creators heavily on their historical affiliate metrics — link click rates, conversion attribution, average order value generated — rather than treating audience alignment as the primary signal.

This makes Impact particularly well-suited for brands whose creator programs are fundamentally performance-based rather than brand-awareness oriented. If a brand’s primary question is “which creator will generate the most trackable revenue per dollar spent?”, Impact’s infrastructure answers that question more precisely than platforms focused on softer brand-fit metrics.

The Performance-Based Revolution: From Flat Fees to ROAS-Linked Partnerships

The shift in creator matching technology has enabled a parallel shift in how creator deals are structured — and that shift is arguably more consequential for brand ROI than any algorithmic improvement.

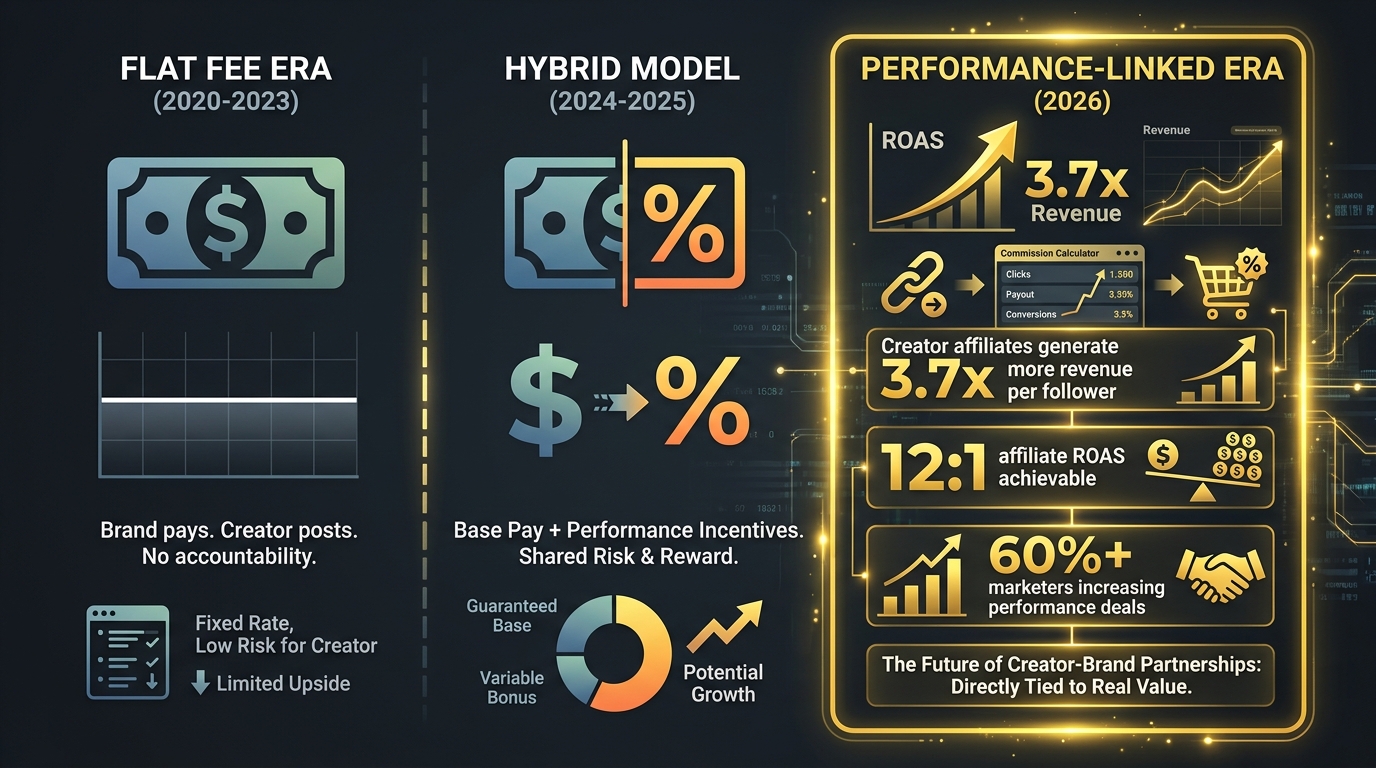

Why Flat Fees Were Always the Wrong Structure

The traditional creator deal was simple to the point of being simplistic: a brand pays a creator a fixed fee, the creator posts agreed content, and then… nobody really knows what happened next. Brands tracked impressions and reach. If the post felt successful, they’d do another. If it didn’t, they’d blame the creator, blame the brief, and move on.

This structure had a fundamental misalignment problem: the creator’s financial incentive ended when the post was published. There was no ongoing stake in whether the content actually converted, whether the affiliate link got clicked, or whether the brand’s revenue grew as a result of the partnership. The creator delivered the deliverable; accountability ended there.

The Hybrid Model Becoming Standard Practice

In 2026, more than 60% of marketers are actively increasing their use of performance-based creator deals. The dominant emerging structure is the hybrid model: a reduced base fee that covers the creator’s content production costs and floor compensation, combined with commission-based earnings tied to trackable conversions — typically running at 10–15% of revenue attributed to the creator’s unique link or code, plus performance bonuses for hitting ROAS or sales thresholds.

This structure works for brands because it shifts risk. The base fee is low enough that a campaign that doesn’t convert is a learning investment rather than a budget disaster. The commission structure means that if a creator genuinely drives sales, they’re rewarded in proportion to the value they create — and the brand’s cost scales with revenue.

It works for creators because — for those with genuinely engaged, commercially active audiences — the upside far exceeds what a flat fee would have paid. The economics are stark: creator affiliates generate 3.7 times more revenue per follower than display affiliates, with affiliate ROAS reaching as high as 12:1 in optimized programs. A creator who would have earned $5,000 flat for a post can earn $50,000+ in commissions if their audience genuinely acts.
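The hybrid structure is easy to express as arithmetic. The sketch below assumes a commission inside the 10–15% range mentioned above and an optional bonus triggered by a ROAS threshold; the base fee, revenue, threshold, and bonus amounts are hypothetical.

```python
def hybrid_payout(base_fee, attributed_revenue, commission_rate=0.10,
                  roas_threshold=None, bonus=0.0):
    """Base fee plus commission, plus a bonus if ROAS clears the threshold.

    ROAS here is attributed revenue divided by the pre-bonus creator cost.
    """
    total = base_fee + attributed_revenue * commission_rate
    if roas_threshold is not None and total > 0:
        if attributed_revenue / total >= roas_threshold:
            total += bonus
    return total


# A post that drives $400,000 in attributed revenue at a 12.5% commission.
strong = hybrid_payout(1_500, 400_000, commission_rate=0.125)

# The same deal with a $2,000 bonus for clearing 5:1 ROAS.
with_bonus = hybrid_payout(1_500, 400_000, commission_rate=0.125,
                           roas_threshold=5.0, bonus=2_000)

# A post that drives no sales costs the brand only the base fee.
flop = hybrid_payout(1_500, 0, commission_rate=0.125)

print(strong, with_bonus, flop)
```

The asymmetry is the design choice: the brand’s downside is capped at the base fee, while the creator’s upside scales with the revenue they actually generate.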

The Tracking Infrastructure That Makes It Work

Performance-based deals require performance tracking infrastructure — and this is where the matching platforms become essential. Unique affiliate links, custom discount codes, conversion pixels, and Amazon Attribution integration all need to be in place and connected to real-time reporting before a hybrid deal can be evaluated fairly.

Platforms like Impact, GRIN, and Modash have built exactly this infrastructure: creator-level dashboards that show clicks, conversions, average order value, and ROAS in real time, giving brands the data to identify which creators in a portfolio are performing and scale up their involvement quickly. Programs averaging 47 active creators — up from just 18 in 2022 — reflect how brands are using performance data to continuously expand the creators who actually deliver.

Brand Safety as a Matching Signal, Not a Post-Campaign Review

Brand safety is the part of creator matching that most brands treat as a checkbox rather than a strategic input. That approach is producing increasingly costly errors as the creator landscape becomes more complex and audience expectations for brand accountability rise.

Why Sequential Safety Review Doesn’t Work

The traditional workflow treats brand safety as a post-discovery filter: find creators who match your campaign parameters, then run them through a safety review before making contact. This sequential approach has a structural flaw — by the time safety review happens, a brand’s team has already invested significant evaluation time and, in some cases, developed a preference for specific creators that safety concerns then have to overcome psychologically.

More importantly, sequential review operates on a static snapshot. It checks whether a creator’s content is brand-safe right now, without accounting for content drift — the gradual evolution of a creator’s subject matter, tone, or community associations over time. A creator who looks clean on a point-in-time audit may have posted deeply problematic content 18 months ago, or may be gradually shifting their content toward topics that create reputational adjacency risk.

The Adjacency Network Problem

One of the least-discussed risks in creator partnership is adjacency network exposure. Even if a creator’s own content is entirely brand-safe, their regular collaboration and amplification network — the other creators they tag, duet with, cross-promote, and appear alongside — can create audience overlap and association risk that reflects on any brand partnered with them.

This is particularly acute on TikTok and YouTube, where algorithmic amplification means that a brand’s sponsored content can appear in recommendation feeds alongside content from creators the brand never evaluated or approved. The 53% of media experts who cite AI content adjacency as their top 2026 challenge are grappling with exactly this problem — and static block lists don’t solve it.

The paired discovery-safety architecture used by the leading platforms addresses this by treating adjacency networks as a scored signal in the matching algorithm itself. Rather than flagging risk after a creator is identified, it surfaces creators whose entire network profile — their own content plus their amplification ecosystem — meets the brand’s safety parameters continuously.
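Treating the adjacency network as a scored signal could look something like the sketch below, which blends a creator’s own content risk with the average risk of their regular collaborators. The 0.4 weight, the 0.25 threshold, and the risk values are assumptions for illustration; no platform’s actual formula is implied.

```python
def network_risk(own_risk, collaborator_risks, adjacency_weight=0.4):
    """Blend of own content risk and mean collaborator risk, both on a 0-1 scale."""
    if not collaborator_risks:
        return own_risk
    adjacency = sum(collaborator_risks) / len(collaborator_risks)
    return (1 - adjacency_weight) * own_risk + adjacency_weight * adjacency


MAX_RISK = 0.25  # hypothetical brand tolerance

# A creator whose own content is clean but whose collaborators are not.
risk = network_risk(own_risk=0.1, collaborator_risks=[0.8, 0.6, 0.7])
passes = risk <= MAX_RISK
print(round(risk, 2), passes)
```

A creator who would pass a content-only review at 0.1 fails once the network is scored, which is the case a static block list cannot catch.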

Building a Reusable “Safe Creator Bench”

The most sophisticated brands operating at scale have moved beyond campaign-by-campaign creator discovery to building what practitioners call a safe creator bench: a pre-vetted, continuously monitored pool of creators whose content history, audience profile, and brand safety status are known and updated in real time.

This bench approach dramatically accelerates campaign deployment (instead of starting discovery from scratch each time, a brand draws from a pre-qualified pool), reduces the risk of brand safety surprises (continuous monitoring catches emerging issues before they become crises), and enables longer-term creator relationships that build genuine audience trust over multiple campaigns rather than one-off posts that audiences increasingly recognize and discount.

The Human-AI Gap: What Algorithms Still Get Wrong

The strongest case for AI creator matching is also the case that requires the most honest qualification: algorithms are excellent at processing quantitative signals at scale. They are genuinely not good at evaluating the creative, emotional, and cultural dimensions that ultimately determine whether a partnership feels authentic to an audience.

The Tone Problem

AI can identify that a creator posts in the “fitness” category and has an audience with demographic profiles aligned with a protein supplement brand. It cannot reliably detect that the creator’s communication style is ironic and self-deprecating in a way that makes earnest product endorsements land awkwardly — or that their audience has followed them specifically because they puncture the hype around wellness products rather than amplifying it.

A human who watches three minutes of that creator’s content will immediately understand the tone problem. An AI that has scored the creator a 92 out of 100 for category alignment will not. This gap produces what experienced creator marketing teams describe as matches that “look right but feel wrong” — partnerships where every data point checks out and the collaboration produces content that the creator’s audience receives with visible skepticism.

Cultural Nuance and Community Context

Creator communities carry enormous amounts of implicit context that’s nearly impossible to capture in structured data. An inside joke, a running reference, a history with a specific brand or topic, a community norm about what kinds of sponsorships are acceptable — these all exist in the cultural substrate of a creator’s relationship with their audience and can dramatically affect how a branded post lands.

There’s meaningful evidence that over-automated creator marketing is starting to erode audience trust in creator endorsements generally. Research points to a roughly 30% decline in brand partnerships when creator content is perceived as AI-generated or algorithmically directed rather than genuinely chosen. This isn’t an argument against AI matching — it’s an argument for using AI matching to do discovery efficiently while preserving genuine creative collaboration with the creators once they’re identified.

The Relationship History Signal

AI matching systems generally treat each creator as a fresh evaluation subject, scoring their current state against current campaign parameters. They systematically underweight one of the most valuable signals available to marketers: relationship history. A creator who has worked with a brand before, understands the product, has received positive audience feedback on that partnership, and has an established creative vocabulary for that brand is worth dramatically more than their raw match score suggests.

The best creator marketing operations build relationship histories explicitly into their matching criteria — tagging previous performance, noting creative compatibility, and flagging creators with whom the brand has built genuine rapport as priority candidates regardless of how their algorithmic score compares to a fresh candidate.

Predictive Matching in Practice: Building a System That Actually Scales

Understanding the theory of AI creator matching is useful. Understanding how to build an operational system around it is what actually changes campaign outcomes. The brands seeing the best results in 2026 have moved beyond simply subscribing to a matching platform and toward building repeatable, data-informed partnership processes.

Step 1: Define Matching Inputs Before Running Discovery

The quality of AI matching output is entirely determined by the quality of matching inputs. Brands that give a platform generic parameters (“female, 25-34, interested in fitness, US-based”) get generic results. Brands that invest time in defining psychographic parameters — audience values, content engagement patterns, behavioral signals, category expertise — get meaningfully more useful shortlists.

Before running any AI discovery, the most effective process involves answering three questions: What does this creator’s audience need to believe (not just know) for this campaign to work? What behavioral signals suggest that an audience is actually in the consideration phase for this purchase category? What content tone and style have historically correlated with high conversion in similar campaigns?

Step 2: Run Discovery and Safety Simultaneously

Separate discovery and safety review workflows are operationally inefficient and structurally risky. The paired architecture — running brand safety scoring in parallel with matching discovery — should be the default, not an optional add-on. For brands not yet using a platform with native paired architecture, the simplest workaround is to integrate a dedicated brand safety tool into the discovery workflow before shortlisting rather than after.

Step 3: Weight the Five Layers Against Campaign Objectives

Not all signal layers should carry equal weight for every campaign type. An awareness campaign for a new product launch should weight content affinity and audience psychographics most heavily — reach into the right audience mindset matters most. A conversion campaign for an established product should weight predictive performance modeling and engagement authenticity most heavily — what matters is whether this audience buys.

The most sophisticated matching systems allow brands to adjust signal weighting by campaign objective. Brands using fixed-weight matching across all campaign types are leaving precision on the table.
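Objective-dependent weighting can be sketched as a weighted sum over the five layer scores. The weights and creator scores below are invented to illustrate the idea; a real system would tune them against campaign results.

```python
LAYERS = ("content_affinity", "psychographics", "authenticity",
          "brand_safety", "predicted_performance")

# Hypothetical weight sets; each sums to 1.0.
WEIGHTS = {
    "awareness": {"content_affinity": 0.30, "psychographics": 0.30,
                  "authenticity": 0.15, "brand_safety": 0.15,
                  "predicted_performance": 0.10},
    "conversion": {"content_affinity": 0.10, "psychographics": 0.15,
                   "authenticity": 0.30, "brand_safety": 0.15,
                   "predicted_performance": 0.30},
}


def match_score(layer_scores, objective):
    """Weighted sum of per-layer scores (each 0-1) for a campaign objective."""
    weights = WEIGHTS[objective]
    return sum(weights[layer] * layer_scores[layer] for layer in LAYERS)


creator_x = {"content_affinity": 0.9, "psychographics": 0.9, "authenticity": 0.6,
             "brand_safety": 0.8, "predicted_performance": 0.4}
creator_y = {"content_affinity": 0.6, "psychographics": 0.6, "authenticity": 0.9,
             "brand_safety": 0.8, "predicted_performance": 0.9}

for objective in WEIGHTS:
    print(objective,
          round(match_score(creator_x, objective), 3),
          round(match_score(creator_y, objective), 3))
```

The same two creators swap rank depending on the objective, which is exactly what a fixed-weight system cannot express.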

Step 4: Build the Creator Bench Progressively

The EdTech case documented by InfluenceFlow illustrates what progressive bench-building actually looks like in practice: a creator who started at $50,000 in annual partnership value grew to over $400,000 in 18 months as the brand used psychographic matching to build long-term relationships with creators whose audiences aligned deeply with their product value proposition. That growth wasn’t accidental — it was the result of treating creator relationships as a compound investment rather than a series of transactional posts.

Building a creator bench means identifying 15–30 creators who score well across all five signal layers, running initial small-scale campaigns with each to generate real performance data, then scaling up investment with the 5–8 who actually convert, while maintaining relationships with the wider bench for future campaigns and product launches.

Step 5: Close the Loop with Performance Attribution

AI matching produces a predicted performance score. Actual campaign data produces real performance measurement. The brands that improve over time are the ones that systematically feed actual campaign results back into their matching criteria — updating their psychographic parameters based on which audience characteristics actually correlated with conversion, and updating their creator quality assessments based on which creators overperformed or underperformed their predicted scores.

This feedback loop is what turns creator marketing from a series of experiments into a progressively more accurate prediction engine. Each campaign generates data that makes the next campaign’s matching more precise.
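One simple way to picture the loop is a per-creator calibration factor that drifts toward the ratio of actual to predicted results. This is a sketch under stated assumptions, not how any particular platform works: the exponential blending, the `learning_rate` value, and the function names are all hypothetical.

```python
def update_calibration(calibration, predicted, actual, learning_rate=0.3):
    """Blend the old calibration factor with the newly observed ratio.

    A factor above 1.0 means the creator has tended to beat predictions;
    below 1.0 means they have tended to fall short.
    """
    if predicted <= 0:
        # No usable prediction to compare against; leave the factor alone.
        return calibration
    observed_ratio = actual / predicted
    return (1 - learning_rate) * calibration + learning_rate * observed_ratio


def calibrated_prediction(raw_prediction, calibration):
    """Adjust the next campaign's raw prediction by the learned factor."""
    return raw_prediction * calibration
```

For example, a creator predicted to drive 100 conversions who actually drives 150 moves from a factor of 1.0 to 1.25 when `learning_rate` is 0.5, so their next raw prediction is scaled up accordingly. A lower learning rate makes the system slower to react to a single outlier campaign.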

What Comes Next: Programmatic Creator Buying and the Path to Scalable Partnerships

AI creator matching is moving toward something that looks increasingly like programmatic advertising: not automated ad placement, but data-driven, scalable, continuously optimizing partnerships that can be deployed with the speed and precision digital marketing teams expect from their paid media channels.

The Programmatic Analogy

Consider what programmatic advertising achieved for display media: it moved the targeting decision from “let’s buy this publisher’s audience” (essentially, follower-count thinking) to “let’s buy these specific behavioral and psychographic signals wherever they appear.” Creator marketing is undergoing the same transition — from “let’s work with this creator” to “let’s identify the specific audience signals we need to reach and systematically find the creators who own access to those signals.”

Some platforms are already framing their matching infrastructure in explicitly programmatic terms, positioning their capability as the ability to specify precise audience parameters and receive a vetted, scored candidate pool automatically — enabling brands to approach creator discovery with the same specificity they’d apply to a paid search audience build.

Performance Contracts and Outcome-Based Scaling

Creator marketing budgets rose 171% in major markets in 2026, and paid amplification spending hit $11.1 billion — a 56% increase from 2025. That level of investment demands the accountability infrastructure that performance-based deal structures and programmatic-style matching provide.

The direction of travel is clear: brands are building creator partnerships the way the best of them build their paid search portfolios, with clear performance benchmarks, continuous optimization, and systematic budget reallocation from underperformers to overperformers. The creator who generates a 12:1 ROAS gets 10x the budget next quarter. The creator who generates 1.4:1 gets a polite offboarding.
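As a rough illustration of systematic reallocation, the sketch below splits next quarter's budget in proportion to each creator's ROAS and drops anyone below a floor. The proportional rule, the `floor` of 2.0, and the function name are assumptions chosen for simplicity; they are not a rule stated in this post or a recommended threshold.

```python
def reallocate(total_budget, roas_by_creator, floor=2.0):
    """Split budget across creators in proportion to ROAS.

    Creators whose ROAS is below `floor` receive 0.0 (offboarded).
    If nobody clears the floor, everyone receives 0.0.
    """
    keep = {
        name: roas
        for name, roas in roas_by_creator.items()
        if roas >= floor
    }
    roas_total = sum(keep.values())
    if roas_total == 0:
        return {name: 0.0 for name in roas_by_creator}
    return {
        name: total_budget * keep[name] / roas_total if name in keep else 0.0
        for name in roas_by_creator
    }
```

With a budget of 16,000 and creators at 12:1, 4:1, and 1.4:1, this rule assigns 12,000, 4,000, and 0 respectively. In practice a brand would probably cap any single creator's share, since audience saturation means ROAS rarely holds as spend scales.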

The Synthetic Influence Question

No forward-looking discussion of AI creator matching is complete without addressing virtual and synthetic creators: AI-generated influencer personas whose content is produced by Generative Adversarial Networks (GANs) and tailored to specific audience profiles. According to Grand View Research, these personas already achieve 60% higher engagement rates than traditional creators in certain demographic niches.

Virtual influencers sidestep many of the human-AI gap problems outlined earlier: they can be precisely calibrated to tone, aesthetic, and messaging without the unpredictability of a real creator relationship. But they introduce new problems. Audience trust is harder to earn, FTC disclosure requirements are still being shaped, and the credibility that comes from a real person genuinely using and endorsing a product is fundamentally absent.

For most brands, virtual creators represent a niche tool rather than a replacement for genuine creator partnerships. But their rapid growth is a signal that the creator marketing ecosystem is continuing to evolve in ways that will require matching technology to adapt continuously.

Conclusion: The Brands Winning Creator Partnerships Are Treating It Like a Science

The creator economy in 2026 is too large, too fragmented, and too data-rich to be navigated by instinct. The brands that are building durable, high-ROI creator programs have made a fundamental shift: they’ve stopped treating creator discovery as a relationship process that happens to involve some data, and started treating it as a data process that eventually produces relationships.

That shift doesn’t mean removing humanity from creator marketing. The five signal layers described in this post — content affinity, audience psychographics, engagement authenticity, brand safety scoring, and predictive performance modeling — are tools for finding the right people to have genuine partnerships with. The relationship still has to be real. The creative collaboration still has to be authentic. The creator still has to actually believe in what they’re recommending. AI matching just means that the initial search is no longer a guess.

The actionable framework for brands building or improving their creator matching systems comes down to five operational commitments:

- Define psychographic matching inputs before running discovery — generic parameters produce generic shortlists.

- Run discovery and safety scoring in parallel, not sequentially — sequential review is both slower and structurally incomplete.

- Weight signal layers against campaign objectives, not just category fit — awareness campaigns and conversion campaigns need different signal hierarchies.

- Structure deals with performance components — hybrid models align creator incentives with brand outcomes and produce 3.7x more revenue per follower than static arrangements.

- Close the attribution loop systematically — every campaign’s results should improve the next campaign’s matching precision.

The $32.55 billion creator marketing industry is not slowing down. The brands that figure out the signal science underneath it — that learn to predict rather than just search — are the ones that will compound that investment into a durable competitive advantage. Everyone else will keep paying for audiences that don’t convert and wondering why their influencer budget feels like a lottery.

The data exists to do this better. The only question is whether your organization is using it.