For years, enterprise automation promised to eliminate grunt work. And to its credit, it delivered — routing invoices, triggering email sequences, syncing records between systems. But there was always a ceiling. The moment a process hit an exception, an ambiguous data point, or a decision that required judgment, the workflow stopped cold. Somebody opened a ticket. A human stepped in. The efficiency gain evaporated.

Agentic AI breaks that ceiling.

Unlike its predecessors — RPA bots, rule-based workflows, even first-generation AI assistants — agentic AI doesn’t just execute predefined instructions. It reasons about goals, plans multi-step approaches, calls tools and external systems as needed, reflects on its own outputs, and adapts when conditions change. Most importantly, it does this across an entire workflow — from the first input to the final deliverable — with minimal human intervention at each step.

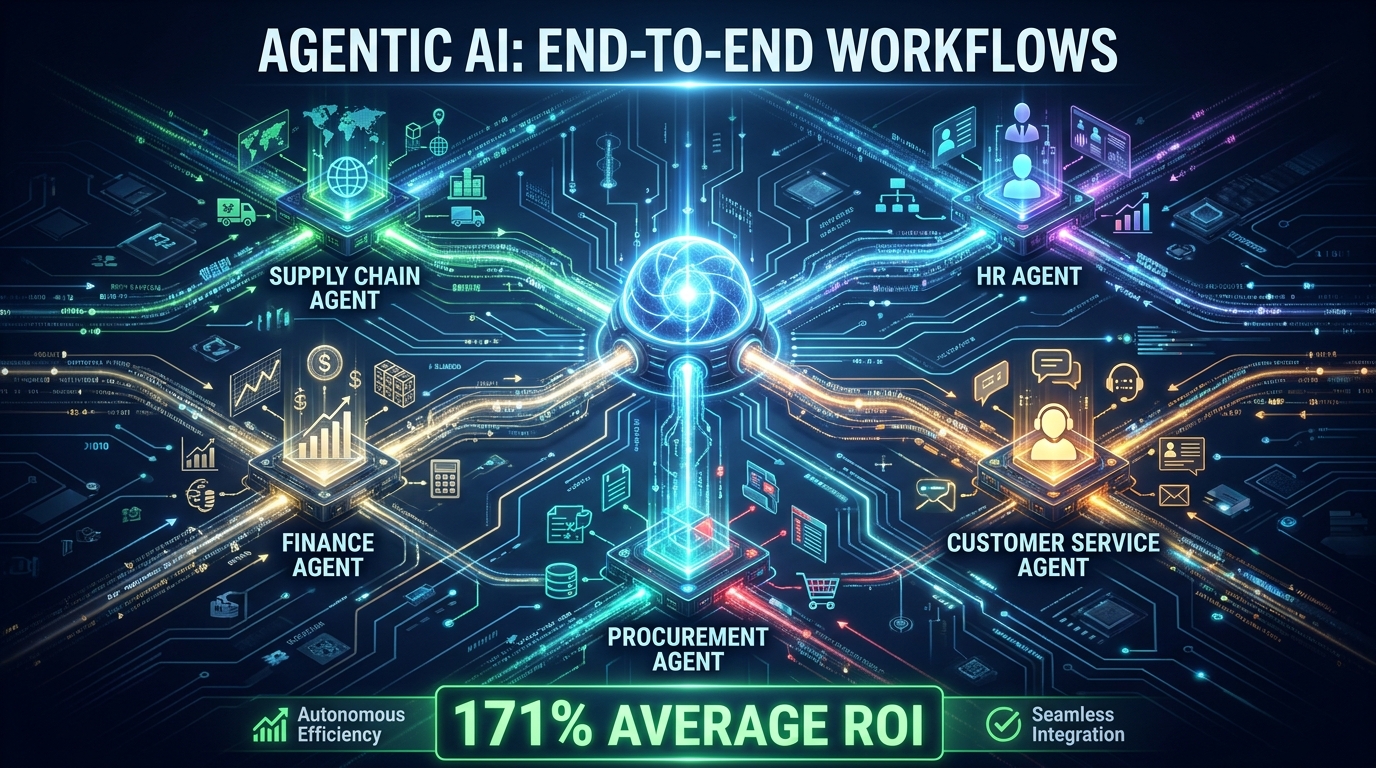

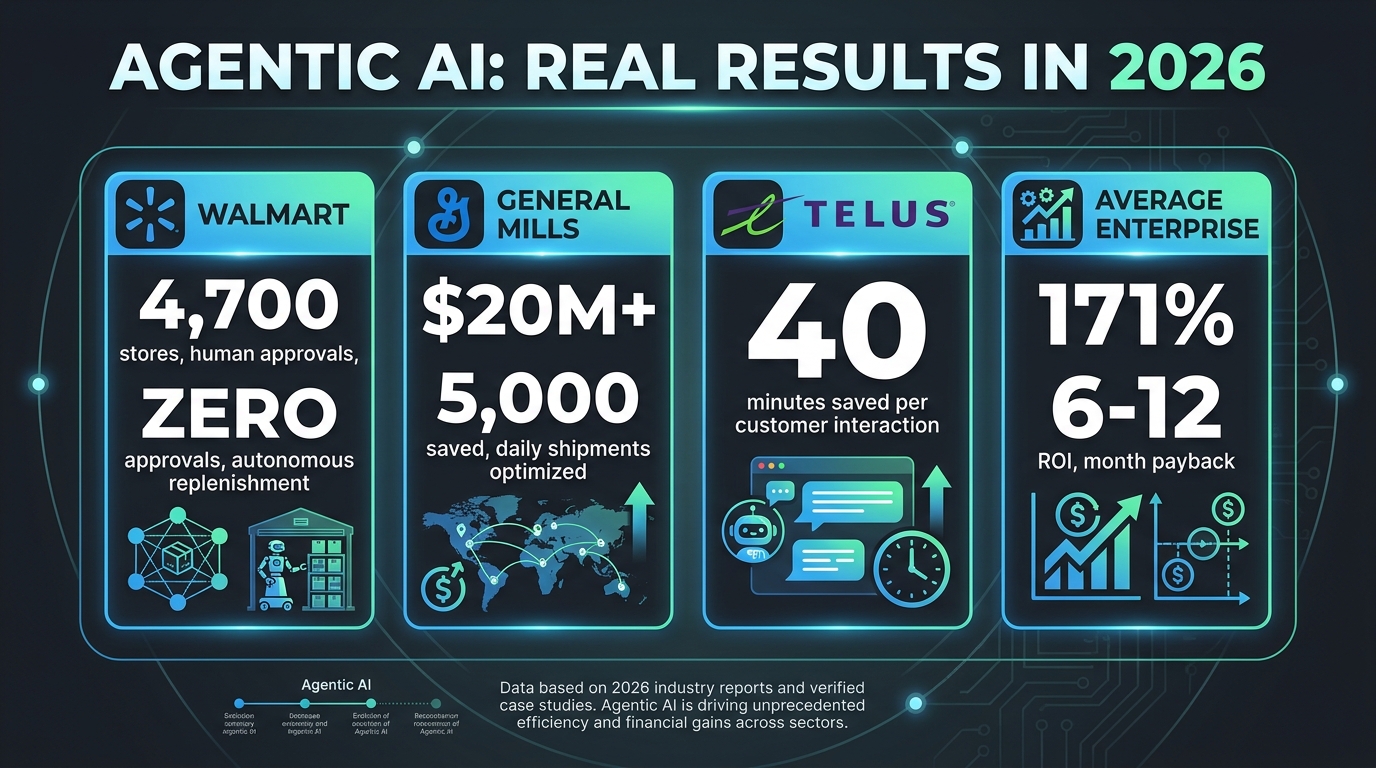

The numbers are moving fast. By the end of 2026, Gartner projects that 40% of business applications will feature task-specific AI agents, up from less than 5% just a year earlier. Among enterprises, 42% already have agentic systems in production, and 72% are either in production or running active pilots. The average enterprise ROI sits at 171%, rising to 192% for U.S.-based organizations, with payback periods for the highest-volume workflows measured in weeks, not years.

This article takes a practical, architecture-first look at what agentic AI for end-to-end workflows actually means: how these systems are built, where they’re delivering real results, what causes them to fail, and how to build the governance infrastructure that makes autonomous execution trustworthy rather than reckless.

This isn’t a technology preview. These systems are in production today, and understanding them is no longer optional for anyone responsible for enterprise operations.

The Gap Between Automation and Action

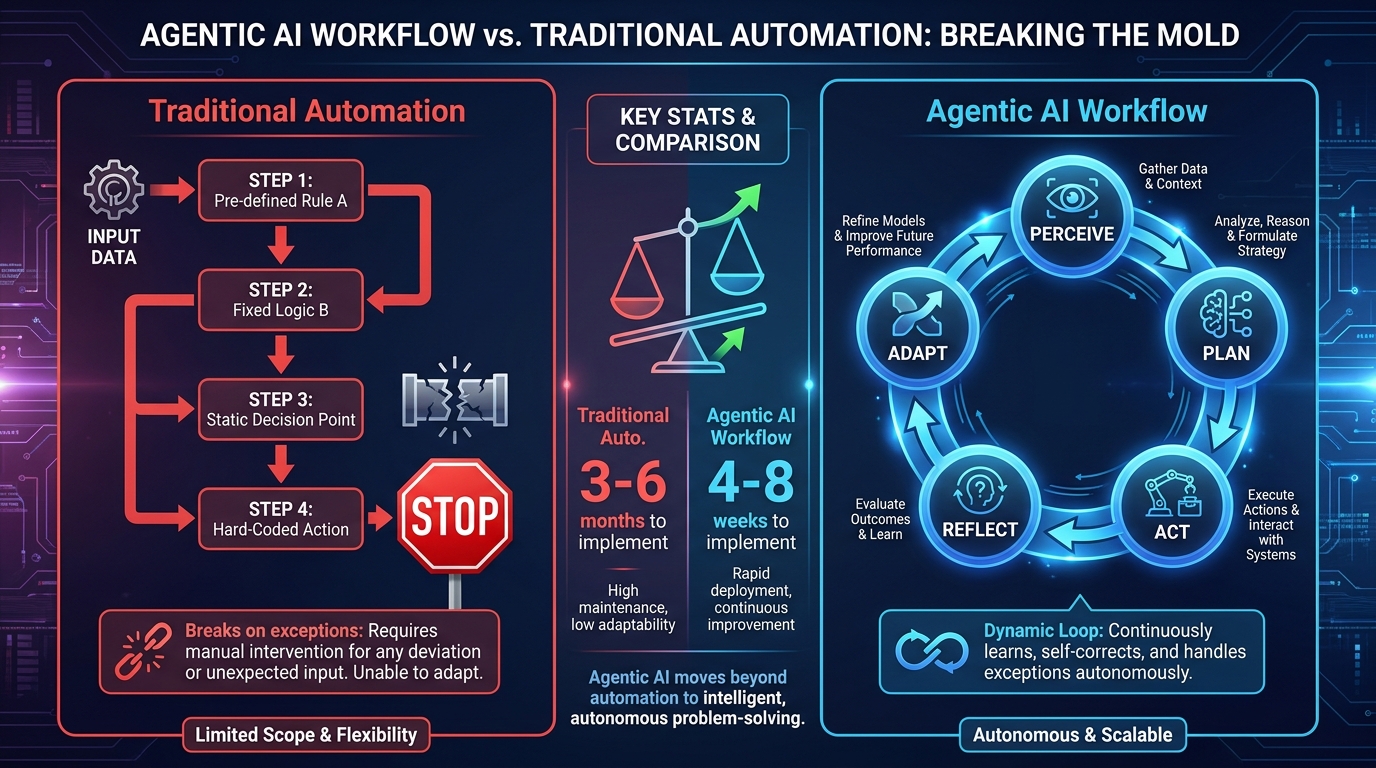

To understand why agentic AI matters, it helps to be precise about what traditional automation actually did — and where it reliably fell apart.

What Rules-Based Automation Got Right (and Wrong)

Robotic Process Automation, workflow orchestration platforms, and rule-based automation engines transformed back-office operations over the past decade. They excel in one specific context: tasks that are well-defined, repetitive, and stable. Invoice matching, data entry, report distribution, scheduled API calls — these are the kinds of processes that automation handles beautifully, with near-zero error rates and no complaints about overtime.

The problem emerges the moment you step outside those boundaries. What happens when an invoice arrives with an unusual line item that doesn’t match any existing category? What happens when a customer service ticket requires context from three different systems and a judgment call about whether an exception policy applies? What happens when a supply chain disruption requires simultaneously rerouting deliveries, notifying vendors, adjusting inventory projections, and updating financial forecasts?

Traditional automation answers all of those questions the same way: it stops, flags the exception, and waits for a human. That’s not a flaw in the technology — it’s a deliberate design choice for systems built around deterministic rules. But it creates a hard ceiling on how much of a complex business process automation can actually handle.

The Judgment Gap

The territory between “clearly automatable” and “clearly needs a human” is enormous in most enterprise workflows. This middle ground — what some practitioners call the judgment gap — is where agentic AI lives. These are the tasks that require:

- Interpreting ambiguous or incomplete information

- Synthesizing context from multiple systems simultaneously

- Choosing between competing courses of action based on current conditions

- Generating novel outputs (documents, recommendations, responses) rather than retrieving stored ones

- Recognizing when a situation has changed enough to warrant a different approach

For years, closing the judgment gap required hiring more people. Agentic AI offers a different answer: systems that can reason through ambiguity, integrate context across systems, and make defensible decisions within defined boundaries — all without requiring a human to intervene at every step.

Why This Shift Matters for End-to-End Workflows

The real significance of agentic AI isn’t in any individual task. It’s in what becomes possible when you chain agentic behavior across a complete workflow. A traditional automation setup might handle 60–70% of a purchase order workflow end-to-end, with humans handling exceptions at four or five intervention points. An agentic workflow can push that to 85–92% autonomous completion, with human review reserved for genuinely high-stakes decisions.

That difference, from 70% to 90% autonomous, doesn’t sound dramatic until you calculate what it means at scale. If your organization processes 10,000 purchase orders a month and each manual intervention costs 20 minutes of human time, shrinking the intervention rate from 30% to 10% eliminates 2,000 interventions a month and frees up roughly 8,000 hours annually. That’s about four full-time employees’ worth of capacity, not a marginal efficiency gain.
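
The calculation can be worked through explicitly. The sketch below assumes one intervention per affected purchase order and roughly 2,000 working hours per full-time employee per year. The function name and the FTE figure are illustrative assumptions, not numbers taken from any cited deployment.

```python
def annual_hours_saved(orders_per_month, minutes_per_intervention,
                       rate_before, rate_after):
    """Hours of manual work avoided per year when the intervention rate drops."""
    avoided_per_month = orders_per_month * (rate_before - rate_after)
    minutes_per_year = avoided_per_month * minutes_per_intervention * 12
    return minutes_per_year / 60

hours = annual_hours_saved(10_000, 20, 0.30, 0.10)
ftes = hours / 2_000  # assumed working hours per FTE per year
print(round(hours), round(ftes, 1))  # 8000 4.0
```

Changing any single input (volume, minutes per intervention, or the two rates) shows how sensitive the result is to the assumptions behind it.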

What Makes a Workflow “Agentic”? The Core Anatomy

The word “agentic” gets applied loosely to anything that feels more autonomous than a chatbot. But there’s a specific architecture that defines genuine agentic behavior, and understanding it is essential for evaluating any system or vendor claiming to deliver it.

The Five Core Components

Every agentic system that handles real end-to-end workflows shares five functional components, regardless of what framework or underlying model powers it:

1. Perception and Context Gathering

The agent must be able to take in information from its environment — structured data from databases, unstructured text from documents or emails, real-time signals from APIs, outputs from other agents. This is more than reading an input field. A genuinely agentic system can pull context from multiple sources, reconcile conflicting information, and build a working understanding of the current state of a workflow before deciding what to do next.

2. Planning and Goal Decomposition

Given a high-level goal — “process this vendor onboarding request” or “resolve this customer issue” — the agent must break that goal into a sequence of concrete steps, decide which tools or sub-agents are needed at each step, and anticipate how results from one step should inform the next. This is fundamentally different from executing a predefined script. The plan itself is generated dynamically based on the specific context of each instance.

3. Memory and State Management

Agentic workflows can span hours, days, or longer — far beyond the context window of any single model call. Production-grade agentic systems maintain state through a combination of vector databases (for semantic retrieval of relevant past context), knowledge graphs (for structured relational data), and conversation or session memory. This allows an agent handling a multi-week procurement process to “remember” what decisions were made three weeks ago and why.

4. Tool Use and Action Execution

Agents don’t just reason — they act. Tool use is how agentic systems reach outside themselves to query databases, call APIs, write to systems of record, trigger downstream processes, or invoke other agents. The Model Context Protocol (MCP), now under Linux Foundation governance, has emerged as a standard interface layer between agents and external tools, significantly reducing integration complexity across enterprise stacks.

5. Reflection and Self-Correction

Production agentic systems include feedback loops. After each action, the agent evaluates the result against its goal, identifies discrepancies, and adjusts its next steps. This is the mechanism that allows agentic workflows to handle exceptions without human escalation — the agent notices when something hasn’t worked as expected and tries a different approach before flagging it for human review.
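
A minimal sketch of that feedback loop follows. It assumes the agent has an ordered list of alternative strategies and a goal check it can run on each result. The strategy functions and the `run_with_reflection` name are hypothetical, and a real system would replace them with model and tool calls.

```python
def run_with_reflection(strategies, goal_met, max_attempts=3):
    """Try each strategy, check the result against the goal, escalate if all fail."""
    history = []
    for attempt, strategy in enumerate(strategies[:max_attempts], start=1):
        result = strategy()
        ok = goal_met(result)
        history.append({"attempt": attempt, "strategy": strategy.__name__, "ok": ok})
        if ok:
            return {"status": "done", "result": result, "history": history}
    # No strategy met the goal: hand the case to a human with the full history.
    return {"status": "escalate_to_human", "result": None, "history": history}

# Hypothetical strategies for matching an invoice to a purchase order.
def match_by_po_number():
    return None  # simulate a failed lookup

def match_by_vendor_and_amount():
    return {"po": "PO-1042"}

outcome = run_with_reflection(
    [match_by_po_number, match_by_vendor_and_amount],
    goal_met=lambda r: r is not None,
)
print(outcome["status"], len(outcome["history"]))  # done 2
```

The history list is what makes the retry behavior reviewable: a human who receives an escalation can see which approaches were already tried.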

Design Patterns in Practice

Different workflow types favor different architectural patterns. The two most widely deployed in enterprise settings are:

- ReAct (Reason + Act): The agent alternates between reasoning about its current state and taking a discrete action, building up a chain of reasoning that’s traceable and auditable. This is the dominant pattern for workflows requiring explainability, like financial approvals or compliance checks.

- Plan-and-Execute: The agent builds a complete plan upfront, then executes steps in parallel where possible. This pattern favors throughput — useful in supply chain optimization, where many independent sub-tasks can run simultaneously.

Understanding which pattern fits your workflow isn’t just an academic exercise. The wrong choice can create bottlenecks, inflate costs, or produce outputs that are impossible to audit — all critical failure modes in regulated enterprise environments.
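
To make the ReAct pattern concrete, here is a small sketch of the reason-then-act loop. The `toy_policy` function is a rule-based stand-in for a model call, and the tool name and the 5,000 limit are assumptions chosen for illustration. Nothing here reflects a specific framework’s API.

```python
def react_loop(goal, policy, tools, max_steps=5):
    """Alternate between a reasoning step and a tool action, recording a trace."""
    trace = []
    observation = goal
    for _ in range(max_steps):
        thought, action, arg = policy(observation, trace)  # reason
        if action == "finish":
            trace.append({"thought": thought, "action": "finish", "observation": arg})
            return arg, trace
        observation = tools[action](arg)  # act
        trace.append({"thought": thought, "action": action, "observation": observation})
    return None, trace  # step budget exhausted without an answer

# Stand-in for a model call: a rule-based policy over a toy approval task.
def toy_policy(observation, trace):
    if not trace:
        return "Need the invoice amount first", "lookup_amount", "INV-7"
    amount = trace[-1]["observation"]
    verdict = "approve" if amount <= 5000 else "needs_review"
    return f"Amount is {amount}, compare to limit", "finish", verdict

tools = {"lookup_amount": lambda invoice_id: {"INV-7": 1200}[invoice_id]}
answer, trace = react_loop("Approve INV-7?", toy_policy, tools)
print(answer, len(trace))  # approve 2
```

The trace is the auditable part: each entry pairs a stated reason with the action taken and what came back.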

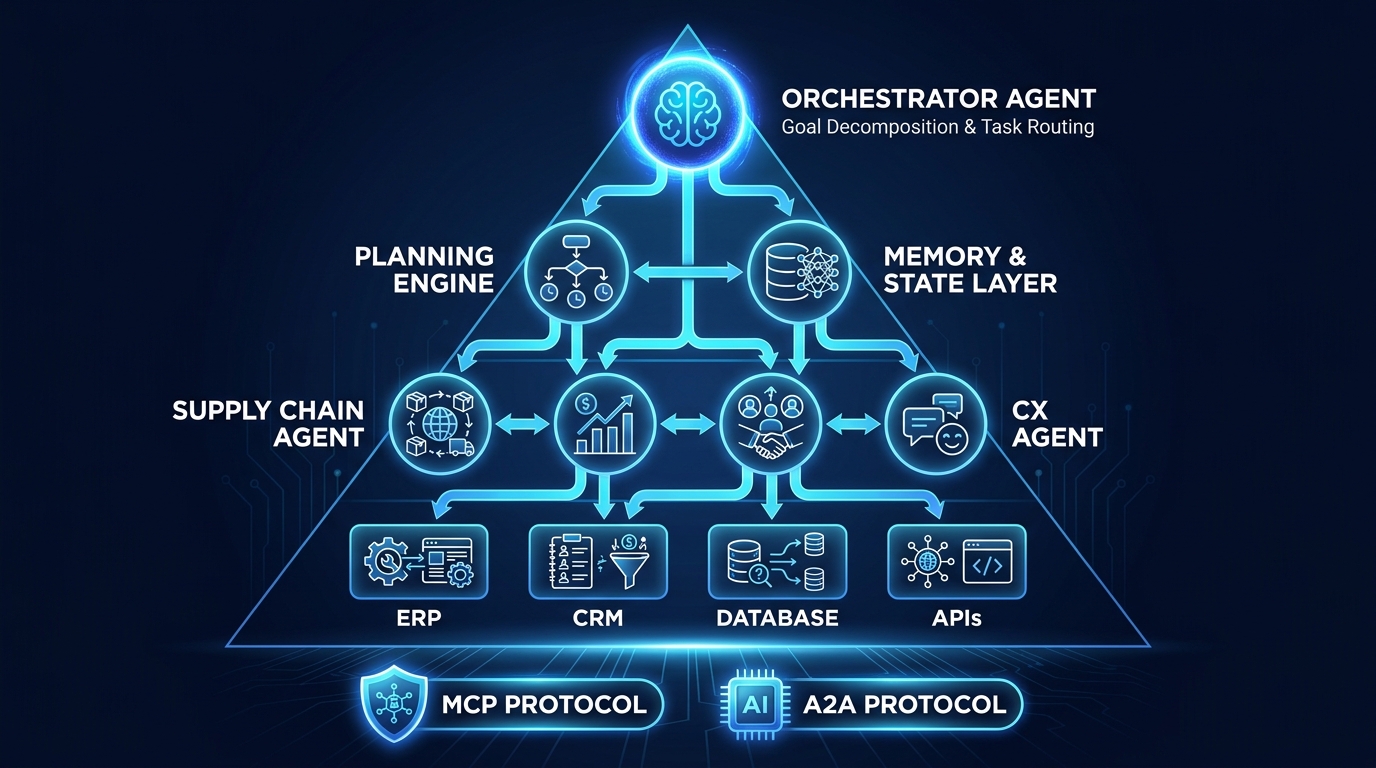

The Multi-Agent Architecture That Powers Complex Workflows

A single AI agent can handle a self-contained task. But most meaningful enterprise workflows are too complex, too long, and too interdisciplinary for a single agent to handle reliably. This is where multi-agent architectures become essential — and where most enterprise deployments in 2026 actually operate.

The Orchestrator Model

In a multi-agent system, a central orchestrator receives the high-level goal, analyzes what needs to happen, and distributes subtasks to specialized agents. Think of the orchestrator as a project manager who never sleeps: it tracks which subtasks are in progress, manages dependencies between them, resolves conflicts when agent outputs contradict each other, and aggregates results into a coherent final output.

The orchestrator doesn’t necessarily execute any individual task itself. Its value is coordination — ensuring that a complex workflow consisting of dozens of interdependent steps completes correctly, efficiently, and with full traceability.

Specialist Agents and Domain Expertise

The agents that receive tasks from the orchestrator are domain-specific. A well-designed multi-agent system for, say, a customer onboarding workflow might include:

- An identity verification agent that interfaces with KYC APIs

- A credit assessment agent that pulls from financial data sources

- A document processing agent that extracts and validates information from submitted files

- A CRM integration agent that creates and populates the new account record

- A communication agent that drafts and sends status notifications

Each specialist agent is optimized for its specific domain — potentially using a different underlying model, a different set of tools, and a different set of guardrails. This modularity is one of the key advantages of multi-agent architectures: you can upgrade, replace, or retune individual agents without rebuilding the entire system.
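
The orchestrator-and-specialist arrangement can be sketched with a dependency graph. In the example below, the agent names loosely follow the onboarding list above, but the lambdas, the dependency map, and the returned fields are all hypothetical placeholders for real agents. Ordering uses `graphlib.TopologicalSorter` from the Python standard library (3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical specialist agents: each reads the shared context and returns an update.
AGENTS = {
    "identity": lambda ctx: {"kyc_passed": True},
    "documents": lambda ctx: {"fields": {"name": "Acme Ltd"}},
    "credit": lambda ctx: {"credit_ok": ctx["kyc_passed"]},
    "crm": lambda ctx: {"account_id": "A-001"} if ctx["credit_ok"] else {},
    "notify": lambda ctx: {"message": f"Account {ctx.get('account_id')} created"},
}

# Each key depends on the agents listed in its set.
DEPENDENCIES = {
    "identity": set(),
    "documents": set(),
    "credit": {"identity"},
    "crm": {"credit", "documents"},
    "notify": {"crm"},
}

def orchestrate(agents, dependencies):
    """Run agents in dependency order, merging outputs into a shared context."""
    context, order = {}, []
    for name in TopologicalSorter(dependencies).static_order():
        context.update(agents[name](context))
        order.append(name)
    return context, order

context, order = orchestrate(AGENTS, DEPENDENCIES)
print(context["message"])  # Account A-001 created
```

Because each agent is just an entry in a mapping, swapping one specialist for another leaves the orchestration logic untouched, which is the modularity point made above.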

Coordination Protocols: MCP and A2A

Two protocols have emerged as the infrastructure layer that makes multi-agent systems interoperable at enterprise scale.

Model Context Protocol (MCP) standardizes how agents connect to external tools, databases, and APIs. Before MCP, every agent-to-tool integration required custom code. MCP creates a consistent interface that dramatically reduces the integration burden — an agent that speaks MCP can connect to any MCP-compliant tool without bespoke engineering.

Agent2Agent Protocol (A2A) handles communication between agents in a multi-agent system. It defines how agents discover each other’s capabilities, hand off tasks, share context, and report results. Both protocols are now under Linux Foundation governance, which matters significantly for enterprises concerned about vendor lock-in.

Three Coordination Patterns

Multi-agent systems can be structured in several ways depending on the workflow’s requirements:

- Hierarchical: A clear chain of command — orchestrator delegates to managers, who delegate to workers. Provides strong control and auditability. Best for regulated workflows like financial close or compliance reviews.

- Collaborative/Debate: Multiple agents independently work on the same problem and reconcile their outputs through a consensus mechanism. Increases output quality at the cost of compute. Best for high-stakes decision support like contract analysis.

- Swarm: Many lightweight agents work in parallel with minimal central coordination. Extremely fast for well-parallelizable tasks. Best for large-scale data processing or content generation pipelines.
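
As one illustration of the collaborative pattern, the consensus step can be as simple as a majority vote with a minimum agreement threshold. This is a sketch under that assumption. Real deployments may use weighted votes or a separate judge agent, and the 0.6 threshold and the risk labels are invented for the example.

```python
from collections import Counter

def consensus(answers, min_agreement=0.6):
    """Return the majority answer, or None when agreement is too weak."""
    winner, votes = Counter(answers).most_common(1)[0]
    share = votes / len(answers)
    return (winner if share >= min_agreement else None), share

# Hypothetical outputs from three independent contract-review agents.
decision, share = consensus(["high_risk", "high_risk", "medium_risk"])
print(decision, round(share, 2))  # high_risk 0.67
```

A `None` result is a natural trigger for escalation: the agents disagreed too much for the system to act on its own.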

Five End-to-End Workflow Scenarios Already Running in Production

Theory gives way quickly to what organizations are actually running. Across five enterprise domains, agentic AI workflows have moved well beyond pilot projects into production systems handling real business transactions at scale.

1. Supply Chain and Inventory Management

Walmart’s agentic supply chain system is among the most cited examples of what end-to-end autonomous operation looks like in practice. The system ingests real-time data from approximately 4,700 store locations, performs demand forecasting, triggers replenishment orders, and routes inventory through the distribution network — all without requiring human approval at each step. Inventory moves to regional distribution centers before customer orders are even placed, based purely on agentic prediction and execution.

At General Mills, an agentic logistics platform optimizes more than 5,000 daily shipments, coordinating carriers, routing decisions, and load consolidation across the network. The cumulative financial impact since the system’s deployment has exceeded $20 million in savings.

In the Siemens-PepsiCo Digital Twin Composer deployment announced at CES 2026, multi-agent systems simulate supply chain changes with physics-level accuracy before any physical implementation: agents model disruptions, test responses, and validate decisions before they’re committed to the real world.

2. Customer Experience and Service Resolution

TELUS deployed an end-to-end agentic customer service workflow that autonomously handles ticket triage, diagnosis, resolution, and — where appropriate — proactive retention outreach based on behavioral signals. The measured result: 40 minutes saved per customer interaction. At scale, this represents a fundamental shift in what a customer service team can accomplish with the same headcount.

The workflow doesn’t just route tickets. It reads ticket content, queries relevant system records, identifies the appropriate resolution path, executes it (issuing credits, updating accounts, dispatching technicians), and drafts the customer-facing response — all before a human agent ever reviews the case. Human review happens at escalation thresholds, not at every step.

3. Finance and Back-Office Operations

In finance, agentic workflows are making inroads into accounts payable, financial close, and compliance monitoring. Oracle Fusion Workspaces, released in April 2026, includes an agentic Cost Accounting Close feature that autonomously prioritizes exceptions, surfaces anomalies, and works through the close process with minimal manual intervention. The system flags only the items that genuinely require human judgment, routing everything else through automated resolution.

Beyond close cycles, agentic systems are handling continuous compliance monitoring — ingesting regulatory feeds, comparing current practices against requirements, identifying gaps, and generating remediation recommendations without waiting for scheduled audit cycles.

4. HR and Employee Lifecycle Management

Employee onboarding is a workflow that touches IT provisioning, payroll setup, benefits enrollment, compliance documentation, and manager notifications — across multiple systems, in a specific sequence, with dependencies between steps. Agentic orchestration of onboarding has consistently delivered significant cycle time reductions. Documented deployments have shown 67% reductions in onboarding completion time, primarily by eliminating the delays that accumulate when each handoff between systems requires human initiation.

Broader HR applications include performance review orchestration (collecting inputs, synthesizing feedback, flagging anomalies in rating distributions), job posting management (analyzing requisition requirements, drafting descriptions, distributing to relevant channels, screening initial applications), and offboarding workflows that ensure no access credentials or equipment are missed.

5. Procurement and Vendor Management

Coupa and SAP’s agentic procurement agents have brought autonomous decision-making to purchase order processing, vendor qualification, and contract compliance monitoring. The SAP Production Planning Agent, rolled out in Q1 2026, validates production orders autonomously, accelerating the order-to-delivery cycle by eliminating manual validation bottlenecks.

In vendor onboarding, agentic workflows handle document collection, tax ID verification, banking detail validation, risk scoring, and ERP record creation. In production deployments, a process that previously took 2–3 weeks of back-and-forth has been compressed to hours.

The Honest ROI Picture: What the Numbers Actually Mean

The ROI statistics around agentic AI are genuinely impressive. They’re also sometimes presented in ways that warrant closer examination. Understanding what drives the numbers — and what conditions they require — matters more than the headline figures.

Where the Returns Come From

The 171% average ROI figure across enterprise deployments breaks down into three primary sources:

Labor cost displacement: The most immediate and measurable. AI agents cost between $0.25 and $0.50 per interaction in many customer service and back-office deployments, compared to $3–15 per human interaction depending on the role and region. At scale, this arithmetic is undeniable.

Throughput expansion: Agentic systems don’t take breaks, don’t require shift changes, and don’t have capacity limits tied to headcount. A 66% increase in task throughput — a figure cited across multiple large-scale deployments — effectively means organizations can handle significantly higher volumes without proportional cost increases.

Error reduction and rework elimination: Manual processes in complex workflows carry error rates that generate significant downstream cost through rework, corrections, and exceptions. Documented deployments have shown error reductions of 70–75% in workflows previously dependent on manual data handling.

The Conditions That Matter

ROI isn’t automatic. The deployments that reach or exceed the headline ROI figures share several characteristics: they started with documented, reasonably functional processes (not automating dysfunction); they invested in data readiness before deployment; they designed human-in-the-loop oversight at defined thresholds rather than bolting it on afterward; and they measured rigorously from day one.

The deployments that underperform — or fail outright — share a different profile, which the next section examines directly.

Payback Timeline Realities

For enterprise deployments with proper architecture and process prerequisites in place, payback periods in the 6–12 month range are well-documented. Some high-volume, high-interaction workflows (particularly customer service) have reported payback in as little as 4–6 weeks. But these are concentrated in specific workflow categories with very high transaction volumes and low complexity per transaction.

For workflows that are complex, exception-heavy, or require significant integration work, 12–18 months is a more realistic expectation. Framing every deployment as a six-week payback story is how organizations end up disappointed with results that are actually above average by any reasonable benchmark.

Why 40% of Agentic AI Projects Still Fail

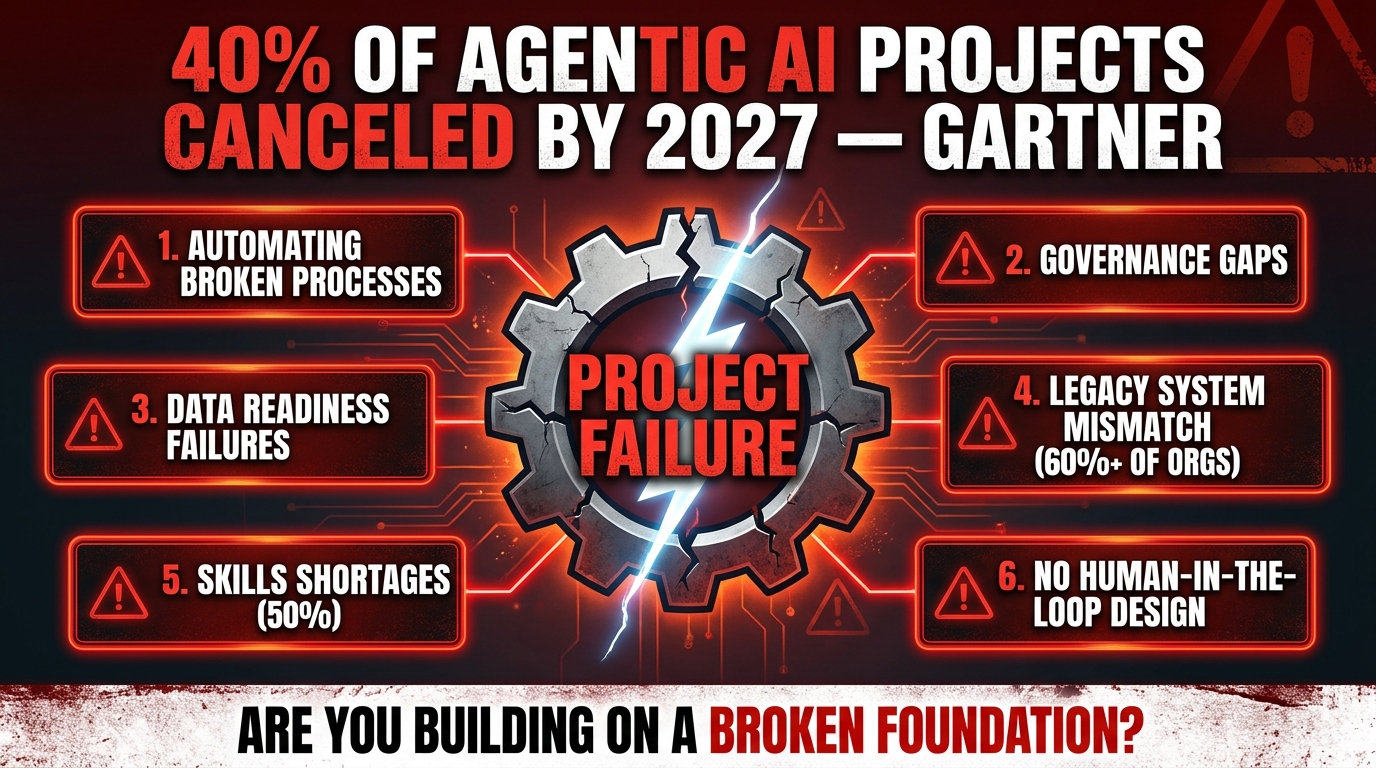

Gartner’s projection that more than 40% of agentic AI projects will be canceled by the end of 2027 is not a commentary on the technology itself. It’s a commentary on how organizations are deploying it. Understanding the failure patterns is essential for avoiding them.

Failure Mode 1: Automating Broken Processes

The most pervasive failure pattern in agentic AI deployment mirrors what plagued the first generation of RPA: organizations deploy agents onto workflows that are already dysfunctional. If a procurement workflow has unclear approval hierarchies, inconsistent documentation requirements, and exceptions that nobody has codified, an agentic system doesn’t fix any of that — it inherits it and executes the dysfunction faster and at greater scale.

The diagnostic question before any agentic deployment should be: “If we gave this workflow to a new human employee, could they complete it correctly with the existing documentation?” If the answer is no, the process needs to be fixed before it gets automated.

Failure Mode 2: Governance Gaps and Late Enforcement

Organizations frequently treat governance as a final-stage activity — something to layer on after the system is built and running. This approach is particularly dangerous with agentic systems because, unlike rule-based automation, agentic systems can take actions that were never explicitly anticipated in the design. An agent reasoning about the best way to resolve a customer complaint might discover a pathway through a system it wasn’t designed to interact with.

Governance — including decision traceability, accountability mapping, action boundaries, and audit logging — must be designed in from the beginning, not retrofitted. The 84% of leaders who identify effective AI governance as essential to agentic AI success are identifying something they’ve learned from experience, not from theory.

Failure Mode 3: Data Readiness Deficits

Agentic systems are only as good as the data they reason from. More than 60% of organizations encounter legacy system misalignment issues that create data inconsistency across the systems their agents need to access. When an agent pulls inventory data from one system and purchase order data from another, and those systems have different definitions of what constitutes an “active” vendor, the agent’s decisions will be consistently wrong in ways that are difficult to trace.

Data readiness assessment — auditing the consistency, completeness, and currency of data across all systems a workflow will touch — is not optional pre-work. It’s the work that determines whether deployment will succeed.
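
One small piece of such an audit is checking whether two systems agree on the same field. The sketch below compares a hypothetical “active vendor” flag across two extracts. The system names, vendor IDs, and data shapes are assumptions for illustration only.

```python
def find_status_conflicts(erp_vendors, procurement_vendors):
    """Return vendor IDs that exist in both systems but disagree on 'active'."""
    shared = erp_vendors.keys() & procurement_vendors.keys()
    return sorted(v for v in shared if erp_vendors[v] != procurement_vendors[v])

# Hypothetical extracts: vendor ID -> whether the system considers it active.
erp = {"V1": True, "V2": False, "V3": True}
procurement = {"V1": True, "V2": True, "V4": True}
print(find_status_conflicts(erp, procurement))  # ['V2']
```

Vendors that appear in only one system (V3 and V4 here) are a separate completeness question and would need their own check.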

Failure Mode 4: Undefined Human-in-the-Loop Architecture

Some teams build agentic systems with no human review at all, trusting the agent to handle everything. Others design so many escalation points that the system barely reduces human involvement. Both are failures of design.

Effective agentic workflows define explicit thresholds: decision value above X requires human approval; confidence score below Y triggers escalation; any action in regulated category Z gets human review regardless of confidence. These thresholds need to be calibrated to actual risk, not set arbitrarily.
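
Those three threshold types translate directly into a small policy check. The following is a sketch, with X, Y, and Z set to made-up values. The class and function names are hypothetical, and the right numbers depend on each workflow’s actual risk profile.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class EscalationPolicy:
    max_value: float       # X: approval needed above this amount
    min_confidence: float  # Y: escalate below this score
    regulated: frozenset   # Z: categories always reviewed

def needs_human(policy, value, confidence, category):
    """Return the reasons a decision must go to a human (empty list = proceed)."""
    reasons = []
    if value > policy.max_value:
        reasons.append("value_above_limit")
    if confidence < policy.min_confidence:
        reasons.append("low_confidence")
    if category in policy.regulated:
        reasons.append("regulated_category")
    return reasons

policy = EscalationPolicy(5_000, 0.85, frozenset({"credit_decision"}))
print(needs_human(policy, 1_200, 0.93, "refund"))  # []
print(needs_human(policy, 9_000, 0.70, "credit_decision"))
```

Returning the list of reasons, rather than a bare yes or no, gives the reviewer context and makes it possible to see later which threshold drives most escalations.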

Failure Mode 5: Skills Shortages and Organizational Unreadiness

Fifty percent of organizations cite skilled talent shortages as a primary barrier to agentic AI adoption. But the shortage isn’t just technical. Many organizations lack people who can bridge the gap between operational expertise and agentic system design — professionals who understand both the workflow being automated and the architecture of the system doing it. Without this hybrid expertise, systems get built that are technically functional but operationally disconnected from how the business actually works.

Human-in-the-Loop: Where Autonomy Ends and Oversight Begins

The phrase “human-in-the-loop” is used loosely enough that it’s become almost meaningless in some discussions. In agentic AI deployment, it refers to something specific and consequential: the deliberate design of intervention points where human judgment replaces or validates agent judgment.

The Tiered Autonomy Model

Best-practice deployments in 2026 structure human oversight as a tiered system rather than a binary choice between “fully autonomous” and “always ask a human.” A well-designed tier model looks something like this:

- Tier 1 — Full Autonomy: Routine decisions within clearly defined parameters, high confidence, low risk. The agent executes without notification. Example: routing a standard customer service ticket to the correct category.

- Tier 2 — Notify and Proceed: The agent takes action but logs a notification for human review within a defined window. Humans can intervene retroactively if the action was incorrect. Example: approving a purchase order under $5,000 from a qualified vendor.

- Tier 3 — Request Approval: The agent prepares the action and waits for explicit human approval before executing. Example: issuing a customer refund above a defined threshold.

- Tier 4 — Human Execution: The agent provides recommendation and context, but a human takes the final action. Example: regulatory filings, high-value contract approvals.

Targeting approximately 10–15% of all workflow steps for human review — across tiers 3 and 4 — is a reasonable calibration point for most enterprise workflows during initial deployment, with the expectation of adjusting based on observed error patterns.
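
A quick way to check a design against that calibration point is to tag each workflow step with its tier and compute the share landing in tiers 3 and 4. The tier assignments below are invented for a hypothetical 20-step workflow.

```python
from enum import IntEnum

class Tier(IntEnum):
    FULL_AUTONOMY = 1
    NOTIFY_AND_PROCEED = 2
    REQUEST_APPROVAL = 3
    HUMAN_EXECUTION = 4

def human_review_share(step_tiers):
    """Fraction of workflow steps that land in tiers 3 and 4."""
    reviewed = sum(1 for t in step_tiers if t >= Tier.REQUEST_APPROVAL)
    return reviewed / len(step_tiers)

# Hypothetical tier assignments for a 20-step workflow.
steps = (
    [Tier.FULL_AUTONOMY] * 12
    + [Tier.NOTIFY_AND_PROCEED] * 5
    + [Tier.REQUEST_APPROVAL] * 2
    + [Tier.HUMAN_EXECUTION] * 1
)
share = human_review_share(steps)
print(f"{share:.0%}")  # 15%
```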

Designing for Explainability

Human review only works if reviewers can actually understand what the agent did and why. Systems that present a human with a recommendation and a confidence score, with no visibility into the reasoning behind them, create rubber-stamp review processes that provide the form of oversight without the substance.

Genuine explainability means the review interface shows: what information the agent considered, what alternatives it evaluated, why it chose this particular action, and what conditions would have led it to a different outcome. This level of transparency requires deliberate design — it doesn’t emerge automatically from any off-the-shelf agentic framework.

Regulatory Context: EU AI Act and NIST Alignment

For enterprises operating in regulated environments, human-in-the-loop design isn’t a best practice recommendation — it’s a compliance requirement. The EU AI Act establishes explicit human oversight requirements for high-risk AI systems, including those making or substantially influencing decisions about credit, employment, and essential services. NIST’s AI Risk Management Framework similarly emphasizes human review as a core control in its GOVERN and MEASURE functions.

Building oversight architecture that aligns with these frameworks from the start is significantly cheaper than retrofitting compliance later — and substantially less risky than discovering a compliance gap during an audit or enforcement action.

Building vs. Buying: Navigating the Framework and Vendor Landscape

Every enterprise deploying agentic workflows faces a foundational decision: build on open-source frameworks, buy from a platform vendor, or pursue a hybrid approach. Each path has genuine trade-offs that aren’t resolved by vendor marketing.

Open-Source Framework Options

The open-source landscape for agentic AI has matured considerably through 2025–2026. The primary frameworks currently in enterprise production include:

- LangChain / LangGraph: The most widely adopted framework for agent development. LangGraph specifically handles stateful multi-actor workflows well, with strong community support and documentation. Best for teams with engineering depth who want maximum flexibility.

- CrewAI: Purpose-built for multi-agent collaboration. Offers intuitive role-based agent design and strong orchestration primitives. Popular in organizations that want faster time-to-production on multi-agent use cases.

- AutoGen (Microsoft): Strong for conversational multi-agent systems and workflows that involve human-agent collaboration. Benefits from deep integration with Microsoft’s tooling ecosystem.

- BeeAI (IBM): Enterprise-focused framework with built-in governance features and integration with IBM’s watsonx Orchestrate platform.

Open-source paths offer maximum flexibility and avoid vendor lock-in, but require engineering investment for production hardening — logging, retry logic, observability, security controls — that commercial platforms provide out of the box.

Commercial Platform Options

Enterprise platform vendors have moved aggressively into agentic orchestration. IBM watsonx Orchestrate, SAP’s Business AI, Oracle Fusion Workspaces, Salesforce Agentforce, and Microsoft Copilot Studio all offer varying degrees of no-code/low-code agentic workflow building within their respective ecosystems.

The significant advantage of platform approaches is speed-to-value: pre-built integrations, enterprise security controls, and compliance documentation are included. The trade-off is cost, ecosystem dependency, and limits on customization at the edges of what the platform was designed to handle.

The Hybrid Pattern That’s Winning

Sixty-five percent of enterprises in active agentic deployments are using a mixed model: commercial platforms for core domain workflows (where the vendor’s pre-built integrations are valuable) and open-source frameworks for bespoke or proprietary workflows (where flexibility and IP control matter). This approach avoids the false choice between speed and control, because each workflow gets the option that best fits its requirements.

The Governance Stack You Actually Need

Governance for agentic AI is different from governance for traditional automation in one critical way: the surface area of possible actions is vastly larger. A rule-based workflow can do exactly what it was programmed to do. An agentic system can do what it reasons it should do — which may include things its builders never anticipated. Governance must account for this.

Decision Traceability Infrastructure

Every significant decision made by an agentic system should be logged with full context: what information the agent had at decision time, which reasoning steps it took, what action it chose, and what the outcome was. This isn’t just for audit purposes — it’s how you identify systematic errors, calibrate confidence thresholds, and demonstrate compliance to regulators.
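
As a sketch of what one such log entry might contain, the function below serializes a single decision as a JSON line. The field names are assumptions rather than a standard schema, and a production system would also need retention, access control, and redaction of sensitive inputs.

```python
import json
from datetime import datetime, timezone

def decision_record(agent, inputs, reasoning_steps, action, outcome):
    """Build one JSON line capturing the context of a single agent decision."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "inputs": inputs,
        "reasoning_steps": reasoning_steps,
        "action": action,
        "outcome": outcome,
    }
    return json.dumps(record, sort_keys=True)

# Hypothetical accounts-payable decision.
line = decision_record(
    agent="ap_matching",
    inputs={"invoice_id": "INV-7", "amount": 1200},
    reasoning_steps=["amount under limit", "vendor is qualified"],
    action="approve",
    outcome="posted",
)
print(line)
```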

Vector databases with semantic logging capabilities are increasingly used for this purpose, allowing teams to retrieve and analyze decision patterns in natural language rather than requiring SQL expertise to query log tables.

Action Boundaries and Guardrails

Every agentic system should have explicit, enforced boundaries on what actions it’s permitted to take. These should be defined at deployment time and enforced at the infrastructure level — not just as instructions to the model, which can be reasoned around. Examples:

- Maximum transaction value before human approval required

- Prohibited systems (the agent cannot write to production databases directly)

- Restricted data categories (PII handling requires specific logging and masking)

- Rate limits on external API calls

- Content filters on generated outputs in regulated communications
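
Enforcing boundaries at the infrastructure level means the check sits in code that wraps every action, outside the model’s control. The sketch below covers three of the examples above. The class names and limits are hypothetical, and the rate limit is a simple call counter rather than a time-windowed limiter.

```python
class ActionBlocked(Exception):
    """Raised when a requested action falls outside the agent's boundaries."""

class Guardrail:
    def __init__(self, max_amount, prohibited_systems, max_calls):
        self.max_amount = max_amount
        self.prohibited_systems = set(prohibited_systems)
        self.max_calls = max_calls
        self.calls = 0

    def execute(self, system, amount, action):
        """Check boundaries first; only then run the action callable."""
        if system in self.prohibited_systems:
            raise ActionBlocked(f"{system} is off limits")
        if amount > self.max_amount:
            raise ActionBlocked("amount requires human approval")
        if self.calls >= self.max_calls:
            raise ActionBlocked("rate limit reached")
        self.calls += 1
        return action()

guard = Guardrail(max_amount=5_000, prohibited_systems={"prod_db"}, max_calls=2)
print(guard.execute("erp_api", 1_200, lambda: "po_created"))  # po_created
try:
    guard.execute("prod_db", 10, lambda: "row_written")
except ActionBlocked as err:
    print("blocked:", err)  # blocked: prod_db is off limits
```

Because the agent only ever receives the `execute` entry point, no amount of reasoning on the model’s side can reach a prohibited system.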

Continuous Monitoring and Drift Detection

Agentic systems operate in environments that change. Vendor APIs update, regulatory requirements shift, data distributions evolve. A system that was making good decisions in January may be making subtly wrong decisions by June without anyone noticing — unless there’s active monitoring in place.

The monitoring stack for production agentic systems should include: task completion rate trending, escalation rate trending, outcome quality scoring (where measurable), latency monitoring, and regular adversarial testing to verify that guardrails are still functioning as intended.
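
Escalation rate trending, for instance, can start as a comparison between a baseline window and a recent window. This is a deliberately simple sketch: the weekly rates are invented, the 0.05 tolerance is an assumption, and a production monitor would likely use a proper statistical test.

```python
from statistics import mean

def escalation_drift(baseline_rates, recent_rates, tolerance=0.05):
    """Flag drift when the recent mean escalation rate moves beyond tolerance."""
    delta = mean(recent_rates) - mean(baseline_rates)
    return abs(delta) > tolerance, delta

# Hypothetical weekly escalation rates (share of tasks escalated to humans).
january = [0.10, 0.11, 0.09, 0.10]
june = [0.16, 0.18, 0.17, 0.17]
drifted, delta = escalation_drift(january, june)
print(drifted, round(delta, 2))  # True 0.07
```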

Accountability Mapping

Who is accountable when an agentic workflow makes a wrong decision that has real consequences? This question must be answered before deployment, not after. Clear accountability mapping — identifying the human responsible for each category of decision the agent makes — is both a governance requirement and a risk management essential. Without it, organizations discover during incidents that nobody can answer the question “who was responsible for this?”
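An accountability map can be as simple as a lookup from decision category to a named role, plus a pre-deployment check that nothing the agent can do is left unowned. The categories and role names below are invented for illustration.

```python
# Hypothetical decision categories and role names, for illustration only.
ACCOUNTABLE_OWNER = {
    "payment_approval": "finance_controller",
    "vendor_onboarding": "procurement_lead",
    "customer_refund": "support_operations_manager",
}


def unowned_categories(agent_categories: set, owners: dict) -> set:
    """Return decision categories the agent can act on that have no owner."""
    return {c for c in agent_categories if not owners.get(c)}


agent_categories = {"payment_approval", "customer_refund", "contract_renewal"}
missing = unowned_categories(agent_categories, ACCOUNTABLE_OWNER)
print(sorted(missing))  # categories to resolve before deployment
```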

A Practical 30/60/90-Day Deployment Roadmap

The gap between agentic AI as a concept and agentic AI as a functioning production system is closed through a structured deployment process. What follows is a framework derived from documented enterprise deployments, not theoretical best practices.

Days 1–30: Audit, Select, and Architect

Process Audit: Map two to three candidate workflows end-to-end. Document every step, decision point, exception pattern, and system involved. Identify where humans currently intervene and why. Flag any process steps where documentation doesn’t match practice.

Data Readiness Assessment: For each workflow, audit the data sources it touches. Check for consistency of definitions, completeness, update frequency, and access controls. Identify gaps before they become deployment blockers.

Governance Framework: Define action boundaries, accountability mapping, and human-in-the-loop thresholds before any code is written. Engage legal and compliance stakeholders at this stage, not after.

Architecture Decision: Choose your framework and coordination pattern based on workflow characteristics. Document this decision with explicit rationale — it will inform future decisions as the system scales.

Days 31–60: Build and Pilot

Single Workflow Pilot: Deploy one agent or agent cluster on the highest-readiness workflow. Prioritize workflows with high transaction volume, well-documented processes, and lower compliance risk for the first pilot.

Shadow Mode Operation: Run the agent in shadow mode for 1–2 weeks — it processes real workflows but its outputs are reviewed by humans rather than executed directly. This validates decision quality before going live.

KPI Baseline: Establish baseline metrics before going live: current task completion time, error rate, human intervention rate, and cost per transaction. You cannot measure improvement without a credible baseline.

Live Deployment with Full HITL: Go live with human review on all significant actions. Expand the agent’s autonomy gradually, relaxing review requirements one action category at a time as confidence in the system builds.
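The shadow-mode step in this phase comes down to comparing what the agent would have done with what the human reviewer actually did. A minimal sketch, assuming each case is recorded as an (agent action, human action) pair with illustrative action labels:

```python
def shadow_report(pairs: list) -> dict:
    """Compare agent proposals with what the human reviewer actually did.

    `pairs` is a list of (agent_action, human_action) tuples collected while
    the agent's output is logged but never executed.
    """
    total = len(pairs)
    disagreements = [(a, h) for a, h in pairs if a != h]
    agreement_rate = (total - len(disagreements)) / total if total else 0.0
    return {
        "total": total,
        "agreement_rate": agreement_rate,
        "disagreements": disagreements,
    }


pairs = [
    ("approve", "approve"),
    ("approve", "approve"),
    ("reject", "approve"),
    ("escalate", "escalate"),
]
report = shadow_report(pairs)
print(report["agreement_rate"])
print(report["disagreements"])
```

The disagreement list is usually more useful than the headline rate, because it shows which kinds of cases the agent and the reviewers see differently.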

Days 61–90: Measure, Iterate, and Scale

ROI Measurement: Compare live performance against baseline on your defined KPIs. Identify the gap between projected and actual performance and diagnose the causes.

Threshold Calibration: Adjust autonomy thresholds based on observed error patterns. Where the agent is consistently right, expand autonomy. Where errors cluster, tighten review requirements.

Multi-Agent Expansion: Once the first workflow is stable and measurable, apply lessons learned to the next workflow. The second deployment is typically faster than the first because the process and governance infrastructure already exist.

Stakeholder Review: Conduct a formal review at day 90 with operational, technical, legal, and finance stakeholders. Document what worked, what didn’t, and what the expansion roadmap looks like.
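Threshold calibration in this phase can be sketched as a per-category adjustment driven by observed error rates. The code assumes that autonomy is controlled by a minimum confidence score per decision category, which is only one of several possible designs. The cut-offs and step size are placeholders, not recommendations.

```python
def calibrate(thresholds: dict, error_rates: dict,
              expand_below: float = 0.01, tighten_above: float = 0.05,
              step: float = 0.05) -> dict:
    """Adjust per-category confidence thresholds from observed error rates.

    A threshold is the minimum model confidence needed to act without review:
    lowering it expands autonomy, raising it sends more cases to humans.
    """
    updated = {}
    for category, threshold in thresholds.items():
        error = error_rates.get(category)
        if error is None:
            updated[category] = threshold  # no data yet: leave unchanged
        elif error < expand_below:
            updated[category] = round(max(0.50, threshold - step), 2)
        elif error > tighten_above:
            updated[category] = round(min(0.99, threshold + step), 2)
        else:
            updated[category] = threshold
    return updated


thresholds = {"address_change": 0.90, "refund": 0.90, "credit_limit": 0.90}
error_rates = {"address_change": 0.004, "refund": 0.08}
print(calibrate(thresholds, error_rates))
```

Categories with no observed data are deliberately left alone, so autonomy only expands where there is evidence to support it.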

Measuring What Matters: KPIs for Agentic Workflows

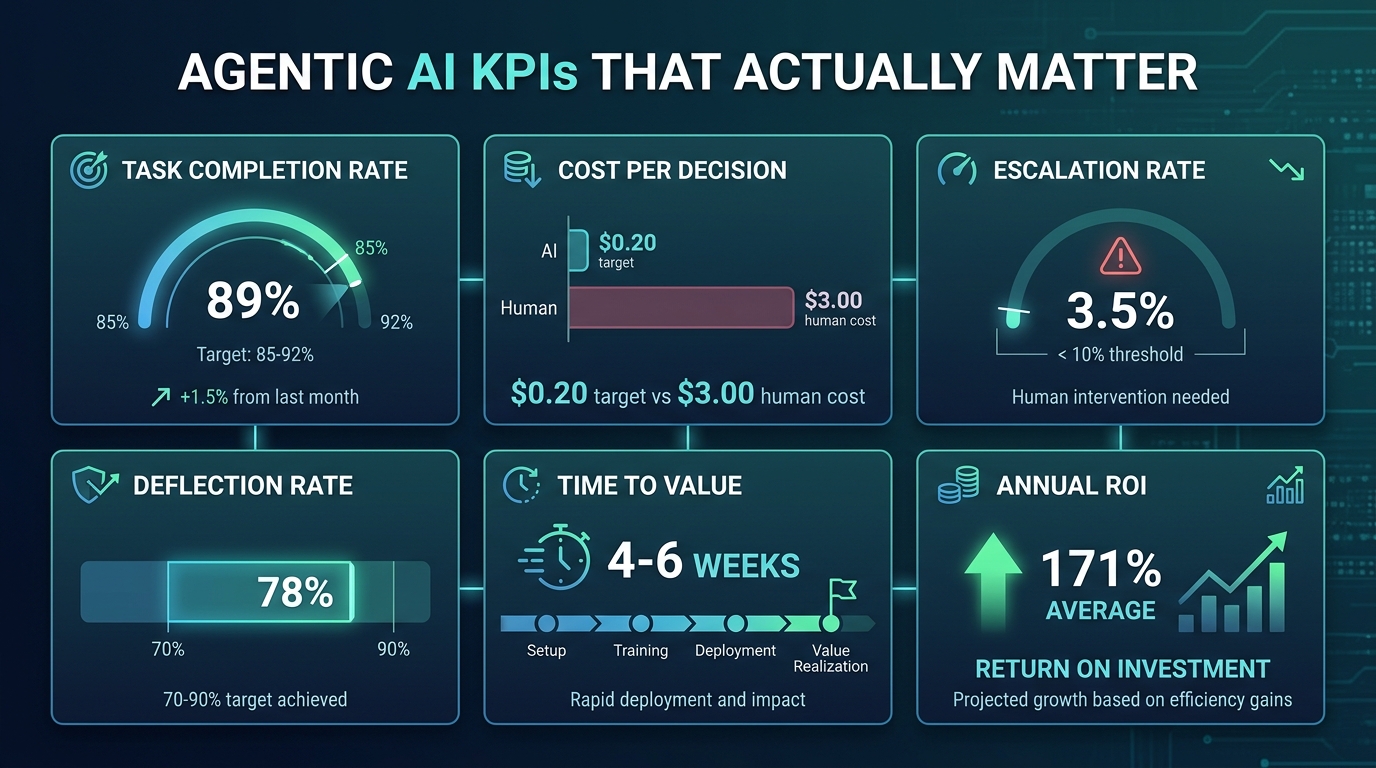

The metrics that matter for agentic workflows are different from the vanity metrics often used to assess AI initiatives. Here is a focused set of KPIs that directly measure whether an agentic workflow is delivering operational value — and where it’s falling short.

Task Completion Rate

What it measures: The percentage of workflow instances the agent completes end-to-end without human escalation.

Target range: 85–92% for mature deployments.

What it signals: Below 85% suggests insufficient process documentation, data quality issues, or overly conservative escalation thresholds. Above 95% in early deployments may suggest under-escalation — the agent may be completing tasks that should have been reviewed.
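The following sketch computes the rate from per-instance status labels and applies the bands described above. The status labels and the returned messages are illustrative.

```python
def completion_rate(statuses: list) -> float:
    """Share of workflow instances finished end-to-end without escalation.

    `statuses` holds one label per instance; the labels are illustrative.
    """
    if not statuses:
        return 0.0
    return statuses.count("completed") / len(statuses)


def interpret(rate: float, early_deployment: bool) -> str:
    """Apply the bands described in the text above."""
    if rate < 0.85:
        return "low: check documentation, data quality, escalation thresholds"
    if early_deployment and rate > 0.95:
        return "suspiciously high: check for under-escalation"
    return "ok"


statuses = ["completed"] * 88 + ["escalated"] * 9 + ["failed"] * 3
rate = completion_rate(statuses)
print(rate, interpret(rate, early_deployment=False))
```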

Cost per Decision (or Cost per Resolved Interaction)

What it measures: Total operating cost divided by number of decisions or interactions handled by the agent.

Target range: Under $0.20 for high-volume transactional workflows.

What it signals: This is the primary financial efficiency metric. Track it against the pre-deployment human cost baseline to measure actual cost displacement, not projected savings.
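A worked example with invented monthly figures shows the comparison against the human baseline. The sketch assumes that total operating cost includes everything attributable to the workflow (model usage, infrastructure, and the human time spent on escalations); leaving any of these out would understate the figure.

```python
def cost_per_decision(total_operating_cost: float, decisions: int) -> float:
    """Total operating cost divided by the number of decisions handled."""
    return total_operating_cost / decisions


# Hypothetical monthly figures for one workflow.
agent_cost = cost_per_decision(total_operating_cost=4_200.00, decisions=30_000)
human_baseline = cost_per_decision(total_operating_cost=54_000.00, decisions=30_000)

# Share of the baseline cost per decision that the agent has displaced.
displacement = 1 - agent_cost / human_baseline
print(f"agent: ${agent_cost:.2f}, baseline: ${human_baseline:.2f}, "
      f"displacement: {displacement:.0%}")
```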

Escalation Rate

What it measures: Percentage of tasks escalated to human review.

Target range: Under 10% in a mature deployment.

What it signals: High escalation rates indicate the agent is encountering more exceptions than anticipated — often a signal of data quality issues or process ambiguity. Analyze escalation patterns to identify systematic gaps, not just individual errors.

Deflection Rate

What it measures: In customer-facing workflows, the percentage of interactions fully resolved by the agent without human handoff.

Target range: 70–90% depending on workflow complexity.

What it signals: For customer service deployments, this is usually the single most impactful metric. Each percentage-point increase in deflection rate translates directly into lower cost and higher throughput.

Goal Attainment Rate

What it measures: Whether the agent’s completed actions actually achieved the intended outcome (not just whether they were completed).

Target range: Context-dependent, but should be tracked and trending upward.

What it signals: This is the quality metric that completion rate doesn’t capture. An agent that completes 90% of tasks but achieves the correct outcome only 80% of the time has a compounding quality problem that completion rate alone would hide.
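To make the arithmetic explicit, the snippet below multiplies the two rates from the example. It assumes the 80% applies to the tasks the agent completed, which is one reading of the example above.

```python
completion_rate = 0.90       # share of instances finished without escalation
goal_attainment_rate = 0.80  # share of those that reached the intended outcome

autonomous_and_correct = completion_rate * goal_attainment_rate
completed_but_wrong = completion_rate * (1 - goal_attainment_rate)

print(f"{autonomous_and_correct:.0%} completed and correct")
print(f"{completed_but_wrong:.0%} completed but wrong")
```

Under that reading, only 72% of all instances end in a correct autonomous result, and 18% are closed out with the wrong outcome and no human ever looking at them.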

Decision Latency

What it measures: Time from workflow initiation to completion.

Target range: Compare against pre-deployment baseline; a 50–70% reduction is typical in well-designed deployments.

What it signals: Latency spikes often indicate tool call failures, retry loops, or orchestration bottlenecks that need architectural attention.

What Comes After End-to-End: The Emerging Multi-Workflow Frontier

As individual agentic workflows mature and prove their value, the next architectural challenge is already visible in the most advanced enterprise deployments: orchestrating across multiple agentic workflows simultaneously. Not just end-to-end within a single process, but cross-functional coordination where actions in one workflow autonomously trigger and inform actions in another.

Cross-Workflow Triggers and Shared Context

Consider what becomes possible when an agentic supply chain workflow can directly signal a finance workflow: a detected delivery disruption automatically triggers a cash flow forecast update, which in turn triggers a procurement workflow to reassess pending purchase orders. This kind of cross-workflow intelligence requires a shared context layer — a persistent state that multiple agent clusters can read from and write to — along with governance controls that prevent cascading errors from propagating across interconnected systems.
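The chain described above can be sketched as a toy in-memory event bus with a shared context dictionary and a depth limit that stops runaway cascades. Everything here (the `WorkflowBus` class, the event names, the handlers) is hypothetical. A real deployment would use durable messaging and persistent state rather than in-process objects.

```python
from collections import defaultdict, deque


class WorkflowBus:
    """Toy in-memory event bus with a shared context and a cascade limit."""

    def __init__(self, max_depth: int = 3):
        self.handlers = defaultdict(list)
        self.context = {}           # shared state all workflows read and write
        self.max_depth = max_depth  # governance control against runaway chains
        self.blocked = []           # events stopped by the limit, for review

    def subscribe(self, event: str, handler) -> None:
        self.handlers[event].append(handler)

    def publish(self, event: str, payload: dict) -> None:
        queue = deque([(event, payload, 1)])
        while queue:
            name, data, depth = queue.popleft()
            if depth > self.max_depth:
                self.blocked.append(name)  # hand off to a human instead
                continue
            for handler in self.handlers[name]:
                # A handler may return (next_event, next_payload) or None.
                follow_up = handler(self.context, data)
                if follow_up:
                    queue.append((*follow_up, depth + 1))


def on_disruption(ctx, data):
    ctx["delayed_po"] = data["po"]
    return ("cash_forecast_updated", {"po": data["po"]})


def on_forecast(ctx, data):
    ctx["forecast_stale"] = False
    return ("reassess_purchase_orders", {"po": data["po"]})


def on_reassess(ctx, data):
    ctx["po_under_review"] = data["po"]
    return None


bus = WorkflowBus(max_depth=3)
bus.subscribe("delivery_disruption", on_disruption)
bus.subscribe("cash_forecast_updated", on_forecast)
bus.subscribe("reassess_purchase_orders", on_reassess)
bus.publish("delivery_disruption", {"po": "PO-1001"})
print(bus.context)
```

With `max_depth=2`, the third hop would be recorded in `blocked` instead of executed, which is the kind of control that keeps an error in one workflow from propagating through every workflow downstream of it.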

This is frontier territory even for the most advanced deployments. Most organizations in 2026 are correctly focused on making individual agentic workflows reliable before connecting them. But the architectural decisions made today about state management, protocol standards, and governance will determine how easily cross-workflow orchestration can be built when the organization is ready.

Continuous Learning and Process Evolution

One capability that distinguishes the leading enterprise deployments is the use of agent output data to continuously improve the underlying processes — not just the agents. When an agentic system processes thousands of exceptions over months and flags patterns that couldn’t be detected by human analysts, that’s an opportunity to redesign the process itself, not just tune the exception-handling.

Organizations that close this loop — using agentic workflow data to systematically improve the workflows those agents operate on — are compounding their efficiency gains in ways that organizations treating the agent as a static system cannot match.

Getting Started: The Decisions That Determine Everything Else

After examining architecture, case studies, ROI dynamics, failure modes, governance, and measurement, what are the highest-leverage decisions for an organization beginning its agentic AI journey?

Choose the Right First Workflow

The criteria for a good first agentic workflow are: high transaction volume (so you see results quickly), clear documentation (so you’re not building on confusion), measurable outcomes (so you can prove value), and lower regulatory complexity (so governance design is manageable). Don’t start with your most complex, highest-stakes process. Start with something that will teach you quickly and succeed visibly.

Invest in Process Documentation Before Technology

The single highest-return investment before any agentic deployment is rigorous process documentation. Organizations that can precisely describe every step, decision, exception, and system in a workflow before deployment consistently outperform those that treat documentation as a post-hoc activity. This is not a technology investment — it’s an operational discipline that determines whether any subsequent technology investment will succeed.

Build Governance Ahead of Scale

It’s far easier to govern one agentic workflow than thirty. The governance infrastructure you build for the first deployment — accountability mapping, action boundaries, audit logging, escalation thresholds — is the foundation you’ll scale from. Organizations that treat governance as an afterthought discover this at the worst possible time, typically during an incident or audit.

Measure Rigorously and Honestly

Agentic AI deployments generate enormous amounts of data about how they’re performing. Use it. Track the KPIs outlined earlier in this article from day one. Publish results, including disappointing ones, to stakeholders. Organizations that sugarcoat early performance data create unrealistic expectations that cause projects to be canceled when they should be iterated. Projects that are measured honestly can be improved; projects that are measured selectively tend to fail slowly and expensively.

Conclusion: The Operational Case for Autonomous Execution

Agentic AI for end-to-end workflows represents a genuine architectural shift in how enterprises can organize work. Not in the sense that everything changes immediately, but in the sense that the boundary between “tasks that require humans” and “tasks that don’t” has moved significantly — and is going to keep moving.

The organizations that will benefit most from this shift are not necessarily the ones that adopt fastest. They’re the ones that adopt most deliberately — building on solid process foundations, designing governance from the start, measuring outcomes rigorously, and iterating based on what the data actually shows rather than what they hoped would happen.

The goal isn’t to replace human judgment. The goal is to deploy human judgment where it actually matters — and let autonomous systems handle everything that doesn’t require it.

The practical path forward is clear: identify one well-documented, high-volume workflow. Audit your data quality. Define your governance boundaries before you write any code. Deploy in shadow mode. Measure against a real baseline. Expand only after the first deployment is demonstrably stable.

Every enterprise that has done this successfully started with one workflow, one team, and one set of metrics. The difference between them and the roughly 40% of projects that Gartner expects to be canceled is almost never the technology they chose. It’s the rigor with which they prepared for the technology to work.

Key Takeaways

- Agentic AI fills the “judgment gap” in workflows — the territory between clearly automatable tasks and decisions that genuinely require human expertise.

- Multi-agent architectures using MCP and A2A protocols are the production standard for complex enterprise workflows in 2026.

- 171% average enterprise ROI is real, but conditioned on process readiness, data quality, and governance design — not on the technology alone.

- Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027. The primary causes are process dysfunction, governance gaps, and data unreadiness, all preventable with proper preparation.

- Human-in-the-loop design should be tiered, risk-based, and designed in from the start — not retrofitted after deployment.

- Key KPIs: task completion rate (target 85–92%), cost per decision (target <$0.20), escalation rate (target <10%), and goal attainment rate.

- The 30/60/90-day deployment roadmap — audit/architect, build/pilot, measure/scale — is the pattern shared by successful deployments across industries.

- Cross-workflow orchestration is the emerging frontier; the architectural decisions made in first deployments determine how easily organizations reach it.