There is a stat that should bother every operations leader, IT director, and business owner who has sat through a vendor demo in the last two years: 79% of organizations report some form of agentic AI adoption. Only 11% have agentic systems running in production.

That 68-point gap is not a technology problem. The tools exist. The models are capable. The ROI numbers, where deployments do work, are genuinely compelling: an average 171% ROI, rising to 192% for U.S. enterprises, according to recent benchmarks. A B2B SaaS firm deployed multi-agent sales workflows and, within 90 days, went from 2 to 14 booked meetings per week with the same team. A strategy consulting firm cut research time from 40 hours per project to 14 hours, grew revenue from $2M to $3.1M, and saw ROI within five months.

The gap is an execution problem. And it stems from a specific misunderstanding about what agentic workflows actually are, how they differ from the automation tools most organizations already have, and — critically — how to pick the right tasks, build the right architecture, and govern the system without it quietly failing six weeks in.

This article does not argue that agentic AI will automate everything. It argues something more useful: that there is a specific, well-defined class of routine business tasks that organizations continue to run manually not because automation is impossible, but because they have been reaching for the wrong tools. Agentic workflows are the right tool for that class. Here is what that means in practice.

What “Agentic” Actually Means — And Why the Distinction Matters

The word “agentic” has been applied to so many different products that it has become nearly meaningless in marketing contexts. So let’s be precise.

Three Categories of Automation (And Why They Are Not Interchangeable)

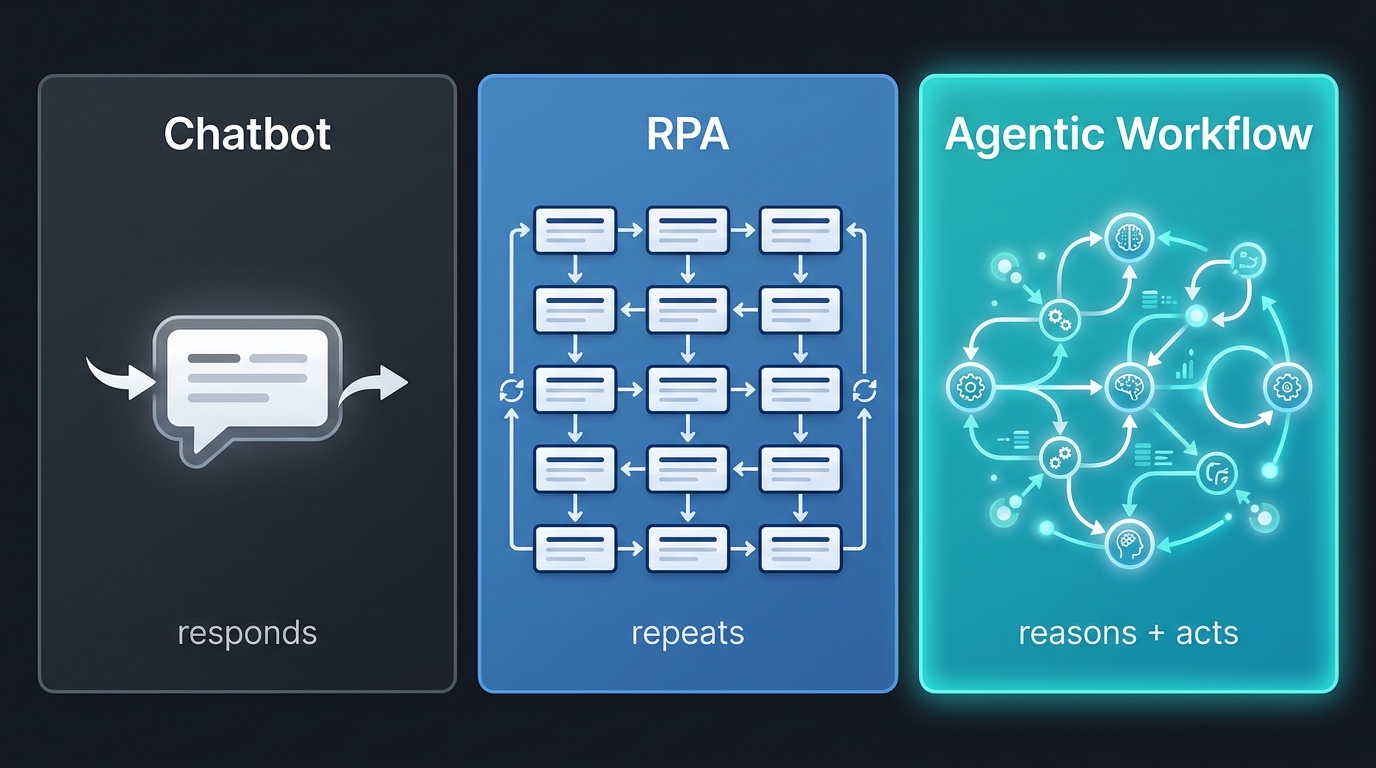

Chatbots and basic LLM interfaces respond to a single input with a single output. You ask a question; you get an answer. There is no persistence, no tool use, no ability to take action in external systems. Most “AI assistants” deployed in 2023 and 2024 fall into this category.

Robotic Process Automation (RPA) executes predefined sequences of steps in software: clicking buttons, copying data between systems, filling forms. It is deterministic and rule-based. RPA excels at high-volume, predictable tasks whose inputs are structured and do not vary. Invoice posting in a legacy ERP. Payroll data migration. Compliance field population where the rules never change. RPA delivers 20–40% cost reductions in those contexts and remains genuinely useful. But it breaks the moment the input changes. An invoice arrives in a slightly different format, a field name shifts, a vendor uses a new template, and the entire workflow fails or produces errors that humans must then clean up.

Agentic workflows are fundamentally different in architecture. An agentic system combines a language model’s reasoning capability with the ability to use tools — APIs, databases, browsers, code execution environments — and to plan, adjust that plan based on intermediate results, and take multi-step action across multiple systems. Critically, an agent can decide what to do next based on what it encounters, not just execute a predetermined sequence.

What Makes a Workflow “Agentic” in Practice

An agentic workflow has four characteristics that distinguish it from simpler automation:

- Goal-directed planning: The agent receives a goal (“process this vendor invoice and flag any discrepancies”) rather than a script. It determines the steps needed to reach that goal.

- Tool use: The agent can interact with external systems — querying a database, sending an API call, reading a document, writing to a CRM — as needed to complete its task.

- Adaptive replanning: When the agent encounters an unexpected result — a missing field, an ambiguous value, a system timeout — it can reason about how to proceed rather than stopping entirely.

- Orchestration across agents: In multi-agent architectures, a primary orchestrator delegates subtasks to specialized agents (a validation agent, a routing agent, a reporting agent), coordinates their outputs, and resolves conflicts between them.
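To make the first three characteristics concrete, here is a deliberately small Python sketch of a single agent loop. It is an illustration under stated assumptions, not a production design: the tools (`read_invoice`, `lookup_po`, `lookup_po_backup`) are hypothetical stand-ins for real integrations, and the language model's reasoning is replaced by hard-coded `plan` and `replan` functions so the control flow (goal in, tool calls, replanning on a timeout) stays visible.

```python
from typing import Callable


class ToolTimeout(Exception):
    """Raised when an external system does not respond (hypothetical)."""


# Hypothetical tools standing in for real system integrations.
def read_invoice(state: dict) -> dict:
    return {"po_number": "PO-1001", "amount": 480.0}


def lookup_po(state: dict) -> dict:
    raise ToolTimeout("ERP did not respond")  # simulated outage


def lookup_po_backup(state: dict) -> dict:
    return {"po_amount": 500.0}


TOOLS: dict[str, Callable[[dict], dict]] = {
    "read_invoice": read_invoice,
    "lookup_po": lookup_po,
    "lookup_po_backup": lookup_po_backup,
}


def plan(goal: str) -> list[str]:
    # Stand-in for model-driven planning: turn the goal into initial steps.
    return ["read_invoice", "lookup_po"]


def replan(failed_step: str, remaining: list[str]) -> list[str]:
    # Adaptive replanning: route around a failed lookup instead of stopping.
    if failed_step == "lookup_po":
        return ["lookup_po_backup"] + remaining
    raise RuntimeError(f"no recovery path for {failed_step}; escalate to a human")


def run_agent(goal: str) -> dict:
    state: dict = {"goal": goal, "log": []}
    steps = plan(goal)
    while steps:
        step = steps.pop(0)
        try:
            state.update(TOOLS[step](state))  # tool use
            state["log"].append(f"ok:{step}")
        except ToolTimeout:
            state["log"].append(f"timeout:{step}")
            steps = replan(step, steps)
    # Goal check: flag a discrepancy between invoice and PO amounts.
    state["discrepancy"] = state["amount"] != state["po_amount"]
    return state


result = run_agent("process this vendor invoice and flag any discrepancies")
print(result["log"], result["discrepancy"])
```

In a real deployment the model would choose the next step from the tool descriptions and intermediate results; the fixed functions here only mark where that reasoning happens.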

The practical result is a system that handles variability — the thing that causes RPA to fail and humans to stay in the loop. Agentic workflows do not replace RPA for high-volume, perfectly structured, never-changing processes. They replace manual work (and failed automation attempts) for processes that are routine in their intent but variable in their execution. That category is enormous.

The Scale of the Opportunity

Multi-agent workflow usage surged 327% in the second half of 2025 according to Databricks data. The agentic AI market stood at approximately $5.25 billion in 2024 and is projected to reach $199 billion by 2034 at a 45.8% compound annual growth rate. Those numbers reflect genuine enterprise demand — not hype cycles or pilot programs. By the end of 2026, Deloitte projects more than 60% of large enterprises will have deployed agentic AI at scale.

The Anatomy of a Routine Task Worth Automating

The most common reason agentic deployments underperform is that organizations choose the wrong starting tasks. They either automate something so simple that a basic script would have sufficed, generating minimal value, or they attempt to automate something so complex and judgment-heavy that the agent fails constantly and requires more human intervention than the manual process did.

The productive middle ground is specific and learnable.

The Four Filters

A task is well-suited for agentic automation if it passes four filters:

Filter 1: High recurrence with variable execution. The task happens frequently — daily, weekly, or thousands of times per month — but no two instances are exactly identical. A customer support ticket arrives every hour, but each ticket has a different issue, different customer history, different urgency level. An invoice arrives daily, but each vendor’s format, line items, and exception scenarios differ. High recurrence justifies the investment; variable execution is what makes agents valuable over simpler tools.

Filter 2: Clear goal, ambiguous path. You can define what “done” looks like — the invoice is approved, the ticket is resolved, the compliance report is filed — but the steps to get there depend on what the agent finds along the way. If the path is always identical, use RPA. If the path varies based on content, context, or intermediate results, use an agent.

Filter 3: Multi-system dependencies. The task requires pulling data from or writing to more than one system. Invoice processing requires an ERP, an email inbox, a vendor database, and an approval system. Onboarding requires an HRIS, an IT provisioning system, a learning management system, and a Slack channel. Every system boundary that requires a human handoff is a candidate for agent-mediated integration.

Filter 4: Quantifiable cost of manual execution. You can measure — or reasonably estimate — the current cost. Time per instance, error rate, escalation frequency, downstream rework. Without this baseline, you cannot build a business case or measure success. Tasks where the cost is invisible tend to stay manual indefinitely even when automation is straightforward.
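One way to apply the four filters consistently across many candidate tasks is to encode them as a checklist. The Python sketch below assumes a hypothetical `TaskProfile` record and a hypothetical recurrence cutoff (`min_runs`); the yes/no judgments themselves still come from people who know the process.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    name: str
    runs_per_month: int
    variable_execution: bool   # Filter 1: do instances differ from one another?
    clear_goal: bool           # Filter 2: can "done" be defined?
    path_varies: bool          # Filter 2: do the steps depend on what is found?
    systems_touched: int       # Filter 3: how many systems are read or written?
    cost_per_run_known: bool   # Filter 4: is there a measurable baseline?


def passes_filters(task: TaskProfile, min_runs: int = 100) -> dict[str, bool]:
    """Evaluate the four filters; min_runs is an assumed recurrence cutoff."""
    return {
        "recurrence_with_variability": task.runs_per_month >= min_runs
        and task.variable_execution,
        "clear_goal_ambiguous_path": task.clear_goal and task.path_varies,
        "multi_system": task.systems_touched > 1,
        "quantifiable_cost": task.cost_per_run_known,
    }


invoices = TaskProfile("invoice exceptions", 2000, True, True, True, 4, True)
results = passes_filters(invoices)
print(results, all(results.values()))
```

A task that fails only Filter 2 (a fixed path) is usually an RPA candidate rather than an agentic one.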

The Quadrant Map

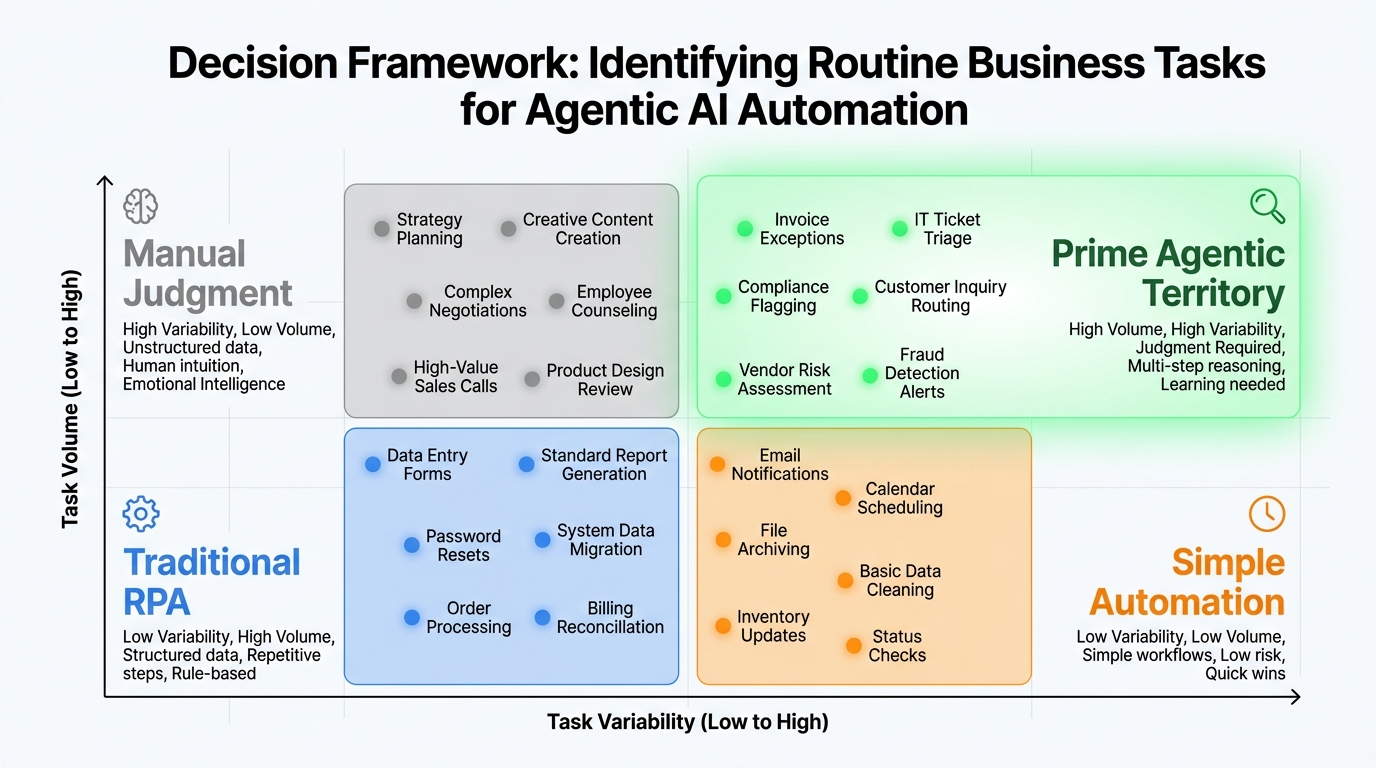

Plot your candidate tasks on two axes: task volume (how frequently it occurs) on the Y-axis, and task variability (how much execution differs between instances) on the X-axis. The quadrants sort themselves logically:

- Low volume, low variability: Automate with simple scripts or RPA. Agent overhead is not justified.

- High volume, low variability: Traditional RPA territory. Fast implementation, reliable results.

- Low volume, high variability: Keep human judgment. Not enough recurrence to justify the build cost.

- High volume, high variability: Prime agentic territory. This is where agentic workflows generate disproportionate returns. Invoice exceptions, IT ticket triage, compliance anomaly flagging, HR onboarding variations — these tasks are the core use case for agentic deployment.
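The quadrant map reduces to two threshold comparisons. In this Python sketch, the variability score (0 to 1, for example the share of instances that need non-standard handling) and both cutoffs are assumptions to be set per organization, and the example tasks are hypothetical.

```python
def quadrant(runs_per_month: float, variability: float,
             volume_cutoff: float = 500, variability_cutoff: float = 0.5) -> str:
    """Place a task on the volume/variability map (cutoffs are assumptions)."""
    high_volume = runs_per_month >= volume_cutoff
    high_variability = variability >= variability_cutoff
    if high_volume and high_variability:
        return "agentic workflow"
    if high_volume:
        return "RPA"
    if high_variability:
        return "human judgment"
    return "script or RPA"


# Hypothetical candidates: (runs per month, variability score).
candidates = {
    "invoice exceptions": (3000, 0.7),
    "payroll data migration": (4000, 0.1),
    "one-off vendor dispute": (5, 0.9),
    "monthly report export": (20, 0.1),
}
for name, (volume, var) in candidates.items():
    print(f"{name}: {quadrant(volume, var)}")
```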

What the Numbers Say About Candidate Selection

Organizations that start with tasks in the high-volume, high-variability quadrant report 30–50% faster time-to-first-automation for knowledge-heavy workflows than organizations that try to automate similar tasks with RPA. Wiley, the publishing company, deployed an agentic customer service system through Salesforce Agentforce and achieved a 40%+ increase in case resolution rates. A financial services firm that shifted from RPA to agentic processing for invoice exceptions saw a 22% reduction in exception handling time and a 30% reduction in manual touches per invoice. The gain came not from automating the volume away, but from the agent correctly handling, on first pass, the variable cases that used to require a human analyst.

Inside a Live Agentic Workflow: How the Pieces Fit Together

Most organizations that have struggled with agentic deployments share a common misunderstanding: they think of an “AI agent” as a single entity, like a very smart chatbot with tool access. In production-grade agentic workflows, the architecture is more nuanced than that, and understanding it is a prerequisite for building something that actually works.

The Orchestrator-Agent Model

A production agentic workflow typically separates concerns into distinct roles:

The Orchestrator receives the top-level goal and is responsible for decomposing it into subtasks, assigning those subtasks to specialized agents, monitoring completion, handling failures, and assembling the final output. The orchestrator does not execute tasks directly — it manages the execution chain.

Specialized Agents each handle a defined domain. A document extraction agent processes incoming files and normalizes their content. A validation agent checks extracted data against business rules. A routing agent determines the appropriate approver or next action based on rules and thresholds. A reporting agent assembles outputs in the required format and delivers them to the right system. A specialized agent is narrow in scope and reliable because of that narrowness.

Tool Integrations connect agents to real systems. Each agent has access to a defined set of tools: the APIs, databases, and interfaces it needs to complete its role. The validation agent has read access to the vendor database and the purchase order system. The routing agent has write access to the approval queue. Tool access is scoped and permissioned, not open-ended.
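Scoped, permissioned tool access can be enforced with a single gate that every tool call passes through. This Python sketch assumes a hypothetical `SCOPES` table mirroring the validation and routing roles described above; a real deployment would typically enforce the same rule in the platform's identity and access layer as well, not only in agent code.

```python
class PermissionDenied(Exception):
    pass


# Hypothetical scopes: each agent lists the (system, access level) pairs it may use.
SCOPES: dict[str, set[tuple[str, str]]] = {
    "validation_agent": {("vendor_db", "read"), ("purchase_orders", "read")},
    "routing_agent": {("approval_queue", "write")},
}


def call_tool(agent: str, system: str, access: str) -> str:
    """Refuse any call outside the agent's declared scope."""
    if (system, access) not in SCOPES.get(agent, set()):
        raise PermissionDenied(f"{agent} may not {access} {system}")
    return f"{agent} -> {access} {system}"  # placeholder for the real API call


print(call_tool("validation_agent", "vendor_db", "read"))
try:
    call_tool("validation_agent", "approval_queue", "write")
except PermissionDenied as err:
    print("blocked:", err)
```

Denying by default (an unknown agent gets an empty scope) keeps a misconfigured agent from acquiring access it was never granted.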

State, Memory, and Context

What makes multi-agent workflows coherent across steps is state management. Each agent needs to know what has already happened in the workflow — what the orchestrator asked for, what previous agents found, what decisions were made upstream. Production workflows typically implement three types of memory:

- Short-term context: The current session’s data, passed between agents as the task progresses.

- Long-term retrieval: Access to organizational knowledge — policy documents, historical cases, vendor records — via vector databases or retrieval-augmented generation (RAG) systems.

- Procedural memory: Learned patterns from past runs, stored as refined prompts or rule updates that improve agent performance over time.

This architecture is what makes agentic workflows qualitatively different from a chain of API calls. Each step informs the next. The system can recognize that it has seen a similar edge case before and apply the same resolution. It can flag that a document appears to be a duplicate because it matches the pattern of a case processed three weeks earlier.
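The following Python sketch covers two of the three memory types. Short-term context travels between agents as a shared object, and long-term memory is checked for a prior matching case, as in the duplicate-document example. The agent functions and the `PAST_CASES` store are hypothetical, and the exact-match fingerprint is a simplification: production systems might use vector similarity over a retrieval store instead. Procedural memory is left out.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class WorkflowContext:
    """Short-term context handed from agent to agent within one run."""
    goal: str
    findings: dict = field(default_factory=dict)
    decisions: list[str] = field(default_factory=list)


# Long-term memory (hypothetical): past cases keyed by a normalized fingerprint.
PAST_CASES: dict[tuple, date] = {
    ("acme corp", "inv-4471", 1250.00): date(2025, 5, 2),
}


def fingerprint(vendor: str, invoice_no: str, amount: float) -> tuple:
    # Normalize case, whitespace, and rounding so trivial variations still match.
    return (vendor.strip().lower(), invoice_no.strip().lower(), round(amount, 2))


def extraction_agent(ctx: WorkflowContext, raw: dict) -> None:
    ctx.findings["fingerprint"] = fingerprint(raw["vendor"], raw["invoice_no"], raw["amount"])
    ctx.decisions.append("extracted")


def duplicate_agent(ctx: WorkflowContext) -> None:
    # Reads what the previous agent recorded in the shared context.
    seen = PAST_CASES.get(ctx.findings["fingerprint"])
    if seen is not None:
        ctx.findings["possible_duplicate_of"] = seen.isoformat()
        ctx.decisions.append("flagged duplicate")
    else:
        ctx.decisions.append("no precedent")


ctx = WorkflowContext(goal="process invoice")
extraction_agent(ctx, {"vendor": "ACME Corp ", "invoice_no": "INV-4471", "amount": 1250})
duplicate_agent(ctx)
print(ctx.decisions, ctx.findings.get("possible_duplicate_of"))
```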

Escalation as Architecture, Not Exception Handling

One of the most important design decisions in any agentic workflow is the escalation path: under what conditions does the agent stop and hand off to a human? This is not exception handling in the software engineering sense; it is a deliberate design parameter. The best-performing deployments define explicit escalation triggers during architecture, not after something goes wrong. Common triggers include confidence scores below a defined threshold, financial values above a set limit, novel scenarios with no matching precedent in long-term memory, and regulatory or compliance-sensitive decisions flagged by policy. Escalation is not failure. It is the safety valve that lets the system operate autonomously on the 85–90% of cases it handles well while preserving human oversight for the cases that matter most.
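Because escalation triggers are design parameters, they can be written down as explicit, testable rules. In this Python sketch the thresholds (`MIN_CONFIDENCE`, `MAX_AUTO_AMOUNT`) and the `Decision` fields are hypothetical values chosen for illustration. The function returns every trigger that fires rather than a bare yes/no, so the human reviewer sees why the case was escalated.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    confidence: float           # agent's confidence in its proposed action, 0-1
    amount: float               # financial value at stake
    has_precedent: bool         # matching case found in long-term memory?
    compliance_sensitive: bool  # flagged by policy


# Hypothetical thresholds, fixed at design time rather than after an incident.
MIN_CONFIDENCE = 0.85
MAX_AUTO_AMOUNT = 10_000.0


def escalation_reasons(d: Decision) -> list[str]:
    """Return every trigger that fires; an empty list means proceed autonomously."""
    reasons = []
    if d.confidence < MIN_CONFIDENCE:
        reasons.append("low confidence")
    if d.amount > MAX_AUTO_AMOUNT:
        reasons.append("amount above threshold")
    if not d.has_precedent:
        reasons.append("novel scenario")
    if d.compliance_sensitive:
        reasons.append("compliance-sensitive")
    return reasons


print(escalation_reasons(Decision(0.97, 420.0, True, False)))    # []
print(escalation_reasons(Decision(0.70, 25_000.0, True, False)))  # ['low confidence', 'amount above threshold']
```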

Finance and Compliance: Where Agentic Workflows Win First

Finance operations are the highest-value starting point for most organizations deploying agentic workflows. The reasons are structural: finance processes are high-volume, rule-governed, multi-system, and carry measurable cost consequences for errors or delays. They also suffer disproportionately from the exact class of variability that defeats RPA — vendor-specific invoice formats, exception-heavy purchase order matching, jurisdiction-specific compliance requirements.

Invoice Processing: The Canonical Use Case

Invoice processing sits in the highest-value cell of the agentic quadrant for almost every organization above a certain scale. The average enterprise processes thousands of invoices monthly. Each arrives in a different format from a different vendor. Each must be matched to a purchase order, validated against contract terms, routed to the correct approver, and posted to the correct cost center in the ERP. Line-item exceptions — price discrepancies, missing PO references, quantity mismatches — require lookup, judgment, and often a communication back to the vendor.

A three-agent architecture handles this end-to-end. An extraction agent parses incoming invoices regardless of format, normalizes the data, and passes structured output to a validation agent. The validation agent runs rules-based checks (PO matching, price tolerance, duplicate detection) and tags exceptions by type and severity. The routing agent applies approval thresholds and sends approved invoices to the ERP while escalating flagged exceptions to the appropriate human reviewer with a pre-populated summary.
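The three-agent flow can be sketched in Python as three functions handing a shared record down the chain. This is a minimal sketch under stated assumptions: the purchase order table, the 2% price tolerance, and the approval limit are hypothetical, extraction only normalizes a few vendor-specific field names (a real extraction agent would use a model or OCR service to parse arbitrary formats), and duplicate detection is omitted for brevity.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical reference data and policy values.
PURCHASE_ORDERS = {"PO-1001": 500.00}
PRICE_TOLERANCE = 0.02    # assumed 2% price tolerance
APPROVAL_LIMIT = 5_000.0  # assumed auto-approval ceiling


@dataclass
class Invoice:
    vendor: str
    po_number: Optional[str]
    amount: float
    exceptions: list[str] = field(default_factory=list)


def extraction_agent(raw: dict) -> Invoice:
    # Map vendor-specific field names onto one schema.
    po = raw.get("po_number") or raw.get("PO") or raw.get("purchase_order")
    total = raw.get("total") or raw.get("amount_due")
    return Invoice(vendor=raw["vendor"], po_number=po, amount=float(total))


def validation_agent(inv: Invoice) -> Invoice:
    # Rules-based checks; each failure is tagged as an exception type.
    if inv.po_number is None:
        inv.exceptions.append("missing PO reference")
    elif inv.po_number not in PURCHASE_ORDERS:
        inv.exceptions.append("unknown PO")
    else:
        expected = PURCHASE_ORDERS[inv.po_number]
        if abs(inv.amount - expected) > expected * PRICE_TOLERANCE:
            inv.exceptions.append("price discrepancy")
    return inv


def routing_agent(inv: Invoice) -> str:
    # Exceptions go to a human with a pre-populated summary; clean invoices post.
    if inv.exceptions:
        return f"escalate to reviewer: {inv.vendor}, {', '.join(inv.exceptions)}"
    if inv.amount > APPROVAL_LIMIT:
        return "route to manager approval"
    return "post to ERP"


for raw in [
    {"vendor": "Acme", "PO": "PO-1001", "total": "505.00"},
    {"vendor": "Globex", "amount_due": 120.0},
]:
    print(routing_agent(validation_agent(extraction_agent(raw))))
```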

Organizations implementing this architecture report a 30% reduction in manual touches per invoice, a 22% reduction in time to process exception cases, and a meaningful reduction in late payment penalties that resulted from backlogs in manual review queues. Ciena, which deployed agentic workflows across 100+ finance and HR processes including invoice handling, cut approval times from days to minutes and substantially reduced manual handoffs across ERP and payroll systems.

Compliance Reporting and Audit Preparation

Compliance is often the most underestimated use case, because the cost of manual compliance work is distributed across many teams and rarely appears as a line item. Audit preparation alone can consume weeks of analyst time per quarter — pulling data from trading systems, risk databases, HR systems, and financial records; cross-referencing against regulatory requirements; producing audit-ready documentation with proper attestation.

JPMorgan Chase has been explicit about deploying agentic AI for legal and compliance workflows, with documented efficiency gains of up to 20% in compliance cycle times. The agents handle the multi-step process of pulling data from disparate systems, cross-referencing against rule sets, flagging potential breaches, replanning when data is incomplete, and producing final outputs in the required format. The 20% cycle efficiency number understates the value, because it applies to work that was previously among the most skilled — and therefore most expensive — in the finance department.

Linde Group’s deployment for security audit reporting is more dramatic: report preparation time dropped from 24 hours to 2 hours per report, a 92% reduction, with thousands of hours reclaimed annually across 500-plus users.

Cash Management and Treasury Operations

Cash reconciliation, payment forecasting, and treasury position reporting involve pulling data from multiple banking relationships, applying classification rules, and producing daily or weekly summaries that inform decisions. These workflows are high-frequency, moderately complex, and time-sensitive — which makes them poor candidates for batch RPA and strong candidates for agentic systems that can pull, normalize, classify, and report across all connected systems on a continuous basis. Organizations running agentic cash management workflows report reducing reconciliation time from hours to minutes per cycle.

HR, Onboarding, and Operations: The Hidden Time Sinks

HR operations contain some of the most expensive manual workflows in any organization — not because individual tasks are difficult, but because each one requires coordinating multiple systems, multiple approvals, and multiple external parties in a sequence that varies based on role, location, and individual circumstance. The result is processes that are both routine in nature and exhausting to execute manually.

Employee Onboarding: 60–80% Time Reduction on the Table

Standard employee onboarding involves provisioning accounts across IT systems (email, Slack, VPN, development tools), enrolling the new hire in payroll and benefits, assigning mandatory training modules in the LMS, scheduling orientation sessions, collecting compliance documents (I-9, NDAs, tax forms), and sending welcome communications from the manager. Each of these steps requires action in a different system, and each depends on information from the steps before it.

Manual onboarding takes an average of 10–15 days to fully complete across a typical mid-size organization, with HR teams spending an estimated 5–8 hours per new hire on coordination tasks alone — work that generates zero value and consumes staff who could be doing work that actually requires human judgment.

Agentic onboarding workflows achieve 60–80% reductions in per-hire coordination time. An orchestrator agent receives notification of a new hire from the HRIS, triggers parallel provisioning in each connected system, monitors completion of each subtask, handles errors (like a duplicate username conflict) without human intervention, and escalates only when a system is unavailable or a compliance document cannot be verified. The new hire gets a faster experience; HR gets hours back.
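The orchestrator pattern for onboarding (fan out to each system in parallel, resolve what it can, escalate what it cannot) maps naturally onto Python's `asyncio.gather`. In this sketch every provisioning function is hypothetical and `asyncio.sleep(0)` stands in for a real API call. The email step resolves a duplicate-username conflict on its own, while a failed document check is collected as an escalation instead of stopping the other tasks.

```python
import asyncio

TAKEN_USERNAMES = {"jdoe"}  # hypothetical existing accounts


async def provision_email(hire: dict) -> str:
    username, suffix = hire["username"], 1
    # Resolve a duplicate-username conflict without human intervention.
    while username in TAKEN_USERNAMES:
        suffix += 1
        username = f"{hire['username']}{suffix}"
    TAKEN_USERNAMES.add(username)
    await asyncio.sleep(0)  # stands in for the directory API call
    return f"email:{username}"


async def enroll_payroll(hire: dict) -> str:
    await asyncio.sleep(0)
    return "payroll:enrolled"


async def assign_training(hire: dict) -> str:
    await asyncio.sleep(0)
    return "lms:assigned"


async def verify_documents(hire: dict) -> str:
    await asyncio.sleep(0)
    if not hire.get("i9_verified"):
        raise RuntimeError("I-9 could not be verified")
    return "docs:verified"


async def onboard(hire: dict) -> dict:
    tasks = [provision_email, enroll_payroll, assign_training, verify_documents]
    # return_exceptions=True keeps one failure from cancelling the others.
    results = await asyncio.gather(*(t(hire) for t in tasks), return_exceptions=True)
    return {
        "completed": [r for r in results if isinstance(r, str)],
        "escalate": [str(r) for r in results if isinstance(r, Exception)],
    }


result = asyncio.run(onboard({"username": "jdoe", "i9_verified": False}))
print(result)
```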

Performance Review Preparation and Documentation

The administrative burden around performance cycles (gathering peer feedback, compiling productivity data, summarizing prior goals, drafting review frameworks for managers) is substantial and largely uniform in character. It is the kind of work that is important to do well but does not require the manager to be the one doing the data aggregation. Agentic workflows that handle document retrieval, data compilation, and draft generation can return meaningful hours to managers. A related reporting use case points the same way: a financial advisory firm reported a 55% reduction in manual effort around reporting workflows and an 18% improvement in client retention metrics correlated with faster, more consistent reporting outputs.

Procurement and Vendor Management Operations

Procurement workflows — vendor onboarding, purchase request routing, contract renewal alerts, supplier performance tracking — share the same structural characteristics as finance workflows but sit in operations rather than finance. They are high-recurrence, rule-governed, multi-system, and variable in execution. Multi-agent procurement systems show 8–15% reductions in procurement costs through faster cycle times, fewer manual errors, and earlier identification of contract discrepancies. Vendor onboarding, which typically requires coordinating across legal, finance, IT, and the business unit making the request, can drop from a two-week process to a two-day process when an orchestrator agent manages the coordination chain.

IT and Customer Support: The Numbers Are Hard to Ignore

IT support and customer service operations were among the first functional areas to adopt conversational AI, and they are now the most advanced in terms of agentic deployment. The data from mature deployments in these areas provides the clearest proof of what agentic workflows actually deliver at scale.

IT Service Management: 80% Auto-Resolution

Tier-1 IT support is definitionally routine: password resets, software access requests, VPN troubleshooting, hardware provisioning, software installations. These tasks follow defined playbooks, require actions in multiple systems (Active Directory, IT asset management, ticketing system, provisioning tools), and collectively consume enormous amounts of IT staff time that could be redirected to higher-value work.

Organizations deploying agentic ITSM workflows report 80% auto-resolution rates for Tier-1 requests. At scale, this translates to substantial cost reduction — enterprises running high-volume IT environments report ITSM licensing cost reductions of up to 50% by shifting resolution from human-handled to agent-handled tiers, with total annual savings exceeding $5 million in large enterprises. Response times drop from hours or days to minutes, and staff are redirected to infrastructure, security, and development work.

Customer Support: From 40–65% Deflection to Measurable CSAT Gains

At 1,000 customer support tickets per month with a $22 average cost per ticket, support costs $264,000 a year, so an agentic system that auto-resolves 40–65% of tickets generates roughly $105,000–$171,000 in annual savings from a single deployment. That ROI calculation is straightforward and well documented. What is less often quantified is the CSAT improvement: when agents handle Tier-1 tickets with sub-30-second response times and consistent, accurate answers, customer satisfaction scores improve 10–15 points. That improvement compounds. Higher satisfaction drives retention, retention drives revenue, and revenue growth is the metric that closes the executive business case.
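The savings figure is simple arithmetic, and writing it as a function makes it easy to rerun with your own ticket volume and cost per ticket. This Python sketch computes gross savings only; it does not subtract the platform's ongoing inference and licensing costs.

```python
def annual_support_savings(tickets_per_month: int, cost_per_ticket: float,
                           auto_resolution_rate: float) -> float:
    """Gross annual cost avoided on tickets the agent resolves without a human."""
    return tickets_per_month * 12 * cost_per_ticket * auto_resolution_rate


low = annual_support_savings(1_000, 22.0, 0.40)
high = annual_support_savings(1_000, 22.0, 0.65)
print(f"${low:,.0f} to ${high:,.0f}")  # $105,600 to $171,600
```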

A banking organization that deployed agentic loan processing reduced loan processing time from 60 minutes to 10 minutes per application, achieving ROI within 0.33 months (roughly 10 days) of deployment. The speed improvement also increased conversion rates, because applicants were less likely to abandon the process while waiting.

Sales Development and Outreach Workflows

Sales development involves a class of routine tasks — prospect research, personalization of outreach, CRM updates, follow-up sequencing — that are individually low-complexity but collectively consume the majority of an SDR’s working week. The B2B SaaS case cited earlier is instructive: a multi-agent system with separate agents for prospect research, outreach personalization, CRM updates, and workflow orchestration allowed the same sales team to increase booked meetings from 2 per week to 14 per week within 90 days. The system did not replace salespeople; it removed the research and administration work that was preventing salespeople from doing the high-value work they were hired for.

Why So Many Deployments Fail — And What the Survivors Do Differently

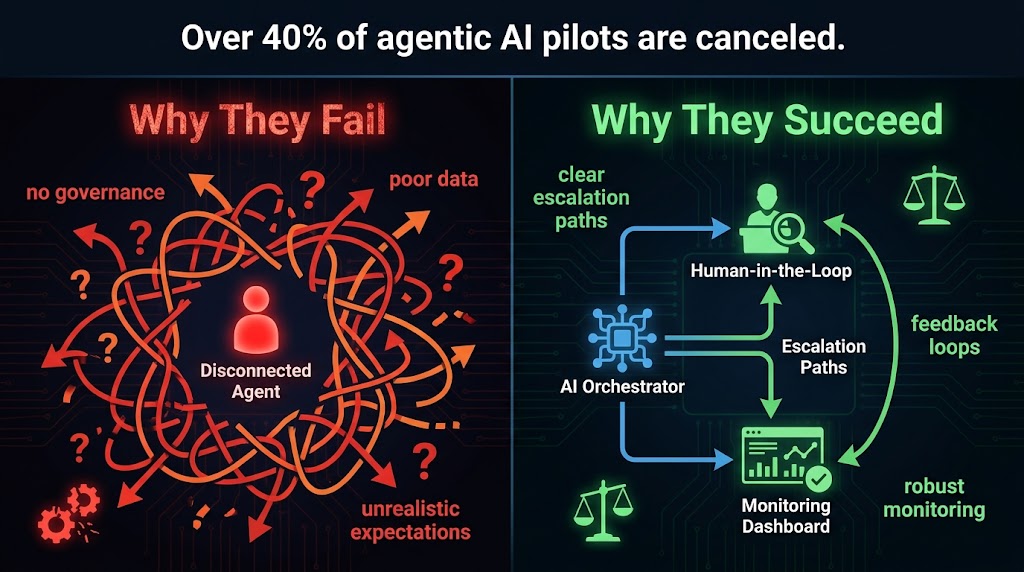

Gartner projects that over 40% of agentic AI projects will be canceled before reaching production. MIT research places the failure rate even higher, at 95%, for pilots that are treated like set-it-and-forget-it RPA deployments. Understanding where the failures occur is as important as understanding the success cases, because most organizations will encounter at least one of these failure modes.

Failure Mode 1: Technology Before Process Architecture

The most common failure is beginning with a technology choice — selecting a platform, purchasing licenses, standing up infrastructure — before achieving clarity on the process being automated. A workflow that lacks atomic steps, explicit escalation rules, and a defined success state will fail regardless of the quality of the underlying model. The agent needs to know what it is trying to accomplish, what constitutes an acceptable output, and what to do when it encounters a state it has not seen before. Organizations that skip the process mapping phase are essentially asking an intelligent system to execute an unintelligent process, and the results reflect that.

Failure Mode 2: Data Readiness Gaps

Agentic workflows operate on data. If the data feeding the workflow is incomplete, inconsistently formatted, stored in disconnected systems without APIs, or protected by access controls that the agent cannot navigate, the workflow will fail or produce unreliable outputs. Organizations frequently discover that the real barrier to automation is not agent capability but data infrastructure: vendor records that have never been normalized, HR data spread across three legacy systems that do not talk to each other, compliance documents stored in folders with inconsistent naming conventions. Data readiness is a prerequisite for agentic deployment, not a parallel workstream.

Failure Mode 3: Governance After the Fact

Organizations that deploy agentic workflows without governance frameworks in place (audit trails, access controls, performance monitoring, model drift detection) discover the problem when something goes wrong at scale. An agent that makes an incorrect decision on 2% of cases makes thousands of incorrect decisions at high volume: at 100,000 tasks a month, that is 2,000 errors every month. Without monitoring, those errors compound before anyone notices. Without audit trails, it is impossible to diagnose what went wrong. The EU AI Act, whose key provisions take effect in August 2026, mandates specific governance requirements for high-risk AI systems, a category that can include agentic systems used in regulated contexts. Even organizations not directly subject to that regulation benefit from the governance discipline it enforces.

Failure Mode 4: Unrealistic Autonomy Expectations

Without structured human oversight for high-stakes decisions, agents fail multi-step tasks nearly 70% of the time in simulation testing. Organizations that give agents full autonomy over consequential actions (financial transactions, customer communications, system configuration changes) and expect zero errors are building toward an incident. The survivors do something counterintuitive: they start with more human oversight than they think they need, use the first 30–60 days to observe agent decisions in context, and gradually expand autonomy as confidence accumulates. This is not hesitation; it is the evidence-based path to sustainable production deployment.

What the Survivors Do Differently

Organizations with agentic workflows in stable production share several practices. They define success metrics before building — not ROI projections, but operational metrics: resolution rate, time-to-completion, escalation frequency, error rate per thousand tasks. They instrument everything from day one, building observability into the workflow architecture rather than adding it as an afterthought. They pilot with a narrow, well-understood task before expanding to adjacent processes. And they treat the agent as a system that requires ongoing maintenance — prompt refinement, rule updates, data quality management — rather than infrastructure that runs without attention once deployed.
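The operational metrics named above can be computed from a per-task log from day one. This Python sketch assumes a hypothetical `TaskRecord` shape in which `error` is set later, for example by audit sampling; the sample data is invented for illustration and happens to reproduce the 2% error rate discussed earlier, which shows up as 20 errors per thousand tasks.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    resolved: bool   # completed without a human taking over
    escalated: bool  # handed to a human
    error: bool      # later found to be wrong (e.g., via audit sampling)
    seconds: float   # time to completion


def workflow_metrics(records: list[TaskRecord]) -> dict[str, float]:
    n = len(records)
    return {
        "resolution_rate": sum(r.resolved for r in records) / n,
        "escalation_rate": sum(r.escalated for r in records) / n,
        "errors_per_1000": 1000 * sum(r.error for r in records) / n,
        "mean_seconds": sum(r.seconds for r in records) / n,
    }


# Hypothetical sample of 2,000 runs (list repetition is fine: records are read-only).
records = ([TaskRecord(True, False, False, 40.0)] * 1760
           + [TaskRecord(False, True, False, 300.0)] * 200
           + [TaskRecord(True, False, True, 45.0)] * 40)
metrics = workflow_metrics(records)
print(metrics)
```

Tracking these numbers per week, rather than only in aggregate, is what makes drift visible before it compounds.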

Human-in-the-Loop: Not a Limitation, But the Strategy

The phrase “human-in-the-loop” is often used as a concession — an acknowledgment that AI cannot be trusted to act autonomously, so humans must supervise. That framing is backwards. In the most effective agentic deployments, human oversight is not a constraint imposed by distrust. It is a deliberate architectural feature that makes the entire system more capable, more trustworthy, and more scalable than full automation would be.

The Three-Tier Oversight Model

Mature deployments typically implement oversight in three tiers based on risk and confidence:

Human-in-the-loop (HITL): A human reviews and approves the agent’s proposed action before it is executed. This applies to high-stakes decisions — transactions above defined thresholds, communications to key customers, changes to regulated systems. HITL creates a natural quality checkpoint and builds institutional trust in the system over time, because every escalated decision becomes a learning case.

Human-on-the-loop (HOTL): The agent executes autonomously, but a human monitor reviews a sample of decisions on a defined schedule and can intervene if patterns indicate drift or error. This applies to medium-stakes tasks that the agent has handled reliably for an extended period. HOTL balances efficiency with accountability without requiring approval for every transaction.

Full autonomy with audit: The agent executes without human review for routine tasks within well-defined parameters, with complete audit trails enabling retrospective review. Password resets, standard notifications, data normalization, and similar low-stakes, high-volume tasks run here. Audit trails remain essential — not for intervention, but for compliance, debugging, and performance measurement.
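A sketch of how the three tiers might be assigned, and how human-on-the-loop review can sample decisions reproducibly. The stakes labels, the 90-day reliability bar, and the sampling rate are hypothetical policy choices, not values from any standard.

```python
import random


def oversight_tier(stakes: str, days_reliable: int) -> str:
    """Map stakes ('high', 'medium', 'low') and track record to a tier.

    The 90-day reliability bar is an assumed policy value.
    """
    if stakes == "high":
        return "HITL"
    if stakes == "medium":
        return "HOTL" if days_reliable >= 90 else "HITL"
    return "autonomous+audit"


def hotl_sample(decision_ids: list[str], rate: float, seed: int = 0) -> list[str]:
    """Draw a reproducible random sample of autonomous decisions for review."""
    rng = random.Random(seed)  # seeded so auditors can regenerate the same sample
    k = max(1, round(len(decision_ids) * rate))
    return rng.sample(decision_ids, k)


print(oversight_tier("high", 400))    # HITL
print(oversight_tier("medium", 120))  # HOTL
print(oversight_tier("low", 0))       # autonomous+audit
print(len(hotl_sample([f"d{i}" for i in range(500)], rate=0.05)))  # 25
```

Medium-stakes work starts under HITL and only moves to HOTL once it has a track record, which mirrors the progression described above.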

Building Trust Through Transparency

The key enabler of moving tasks from HITL to HOTL to full autonomy over time is explainability. Agents that can articulate why they made a specific decision — which rule was applied, which data point triggered a flag, why a particular escalation threshold was reached — generate observable track records that teams can evaluate. Organizations using explainable AI tools for their agentic systems report faster accumulation of institutional trust and earlier expansion of agent autonomy, because stakeholders can see the reasoning, not just the outcome.

Regulatory context matters here. The EU AI Act’s provisions effective in August 2026 require human oversight, transparency, and audit capability for AI systems deployed in high-risk contexts. Dynamic risk-based escalation — where workflows trigger human review for 10–15% of cases based on confidence levels, anomaly detection, or transaction size — has become the emerging standard for organizations building toward compliance.

The Autonomy Expansion Curve

One of the most consistent patterns in successful deployments is the autonomy expansion curve: organizations that start conservative and expand carefully end up with significantly broader agent autonomy after 12 months than organizations that attempted broad autonomy from launch. The reason is straightforward. Starting with HITL on a well-chosen pilot generates a dataset of human decisions that can be used to refine agent behavior. By the time oversight is relaxed, the agent has been calibrated against real organizational decisions — not just training data. The resulting system is more accurate and more trusted, enabling expansion into adjacent processes that the organization would not have considered automating in year one.

How to Build the Business Case: Cost Frameworks and ROI Timelines

The challenge with agentic workflow business cases is that the costs are front-loaded and the benefits are distributed across multiple functions over time. Finance teams accustomed to evaluating point solutions — where you buy a thing and it does a defined thing — sometimes struggle with the multi-dimensional ROI profile of agentic deployments. Here is a framework that addresses that challenge directly.

The Cost Stack

Agentic workflow deployment costs fall into three categories:

Build cost: Development, integration, testing, and initial training. Depending on complexity, MVP deployments range from $25,000 to $150,000. Enterprise-grade multi-agent systems with complex integrations run $150,000 to $300,000 or more. These costs are sensitive to data readiness — organizations with well-structured, accessible data build faster and cheaper.

Operational cost: Ongoing model inference costs, platform fees, and maintenance. Typically $2,000–$15,000 per month depending on task volume and model selection. This is the number most often underestimated in initial business cases — organizations focus on build cost and forget that inference at scale has real expense.

Maintenance cost: Prompt refinement, rule updates, and integration maintenance as upstream systems change. Budget approximately 15–30% of build cost annually. Unmaintained agentic systems drift: the processes they automate change, the data formats they process evolve, and agent performance degrades without corresponding updates.
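Operational cost is the line most often underestimated, so it helps to total all three categories over more than one year. The Python sketch below is a minimal model built on assumed inputs. The `CostStack` name, the flat monthly run rate, and the dollar figures are all illustrative. The figures were picked from inside the ranges quoted above and do not come from any specific deployment.

```python
from dataclasses import dataclass


@dataclass
class CostStack:
    """Hypothetical cost inputs for one agentic workflow (placeholder figures)."""
    build: float                # one-time: development, integration, testing, initial training
    monthly_operational: float  # recurring: inference and platform fees
    maintenance_rate: float     # annual maintenance as a fraction of build cost (0.15-0.30)

    def total_cost(self, years: int) -> float:
        """Total cost of ownership over `years`; build cost is counted once, up front."""
        operational = self.monthly_operational * 12 * years
        maintenance = self.build * self.maintenance_rate * years
        return self.build + operational + maintenance


# Illustrative MVP: $80k build, $6k/month to run, 25% of build per year to maintain.
mvp = CostStack(build=80_000, monthly_operational=6_000, maintenance_rate=0.25)
one_year = mvp.total_cost(1)     # 80,000 + 72,000 + 20,000
three_years = mvp.total_cost(3)  # 80,000 + 216,000 + 60,000
```

With these placeholder inputs, the first year of operation costs almost as much as the build itself. Over three years, operational spend reaches roughly 2.7 times the build cost. Inference spend scales with task volume, so a per-transaction rate would be a natural refinement of the flat monthly figure.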

The Benefit Stack

Benefits from agentic deployment fall into four categories:

- Labor cost reduction: The most visible benefit and the easiest to quantify. It is calculated as hours per task multiplied by frequency multiplied by fully loaded labor cost. For a single workflow automating 40% of a finance analyst’s routine work, cumulative labor savings typically exceed the build cost within 6–12 months.

- Error cost reduction: Reduced downstream rework, fewer compliance penalties, lower exception handling costs. Often equals or exceeds labor savings but requires more careful measurement to document.

- Revenue acceleration: Faster processing times that improve conversion (loan applications, sales outreach response times), better customer experience that improves retention, and freed analyst time redirected to revenue-generating activities.

- Capacity expansion: The ability to process higher volumes without proportional headcount increases. The strategy consulting firm that cut research time from 40 to 14 hours per project did not eliminate staff — it used that capacity expansion to take on more clients and grow revenue from $2M to $3.1M.
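The labor-cost formula in the first item, combined with the operational cost from the cost stack, gives a rough payback estimate. This Python sketch counts labor savings only. It leaves out error reduction, revenue acceleration, and capacity expansion, so it understates the benefit side. The function names and all input figures are hypothetical.

```python
import math


def annual_labor_savings(hours_saved_per_task: float, tasks_per_year: int,
                         loaded_hourly_cost: float) -> float:
    """Hours per task x frequency x fully loaded labor cost."""
    return hours_saved_per_task * tasks_per_year * loaded_hourly_cost


def payback_months(build_cost: float, annual_savings: float,
                   monthly_operational: float) -> int:
    """First whole month in which cumulative net savings cover the build cost."""
    monthly_net = annual_savings / 12 - monthly_operational
    if monthly_net <= 0:
        raise ValueError("running costs exceed savings; the workflow never pays back")
    return math.ceil(build_cost / monthly_net)


# Hypothetical workflow: 15 minutes saved on each of 8,000 tasks a year at $60/hour.
savings = annual_labor_savings(0.25, 8_000, 60.0)                    # 120,000 per year
months = payback_months(60_000, savings, monthly_operational=3_000)  # 60,000 / 7,000, rounded up
```

In this example, raising the monthly operational cost to $8,000 leaves a net benefit of $2,000 a month and pushes payback out to 30 months. That is why the run-rate line deserves as much scrutiny as the build estimate.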

Payback Timelines by Use Case

Based on documented deployments, payback timelines cluster by use case:

- Customer support auto-resolution: 6–8 weeks

- IT Tier-1 automation: 3–6 months

- Finance invoice processing: 4–9 months

- HR onboarding automation: 6–12 months

- Compliance reporting: 4–10 months

- Sales development workflows: 2–5 months

The overall benchmark across enterprise agentic deployments is that 74% achieve ROI within Year 1, with an average ROI of 171% (192% for U.S. enterprises). The payback floor is the fastest realistic timeline for a well-scoped, well-executed deployment. It is measured in weeks, not years.
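The ROI figures above are easier to interpret with a definition in hand. The benchmark sources are not quoted on their exact formula, so the block below assumes the common convention of net benefit divided by cost. In it, B is total benefit and C is total cost over the measurement period. Under that assumption, a 171% ROI means benefits of about 2.71 times the cost, and 192% means about 2.92 times. The second line gives one way to define the payback month m*. It uses assumed monthly benefit b_t and monthly operating cost o_t, measured against the up-front build cost.

```latex
\mathrm{ROI} = \frac{B - C}{C}
\qquad\Longrightarrow\qquad
\mathrm{ROI} = 1.71 \iff B = 2.71\,C

m^{*} = \min\Big\{\, m \;:\; \sum_{t=1}^{m} \left(b_t - o_t\right) \ge C_{\text{build}} \Big\}
```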

The 90-Day Deployment Map

Organizations that reach production deployment within 90 days follow a recognizable pattern. Those that do not typically spend those same 90 days in discovery and vendor evaluation, emerging with a comprehensive strategy document and no working system. Here is what the 90-day path looks like in practice.

Days 1–30: Map and Prioritize

The first 30 days are entirely about process intelligence — understanding the actual work that happens, not the work that should happen according to a process diagram.

Start with a task inventory. Interview process owners in 2–3 target departments. For each task, document: frequency, time per instance, number of systems involved, error rate, current cost, and the most common exception scenarios. Do not start with technology evaluation during this phase.

Apply the four filters to every candidate task. Rank the resulting list by the product of volume, variability, and cost per manual instance. The top three items on that ranked list are your first deployment targets.
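The inventory fields and the ranking rule can be captured in a few lines. This is a minimal Python sketch:

- The `TaskRecord` fields mirror the interview questions above.
- `variability` is an assumed 1-5 score, since the article does not prescribe a scale.
- `passes_filters` is a hypothetical stand-in for the outcome of the four-filter screen described earlier. It is not an implementation of that screen.
- The task names and numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    name: str
    monthly_volume: int          # frequency: instances per month
    minutes_per_instance: float
    systems_involved: int
    error_rate: float            # share of instances needing rework
    cost_per_instance: float     # fully loaded cost of one manual instance, in dollars
    variability: int             # assumed 1-5 score: how much inputs differ case to case
    passes_filters: bool         # placeholder for the outcome of the four-filter screen


def prioritize(inventory: list[TaskRecord], top_n: int = 3) -> list[TaskRecord]:
    """Drop tasks that failed the filters, then rank by volume x variability x cost."""
    candidates = [t for t in inventory if t.passes_filters]
    candidates.sort(key=lambda t: t.monthly_volume * t.variability * t.cost_per_instance,
                    reverse=True)
    return candidates[:top_n]


inventory = [
    TaskRecord("invoice matching", 1_200, 14, 3, 0.06, 12.0, 3, True),
    TaskRecord("contract summaries", 150, 50, 2, 0.04, 30.0, 5, True),
    TaskRecord("ticket triage", 2_000, 5, 2, 0.08, 4.0, 2, True),
    TaskRecord("vendor onboarding", 80, 60, 4, 0.10, 45.0, 4, True),
    TaskRecord("expense audit", 600, 12, 2, 0.03, 9.0, 4, False),
]
first_targets = [t.name for t in prioritize(inventory)]
```

On score alone, "expense audit" (600 × 4 × 9 = 21,600) would rank third. The filter flag removes it before ranking, which keeps the screening step ahead of the scoring step.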

Assess data readiness for each of the top three. Confirm that the data sources required exist, are accessible via API or integration, and are of sufficient quality to train against. If data readiness gaps are identified, document them as prerequisite work to be completed in parallel with Days 31–60.

Define success metrics for the first deployment before writing a single line of code or configuring a single tool. Baseline the current state precisely — time per task, error rate, escalation frequency — so that improvement is measurable.
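Capturing the baseline as data, rather than as an estimate on a slide, makes the later comparison mechanical. The sketch below assumes each sampled manual instance is recorded with its handling time and two yes/no flags. The field and function names are hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Baseline:
    median_minutes: float
    error_rate: float
    escalation_rate: float


def measure(samples: list[dict]) -> Baseline:
    """Summarize a sample of task instances (manual now, agent-assisted later)."""
    n = len(samples)
    return Baseline(
        median_minutes=median([s["minutes"] for s in samples]),
        error_rate=sum(s["had_error"] for s in samples) / n,
        escalation_rate=sum(s["escalated"] for s in samples) / n,
    )


def relative_reduction(before: Baseline, after: Baseline) -> dict[str, float]:
    """Fractional improvement per metric; 0.5 means the metric was halved."""
    result = {}
    for metric in ("median_minutes", "error_rate", "escalation_rate"):
        old, new = getattr(before, metric), getattr(after, metric)
        result[metric] = (old - new) / old if old else 0.0  # a zero baseline cannot improve
    return result


manual = measure([
    {"minutes": 12, "had_error": False, "escalated": True},
    {"minutes": 15, "had_error": True, "escalated": False},
    {"minutes": 9, "had_error": False, "escalated": True},
    {"minutes": 20, "had_error": False, "escalated": False},
])
```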

Days 31–60: Build and Pilot

Choose the single highest-ranked, data-ready task from your prioritized list. Build a minimal working agent for that one task. Resist the temptation to build all three simultaneously — parallel construction of untested workflows produces parallel failures.

Design the agent architecture first: which agents are needed, what tools each requires, what the escalation triggers are, and what the output format is. Review this architecture with the process owner before building. If the process owner cannot understand the workflow from the diagram, the agent will fail at the edge cases the diagram misrepresents.
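One way to make the architecture reviewable before any build work starts is to write it down as data and check it for gaps. The Python sketch below shows one possible shape for such a spec. No framework requires this format. The agent names and tool names are illustrative. So are the two review rules: every agent needs at least one escalation trigger, and every agent may use only pre-approved tools.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    name: str
    tools: list[str]                # the only systems this agent may call
    escalation_triggers: list[str]  # plain-language conditions that route work to a human
    output_format: str


@dataclass
class WorkflowSpec:
    name: str
    agents: list[AgentSpec] = field(default_factory=list)

    def review_issues(self, approved_tools: set[str]) -> list[str]:
        """List gaps to resolve with the process owner before building."""
        issues = []
        for agent in self.agents:
            if not agent.escalation_triggers:
                issues.append(f"{agent.name}: no escalation trigger defined")
            unapproved = sorted(set(agent.tools) - approved_tools)
            if unapproved:
                issues.append(f"{agent.name}: unapproved tools {unapproved}")
            if not agent.output_format:
                issues.append(f"{agent.name}: output format unspecified")
        return issues


spec = WorkflowSpec("invoice processing", [
    AgentSpec("extractor", ["ocr_service"], ["field confidence below 0.8"], "JSON invoice record"),
    AgentSpec("matcher", ["erp_read", "erp_write"], [], "match decision with reason"),
])
issues = spec.review_issues(approved_tools={"ocr_service", "erp_read"})
```

Here the review flags the "matcher" agent twice: it has no path to a human, and it asks for write access that nobody approved. Both are questions for the process owner, not the developer.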

Build with human oversight configured at HITL level. Every agent decision is reviewed and approved by a human during the pilot phase. Log every decision, every escalation, and every correction. This log is the most valuable asset generated in the entire deployment — it is the calibration dataset for the next phase.
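The pilot log does not need special infrastructure. An append-only file of reviewed decisions is enough to start. This sketch makes two assumptions:

- Storage is JSON Lines.
- Reviewer actions take one of three values: approved, modified, or rejected.

The record fields and decision-class names are hypothetical. They were chosen so the day-60 questions (approval without modification, correction patterns, escalation frequency) can be answered directly from the file.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional

REVIEWER_ACTIONS = {"approved", "modified", "rejected"}


@dataclass
class DecisionRecord:
    decision_class: str                     # e.g. "standard_invoice"
    agent_output: str                       # what the agent proposed
    confidence: float                       # agent confidence score, 0-1 (assumed available)
    escalated: bool                         # whether an escalation trigger fired
    reviewer_action: str                    # one of REVIEWER_ACTIONS
    corrected_output: Optional[str] = None  # the human's correction, if any
    timestamp: str = ""                     # filled in at logging time (UTC, ISO 8601)


def log_decision(path: str, record: DecisionRecord) -> None:
    """Append one reviewed decision to a JSON Lines file."""
    if record.reviewer_action not in REVIEWER_ACTIONS:
        raise ValueError(f"unknown reviewer action: {record.reviewer_action!r}")
    if not record.timestamp:
        record.timestamp = datetime.now(timezone.utc).isoformat()
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")


def load_log(path: str) -> list[DecisionRecord]:
    """Read the log back as records, skipping blank lines."""
    with open(path, encoding="utf-8") as f:
        return [DecisionRecord(**json.loads(line)) for line in f if line.strip()]


# Usage with a throwaway file:
log_path = os.path.join(tempfile.mkdtemp(), "pilot_log.jsonl")
log_decision(log_path, DecisionRecord("standard_invoice", "approve payment", 0.93, False, "approved"))
log_decision(log_path, DecisionRecord("duplicate_suspected", "flag as duplicate", 0.61, True,
                                      "modified", corrected_output="not a duplicate"))
records = load_log(log_path)
```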

Run the pilot on real work for three weeks. Use actual inputs from the live process, not synthetic test data. Synthetic data does not surface the edge cases that will cause production failures.

Days 61–90: Measure and Scale

At day 60, analyze the pilot log. What percentage of decisions were approved without modification? What were the common correction patterns? Which escalation triggers fired most frequently, and were they appropriately calibrated? This analysis directly informs two parallel actions.
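The day-60 questions translate into simple aggregations over the pilot log. This Python sketch assumes the log rows are dictionaries with these hypothetical fields: `decision_class`, `reviewer_action`, `escalated`, `agent_output`, and `corrected_output`. It reports two rates per decision class (approval without modification and escalation) plus the most frequent correction pairs.

```python
from collections import Counter, defaultdict


def summarize_pilot(rows: list[dict]) -> dict[str, dict]:
    """Per decision class: review count, share approved unmodified, share escalated."""
    by_class: dict[str, list[dict]] = defaultdict(list)
    for row in rows:
        by_class[row["decision_class"]].append(row)
    summary = {}
    for cls, items in by_class.items():
        n = len(items)
        summary[cls] = {
            "n": n,
            "approved_unmodified": sum(r["reviewer_action"] == "approved" for r in items) / n,
            "escalation_rate": sum(r["escalated"] for r in items) / n,
        }
    return summary


def top_corrections(rows: list[dict], k: int = 3) -> list[tuple[tuple[str, str], int]]:
    """Most frequent (agent output, human correction) pairs among modified decisions."""
    pairs = Counter((r["agent_output"], r["corrected_output"])
                    for r in rows if r["reviewer_action"] == "modified")
    return pairs.most_common(k)


approved = {"decision_class": "standard_invoice", "reviewer_action": "approved",
            "escalated": False, "agent_output": "approve payment", "corrected_output": None}
corrected = {"decision_class": "duplicate_suspected", "reviewer_action": "modified",
             "escalated": True, "agent_output": "flag as duplicate",
             "corrected_output": "not a duplicate"}
confirmed = {**corrected, "reviewer_action": "approved", "escalated": False,
             "corrected_output": None}
rows = [approved] * 4 + [corrected] * 2 + [confirmed] * 2
summary = summarize_pilot(rows)
patterns = top_corrections(rows)
```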

First, refine the agent: update prompts to address common error patterns, adjust escalation thresholds based on observed confidence distributions, and fix integration issues surfaced during the pilot. This is the maintenance cycle that will run on an ongoing basis. You are now running it for the first time.
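One hedged way to adjust escalation thresholds from observed confidence distributions is to pick the lowest confidence cut-off at which decisions above it would have met a target accuracy during the pilot. This approach rests on two assumptions:

- The agent emits a confidence score per decision.
- "Approved without modification" counts as correct.

The 95% target and the minimum-support value are placeholders, and other calibration methods are equally valid.

```python
from typing import Optional


def pick_threshold(pilot: list[tuple[float, bool]], target_accuracy: float = 0.95,
                   min_support: int = 20) -> Optional[float]:
    """Lowest confidence cut-off whose auto-approved decisions met target accuracy in the pilot.

    `pilot` holds (confidence, correct) pairs from HITL review, where correct means the
    reviewer approved the output without modification. Decisions below the returned
    cut-off would keep escalating to a human. Returns None if no cut-off qualifies.
    """
    best = None
    for cutoff in sorted({c for c, _ in pilot}, reverse=True):
        above = [correct for c, correct in pilot if c >= cutoff]
        if len(above) < min_support:
            continue                      # too few decisions to judge this cut-off
        if sum(above) / len(above) >= target_accuracy:
            best = cutoff                 # still accurate enough; try a lower cut-off
        else:
            break                         # conservative: stop at the first shortfall
    return best


pilot = ([(0.95, True)] * 30 + [(0.85, True)] * 9 + [(0.85, False)]
         + [(0.70, True)] * 5 + [(0.70, False)] * 5)
threshold = pick_threshold(pilot)
```

In this invented pilot, cumulative accuracy is 100% at 0.95 and 97.5% at 0.85 (39 of 40). It then falls to 88% (44 of 50) at 0.70, so the sketch returns 0.85. Stopping at the first shortfall keeps the chosen cut-off on the cautious side.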

Second, relax oversight to HOTL level for the decision classes where the pilot showed reliable performance. Maintain HITL for exception categories that showed lower confidence or higher correction rates. Document the rationale for each oversight level assignment — this becomes the governance record that satisfies audit requirements.
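The per-class oversight decision and its rationale can be produced together, so the governance record is generated rather than written after the fact. In this sketch, the approval bar (95%) and the minimum review count (50) are assumed policy values. They are not recommendations from any benchmark, and the input counts are invented.

```python
from dataclasses import dataclass


@dataclass
class OversightAssignment:
    decision_class: str
    level: str       # "HOTL" or "HITL"
    rationale: str   # retained as part of the governance record


def assign_oversight(stats: dict[str, tuple[int, int]], min_reviews: int = 50,
                     min_approval: float = 0.95) -> list[OversightAssignment]:
    """`stats` maps decision class -> (decisions reviewed, approved without modification)."""
    assignments = []
    for cls, (reviewed, approved) in sorted(stats.items()):
        rate = approved / reviewed if reviewed else 0.0
        summary = f"{approved}/{reviewed} approved unmodified ({rate:.0%})"
        if reviewed >= min_reviews and rate >= min_approval:
            assignments.append(OversightAssignment(
                cls, "HOTL", f"{summary}; meets the {min_approval:.0%} bar"))
        else:
            assignments.append(OversightAssignment(
                cls, "HITL", f"{summary}; needs {min_approval:.0%} over at least {min_reviews} reviews"))
    return assignments


result = assign_oversight({
    "standard_invoice": (400, 392),
    "duplicate_suspected": (60, 45),
    "new_vendor": (12, 12),
})
levels = {a.decision_class: a.level for a in result}
```

"new_vendor" stays at HITL despite a perfect record, because 12 reviews are too few to justify relaxing oversight under this assumed policy.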

By day 90, you should have one workflow running in production with measurable performance against baseline metrics, a refinement process in place, and a prioritized backlog of the next two deployments. The goal is not to have automated everything in 90 days. It is to have established a deployment capability — the process, the infrastructure, the governance model, and the organizational knowledge — that allows each subsequent deployment to go faster and cost less than the one before it.

The Real Competitive Advantage: Deployment Velocity, Not First-Mover Status

There is a common framing of agentic AI as a first-mover competition — a race to deploy before competitors do, after which the window closes. That framing is largely wrong, and acting on it leads organizations into the trap of deploying broadly and badly in an attempt to move fast.

The sustainable competitive advantage from agentic workflows is not the fact of deployment. It is deployment velocity — the organizational capability to identify, build, and put into production new automated workflows faster and more cheaply than competitors. Organizations that build this capability correctly compound it over time. The second workflow is faster than the first because the data infrastructure is already in place. The third is faster than the second because the governance model is established. By the time an organization reaches its tenth deployed workflow, it has an institutional capability that took years to build — and that capability is genuinely difficult to replicate quickly.

The Compounding Effect in Practice

The strategy consulting firm referenced earlier is a clean illustration of this dynamic. Research time per project dropped from 40 hours to 14 hours, a 65% reduction. But the more important outcome was the 60% capacity increase that resulted. The firm did not use that capacity to cut headcount. It used it to take on more clients and grow revenue by $1.1 million. That revenue growth, achieved with the same team, funded the next round of workflow investment. That round freed more capacity, which in turn funded the round after it. The compounding is not hypothetical; it is the documented outcome of deployment velocity applied over time.

The Talent Dimension

A dimension of the business case that frequently goes unmeasured is talent retention. The tasks most suited for agentic automation — data entry, reconciliation, ticket routing, compliance documentation, onboarding administration — are also the tasks that skilled employees find least rewarding. Organizations that eliminate these tasks from high-value roles report measurable improvements in retention and engagement among the staff whose work is most affected. The financial value of reduced turnover — hiring costs, onboarding time, productivity ramp — is substantial and additive to the direct labor savings from automation.

Keeping Humans in the Value Chain

The most practically important insight from mature agentic deployments is that the goal is not to remove humans from processes but to remove humans from the parts of processes that do not require human judgment, creativity, or relationship. Agents handle the data retrieval, the cross-system coordination, the rules application, the standard-case resolution. Humans handle the judgment calls, the relationship decisions, the creative problem-solving, and the oversight. That division of labor produces better outcomes for both the organization and the individuals within it — which is precisely why organizations that implement it well end up deploying more agentic workflows, not fewer human roles.

Getting Started: Three Decisions That Determine Everything Else

If you are deciding whether and how to begin deploying agentic workflows, three decisions will shape everything that follows. Get these right, and the downstream work is tractable. Get them wrong, and no amount of technical sophistication recovers the deployment.

Decision 1: Which Process to Start With

Use the four filters and the quadrant map to identify your first target. Do not let the first deployment be chosen by which team lobbied hardest or which vendor demo looked most impressive. Choose based on evidence: highest volume, highest variability, clearest baseline cost, strongest data readiness. The first deployment is not just a workflow; it is a proof of concept for your organization’s deployment capability. Make it the best possible candidate, not the most ambitious one.

Decision 2: Build vs. Buy vs. Configure

The spectrum of implementation options has expanded significantly. At one end, custom agent development using frameworks like LangGraph, AutoGen, or CrewAI gives maximum flexibility at maximum build cost and timeline. In the middle, purpose-built agentic platforms (Salesforce Agentforce, Microsoft Copilot Studio, ServiceNow’s AI-native workflows) offer pre-built integrations and governance infrastructure at the cost of less flexibility for complex custom logic. At the other end, emerging no-code and low-code agentic tools allow non-technical teams to configure basic workflows without developer involvement.

The right choice depends on your IT maturity, the complexity of your target process, and the scale of your integration requirements. Organizations with strong engineering teams deploying complex cross-system workflows typically choose frameworks. Organizations deploying within a single established platform ecosystem (Salesforce, ServiceNow) typically choose the platform-native option. Organizations doing initial proof-of-concept work sometimes start with low-code tools to validate the use case before committing to custom build. The common mistake is assuming one choice is universally correct.

Decision 3: Who Owns the Workflow

The most persistently underestimated governance decision is ownership. Agentic workflows sit at the intersection of technology (the agent infrastructure), process (the business logic being automated), and data (the feeds and records the agent uses). When something goes wrong — and something will, in early deployments — ownership ambiguity is the primary reason problems persist rather than get resolved. Assign a named workflow owner before launch. That person is responsible for monitoring performance, approving escalation threshold changes, initiating maintenance cycles, and deciding when oversight levels should shift. Without that person, the workflow drifts and eventually fails quietly — which is worse than failing loudly.

Conclusion: The Gap Is Closeable, But Only With the Right Approach

The 68-point gap between agentic AI adoption and production deployment is not permanent. It reflects the organizational learning curve of a genuinely new capability, and that curve compresses as more deployments generate documented case studies, best practices, and institutional knowledge.

The organizations closing that gap most effectively share a common approach: they choose starting tasks empirically, using volume and variability rather than ambition as selection criteria. They design for human oversight from the beginning rather than adding governance after the first incident. They treat the first deployment as a capability-building exercise, not just an efficiency project. And they measure outcomes rigorously, building the evidence base that justifies the next deployment and the next.

The returns for organizations that get this right are well-documented. An average 171% ROI. Payback periods measured in weeks for the highest-value use cases. Capacity expansion that translates directly to revenue growth without proportional headcount increases. A 92% reduction in time spent on security audit preparation at Linde Group. Forty percent more customer cases resolved at Wiley. Fourteen booked meetings per week from the same sales team that was booking two.

The routine tasks consuming your team’s capacity today are not inevitable features of operating a business. They are solvable problems, with a specific class of solution that has matured to the point where the failure modes are understood, the success patterns are documented, and the path to production is a 90-day map rather than a multi-year research project.

The question is no longer whether agentic workflows work. The question is which ones to deploy first.

Key Takeaways

- The 68-point gap between agentic AI adoption (79%) and production deployment (11%) is an execution problem, not a technology limitation.

- Agentic workflows are most valuable for high-volume, high-variability tasks that defeat RPA and consume skilled human time on work that does not require human judgment.

- The orchestrator-agent model, with scoped tool access and explicit escalation paths, is the architecture that produces reliable production deployments.

- Finance, IT support, HR onboarding, and sales development offer the fastest, most measurable ROI timelines — most under 12 months.

- Human-in-the-loop oversight is not a concession to AI limitations; it is the mechanism that builds institutional trust and allows agent autonomy to expand over time.

- The 90-day deployment framework — map and prioritize, build and pilot, measure and scale — produces production-ready workflows without the multi-year timelines that stall broader adoption.

- Ownership, governance, and data readiness are prerequisites, not afterthoughts.