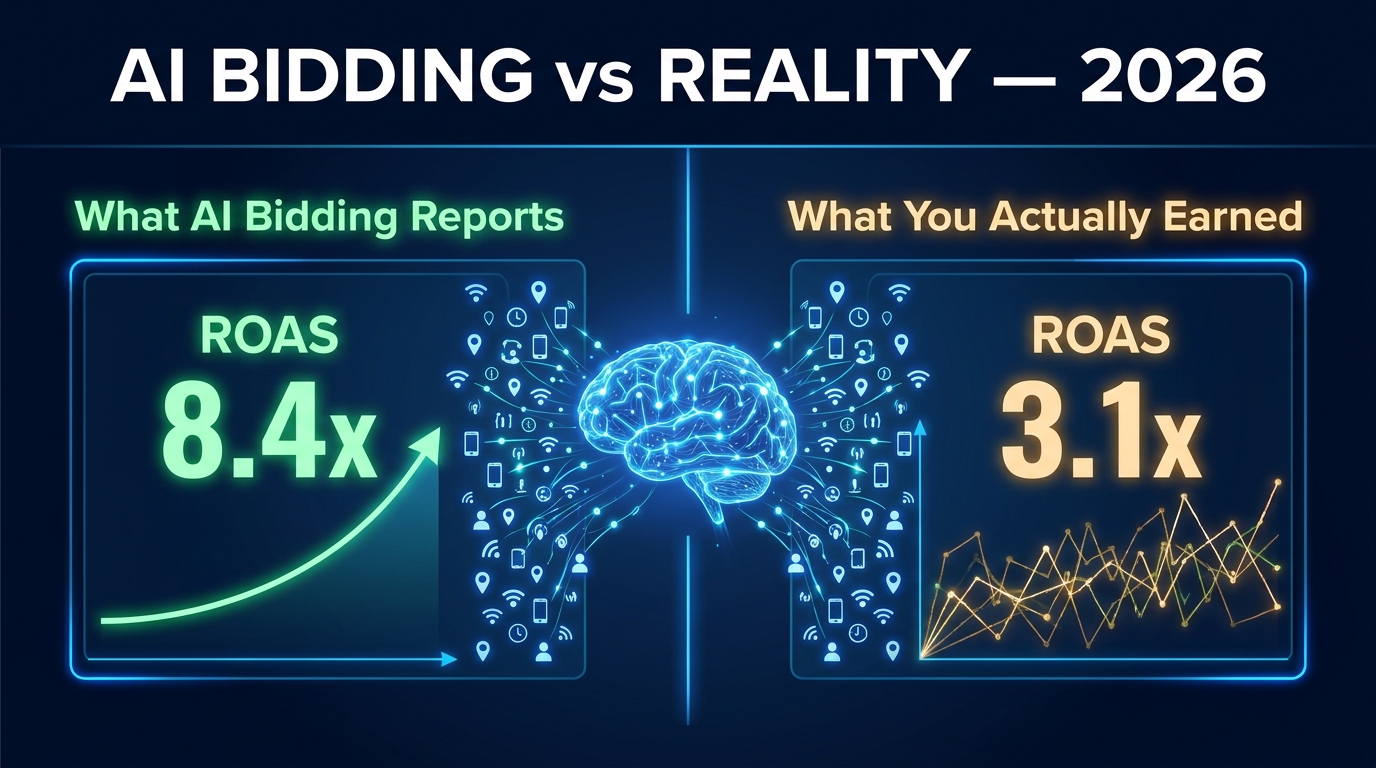

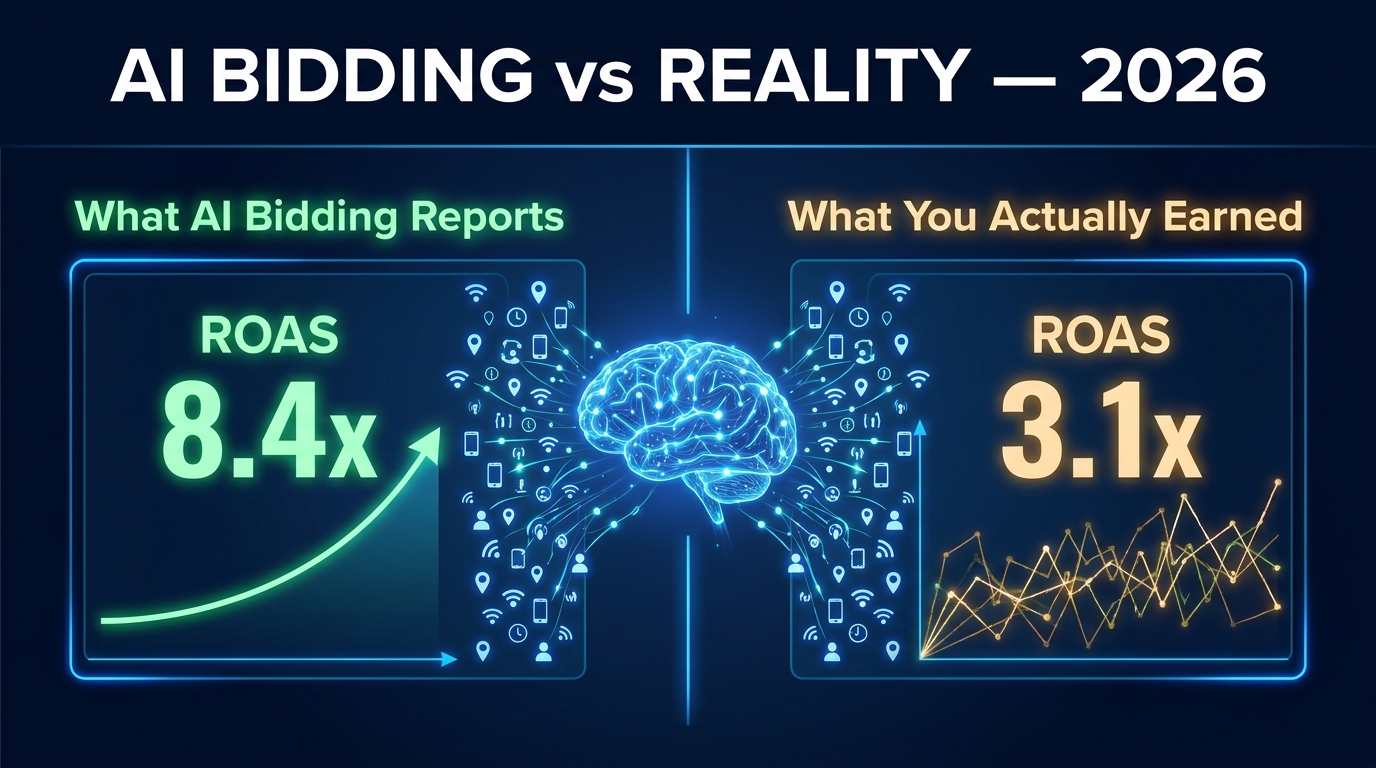

Your Target ROAS campaign is reporting 7.2x. Your finance team is asking why revenue is flat. Both things are true — and that contradiction is exactly the problem this article exists to solve.

AI ad bidding has become the default operating mode for serious advertisers. Smart Bidding now manages 78% of all Google Ads spend, and 91% of enterprise accounts use at least one automated bidding strategy. Meta’s Advantage+ Shopping campaigns have been adopted by 68% of ecommerce advertisers. The tools are everywhere. The adoption numbers are impressive. And yet, a troubling pattern keeps emerging in audits: reported ROAS and actual business results frequently don’t match.

The disconnect isn’t because AI bidding doesn’t work. It does — impressively, under the right conditions. The disconnect exists because most advertisers hand their campaigns to an algorithm without understanding what that algorithm actually optimizes for, what data it’s using to make decisions, and where the edges of its competence end. When you don’t understand those three things, you’re not running AI bidding. You’re running a very expensive black box with a flattering dashboard.

This article is not a beginner’s introduction to Smart Bidding. It’s a practitioner’s examination of how AI bidding actually functions in 2026 — the signal architecture, the data dependencies, the structural mistakes that inflate reported ROAS while starving actual growth, and the strategic frameworks that separate advertisers who consistently compound performance from those who chase numbers that don’t move their bottom line. We’ll cover Google, Meta, the portfolio strategies that most brands skip, and the measurement approaches that tell you whether your ROAS is real or just well-presented attribution.

Start with what you can verify, build on what you can measure, and don’t trust any number the platform reports until you understand how it was calculated.

How AI Bidding Actually Works in 2026: The 70 Million Signal Auction

To understand why AI bidding succeeds or fails, you first need to understand its fundamental architecture. Every time a Google Ads auction occurs (and billions happen daily), Google’s Smart Bidding system processes over 70 million real-time signals in the milliseconds before the bid is submitted. The precise count is hard to verify independently, but the scale it describes is a functional reality of how modern programmatic bidding operates, not just a marketing flourish.

What Those Signals Actually Are

The 70 million signals span an enormous range of contextual data points. The obvious ones include device type, location, time of day, and day of week. But the system also factors in browser and operating system, search query characteristics, landing page context, recent search behavior across the user’s history, remarketing list membership, in-market audience segments, predicted conversion probability based on cross-device patterns, and hundreds of audience demographic indicators derived from Google’s broader data ecosystem.

What makes this materially different from manual bidding isn’t just the volume — it’s the combination logic. A human can adjust bids for mobile vs. desktop, or increase bids during business hours. But the AI simultaneously evaluates a user who is on mobile, located in a specific zip code, searching at 8:47 PM on a Tuesday, who visited your pricing page three days ago, and who is also in the “home improvement, high purchase intent” audience segment. No human bidding operation can compute that combination in real time across thousands of simultaneous auctions.

The Learning Phase: What It Is and Why It Matters

Every AI bidding strategy operates through a learning phase — typically 4 to 6 weeks — during which the algorithm collects enough conversion data to build reliable bid models. This phase is frequently misunderstood and mismanaged, leading to premature strategy changes that reset the clock and perpetually trap accounts in suboptimal early-stage behavior.

During the learning phase, the algorithm intentionally explores bids above and below its current estimates to gather data on price sensitivity, conversion elasticity, and audience response curves. This exploration means short-term performance during the learning phase will often appear worse than both your prior manual performance and your eventual automated performance. Many advertisers see the dip and intervene — changing targets, restructuring campaigns, adjusting budgets — which forces the algorithm back to square one.

The practical rule: once you commit to a Smart Bidding strategy, give it a minimum of four weeks with no significant structural changes. “Significant” means budget changes greater than 20%, target adjustments greater than 15%, or substantial creative or audience modifications. Small refinements are acceptable; major overhauls restart the learning clock.
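To enforce that discipline before anyone touches a live campaign, the thresholds can be encoded as a simple pre-flight check. This is a minimal Python sketch; the function and its parameters are hypothetical, and the 20% and 15% cutoffs are the rules of thumb above, not limits Google publishes.

```python
def is_significant_change(old_budget: float, new_budget: float,
                          old_target: float, new_target: float,
                          structural_change: bool = False) -> bool:
    """Return True if a proposed edit would likely restart Smart Bidding's learning.

    Thresholds are this article's rules of thumb: a budget move of more than 20%,
    a target move of more than 15%, or a major creative/audience restructure.
    """
    budget_delta = abs(new_budget - old_budget) / old_budget
    target_delta = abs(new_target - old_target) / old_target
    return structural_change or budget_delta > 0.20 or target_delta > 0.15


# A 15% budget increase plus a 10% tROAS increase stays under both thresholds.
print(is_significant_change(1000, 1150, 4.0, 4.4))   # False
# A 30% budget jump would restart learning.
print(is_significant_change(1000, 1300, 4.0, 4.0))   # True
```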

The Conversion Threshold Problem

Smart Bidding’s effectiveness is directly tied to conversion volume. The benchmarks are well-established at this point: Target ROAS needs at least 50 conversions per month per campaign to function reliably; Target CPA requires 30 or more; even Maximize Conversions benefits from hitting at least 15 to 30 monthly conversions before producing consistent results.
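The same benchmarks work as a quick eligibility screen per campaign. A minimal sketch, assuming the rule-of-thumb minimums above; the names are hypothetical, and Google Ads does not enforce these numbers as hard limits.

```python
# Rule-of-thumb monthly conversion minimums per campaign (from the text above;
# these are guidelines, not limits enforced by Google Ads).
MIN_MONTHLY_CONVERSIONS = {
    "target_roas": 50,
    "target_cpa": 30,
    "maximize_conversions": 15,  # lower end of the 15-30 range
}


def eligible_strategies(monthly_conversions: int) -> list:
    """List the strategies whose minimum this campaign meets, most demanding first."""
    ranked = sorted(MIN_MONTHLY_CONVERSIONS.items(), key=lambda kv: kv[1], reverse=True)
    eligible = [name for name, minimum in ranked if monthly_conversions >= minimum]
    # Manual CPC has no conversion minimum, so it is always an option.
    return eligible + ["manual_cpc"]


print(eligible_strategies(40))  # ['target_cpa', 'maximize_conversions', 'manual_cpc']
print(eligible_strategies(10))  # ['manual_cpc']
```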

Below these thresholds, the AI doesn’t have enough data to build accurate prediction models. It compensates by using broader proxy signals and making more speculative bid decisions — which is why low-volume accounts frequently see erratic performance under automated bidding. This is the specific scenario where manual CPC, which requires no conversion minimum, often outperforms Smart Bidding. Understanding the threshold boundary is what separates strategic bidding decisions from cargo-cult automation.

The Signal Quality Problem: Why Garbage In Means Garbage ROAS

Here is an uncomfortable truth about AI bidding that the platforms have no incentive to advertise: the algorithm is only as good as the conversion data you feed it. And for a significant percentage of advertisers in 2026, that conversion data is incomplete, incorrectly attributed, or actively misleading the AI’s optimization decisions.

The Three Signal Corruption Patterns

The first and most common signal corruption pattern is counting the wrong conversion events. Many accounts include micro-conversions — newsletter signups, add-to-cart events, page views above a certain duration — in their primary conversion tracking alongside actual purchases. When you run Target ROAS optimizing toward a signal that includes page engagement events, the AI happily maximizes for whichever conversion type is easiest to generate at the target return ratio. That’s often not your revenue-generating event.

The second pattern is double-counting from overlapping tracking implementations. Advertisers who run the Google Ads conversion tag and also import the same purchase event from Google Analytics 4, with both actions set as Primary, frequently count a single transaction twice. The algorithm sees twice the signal intensity and calibrates bids accordingly, driving costs up and distorting ROAS calculations in ways that can take months to diagnose.
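One way to confirm double-counting is to audit exported conversions by order or transaction ID. The sketch below assumes a hypothetical export in which each row carries a transaction ID, a source label, and a value; it keeps the first occurrence of each transaction and shows how much value the duplicates added. In the account itself, the usual remedy is to leave only one of the two sources set as Primary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConversionEvent:
    transaction_id: str
    source: str   # hypothetical labels, e.g. "ads_tag" or "ga4_import"
    value: float


def dedupe_by_transaction(events):
    """Keep the first event for each transaction ID and drop later duplicates."""
    seen = set()
    unique = []
    for event in events:
        if event.transaction_id not in seen:
            seen.add(event.transaction_id)
            unique.append(event)
    return unique


events = [
    ConversionEvent("T-1001", "ads_tag", 120.0),
    ConversionEvent("T-1001", "ga4_import", 120.0),  # same order, second source
    ConversionEvent("T-1002", "ads_tag", 80.0),
]
unique = dedupe_by_transaction(events)
raw_value = sum(e.value for e in events)
clean_value = sum(e.value for e in unique)
print(f"reported {raw_value:.0f}, deduplicated {clean_value:.0f}")  # reported 320, deduplicated 200
```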

The third pattern is the attribution window mismatch. Smart Bidding uses data-driven attribution by default, a model that distributes credit across all touchpoints in a conversion path, but that credit only covers conversions recorded inside the conversion window. If your business has a 45-day purchase consideration cycle but your click-through conversion window is set to 30 days (the Google Ads default), the algorithm never sees the conversions that land after day 30, even though its bids contributed to them. It perceives those campaigns as underperforming and reduces bids, potentially abandoning high-value audience segments that simply require longer nurture cycles.
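To check whether your window is cutting off real conversions, measure how much volume currently lands outside it. A minimal sketch, assuming you can pull click-to-conversion lag in days from your CRM or analytics export; the sample lags are invented for illustration.

```python
def share_outside_window(lag_days, window_days):
    """Fraction of conversions whose click-to-conversion lag exceeds the window."""
    if not lag_days:
        return 0.0
    late = sum(1 for lag in lag_days if lag > window_days)
    return late / len(lag_days)


# Hypothetical lags (days from ad click to purchase) for a long consideration cycle.
lags = [2, 5, 12, 20, 28, 33, 38, 41, 44, 45]
print(share_outside_window(lags, 30))  # 0.5
print(share_outside_window(lags, 60))  # 0.0
```

If a meaningful share falls past the current window, that is the case for lengthening it to cover your real purchase cycle.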

Micro-Conversion Staging: A Better Architecture

The more sophisticated approach is to use a staged conversion architecture where you have a clear hierarchy: primary conversions (purchases, qualified leads) that Smart Bidding optimizes toward, and secondary conversions (micro-events) that are tracked but excluded from bidding optimization. This keeps your optimization signal clean while still allowing you to monitor funnel health through secondary metrics. Google Ads allows you to designate conversions as “Primary” or “Secondary” precisely for this reason — yet a surprisingly large number of accounts still use the default all-in-one tracking setup.

First-Party Data: The Fuel Your Bidding AI Actually Runs On

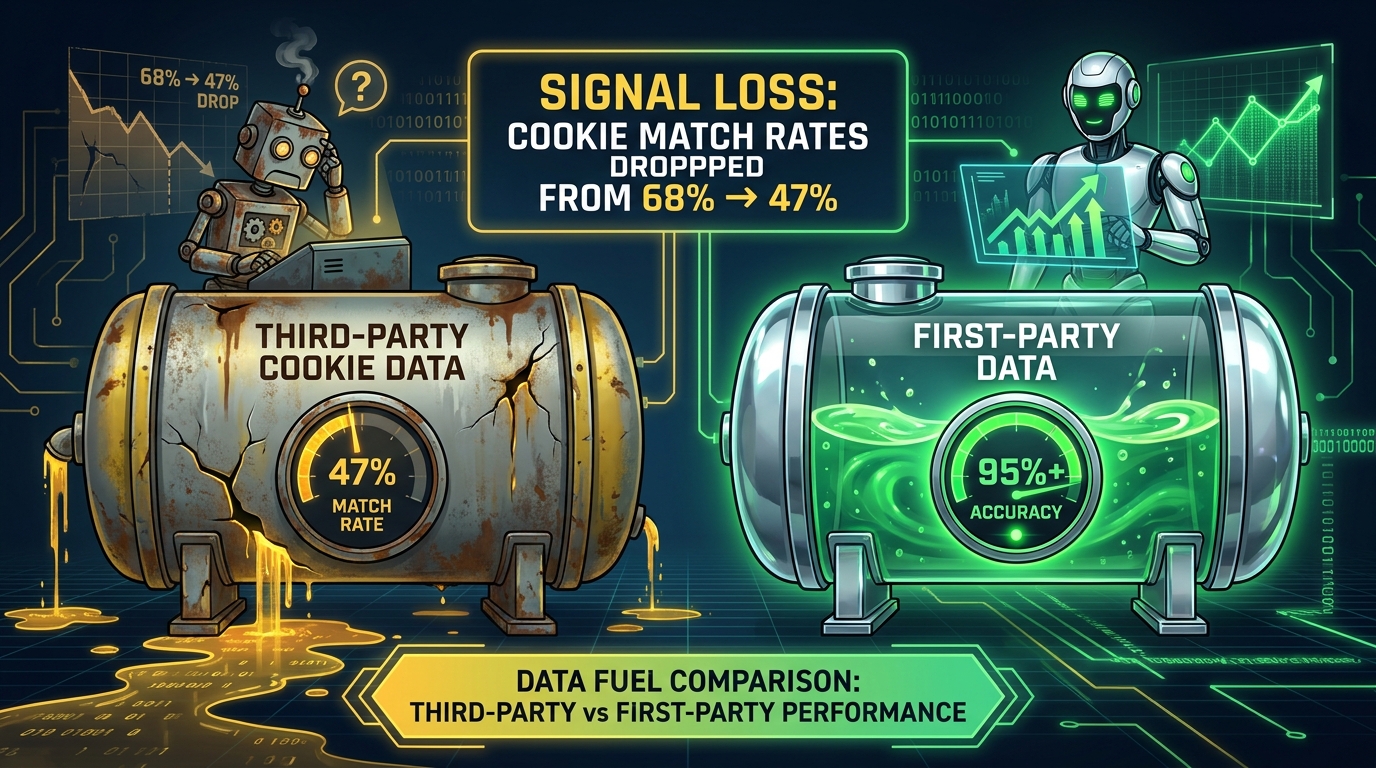

Third-party cookie blocking in Safari and Firefox (Chrome ultimately shelved its own deprecation plan), together with the broader signal loss driven by iOS privacy changes and regulatory restrictions, has fundamentally changed the input environment for AI bidding systems. Cookie-era match rates across the open web sat at roughly 68%. In 2026, authenticated traffic match rates in cookieless environments have dropped to approximately 47%, a structural reduction in the data resolution that platform AI systems use to make bidding decisions.

That missing 21 percentage points isn’t just a measurement problem. It’s a targeting problem. When the algorithm can’t reliably identify users across sessions, it loses confidence in its conversion probability predictions, which means it either bids more conservatively (losing impressions) or more broadly (driving up costs for lower-quality traffic). The impact materializes as seemingly unexplained ROAS degradation that coincides not with any changes in your account but with browser updates and privacy policy changes outside your control.

Why First-Party Data Changes the Equation

Advertisers who have built mature first-party data strategies are achieving 25 to 35% better ROAS compared to peers who still rely primarily on platform-native signals, according to BCG/Google research. The mechanism is straightforward: your own customer data — purchase history, email list segments, CRM records, loyalty program data — provides deterministic identity signals that don’t depend on cookies or third-party matching. When you feed that data into your bidding system via Customer Match, Enhanced Conversions, or server-side conversion APIs, you’re giving the algorithm a much higher-quality signal to train on.

71% of brands and agencies are actively expanding their first-party data collection efforts in 2026, according to IAB State of Data reporting. But expansion and effective deployment are different things. Many brands are collecting email addresses and CRM data but haven’t connected those assets to their bidding infrastructure. The data sits in a marketing database while the ad platform bidding continues to rely on cookie-degraded signals.

The Practical First-Party Data Stack for Bidding

An effective first-party data integration for AI bidding consists of several connected layers. The foundation is Enhanced Conversions — Google’s mechanism for hashing and matching first-party data (email, phone, address) collected at conversion points to Google accounts, allowing more accurate conversion attribution even when cookies are absent. Implementation requires minimal technical lift but dramatically improves signal fidelity, particularly for purchase conversions on mobile devices where cookie persistence is lowest.
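Enhanced Conversions expects user-provided data to be normalized and SHA-256 hashed before it is sent. The sketch below shows email normalization with the Python standard library; the rules it applies (trim, lowercase, remove dots from the local part of gmail.com and googlemail.com addresses) follow Google's published guidance as we understand it, so verify them against the current Enhanced Conversions documentation before relying on them. Phone and address fields have their own formatting rules, not covered here.

```python
import hashlib


def normalize_email(email: str) -> str:
    """Trim, lowercase, and drop dots from the local part of Gmail addresses."""
    email = email.strip().lower()
    local, _, domain = email.partition("@")
    if not domain:
        raise ValueError("not an email address")
    if domain in ("gmail.com", "googlemail.com"):
        local = local.replace(".", "")
    return f"{local}@{domain}"


def hash_email(email: str) -> str:
    """Hex-encoded SHA-256 digest of the normalized email."""
    return hashlib.sha256(normalize_email(email).encode("utf-8")).hexdigest()


print(normalize_email("  Jane.Doe@Gmail.com "))  # janedoe@gmail.com
print(hash_email("jane.doe@example.com"))
```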

The next layer is Customer Match audience uploads. Your CRM segments — customers with high LTV, lapsed purchasers, high-frequency buyers — become audience signals that Smart Bidding can use to adjust bids toward users who match these profiles. This is particularly powerful for Target ROAS campaigns where the algorithm needs to distinguish between a $50 first-time buyer and a $500 annual customer who is about to reorder.

For more sophisticated operations, server-side conversion tracking (server-side Google Tag Manager or conversion uploads through the Google Ads API on Google, and the Conversions API on Meta) removes most browser-side tracking failures. Server-to-server event transmission doesn’t depend on JavaScript executing in the user’s browser, on ad blockers, or on browser privacy settings, although matching still relies on identifiers such as click IDs or hashed customer data. This is the most reliable conversion signal architecture available in 2026, and for accounts spending more than $20,000 per month, the implementation cost is often recoverable within weeks through improved bidding accuracy.
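To make the server-side option concrete, here is a sketch that builds, but does not send, a single Purchase event in the JSON shape Meta documents for its Conversions API. The order ID, email, value, and timestamp are hypothetical; the API version and pixel ID in the endpoint are left as placeholders, and a production event would usually add fields such as client IP address, user agent, and the fbp/fbc identifiers where available.

```python
import hashlib
import json
import time
from typing import Optional


def sha256_normalized(value: str) -> str:
    """Trim and lowercase, then SHA-256 hash (hex), as Meta expects for email."""
    return hashlib.sha256(value.strip().lower().encode("utf-8")).hexdigest()


def build_purchase_event(order_id: str, email: str, value: float, currency: str,
                         event_time: Optional[int] = None) -> dict:
    """Build one server-side Purchase event for the Conversions API."""
    return {
        "event_name": "Purchase",
        "event_time": event_time if event_time is not None else int(time.time()),
        "action_source": "website",
        # If the browser Pixel sends the same event name with this ID as its
        # eventID, Meta can deduplicate the two copies of the purchase.
        "event_id": order_id,
        "user_data": {"em": [sha256_normalized(email)]},
        "custom_data": {"currency": currency, "value": value},
    }


payload = {"data": [build_purchase_event("A1001", "jane@example.com", 150.0, "USD", 1767225600)]}
print(json.dumps(payload, indent=2))
# In production this body (plus an access token) would be POSTed to
# https://graph.facebook.com/<API_VERSION>/<PIXEL_ID>/events
```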

Value-Based Bidding: Stop Optimizing for Revenue and Start Optimizing for Profit

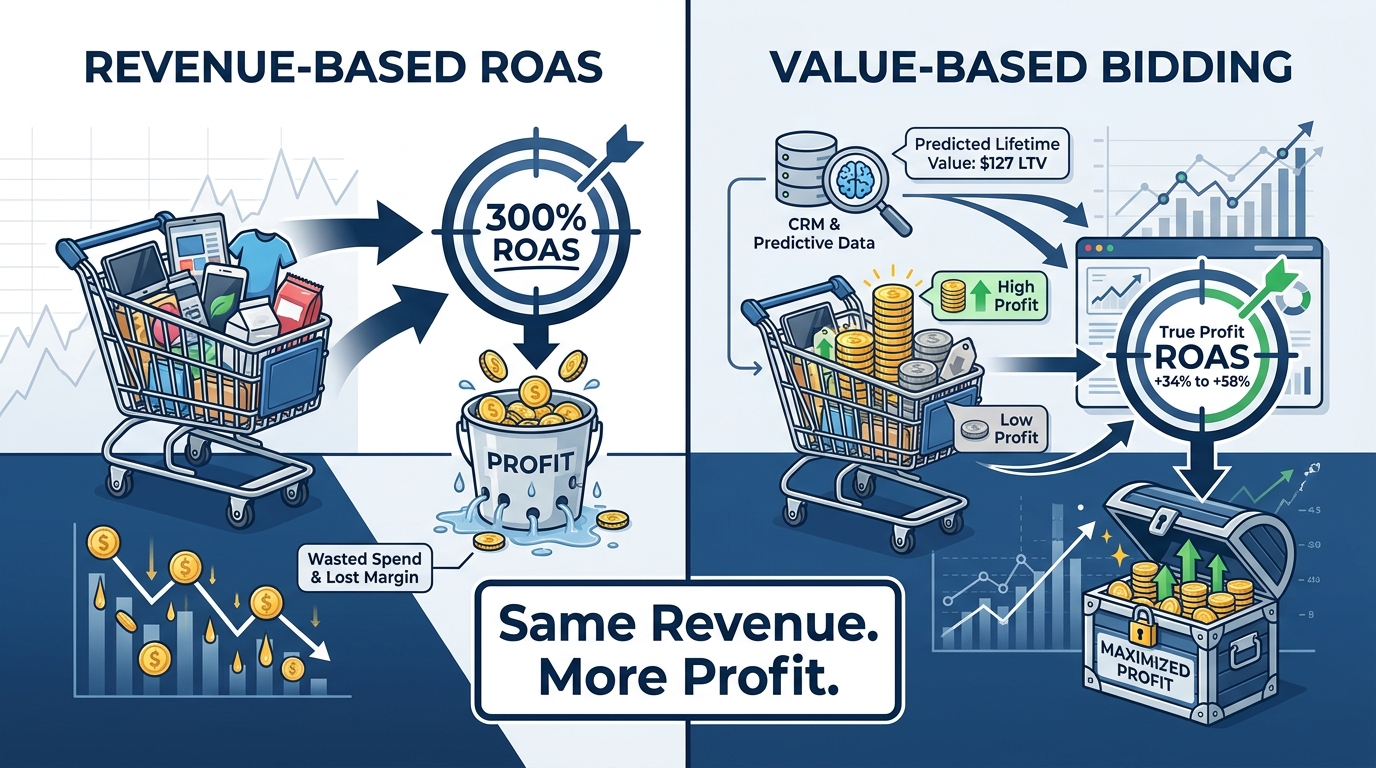

Most advertisers running Target ROAS campaigns are optimizing for revenue. The platform reports a return on ad spend ratio based on the conversion value the algorithm is chasing — typically the order value passed in the purchase event. This creates a pervasive strategic error: the AI maximizes for gross revenue figures while the business’s actual profitability goes completely unmeasured by the bidding system.

A business selling products with 60% gross margins and products with 12% gross margins can report exactly the same ROAS on both if all it measures is order value. The algorithm will happily generate a 6x ROAS on the low-margin product at scale while ignoring the high-margin product because its conversion volume is lower. The dashboard looks healthy. The profit and loss statement tells a different story.

What Value-Based Bidding Actually Means

Value-based bidding (VBB) is the practice of passing profit-adjusted values — rather than raw order values — into your conversion events. Instead of telling Google “this order was worth $150,” you tell it “this order generated $47 in gross profit after COGS.” The AI then optimizes toward generating the most profit per dollar spent, not the most revenue per dollar spent.
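The gap between ROAS and profit is easy to show with the 60% and 12% margins from the example above. A minimal sketch, assuming a hypothetical 6x ROAS on $1,000 of spend for both product lines; break-even ROAS is simply 1 divided by gross margin.

```python
def roas(revenue: float, ad_spend: float) -> float:
    """Revenue return on ad spend, as the platform reports it."""
    return revenue / ad_spend


def break_even_roas(gross_margin: float) -> float:
    """Revenue ROAS at which gross profit exactly covers ad spend."""
    return 1 / gross_margin


def profit_after_ads(revenue: float, gross_margin: float, ad_spend: float) -> float:
    """Gross profit minus ad spend: the number the P&L actually sees."""
    return revenue * gross_margin - ad_spend


revenue, spend = 6000.0, 1000.0  # hypothetical: 6x ROAS on both product lines
for name, margin in [("60% margin", 0.60), ("12% margin", 0.12)]:
    print(f"{name}: ROAS {roas(revenue, spend):.1f}x, "
          f"break-even {break_even_roas(margin):.2f}x, "
          f"profit after ads {profit_after_ads(revenue, margin, spend):+,.0f}")
```

At the same 6x ROAS, the 60% margin line clears its 1.67x break-even and keeps $2,600 after ad spend, while the 12% line needs 8.33x just to break even and loses $280. Passing gross profit rather than order value as the conversion value is what lets the bidder see that difference.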

The results, when implemented correctly, are substantial. One documented case study from ALM Corp showed that switching from revenue-based Target ROAS to profit-based conversion values improved true profit ROAS by 34 to 58% within 60 days. A separate ecommerce analysis in the Lyra Report, which examined 94 accounts spending $3.01 million over a ten-month period, found that Maximize Conversion Value bidding achieved a 6.44x ROAS versus 1.96x for Maximize Conversions, a 3.3x gap the report attributes to the difference in signal quality.

Integrating Customer Lifetime Value into Bids

The most advanced form of value-based bidding goes beyond order-level profit to incorporate predicted customer lifetime value (LTV). When you can feed predicted LTV into your conversion values — typically via CRM integration using rule-based or machine-learning LTV prediction models — the bidding AI begins to make acquisition decisions based on long-term customer value rather than immediate transaction profitability.

The practical discovery from LTV integration is often counterintuitive. One documented case found that the product category being most heavily promoted had a 127% higher average order value but a 49% lower 12-month LTV compared to a consumables category. Revenue-based ROAS made the promoted category look like the star performer. LTV-adjusted ROAS revealed it was the worst investment in the portfolio. Reallocating spend toward LTV-optimized products produced $2.3 million in additional annual revenue with 18% less ad spend.
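A rules-based LTV model can be as simple as average order value x purchase frequency x horizon x gross margin. The sketch below applies that formula to two hypothetical category profiles (invented numbers, not the case study's data) to show how a lower-AOV consumables category can still produce the higher 12-month gross-profit LTV.

```python
from dataclasses import dataclass


@dataclass
class CategoryProfile:
    name: str
    avg_order_value: float
    orders_per_year: float
    horizon_years: float
    gross_margin: float


def predicted_ltv(p: CategoryProfile) -> float:
    """Rules-based gross-profit LTV: AOV x frequency x horizon x margin."""
    return p.avg_order_value * p.orders_per_year * p.horizon_years * p.gross_margin


# Hypothetical 12-month profiles, not data from the case study above.
big_ticket = CategoryProfile("furniture", avg_order_value=400.0, orders_per_year=1.0,
                             horizon_years=1.0, gross_margin=0.35)
consumables = CategoryProfile("refills", avg_order_value=45.0, orders_per_year=10.0,
                              horizon_years=1.0, gross_margin=0.40)
for profile in (big_ticket, consumables):
    print(profile.name, round(predicted_ltv(profile), 2))
```

A value like this, computed per customer or per category, is what would replace raw order value in the conversion event once the CRM pipeline exists.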

The Conversion Multiplier Framework

For businesses that can’t immediately connect CRM-based LTV predictions to their bidding systems, conversion multipliers offer a practical intermediate step. The approach assigns conversion value weights based on attributes you already know at transaction time: product category margin tier, customer type (new vs. returning), acquisition channel, and geographic market. A high-margin product from a new customer in a high-LTV market might receive a multiplier of 2.5x, while a low-margin repeat purchase from an existing customer who requires minimal acquisition spend might receive 0.8x. These multipliers, applied consistently to conversion values, give the algorithm directionally accurate signals that move it toward profit optimization even before full CRM integration is achieved.
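In code, the multiplier framework is a lookup and a product. The tables below are hypothetical weights chosen so the two examples from the paragraph come out at 2.5x and 0.8x; acquisition channel could be added as a fourth table in the same way.

```python
# Hypothetical multiplier tables; calibrate the weights from your own margin and LTV data.
MARGIN_TIER = {"high": 1.6, "mid": 1.0, "low": 0.8}
CUSTOMER_TYPE = {"new": 1.25, "returning": 1.0}
MARKET = {"high_ltv": 1.25, "standard": 1.0}


def weighted_conversion_value(order_value: float, margin_tier: str,
                              customer_type: str, market: str) -> float:
    """Scale the raw order value by the product of the attribute multipliers."""
    multiplier = MARGIN_TIER[margin_tier] * CUSTOMER_TYPE[customer_type] * MARKET[market]
    return order_value * multiplier


# High-margin product, new customer, high-LTV market: 1.6 * 1.25 * 1.25 = 2.5x
print(weighted_conversion_value(100.0, "high", "new", "high_ltv"))
# Low-margin repeat purchase in a standard market: 0.8x
print(weighted_conversion_value(100.0, "low", "returning", "standard"))
```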

The Performance Max Trap: When Black-Box Budgets Eat Your Margins

Performance Max has become the dominant campaign format for enterprise Google Ads accounts. More than 80% of enterprise spend runs through PMax, and Google’s own benchmarks show 35% more conversions at 20% lower CPA compared to equivalent manual campaigns. Those are real numbers. PMax, under the right conditions, does generate more output per dollar.

The problem is that PMax’s efficiency metrics frequently mask where that efficiency is coming from — and a significant portion of it, in poorly structured accounts, is coming from conversions the advertiser would have generated anyway.

The Brand Cannibalization Problem

A study by Optmyzr analyzing 503 accounts found a 91% keyword overlap between Performance Max campaigns and Search campaigns running in the same accounts. PMax, left unmanaged, aggressively takes credit for branded search traffic — users who already know your brand and would have converted through organic search or a direct branded search campaign. When PMax “converts” these users, it reports them as conversions driven by its AI-powered optimization. The ROAS looks extraordinary. But the incremental value is minimal.

The fix is structural. Running a dedicated branded Search campaign with explicit brand terms and setting brand exclusions within PMax forces the campaign to compete for genuinely new demand. Without this separation, your PMax ROAS is partly a measure of how well-known your brand already was — not a measure of how well the algorithm is generating new customer acquisition.

The Asset Group Problem

PMax’s “campaign as creative brief” model means the algorithm selects from your asset groups — headlines, descriptions, images, videos — to assemble ads across channels. Unsegmented asset groups that mix different product lines, price points, and audience types produce muddled creative combinations that confuse both the algorithm’s targeting decisions and the users who see the resulting ads.

Best practice in 2026 is to create themed asset groups that mirror your product category structure, each with tailored headlines, images, and audience signals. An outdoor furniture brand should have separate asset groups for patio sets, individual chairs, storage solutions, and seasonal clearance — not a single group with assets from all categories. The algorithm’s ability to match creative intent to user context depends on you giving it organized, coherent creative inputs.

The 70/20/10 Budget Framework

Top-performing advertisers have converged on a structural budget allocation framework for accounts where PMax coexists with standard Search and Shopping campaigns. The split: 70% to Performance Max, 20% to branded Search, 10% to experimental standard campaigns. The branded Search allocation protects against attribution inflation. The experimental bucket allows controlled testing of audience and keyword hypotheses that can then inform PMax audience signals once validated.

Budget scaling rules matter as much as the initial allocation. Increasing PMax budget by more than 20% in a single week forces the algorithm back into exploration mode, disrupting the optimization patterns it has built. The practical discipline is to increase budgets in 15 to 20% weekly increments, monitor ROAS for a 15% or greater drop, and pause additional scaling if the drop triggers. Adding new asset groups before increasing budgets often produces better marginal returns than simply feeding more dollars into existing campaign structures.
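Both the 70/20/10 split and the scaling guardrail are simple enough to encode. A minimal sketch, assuming weekly reviews and the thresholds described above (a step of at most 20%, and a pause when ROAS falls 15% or more below its pre-scaling baseline); the function names are hypothetical.

```python
def split_budget(total: float) -> dict:
    """Apply the 70/20/10 split across PMax, branded Search, and experiments."""
    shares = {"performance_max": 0.70, "branded_search": 0.20, "experimental": 0.10}
    return {bucket: total * share for bucket, share in shares.items()}


def next_week_budget(current_budget: float, baseline_roas: float, current_roas: float,
                     step: float = 0.15, max_drop: float = 0.15) -> float:
    """Raise the budget by `step`, unless ROAS has fallen `max_drop` or more below baseline."""
    if not 0 < step <= 0.20:
        raise ValueError("weekly step should stay within 20%")
    if current_roas <= baseline_roas * (1 - max_drop):
        return current_budget  # guardrail tripped: hold spend and investigate
    return current_budget * (1 + step)


print(split_budget(50_000))
print(next_week_budget(10_000, baseline_roas=5.0, current_roas=4.8))  # scales up 15%
print(next_week_budget(10_000, baseline_roas=5.0, current_roas=4.2))  # held at 10,000
```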

The Reporting Gap and How to Close It

PMax’s historically limited reporting has been one of its most significant structural weaknesses. In 2026, Google has added search categories reporting, asset-level performance metrics, and negative keyword support to PMax — addressing long-standing advertiser criticisms. But these improvements require active use. Asset-level metrics now allow you to see which creative combinations are driving performance and which are consuming budget without contributing conversions. Implementing a monthly creative audit against asset performance data is no longer optional for well-run PMax campaigns.
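A monthly audit can start with one question: which assets are spending without converting? The sketch assumes a hypothetical export with asset ID, cost, and conversions per asset; which metrics Google exposes at the asset level can vary, so adapt the field names to what your report actually contains. The $100 minimum spend is an arbitrary illustrative cutoff.

```python
def flag_idle_assets(rows, min_cost=100.0):
    """Return asset IDs that spent at least `min_cost` with zero conversions, highest spend first."""
    idle = [r for r in rows if r["cost"] >= min_cost and r["conversions"] == 0]
    return [r["asset_id"] for r in sorted(idle, key=lambda r: r["cost"], reverse=True)]


# Hypothetical asset-level export rows.
rows = [
    {"asset_id": "headline_03", "cost": 420.0, "conversions": 0},
    {"asset_id": "image_07", "cost": 180.0, "conversions": 0},
    {"asset_id": "video_02", "cost": 650.0, "conversions": 9},
    {"asset_id": "headline_05", "cost": 40.0, "conversions": 0},
]
print(flag_idle_assets(rows))  # ['headline_03', 'image_07']
```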

Meta Advantage+: The Second AI Bidding System That Deserves Strategic Attention

Meta’s Advantage+ Shopping Campaigns have quietly become one of the most consequential AI bidding developments for ecommerce advertisers. While Google’s ecosystem dominates search-intent advertising, Meta’s AI system operates in a fundamentally different context — it reaches users who aren’t actively searching, and its effectiveness depends on creative quality, audience signal breadth, and budget architecture rather than keyword strategy.

The Performance Numbers in Context

In Q1 2026 data drawn from 35,000+ advertisers, Meta Advantage+ Shopping campaigns delivered a median 2.87x ROAS across ecommerce verticals, with the top 10% of advertisers hitting 8.4x. Compared to manually managed Meta campaigns, Advantage+ delivers approximately a 22% higher ROAS on average, with cost-per-purchase reductions of 12 to 18% and a 13% lower cost-per-catalog sale. The system reaches these results by removing most manual audience targeting and allowing its ML system to identify converting users from scratch — a counterintuitive approach that outperforms hand-tuned audience segments in the majority of mature accounts.

The key operational change Meta made in early 2026 is reducing the minimum weekly conversion threshold for Advantage+ learning from 50 to 25 weekly conversions. This makes the system accessible to a larger range of advertisers, particularly mid-market brands spending $15,000 to $75,000 per month who previously couldn’t generate sufficient conversion volume to exit the learning phase reliably.

What Advantage+ Actually Needs to Perform

The algorithm’s reliance on creative quality as its primary optimization lever is what most advertisers underestimate. Advantage+ doesn’t have keyword targeting levers to pull. It can’t narrow by interest or demographic in the traditional sense. What it has is a creative testing engine that progressively allocates budget toward combinations of headlines, images, and videos that generate conversions — and deprioritizes creative combinations that don’t.

This means the quantity and quality of your creative library is the single most important input variable. Meta’s own data suggests that Advantage+ campaigns with 15 to 50 active creative variations generate the highest sustained ROAS because the algorithm has enough creative variety to continually test and rotate. Campaigns running three to five creatives plateau quickly as the algorithm exhausts its testing options and begins reallocating budget based on limited evidence.

Connecting Meta and Google Bidding Systems

Sophisticated advertisers are increasingly managing Google and Meta bidding systems as connected parts of a single customer acquisition architecture rather than independent channels with separate ROAS targets. The practical implication: customers who encounter Meta ads at the awareness stage but convert through Google Search should be attributed through a cross-channel model, not counted as a Google-only conversion. When Google receives full credit for those conversions, its reported ROAS absorbs value that Meta’s upper-funnel work created: branded search looks more efficient than it is, Meta’s prospecting looks weaker than it is, and budget drifts toward whichever channel owns the last click. A unified measurement approach, even a simplified one using UTM parameter tracking and a single analytics platform, is the minimum viable framework for managing multi-channel AI bidding rationally.
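The difference between last-touch and cross-channel credit shows up even in a toy dataset. The sketch compares last-touch with simple linear attribution (equal credit per touchpoint) over three hypothetical UTM-derived paths. Linear attribution is used here only because it is easy to follow; it is not Google's data-driven model.

```python
from collections import defaultdict


def last_touch(paths):
    """Give each conversion's full value to the final touchpoint."""
    credit = defaultdict(float)
    for channels, value in paths:
        credit[channels[-1]] += value
    return dict(credit)


def linear(paths):
    """Split each conversion's value equally across every touchpoint in its path."""
    credit = defaultdict(float)
    for channels, value in paths:
        share = value / len(channels)
        for channel in channels:
            credit[channel] += share
    return dict(credit)


# Hypothetical paths reconstructed from UTM parameters: (touchpoints, order value).
paths = [
    (["meta", "google_search"], 100.0),
    (["meta", "meta", "google_search"], 90.0),
    (["google_search"], 60.0),
]
print("last touch:", last_touch(paths))  # google_search gets all 250
print("linear:    ", linear(paths))      # meta 110, google_search 140
```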

Portfolio Bidding: Pooling Campaigns for Smarter AI Learning

One of the most consistently underutilized techniques in AI bidding strategy is portfolio bidding — the practice of grouping multiple campaigns under a single shared bidding strategy with a unified ROAS or CPA target. Instead of each campaign having its own isolated learning pool, the algorithm draws on aggregated conversion data across all campaigns in the portfolio, dramatically accelerating the pace at which it builds reliable prediction models.

Why Isolated Campaign Bidding Creates Artificial Intelligence Poverty

Consider an advertiser running twelve Search campaigns with an average of 15 conversions per campaign per month — 180 total monthly conversions across the account. Each campaign, in isolation, is below the 30-conversion minimum for reliable Target CPA bidding. But grouped into a portfolio strategy, the 180 combined conversions are well above threshold. The algorithm’s learning pool is 12x larger, its prediction models are more reliable, and its bid adjustments are more precisely calibrated to actual user behavior patterns.
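The arithmetic of that example as a minimal sketch; the 30-conversion minimum is the article's rule of thumb for Target CPA, and the campaign names are hypothetical.

```python
TARGET_CPA_MIN_MONTHLY = 30  # rule-of-thumb minimum from this article, not a Google limit


def pooling_summary(conversions_by_campaign: dict) -> dict:
    """Compare per-campaign volume with the pooled volume a portfolio strategy would see."""
    below = [name for name, n in conversions_by_campaign.items() if n < TARGET_CPA_MIN_MONTHLY]
    pooled = sum(conversions_by_campaign.values())
    return {
        "campaigns_below_minimum": len(below),
        "pooled_conversions": pooled,
        "pool_meets_minimum": pooled >= TARGET_CPA_MIN_MONTHLY,
    }


# Twelve hypothetical campaigns at 15 conversions per month each.
campaigns = {f"search_{i:02d}": 15 for i in range(1, 13)}
print(pooling_summary(campaigns))
# {'campaigns_below_minimum': 12, 'pooled_conversions': 180, 'pool_meets_minimum': True}
```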

Research on portfolio bidding shows 10 to 27% ROAS lifts over equivalent isolated campaign strategies in accounts where the conversion volumes per campaign are insufficient to sustain strong isolated learning. The effect is most pronounced in accounts with fragmented campaign structures — multiple geographic campaigns, category splits, or audience-segmented campaigns that each individually have thin conversion data.

When Portfolio Bidding Works and When It Doesn’t

Portfolio strategies work best when the campaigns being pooled share similar conversion intent and similar business objectives. Grouping a brand Search campaign, a competitor keyword campaign, and a PMax campaign into a single tROAS portfolio is likely to produce muddled optimization because the algorithm can’t distinguish between the very different conversion economics of each campaign type. Brand searches might convert at 15% with minimal CPCs, competitor keywords might convert at 3% with aggressive CPCs, and PMax operates across the full funnel. Lumping these together produces averaged optimization that serves none of them particularly well.

The effective portfolio structure groups by campaign type and funnel stage: a Search portfolio for non-branded high-intent terms, a Shopping/PMax portfolio for product-focused campaigns, and a retargeting portfolio for lower-funnel audience campaigns. Within each portfolio, budgets stay at the campaign level while the tROAS target and the learning data are shared across the portfolio, giving you both data efficiency and campaign-level spend control.

Incrementality Testing: The Only Way to Know If Your ROAS Is Real

Everything discussed so far — signal architecture, value-based bidding, portfolio structures — is aimed at making your AI bidding perform better. But none of it answers the most fundamental question a serious advertiser needs to ask: would these conversions have happened anyway?

Platform-reported ROAS is an attribution measurement. It tells you which ads were in the last-touch (or weighted multi-touch) path to a conversion. It does not tell you whether removing those ads would have reduced conversions. Incrementality testing is the methodology that answers that question — and the results, when advertisers run it for the first time, are frequently humbling.

Geo-Based Holdout Testing

The most accessible incrementality test for most advertisers is a geo-based holdout experiment. You identify geographically similar market pairs (cities or regions with comparable demographics, purchase behavior, and brand awareness levels) and run your standard campaigns in the treatment markets while pausing or significantly reducing spend in the holdout markets. After three to four weeks, you compare conversions and revenue between groups, controlling for baseline differences. The gap between treatment and holdout is your incremental lift; expressed as a share of the platform-reported conversions in the treatment markets, it tells you what fraction of those conversions wouldn’t have happened without the advertising.

For well-established brands running heavy branded search campaigns, these tests frequently reveal that 40 to 60% of reported conversions are non-incremental — users who were already going to purchase and simply clicked an ad in their natural path to conversion. The ad got attribution credit, but removing it wouldn’t have changed the outcome. This is the structural attribution inflation that makes platform ROAS numbers look significantly better than actual business impact.
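The core geo-holdout calculation fits in a few lines. This sketch, with invented numbers, scales the holdout markets by their pre-test ratio to the treatment markets to estimate a counterfactual, then compares it with what the treatment markets actually did. It is a simplified ratio adjustment, not a full synthetic-control or matched-market analysis.

```python
from dataclasses import dataclass


@dataclass
class GeoTestResult:
    incremental_conversions: float
    incremental_share: float   # share of platform-reported conversions that were incremental
    cost_per_incremental: float


def analyze_geo_holdout(treat_pre: float, hold_pre: float, treat_test: float, hold_test: float,
                        reported_conversions: float, spend: float) -> GeoTestResult:
    """Estimate incrementality from one treatment/holdout market pair."""
    # Pre-period ratio adjusts for market size and baseline differences.
    scale = treat_pre / hold_pre
    # Counterfactual: what treatment markets would likely have done without the ads.
    counterfactual = hold_test * scale
    incremental = treat_test - counterfactual
    return GeoTestResult(
        incremental_conversions=incremental,
        incremental_share=incremental / reported_conversions,
        cost_per_incremental=spend / incremental if incremental > 0 else float("inf"),
    )


result = analyze_geo_holdout(treat_pre=1000, hold_pre=800, treat_test=1300, hold_test=880,
                             reported_conversions=400, spend=20_000)
print(result)
```

Here the platform would report a $50 CPA (20,000 / 400), but only 200 of those 400 conversions were incremental, so the cost per incremental conversion is $100 and half of the reported conversions would likely have happened anyway.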

Conversion Lift Studies and Controlled Experiments

Both Google and Meta offer native conversion lift studies that use randomized user-level holdouts to measure incrementality at the audience level. Meta’s Conversion Lift tool randomizes exposure at the user level rather than geographic area, which removes the geographic correlation noise that can complicate geo-based tests. Google’s Campaign Experiments tool allows A/B testing of bidding strategy changes at the campaign level with statistical rigor built into the reporting interface.

Running a lift study should be a standard practice for any campaign or channel where spend exceeds $10,000 per month. The data it produces — a clean estimate of cost per incremental conversion rather than cost per attributed conversion — is the most honest measure of bidding efficiency available. It also provides the empirical foundation you need to make budget allocation decisions between channels, a context where platform-reported ROAS figures from competing platforms are almost never apples-to-apples comparable.
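Native lift tools report significance for you, but if you receive raw counts from a user-level randomized test, the standard check is a two-proportion z-test. A minimal sketch with hypothetical counts, which also computes cost per incremental conversion from test-group spend:

```python
import math


def lift_test(conv_test: int, n_test: int, conv_control: int, n_control: int):
    """Relative lift, z statistic, and two-sided p-value from a two-proportion z-test."""
    p_test = conv_test / n_test
    p_control = conv_control / n_control
    pooled = (conv_test + conv_control) / (n_test + n_control)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_test + 1 / n_control))
    z = (p_test - p_control) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return (p_test - p_control) / p_control, z, p_value


def cost_per_incremental(spend: float, conv_test: int, n_test: int,
                         conv_control: int, n_control: int) -> float:
    """Spend divided by conversions above what the control rate predicts for the test group."""
    expected_without_ads = conv_control * n_test / n_control
    return spend / (conv_test - expected_without_ads)


lift, z, p = lift_test(1200, 100_000, 1000, 100_000)
print(f"lift {lift:.1%}, z = {z:.2f}, p = {p:.2g}")
print(cost_per_incremental(10_000, 1200, 100_000, 1000, 100_000))  # 50.0
```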

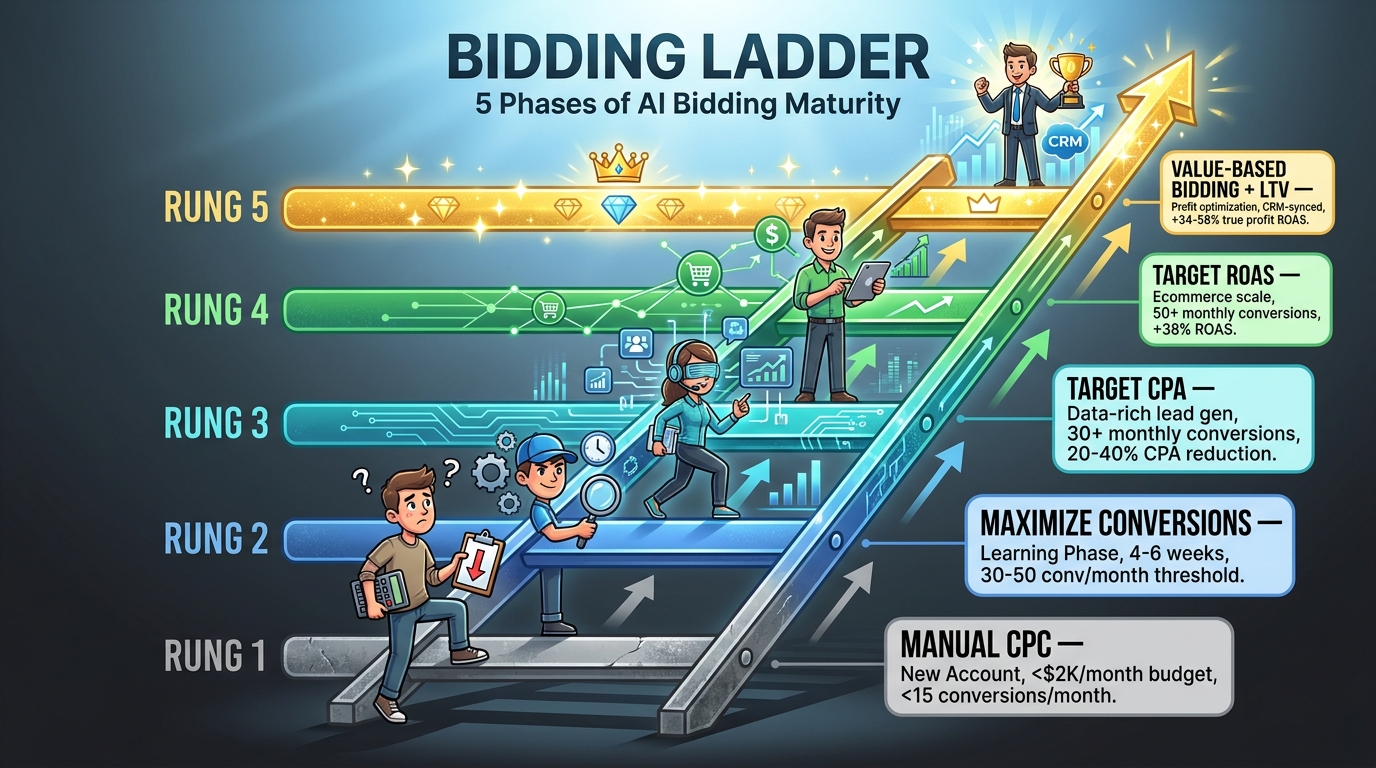

The Bidding Ladder: A Phased Approach from New Account to Scale

One of the most common structural errors in AI bidding is attempting to run strategies that require data volumes the account doesn’t yet have. Target ROAS with 20 monthly conversions will produce worse results than manual CPC in that account — not because Smart Bidding is bad, but because it’s operating below its minimum effective data threshold. The right bidding strategy depends on where your account is, not where you want it to be.

Phase 1: Manual CPC (0–15 Monthly Conversions)

New accounts and campaigns with fewer than 15 monthly conversions belong on manual CPC (Google retired Enhanced CPC for Search and Display campaigns in 2025). The priority at this stage is generating enough conversion data to graduate to automated strategies. That means favoring conversion volume over efficiency (accepting a lower ROAS temporarily), testing broad match keywords with manual bid adjustments, and ensuring conversion tracking is correctly implemented before scaling spend.

Manual CPC also provides the baseline performance data against which all future automated strategy performance should be benchmarked. Running manual CPC for four to six weeks in a new campaign gives you a credible historical ROAS reference point that prevents you from setting an automated strategy target that’s either impossibly restrictive or unchallenging.

Phase 2: Maximize Conversions (15–50 Monthly Conversions)

Once conversion volume reaches the 15 to 30 range, transitioning to Maximize Conversions (without a CPA cap) allows the algorithm to begin learning from real bidding data without being constrained by a target it doesn’t yet have the data to hit reliably. Set a reasonable daily budget limit to control total spend during this phase. The goal is conversion volume growth that moves the account toward the 50-conversion-per-month threshold that unlocks Target ROAS reliability.

Phase 3: Target CPA (30–50 Monthly Conversions)

For lead generation businesses where conversion values are relatively uniform, Target CPA becomes viable at 30 or more monthly conversions. Set the initial target 10 to 20% above your current average CPA — not at your ideal CPA — to avoid over-restricting the algorithm during the transition. Adjust downward in 10 to 15% increments over successive weeks as the algorithm demonstrates it can hit progressively tighter targets while maintaining volume.

Phase 4: Target ROAS (50+ Monthly Conversions)

Ecommerce accounts with 50 or more monthly conversions and properly implemented conversion value tracking are ready for Target ROAS. Q1 2026 benchmarks show tROAS delivering 38% higher ROAS versus manual CPC in mature accounts. Set the initial target at your current ROAS (again, not at your aspirational target) and allow the algorithm a full four-week learning phase before making adjustments. Increase targets in 10 to 15% increments monthly, not weekly; that keeps each step under the 15% target change that restarts learning and avoids forcing the algorithm into a restrictive mode that tanks volume.
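The ramp rules in Phases 3 and 4 can be written down as explicit schedules. A minimal sketch, assuming one step per review period (weekly for Target CPA, monthly for Target ROAS) and a 10% step inside the range discussed above; the function names and example numbers are hypothetical.

```python
def tcpa_schedule(current_avg_cpa: float, goal_cpa: float,
                  start_buffer: float = 0.15, step: float = 0.10) -> list:
    """Start above current CPA, then tighten by `step` per review until the goal."""
    if goal_cpa <= 0 or not 0 < step < 1:
        raise ValueError("goal_cpa must be positive and step must be in (0, 1)")
    target = current_avg_cpa * (1 + start_buffer)
    schedule = [round(target, 2)]
    while target > goal_cpa:
        target = max(goal_cpa, target * (1 - step))
        schedule.append(round(target, 2))
    return schedule


def troas_schedule(current_roas: float, goal_roas: float, step: float = 0.10) -> list:
    """Start at current ROAS, then raise by `step` per monthly review until the goal."""
    if current_roas <= 0 or step <= 0:
        raise ValueError("current_roas and step must be positive")
    target = current_roas
    schedule = [round(target, 2)]
    while target < goal_roas:
        target = min(goal_roas, target * (1 + step))
        schedule.append(round(target, 2))
    return schedule


print(tcpa_schedule(current_avg_cpa=50.0, goal_cpa=40.0))  # weekly targets
print(troas_schedule(current_roas=3.5, goal_roas=4.5))     # monthly targets
```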

Phase 5: Value-Based Bidding with LTV Integration (Advanced Accounts)

The ceiling of AI bidding effectiveness in 2026 is value-based bidding with integrated LTV signals. This requires a functioning CRM-to-ads data pipeline, an LTV prediction model (rules-based or ML), and profit-adjusted conversion values at the product or category level. The setup cost is real: typically 30 to 60 hours of technical and analytics work to implement properly. The return, documented across multiple case studies, is a 34 to 58% improvement in true profit ROAS over equivalent revenue-optimized campaigns.
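The five phases can be encoded as a small decision function, which is handy as a checklist when auditing many accounts. This is a hypothetical sketch that hard-codes the 15/30/50 thresholds above and simplifies the branching (for example, it only routes ecommerce accounts with value tracking to Phases 4 and 5); it is not a rule any ad platform enforces.

```python
from dataclasses import dataclass


@dataclass
class Account:
    monthly_conversions: int
    lead_gen: bool            # True if conversion values are roughly uniform (leads)
    has_value_tracking: bool  # conversion values are implemented and trustworthy
    has_ltv_pipeline: bool    # CRM-to-ads pipeline plus an LTV model exist


def recommended_phase(acct: Account) -> int:
    """Hypothetical mapping of the five-phase framework to a phase number."""
    n = acct.monthly_conversions
    if n < 15:
        return 1  # manual CPC while conversion data accumulates
    if acct.lead_gen:
        return 3 if n >= 30 else 2  # Target CPA once volume supports it
    if n >= 50 and acct.has_value_tracking:
        # Value-based bidding with LTV if the pipeline exists, else Target ROAS.
        return 5 if acct.has_ltv_pipeline else 4
    return 2  # keep building volume on Maximize Conversions


print(recommended_phase(Account(40, lead_gen=False,
                                has_value_tracking=True, has_ltv_pipeline=False)))
```

Note that an ecommerce account with 60 conversions but no trustworthy conversion values stays in Phase 2 here: the volume threshold alone does not make Target ROAS safe.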

Nine Bidding Mistakes That Are Actively Killing Your ROAS

With the strategic framework established, here are the specific tactical errors most commonly diagnosed in underperforming AI bidding accounts — and the precise fixes for each.

1. Setting Target ROAS Aspirationally Rather Than Historically

If your account currently delivers 3.5x ROAS and you set a 7x tROAS target, you haven’t told the algorithm what you want. You’ve told it to bid only in situations where a 7x ROAS is achievable, which in most accounts means a tiny fraction of total available auction opportunities. The campaign will have extremely low impression share and minimal conversions, and you’ll interpret the result as “Smart Bidding doesn’t work.” The fix: set the target at your current ROAS, or at most about 15% above it, rather than at your goal ROAS, and raise it gradually.
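A toy auction model shows why an aspirational target collapses volume. It assumes a deliberately simplified rule, bid = predicted conversion value per click ÷ target ROAS, and a fixed clearing price per auction; the auction pool is invented for illustration, and real Smart Bidding logic is far more complex than this.

```python
def simulate_troas(auctions, target_roas):
    """Toy model: bid = predicted value per click / target ROAS.

    auctions: list of (predicted_value_per_click, clearing_price) pairs.
    Returns (clicks won, conversion value won, cost).
    """
    clicks, value, cost = 0, 0.0, 0.0
    for predicted_value, clearing_price in auctions:
        bid = predicted_value / target_roas
        if bid >= clearing_price:  # win the auction, pay the clearing price
            clicks += 1
            value += predicted_value
            cost += clearing_price
    return clicks, value, cost


# Hypothetical auction pool: most opportunities have modest predicted value.
auctions = ([(3.0, 1.0)] * 30 + [(4.5, 1.0)] * 15
            + [(7.5, 1.0)] * 4 + [(12.0, 1.0)] * 1)

for target in (3.5, 7.0):
    clicks, value, cost = simulate_troas(auctions, target)
    print(f"tROAS {target}x: {clicks} clicks, ROAS {value / cost:.1f}x")
```

In this pool, raising the target from 3.5x to 7x cuts won clicks from 20 to 5. Reported ROAS goes up, but total conversion value falls from 109.5 to 42: a better-looking dashboard on a much smaller business.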

2. Interrupting the Learning Phase

Every budget change above 20%, target change above 15%, or major structural change resets the learning phase. Accounts that make weekly adjustments based on short-term fluctuations never exit the learning phase. The AI perpetually explores rather than exploits, and performance is chronically unstable. The fix: define a review cadence of four to six weeks minimum, use statistical significance thresholds rather than gut reactions, and document every change with a date so you can correlate performance shifts to specific interventions.
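A dated change log with an automatic "reset risk" flag makes the documentation habit concrete. This sketch applies the 20% budget and 15% target thresholds from this section as rules of thumb; the `Change` record and `likely_resets_learning` function are hypothetical, and platforms do not expose a learning-reset check like this.

```python
from dataclasses import dataclass
from datetime import date

# Rules of thumb from the text above, not platform-published constants.
BUDGET_THRESHOLD = 0.20
TARGET_THRESHOLD = 0.15


@dataclass
class Change:
    when: date
    kind: str          # "budget", "target", or "structure"
    old: float = 0.0
    new: float = 0.0
    note: str = ""


def likely_resets_learning(change: Change) -> bool:
    """Flag changes large enough to push a campaign back into learning."""
    if change.kind == "structure":
        return True
    if change.kind not in ("budget", "target") or change.old <= 0:
        raise ValueError(f"unsupported change: {change!r}")
    relative = abs(change.new - change.old) / change.old
    limit = BUDGET_THRESHOLD if change.kind == "budget" else TARGET_THRESHOLD
    return relative > limit


change_log = [
    Change(date(2026, 3, 2), "budget", 100.0, 130.0, "spring promo"),
    Change(date(2026, 3, 16), "target", 4.0, 4.4, "tROAS +10%"),
    Change(date(2026, 4, 6), "structure", note="split brand asset group"),
]
for c in change_log:
    print(c.when.isoformat(), c.kind, "RESET RISK" if likely_resets_learning(c) else "ok")
```

Keeping the log alongside performance data lets you line up a volatility spike with the change that most plausibly caused it, instead of guessing after the fact.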

3. Running PMax Without a Branded Search Campaign

PMax without a branded Search campaign will harvest your brand-driven conversions, report them as AI-generated wins, and inflate your PMax ROAS, while leaving you no direct control over what you bid on your own brand terms. Run a separate branded Search campaign with your exact brand terms and set brand exclusions in PMax. This is a 30-minute fix with potentially significant attribution impact.
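To gauge how much of a campaign’s reported value is really brand demand, you can split query-level conversion value by whether the query contains a brand term. This sketch assumes you have exported (query, conversion value) rows, for example from a search terms report; the brand `acme` and its misspelling are hypothetical, and plain substring matching will over- or under-match for some brand names.

```python
def brand_value_share(search_terms, brand_tokens):
    """Share of conversion value coming from queries that contain a brand token.

    search_terms: iterable of (query, conversion_value) pairs.
    brand_tokens: brand names and common misspellings (hypothetical list).
    """
    tokens = [t.lower() for t in brand_tokens]
    brand_value = total_value = 0.0
    for query, value in search_terms:
        total_value += value
        if any(token in query.lower() for token in tokens):
            brand_value += value
    return brand_value / total_value if total_value else 0.0


terms = [("acme running shoes", 800.0), ("running shoes", 300.0),
         ("ACME outlet", 100.0)]
print(f"{brand_value_share(terms, ['acme', 'acmee']):.0%} of value is branded")
```

A high branded share means much of the campaign’s ROAS reflects people who were already looking for you, which is precisely the value a dedicated branded Search campaign and PMax brand exclusions pull back out into the open.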

4. Using Last-Click Attribution with Smart Bidding

Last-click attribution assigns all of the credit to the final click and none to the earlier touchpoints that started the journey. Smart Bidding trained on last-click data learns to prioritize high-intent, bottom-funnel keywords at the expense of awareness-stage terms that drive the consideration cycle. The result is a campaign structure optimized for the last yard of the conversion path while neglecting everything that got users there. Data-driven attribution, which Google Ads now uses as the default attribution model for most conversion actions, is the right measurement model. If your account is still on last-click, change it before expecting Smart Bidding to reach its potential.
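A toy conversion path makes the difference concrete. The sketch compares last-click credit with an even (linear) split across a hypothetical three-click path. Linear is used only because it is easy to verify by hand; it is not how Google’s data-driven attribution assigns credit, which is learned from the account’s own conversion-path data.

```python
def last_click_credit(path, value):
    """All credit goes to the final touchpoint."""
    return {path[-1]: value}


def linear_credit(path, value):
    """Credit split evenly across touchpoints (a simple multi-touch stand-in)."""
    credit = {}
    for touch in path:
        credit[touch] = credit.get(touch, 0.0) + value / len(path)
    return credit


path = ["running shoes", "trail running shoes review", "acme shoes"]
print(last_click_credit(path, 120.0))
print(linear_credit(path, 120.0))
```

Under last click, the two generic keywords that started the journey earn nothing, so a bidder trained on that data has no reason to keep paying for them, even though the branded conversion may never have happened without them.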

5. Not Providing Audience Signals to PMax

PMax allows, but doesn’t require, audience signals: first-party data segments, in-market audiences, and custom segments (formerly custom intent audiences) that tell the algorithm where to focus its early exploration. Without these signals, PMax spends its initial budget broadly exploring the audience landscape, which is expensive and slow. Providing strong audience signals doesn’t restrict the campaign to those audiences; it gives the AI a better starting point from which to expand. High-LTV customer lists, email subscriber lists, and in-market audiences relevant to your products are all valuable signals. Providing none leaves performance on the table during the most expensive phase of the algorithm’s learning cycle.

6. Ignoring URL Expansion Controls

PMax’s URL expansion feature automatically selects landing pages from your website beyond the specific ones you’ve designated, based on the algorithm’s assessment of relevance. In practice, this can send users to category pages, blog posts, or informational pages that are poorly optimized for conversion. Implementing URL exclusions for non-conversion pages and ensuring your designated landing pages match the intent of the asset groups is basic hygiene that a substantial number of PMax accounts skip.

7. Conflating ROAS Across Different Customer Acquisition Types

New customer acquisition and retargeting operate at fundamentally different economics. Retargeting warm audiences consistently delivers dramatically higher attributed ROAS — users who already know you convert at much higher rates. When you pool new acquisition and retargeting under a single tROAS target, the algorithm naturally concentrates spend on retargeting (where the ROAS target is easiest to hit) and under-invests in new customer acquisition. Segment your bidding strategy to reflect these different economic realities: accept a lower tROAS target for prospecting campaigns and a higher one for retargeting, managing them as distinct investments rather than as a single ROAS average.
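A few lines of arithmetic show why a pooled target drifts toward retargeting. The spend and value figures below are hypothetical, and the shift function naively assumes each segment’s ROAS stays constant as budget moves, which real warm audiences will not do because they saturate.

```python
def roas(spend: float, value: float) -> float:
    return value / spend


# Hypothetical monthly figures: (spend, attributed conversion value).
segments = {
    "prospecting": (10_000.0, 25_000.0),
    "retargeting": (5_000.0, 40_000.0),
}

total_spend = sum(s for s, _ in segments.values())
total_value = sum(v for _, v in segments.values())


def blended_after_shift(moved: float) -> float:
    """Blended ROAS if `moved` dollars shift from prospecting to retargeting,
    naively assuming each segment's ROAS stays constant."""
    p_spend, p_value = segments["prospecting"]
    r_spend, r_value = segments["retargeting"]
    p_roas, r_roas = p_value / p_spend, r_value / r_spend
    new_value = (p_spend - moved) * p_roas + (r_spend + moved) * r_roas
    return new_value / (p_spend + r_spend)


for name, (spend, value) in segments.items():
    print(f"{name}: {roas(spend, value):.1f}x")
print(f"blended: {roas(total_spend, total_value):.2f}x")
print(f"after moving $5,000: {blended_after_shift(5_000.0):.2f}x")
```

A single 4x target is already met by the blend, and every dollar moved out of prospecting pushes the blended number higher, so the algorithm is rewarded for starving new customer acquisition. Separate targets remove that incentive.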

8. Budget Scaling in Single Large Jumps

Doubling a campaign budget over a weekend sends a massive signal shift that forces the algorithm back into exploration mode, exactly the behavior you were trying to get past. For campaigns with established learning, budget increases above 20% in a single adjustment window disrupt the bid models. The mechanism: the algorithm assumes a budget doubling means something fundamental about the campaign’s context has changed, and it begins re-exploring bid ranges it had previously settled. Scale gradually, in increments of 15 to 20%, and give the algorithm two to three weeks at each budget level before scaling again.
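The scaling rule translates directly into a schedule. This is a minimal sketch assuming each increase is a percentage of the previous level and each level is held for a fixed number of weeks; `budget_ramp` is a hypothetical helper, and the guard rails simply encode the 20% ceiling and two-to-three-week hold described above.

```python
def budget_ramp(current: float, goal: float, step: float = 0.20,
                weeks_per_level: int = 2) -> list[tuple[int, float]]:
    """Hypothetical schedule of (week, daily budget) pairs.

    Each increase is at most `step` of the previous level, and each level is
    held for `weeks_per_level` weeks before the next increase.
    """
    if not 0 < step <= 0.20:
        raise ValueError("keep each increase at or below 20%")
    if weeks_per_level not in (2, 3):
        raise ValueError("hold each level for two to three weeks")
    schedule, week, budget = [], 0, current
    while budget < goal:
        budget = min(goal, budget * (1 + step))
        schedule.append((week, round(budget, 2)))
        week += weeks_per_level
    return schedule


# Scaling a $100/day campaign to $200/day.
for week, budget in budget_ramp(100.0, 200.0):
    print(f"week {week}: ${budget:.2f}/day")
```

Doubling a $100 daily budget this way takes four increases ($120, $144, $172.80, then $200) at weeks 0, 2, 4, and 6, so the full move plays out over roughly two months rather than one weekend.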

9. Treating AI Bidding as Set-and-Forget

AI bidding reduces the need for manual bid adjustments. It doesn’t eliminate the need for strategic management. Campaigns still require regular creative refreshes (ad fatigue is real and measurable), audience signal updates as your CRM data evolves, conversion tracking audits as your website and analytics infrastructure changes, and competitive monitoring to understand when your impression share shifts demand strategic responses. Accounts managed on a “set it and check monthly” cadence consistently underperform accounts with weekly strategy reviews even when the bidding mechanism is fully automated. The human job changes from bid manipulation to signal optimization and strategic oversight — it doesn’t disappear.

Building a Bidding Architecture That Compounds Over Time

The most important reframe in AI bidding strategy is moving from a campaign-level optimization mindset to an account-level architecture mindset. Individual campaigns are components of a larger system, and the system’s performance depends on how well those components are structured to share learning, prevent attribution inflation, and deliver increasingly accurate signals to the AI over time.

The Compounding Advantage

Accounts that have been running Smart Bidding for twelve or more months with clean conversion tracking, stable campaign structures, and progressively enriched first-party data achieve results that new entrants to automated bidding simply can’t replicate in months. The algorithm’s prediction models deepen with account history. The audience data becomes richer as more customer segments are built. The LTV prediction accuracy improves as more purchase cycles are observed. This isn’t just the Matthew effect of “the rich get richer” — it’s a genuine information asymmetry that gets wider every quarter.

The implication for 2026: the window to build this compounding advantage is shorter than it was two years ago. Smart Bidding adoption has grown 14 percentage points in two years, with 91% of enterprise accounts now using at least one automated strategy. The accounts that haven’t built clean signal infrastructure, haven’t connected first-party data to their bidding systems, and haven’t implemented value-based conversion values are falling further behind mature-account competitors every month. The gap between a well-architected AI bidding setup and a poorly managed one — in terms of cost efficiency and true ROAS — widened significantly in 2025 and continues to widen in 2026.

The Three Structural Investments That Pay the Longest Dividends

If you’re going to prioritize three changes based on everything in this article, these are the ones with the highest long-term ROI:

First: Clean, profit-adjusted conversion tracking. Audit your conversion events, eliminate double-counting, ensure your primary conversion goal is the business outcome that actually matters, and shift to passing profit-adjusted values rather than raw order values. This is the single highest-leverage change available to most accounts and requires no media budget increase to implement.
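The first investment, deduplicated and profit-adjusted values, can be sketched in a few lines. The margin table and order format below are hypothetical; in practice margins would come from your product catalog or finance system, and platforms such as Google Ads can also deduplicate conversions on a transaction ID passed with the tag.

```python
# Hypothetical gross margins by product category.
MARGINS = {"apparel": 0.55, "electronics": 0.18, "accessories": 0.70}


def profit_adjusted_values(orders, margins=MARGINS):
    """Return {order_id: profit-adjusted value}, counting each order once.

    orders: iterable of dicts with "order_id" and "lines", where each line
    has "category" and "revenue". Duplicate order IDs (e.g. a confirmation
    page that fired twice) are ignored after the first occurrence.
    """
    values = {}
    for order in orders:
        if order["order_id"] in values:
            continue  # eliminate double-counting
        profit = sum(line["revenue"] * margins[line["category"]]
                     for line in order["lines"])
        values[order["order_id"]] = round(profit, 2)
    return values


orders = [
    {"order_id": "A100", "lines": [{"category": "apparel", "revenue": 100.0}]},
    {"order_id": "A100", "lines": [{"category": "apparel", "revenue": 100.0}]},
    {"order_id": "B200", "lines": [{"category": "electronics", "revenue": 500.0},
                                   {"category": "accessories", "revenue": 50.0}]},
]
print(profit_adjusted_values(orders))
```

Raw revenue tracking would report $750 here, including the duplicate, while the deduplicated, profit-adjusted values total $180, and the electronics order no longer looks 5.5 times as valuable as the apparel one.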

Second: First-party data integration. At minimum, implement Enhanced Conversions (Google) and the Conversions API (Meta). Upload your customer email lists as Customer Match audiences. Connect CRM segments to bidding signals. Do this before you touch your bid strategies, because the strategies can only be as good as the data feeding them.
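When you send customer identifiers such as email addresses server-side, both Enhanced Conversions and the Conversions API expect them normalized (whitespace trimmed, lowercased) and SHA-256 hashed. This minimal sketch uses Python’s standard `hashlib`; the optional Gmail dot-stripping reflects Google’s guidance for gmail.com and googlemail.com addresses, but normalization rules differ by platform and can change, so check each platform’s current documentation before relying on it.

```python
import hashlib

GMAIL_DOMAINS = {"gmail.com", "googlemail.com"}


def normalize_email(email: str, strip_gmail_dots: bool = False) -> str:
    """Trim whitespace and lowercase; optionally drop dots in Gmail local parts."""
    email = email.strip().lower()
    if "@" not in email:
        raise ValueError(f"not an email address: {email!r}")
    local, _, domain = email.partition("@")
    if strip_gmail_dots and domain in GMAIL_DOMAINS:
        local = local.replace(".", "")
    return f"{local}@{domain}"


def hash_email(email: str, strip_gmail_dots: bool = False) -> str:
    """SHA-256 hex digest of the normalized address."""
    normalized = normalize_email(email, strip_gmail_dots)
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


print(hash_email("  Jane.Doe@Example.com "))
```

Normalizing before hashing matters because hashing is unforgiving: "Jane.Doe@Example.com " and "jane.doe@example.com" produce completely different digests, and an unmatched hash is a lost signal.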

Third: Incrementality measurement. Run at least one geo-based holdout test annually for your highest-spend campaigns. The result will tell you more about actual advertising effectiveness than any platform dashboard can, and it will give you an empirical basis for budget allocation decisions instead of relying on platform-reported attribution.
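The core arithmetic of a geo holdout is straightforward. This sketch uses a simple ratio estimator and assumes both groups of geos were treated identically in the pre period, with ads then running in the test geos and paused in the holdout geos; the revenue figures are hypothetical. A real test also needs well-matched geos, adequate duration, and a significance check, which dedicated tools such as Meta’s open-source GeoLift are built to handle.

```python
def incremental_roas(test_pre: float, test_post: float,
                     control_pre: float, control_post: float,
                     spend: float) -> float:
    """Incremental ROAS from a geo holdout using a simple ratio estimator.

    The control's post-period revenue, scaled by the pre-period
    test/control ratio, estimates what the test geos would have earned
    without ads. Only revenue above that counterfactual is credited.
    """
    scale = test_pre / control_pre
    counterfactual = control_post * scale
    incremental_revenue = test_post - counterfactual
    return incremental_revenue / spend


# Hypothetical revenue figures for a four-week test.
iroas = incremental_roas(test_pre=100_000, test_post=125_000,
                         control_pre=80_000, control_post=84_000,
                         spend=10_000)
print(f"incremental ROAS: {iroas:.1f}x")
```

If the platform reported, say, 5x ROAS on the same spend, the gap between that figure and the incremental 2x is the credit the dashboard claims for sales that would likely have happened anyway.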

Practical Takeaways

- Never set tROAS targets far above your historical ROAS. Start at current performance, or at most about 15% above it, and adjust gradually.

- Audit conversion tracking before changing bid strategies. Bad signal inputs produce bad bidding outputs regardless of which strategy you use.

- Separate branded and non-branded campaigns structurally. Attribution inflation is silent, persistent, and expensive.

- Build a creative library of 15–50 variations for Advantage+ campaigns. Creative quality is the primary optimization lever in Meta’s AI bidding system.

- Use portfolio bidding when individual campaigns are below conversion thresholds. Pooled learning beats isolated underpowered learning every time.

- Run incremental lift tests on your top-spend campaigns at least once per year. Platform ROAS and incremental ROAS are different numbers. Know both.

- Move toward profit-based conversion values at whatever pace your technical capabilities allow. Even a rough margin-tier multiplier is better than pure revenue optimization.

The advertisers extracting the most value from AI bidding in 2026 are not the ones with the biggest budgets or the most sophisticated machine learning teams. They’re the ones who understood that the algorithm is only a tool — and that the quality of what you feed it, the architecture of how you structure it, and the honesty with which you measure its results are what actually determine whether you’re compounding performance or just paying for an increasingly expensive illusion of efficiency.

Get the inputs right. The AI will handle the rest.