There is a moment most affiliate marketers know intimately: you spend a week crafting content around a product, the commission rate looks good on paper, and you genuinely believe your audience will respond. Then the data comes back. Clicks are modest. Conversions are flat. The product never quite clicked with the people who showed up to watch.

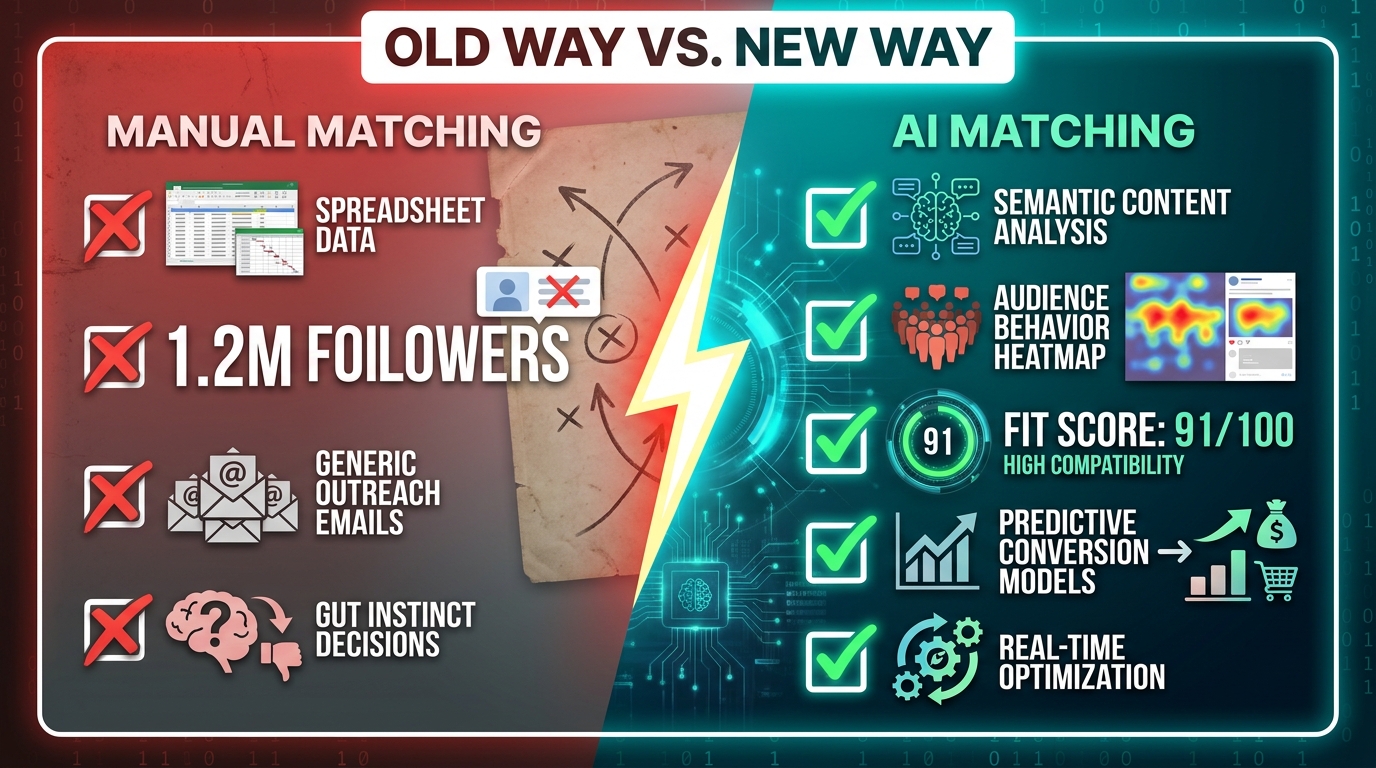

This is not a content problem. It is not even really a traffic problem. It is a matching problem — and for most of the affiliate industry’s history, matching has been done by gut instinct, follower counts, and category labels that are far too broad to mean anything useful. A creator who posts about “health and wellness” might be the perfect fit for a meditation app or a complete mismatch for a sports nutrition supplement. The label doesn’t tell you which. The follower count tells you even less.

What’s changed in 2026 is that AI has started reading the signals that actually matter. Not what category a creator claims to be in, but what their content says at a semantic level, who their audience actually is behaviorally, what those viewers have historically clicked on, and — critically — whether there is genuine purchase intent in their community. The result is a new discipline emerging at the intersection of machine learning and affiliate strategy: AI-powered creator-product matching.

This article is not about AI as a buzzword layer on top of the same old processes. It’s about how the underlying mechanics of matching are being rebuilt from scratch — what signals drive the new systems, which platforms are operationalizing them, where the gaps still exist, and what affiliates and brands need to do right now to stay ahead of the shift.

Why Creator-Product Mismatch Costs More Than Anyone Admits

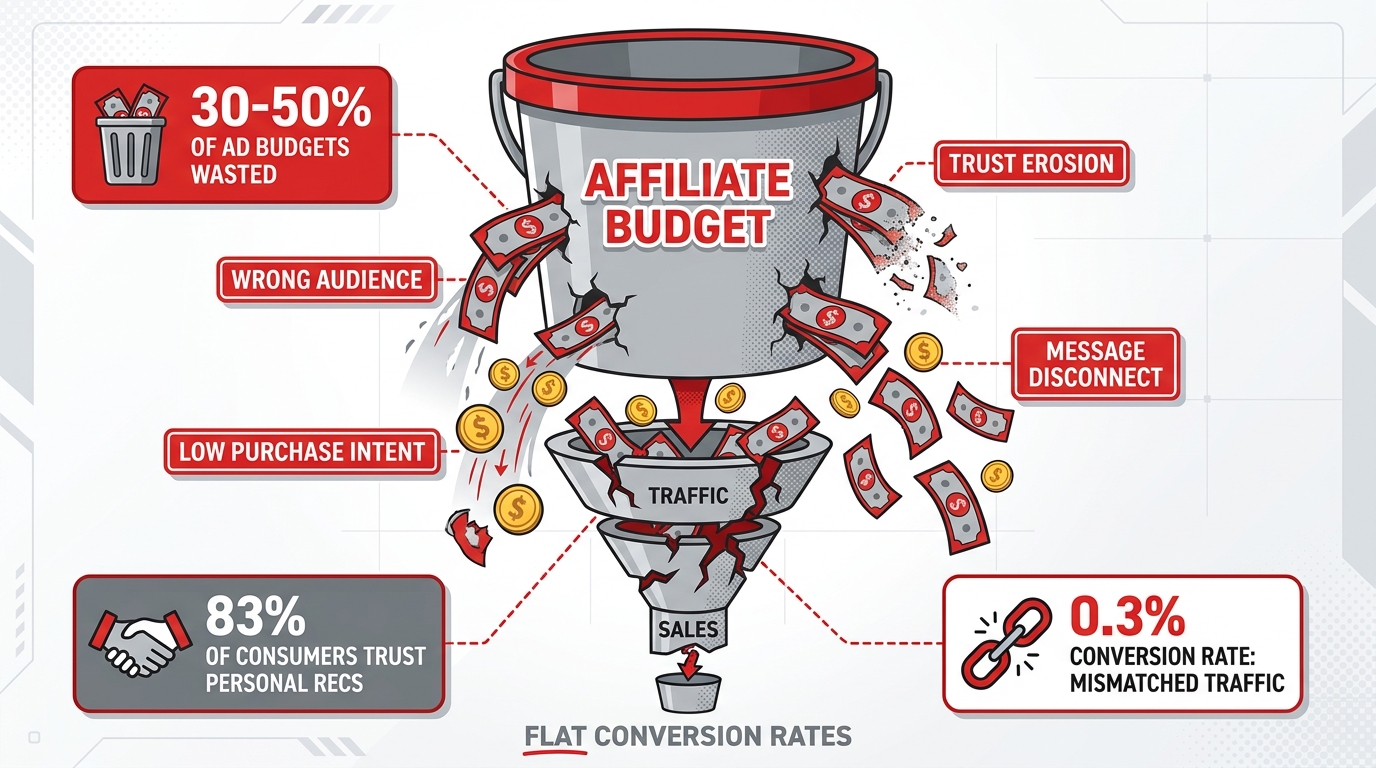

The affiliate industry tends to measure mismatch at the conversion layer — low click-through rates, weak landing page engagement, flat revenue numbers. These are real, but they understate the full cost by a significant margin. The damage from mismatched creator-product partnerships runs much deeper than what shows up in a monthly dashboard.

The Wasted Budget Problem

Recent data on digital marketing efficiency suggests that poor targeting — including product-audience mismatch — consumes somewhere between 30 and 50 percent of active affiliate budgets. In a broader context, misaligned targeting globally accounts for an estimated 37% of wasted digital ad budgets, which aggregates to hundreds of billions annually across the industry. For individual affiliate programs, the internal version of this problem plays out when creators are recruited based on surface metrics, then sent products that their audience simply does not have the intent to purchase.

The mechanics of this waste compound quickly. When a creator promotes a product that does not resonate, ad algorithms — if paid amplification is involved — begin optimizing toward the wrong signals. They chase cheap clicks from non-buyers. Lookalike audiences built from this low-quality traffic produce conversion rates well below what the same budget could achieve with correctly matched traffic. One documented pattern shows conversion rates as low as 0.3% for mismatched lookalike audiences compared to 2% or higher for correctly targeted segments.

The Trust Erosion Nobody Tracks

Beyond the conversion data, there is a second cost that affiliate programs almost never assign a dollar figure to: audience trust erosion. Research consistently shows that 83% of consumers trust personal recommendations over branded content. That trust premium is the entire reason creator-led affiliate marketing works. When a creator repeatedly promotes products that do not align with their content or their audience’s actual interests, that trust deteriorates — gradually at first, then sharply when audiences begin ignoring recommendations as a category.

This effect does not show up in monthly reporting. It shows up six months later when a creator’s conversion rate on any affiliate link has inexplicably dropped, even for genuinely well-matched products they promote later. The mismatch damage is cumulative and usually misattributed to “audience fatigue” or “platform algorithm changes.”

The Invisible Churn Signal

Perhaps the most insidious outcome of product-creator mismatch is what it does to downstream customer quality. When affiliates send traffic that isn’t aligned with a product’s actual value proposition — often because they were never properly briefed on the product’s positioning, or because the product was never a real fit for their audience — the customers who do convert tend to have higher churn rates. They cancel subscriptions earlier. They return physical products more frequently. They generate more support tickets.

Brands often interpret this as the affiliate delivering “low-quality customers” and reduce commissions or end the relationship. But the actual problem is structural: the match was wrong from the start, and neither party had the data infrastructure to know it before launch.

What AI Actually Reads: The Signals Behind Smarter Matching

The question that matters most is not whether AI is being used for matching — it clearly is, across a growing number of platforms and programs. The question is what data is actually being analyzed, and whether those signals are genuinely predictive of affiliate performance or just sophisticated proxies for the same shallow metrics that were failing before.

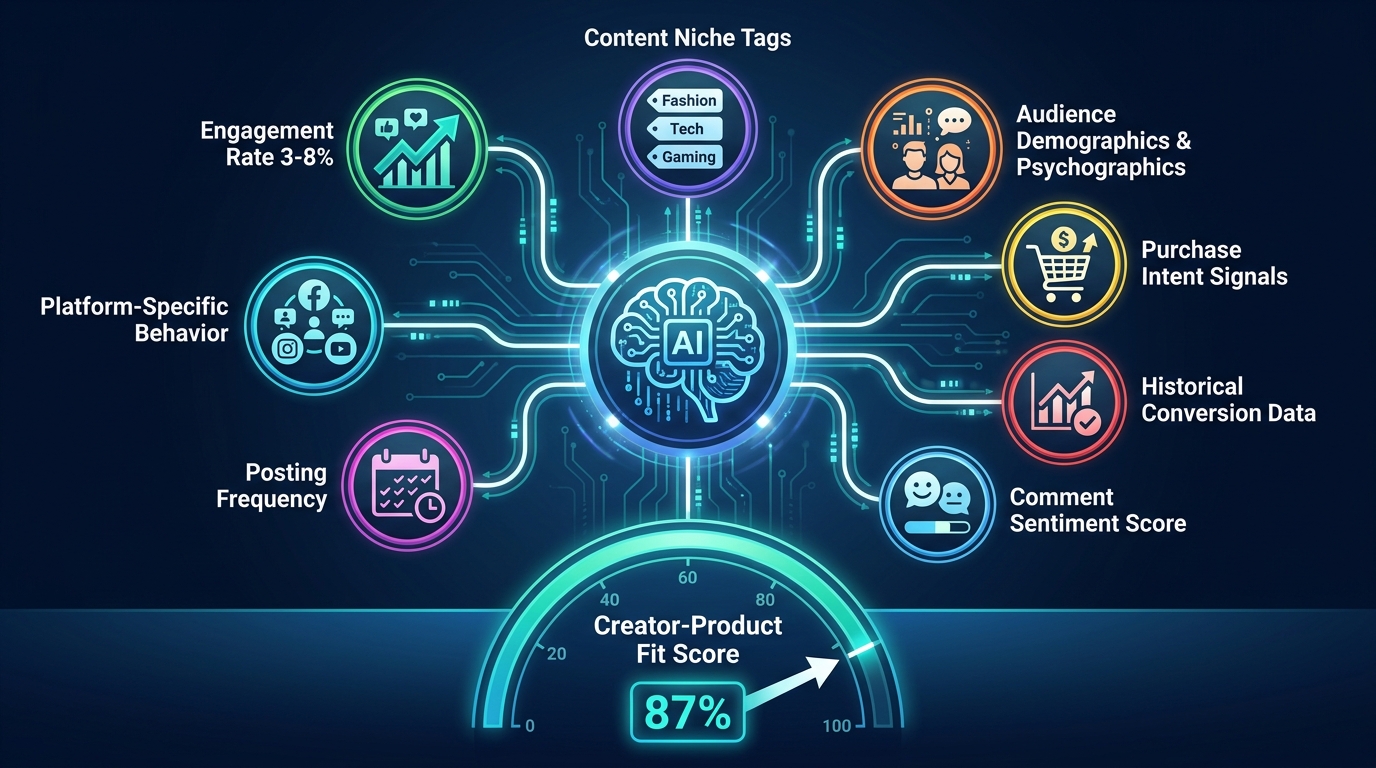

Current AI matching systems, at their most capable, operate across several distinct signal layers simultaneously:

Layer 1: Semantic Content Analysis

This is where the meaningful departure from legacy matching begins. Rather than relying on self-reported categories (“I’m a fitness creator”) or platform taxonomy labels, advanced matching systems analyze the actual language and topic distribution of a creator’s content. Using large language model embeddings, these systems can identify what a creator genuinely discusses at a granular level — not just “fitness” but specifically “powerlifting for people over 40” or “low-impact cardio for postpartum recovery.”

This semantic layer matters enormously because two creators in the same labeled niche can have almost zero audience overlap and radically different purchase behaviors. A skincare creator whose content centers on dermatological concerns and prescription-adjacent products attracts a very different buyer than one whose content focuses on sustainable, clean-ingredient beauty. Semantic analysis surfaces that distinction in ways that category labels never could.

Layer 2: Audience Behavioral Data

The second layer looks not at the creator but at the audience the creator has built. AI matching systems increasingly ingest behavioral signals from audience activity: what they click on, how long they engage with specific content formats, what they purchase through previous affiliate links, and even the time-of-day patterns that predict purchase intent.

Platforms using neural-network-based micro-segmentation can build dynamic audience clusters — sometimes 100 or more distinct behavioral segments — from a single creator’s follower base. A creator with 200,000 followers might have a subsegment of 14,000 people who consistently engage with product reviews, regularly click affiliate links, and show purchase history signals suggesting mid-tier discretionary spending. That subsegment is worth far more to the right brand than the creator’s headline follower count would suggest.

Layer 3: Engagement Quality, Not Quantity

Engagement rate has been a matching signal for years, but AI systems are now moving beyond simple ratios to analyze engagement quality. Comment sentiment analysis determines whether audience interactions are genuinely enthusiastic, skeptical, or passive. Reply patterns identify whether a creator’s community trusts their recommendations or treats them as content to consume and scroll past.
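
To make the difference between engagement volume and engagement type concrete, here is a minimal sketch that sorts comments into enthusiastic, skeptical, and passive buckets. The keyword lexicons (`ENTHUSIASTIC`, `SKEPTICAL`) and the function names are hypothetical. Production platforms would rely on trained sentiment or stance models rather than word lists, so treat this as an illustration of the output shape, not of any platform's method.

```python
import re
from collections import Counter

# Hypothetical toy lexicons for illustration only; a real system would use a
# trained sentiment or stance model instead of hand-picked keywords.
ENTHUSIASTIC = {"love", "bought", "ordered", "obsessed", "works", "recommend", "thanks"}
SKEPTICAL = {"ad", "sponsored", "scam", "fake", "paid", "sellout", "doubt"}


def classify_comment(text: str) -> str:
    """Label one comment as 'enthusiastic', 'skeptical', or 'passive'."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    positive = len(words & ENTHUSIASTIC)
    negative = len(words & SKEPTICAL)
    if positive > negative:
        return "enthusiastic"
    if negative > positive:
        return "skeptical"
    return "passive"  # no lexicon hits, or an exact tie


def engagement_quality(comments: list[str]) -> dict[str, float]:
    """Share of comments in each class, so creators with the same raw
    comment count can be compared on the type of engagement."""
    counts = Counter(classify_comment(c) for c in comments)
    total = len(comments) or 1  # avoid dividing by zero on an empty list
    return {label: counts[label] / total
            for label in ("enthusiastic", "skeptical", "passive")}
```

Two creators with identical comment counts can produce very different distributions here. The share of enthusiastic versus skeptical comments says more about recommendation power than the raw total.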

The benchmark engagement rates by creator size are worth stating precisely. Nano-creators (1,000–10,000 followers) achieve engagement rates of 3.5–8%, micro-creators (10,000–100,000) land at 3–5%, and macro and mega creators typically fall between 0.5% and 2%. These aggregate numbers hide enormous variance within each tier, however. That is why AI systems increasingly score the type of engagement, not just its volume, and use it to predict whether recommendations will generate action or merely awareness.

Layer 4: Historical Conversion Behavior

This is the signal with the highest predictive power and the hardest to access without platform-level data. When a creator has an established track record of affiliate promotions — even if the products differed — the conversion data from those campaigns contains information about their audience’s purchase propensity, average order value tendencies, and the types of offers that resonate. AI systems that can access this historical conversion data produce dramatically more accurate match predictions.

For example, impact.com’s AI partner discovery engine builds what the company calls an Ideal Partner Profile (IPP) from the historical performance data of a brand’s existing top-converting affiliates. It then uses that profile to scan for creators with similar content, audience, and behavior signatures. The system is essentially asking: “Who else, on the open web and within our partner network, looks like the people who already convert well for us?”

Layer 5: Purchase Intent Signals

The most forward-looking matching systems are beginning to incorporate real-time purchase intent signals — data that indicates not just who a creator’s audience is, but what they are actively in the market for right now. This includes signals like search behavior patterns visible through platform data, engagement with competitor or category content, and behavioral triggers like recently watching product comparison videos. When these intent signals align with a specific product’s value proposition, the match confidence score rises substantially.

The Architecture of Today’s AI Matching Platforms

Understanding what AI reads is useful. Understanding how the platforms are built around those signals helps brands and affiliates know where they actually sit in the ecosystem — and where to direct their energy.

Ideal Partner Profiles and Lookalike Discovery

The dominant architecture among enterprise-grade affiliate matching platforms starts with a data-driven IPP. Rather than letting brand managers manually define what a good affiliate looks like, the system analyzes the top 10–20% of existing affiliate partners by conversion quality metrics (not just volume) and extracts their shared attributes: content topics, audience demographics, typical post frequency, platform mix, and historical conversion behavior.

That profile then becomes a search query. The AI scans partner networks and, increasingly, the open web to identify creators who match the profile across the most predictive dimensions. Results are returned ranked by match confidence, not by follower count or application order. This fundamentally changes the talent discovery dynamic — a brand that has historically recruited affiliates through open applications or influencer marketplaces is suddenly operating with a targeted, data-driven outreach list.
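
The IPP-then-lookalike flow can be sketched in a few lines. This illustration rests on several assumptions and does not reproduce any platform's actual model. Each partner is reduced to a hypothetical vector of normalized attributes, and "conversion quality" is a single made-up score. The profile is the plain average of the top 20% of partners, and match confidence is a simple transform of Euclidean distance to that average.

```python
import math
from dataclasses import dataclass


@dataclass
class Partner:
    name: str
    features: list[float]      # hypothetical normalized attributes (topic mix, post frequency, ...)
    conversion_quality: float  # hypothetical quality score, not raw conversion volume


def build_ipp(partners: list[Partner], top_share: float = 0.2) -> list[float]:
    """Average the feature vectors of the top `top_share` of partners,
    ranked by conversion quality."""
    ranked = sorted(partners, key=lambda p: p.conversion_quality, reverse=True)
    k = max(1, int(len(ranked) * top_share))
    top = ranked[:k]
    dims = len(top[0].features)
    return [sum(p.features[i] for p in top) / k for i in range(dims)]


def rank_candidates(ipp: list[float], candidates: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Rank candidate creators by a match confidence in (0, 1], derived
    from Euclidean distance to the profile (1.0 = identical vectors)."""
    scored = [(name, 1.0 / (1.0 + math.dist(ipp, vec))) for name, vec in candidates.items()]
    return sorted(scored, key=lambda s: s[1], reverse=True)
```

Candidates are ranked purely by resemblance to the profile, with no reference to follower count or application order.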

Semantic Scoring and Embedding Models

A number of platforms now use large language model embeddings to score the semantic relevance between a creator’s content corpus and a product’s category, value proposition, and target customer description. An embedding is essentially a mathematical representation of meaning. The system converts both the creator’s content history and the product description into high-dimensional vectors, then measures how close those vectors are, commonly with cosine similarity.

Creators whose content vectors sit close to a product’s category vector in this embedding space are flagged as strong semantic matches. Those who are in a nominally similar category but whose actual content covers different territory register as weaker matches, regardless of their follower count or self-reported niche. This is the technology that finally makes category labels redundant as a primary matching tool.
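
The scoring geometry can be shown without a language model. The sketch below uses term-frequency vectors as a crude stand-in for LLM embeddings, a substitution made purely so the example runs with the standard library. It scores a creator's content history against a product description with cosine similarity. Real embeddings capture meaning beyond shared words, so this illustrates only the scoring step.

```python
import math
import re
from collections import Counter


def tf_vector(text: str) -> Counter:
    """Term-frequency vector: a crude stand-in for an LLM embedding."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse vectors (1.0 = same direction)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def semantic_match(creator_posts: list[str], product_description: str) -> float:
    """Score a creator's whole content history against one product."""
    corpus = tf_vector(" ".join(creator_posts))
    return cosine_similarity(corpus, tf_vector(product_description))
```

A postpartum-fitness creator scored against a "low impact cardio routine for postpartum recovery" product will land well above a powerlifting creator who shares no vocabulary with it, even if both are labeled "fitness."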

Dynamic Micro-Segmentation

More sophisticated platforms — particularly those operating at scale on social commerce channels — are using neural networks to build dynamic micro-segments of creator audiences in real time. Rather than treating a creator’s audience as a monolithic group, these systems continuously cluster audience members based on behavioral data and update those clusters as new behavior is observed.

The practical implication: a platform can identify that 22% of a creator’s audience exhibits strong purchase intent signals for a specific product category, even if the creator’s overall content is only loosely related. The match recommendation might then be narrow in scope (“this creator’s audience includes a purchase-intent segment for home office products”) rather than a blanket endorsement, which allows brands to structure campaigns more precisely.
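
Dynamic micro-segmentation can be approximated, very loosely, with classic k-means clustering. In this hypothetical sketch, each audience member is a vector of behavioral features: review-content engagement and affiliate click rate, both assumed to be normalized to the 0 to 1 range. The segment whose centroid shows the highest click rate is treated as the purchase-intent segment. The neural, continuously updated systems described above are considerably more sophisticated. This sketch only shows how a figure like "22% of the audience" could fall out of a clustering step.

```python
import math


def kmeans(points: list[list[float]], k: int, iterations: int = 20) -> tuple[list[list[float]], list[int]]:
    """Plain k-means. Centroids start at the first k points so results are
    deterministic; production systems would use smarter initialization."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each audience member joins its nearest centroid.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return centroids, labels


def intent_segment_share(points: list[list[float]], k: int = 3, intent_dim: int = 1) -> tuple[int, float]:
    """Treat the cluster with the highest mean on the intent feature (by
    assumption, affiliate click rate at index 1) as the purchase-intent
    segment, and return its index and its share of the whole audience."""
    centroids, labels = kmeans(points, k)
    best = max(range(k), key=lambda c: centroids[c][intent_dim])
    return best, labels.count(best) / len(points)
```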

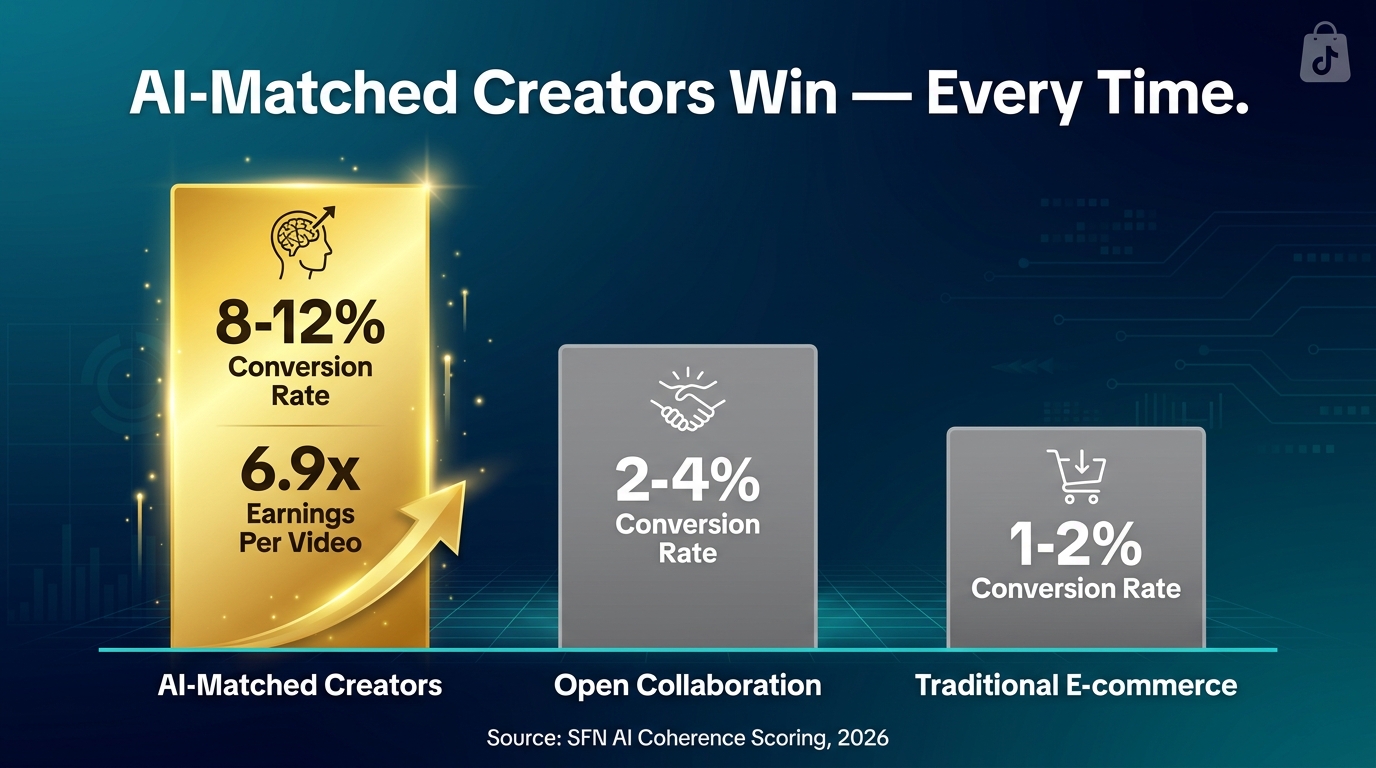

Coherence Scoring: The Metric That Is Changing Everything

Of all the developments in AI-powered creator matching, coherence scoring deserves the most attention — because it is simultaneously the most data-driven and the most actionable signal available to affiliates right now.

Coherence scoring, as implemented by platforms like SFN AI in the TikTok Shop ecosystem, measures the degree to which a creator’s video content logically and thematically aligns with the specific product they are promoting. Not just whether they are in the right niche, but whether the actual video — its hook, its framing, its language, its visual presentation — is genuinely coherent with the product’s use case and target customer.

What the Data Shows

The performance differential created by coherence scoring is striking. Creators who score 90% or above on video-product coherence assessments average 6.9 times higher earnings per video compared to creators in the same product category with lower coherence scores. Targeted collaborations built on high-coherence matches achieve conversion rates of 8–12%, compared to 2–4% for open collaborations where creators are simply given product access without coherence vetting.

These are not marginal improvements. A 6.9x earnings multiple for high-coherence matches, compared to unvetted placements, is the kind of ROI differential that should be driving structural decisions about how affiliate programs are built and managed — not just which creators to invite next month.

Why Coherence Works

The reason coherence scores predict conversion is intuitive once it is spelled out. Audiences do not just watch a creator; they develop a specific expectation for what that creator’s content will address, how it will be framed, and what it signals about the creator’s knowledge and taste. When a product integration is coherent with that established frame, the recommendation feels organic. The audience’s guard is down because the recommendation makes contextual sense.

When the integration is incoherent — a skincare creator suddenly promoting software tools, a gaming creator endorsing a nutrition product they have never referenced before — the recommendation triggers skepticism. Audiences are highly sensitive to out-of-context recommendations, even when they cannot articulate exactly what feels off. Coherence scoring quantifies what audience psychology has always operated on.

Measuring Coherence Without a Platform

For creators and brand managers who do not have access to a formal coherence scoring system, there is a practical approximation. Before a product collaboration is confirmed, ask: if you searched this creator’s last 50 posts for the keywords most associated with this product’s use case, how many would appear organically? If the answer is near zero, the coherence is low. If the keywords, themes, and adjacent topics appear naturally throughout their content history, coherence is high. This manual check is imperfect but catches the most egregious mismatches before a campaign launches.
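
The 50-post keyword check translates directly into a small script. The function names, the newest-first ordering of `posts`, and the cut-off values in `coherence_band` are assumptions for illustration. The text itself only says that a result near zero signals low coherence.

```python
import re


def keyword_coherence(posts: list[str], keywords: list[str], window: int = 50) -> float:
    """Share of the most recent `window` posts that mention at least one
    product keyword. Assumes `posts` is ordered newest first."""
    recent = posts[:window]
    if not recent:
        return 0.0
    # Whole-word, case-insensitive matching for each keyword or phrase.
    patterns = [re.compile(r"\b" + re.escape(k.lower()) + r"\b") for k in keywords]
    hits = sum(1 for post in recent if any(p.search(post.lower()) for p in patterns))
    return hits / len(recent)


def coherence_band(score: float, low: float = 0.05, high: float = 0.3) -> str:
    """Hypothetical cut-offs for turning the share into a verdict."""
    if score <= low:
        return "low"
    if score >= high:
        return "high"
    return "unclear: review manually"
```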

TikTok Shop as the Live Laboratory for AI Matching

No ecosystem has accelerated the development and real-world stress-testing of AI matching tools faster than TikTok Shop. The scale of what has happened there in the last 18 months — and what the data shows — makes it the clearest case study available for understanding where creator-product matching is heading across all affiliate channels.

The Scale of the Problem That Drove the Solution

TikTok Shop’s US GMV hit $23.4 billion in 2026, with global GMV exceeding $80 billion. Affiliates now drive an estimated 42% of the platform’s total GMV. With those numbers came a volume problem: brands trying to recruit and manage hundreds or thousands of creators simultaneously found that manual vetting was simply not viable. The cost of mismatched creator placements (wasted product samples, lost campaign spend, and diluted brand positioning) became large enough to justify significant investment in AI-based matching infrastructure.

The tools that emerged from this pressure — platforms like SFN AI, Stormy AI, and Cruva — were built specifically to solve the TikTok Shop matching problem at scale. Their data is the clearest available picture of what AI matching actually delivers in a high-volume, real-commerce environment.

Case Study: Physician’s Choice

Physician’s Choice, a supplement brand, scaled to more than 2,000 active TikTok Shop affiliate creators and generated $2.4 million in revenue within 28 days by implementing an intelligence-first system from launch. The key was not just recruiting a large number of creators — it was using coherence-based matching to ensure that each creator’s content history was genuinely aligned with the supplement category, the specific product’s use case, and the health-conscious consumer profile the brand served. The volume was the result of precision, not a substitute for it.

Case Study: MySmile

MySmile, a teeth whitening brand, reached seven-figure monthly revenue within three months using 500 active creators, again built on an intelligence-driven matching approach that prioritized creator-product coherence over broad outreach. The brand did not approach creator recruitment as a numbers game. They treated it as a data matching exercise — and the revenue numbers reflect that discipline.

What the Power Law Reveals

TikTok Shop data reveals a sharp power law in creator performance. The top 1% of affiliate creators average $600,000 in annual GMV. The gap between the top performers and median performers is not primarily about follower count. It is about the quality of the match between what the creator authentically talks about, who their audience is, and what the product offers. AI systems that can identify the characteristics of top performers and use them to recruit the next cohort of high-match creators are, in effect, shortening the path to the power law tail.

Micro vs. Macro: What AI Has Learned About Creator Size and Conversion Fit

One of the clearest findings to emerge from AI-powered affiliate analysis is the systematic mispricing of creator size as a matching variable. The affiliate industry has historically treated larger creators as inherently more valuable because the potential reach is higher. AI analysis has exposed this assumption as expensive and often wrong.

Engagement Rate by Creator Tier

The engagement data by creator tier is consistent and clear: nano-creators (1,000–10,000 followers) achieve the highest engagement rates, typically between 3.5% and 8%, with some niche communities exceeding that. Micro-creators (10,000–100,000 followers) maintain 3–5% engagement. Mid-tier creators (100,000–1,000,000) see rates drop to 1.5–3%. Macro and mega creators frequently land between 0.5% and 2%.
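
A quick way to apply these ranges is to compute a creator's engagement rate and compare it with the benchmark for their tier. The sketch below hard-codes the ranges quoted above. It assumes one common definition of engagement rate, average interactions per post divided by followers; platforms differ on the exact formula. The half-open tier boundaries are also an assumption, because the quoted ranges share their endpoints.

```python
# Benchmarks from the ranges above: (tier, min_followers, max_followers, low_rate, high_rate).
# Boundaries are treated as half-open [min, max), an assumption for shared endpoints.
TIERS = [
    ("nano", 1_000, 10_000, 0.035, 0.08),
    ("micro", 10_000, 100_000, 0.03, 0.05),
    ("mid-tier", 100_000, 1_000_000, 0.015, 0.03),
    ("macro/mega", 1_000_000, float("inf"), 0.005, 0.02),
]


def engagement_rate(interactions: int, posts: int, followers: int) -> float:
    """Average interactions per post divided by follower count (one common definition)."""
    return interactions / posts / followers


def benchmark(followers: int, rate: float) -> tuple[str, str]:
    """Return the creator's tier and whether their rate sits below, within, or above it."""
    for name, lo, hi, low_rate, high_rate in TIERS:
        if lo <= followers < hi:
            if rate < low_rate:
                return name, "below tier range"
            if rate > high_rate:
                return name, "above tier range"
            return name, "within tier range"
    return "under 1,000", "no benchmark"
```

As the text notes, a within-range result says nothing about engagement quality; it only locates a creator relative to their tier.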

What this means in practice: for products where conversion depends on authentic recommendation and audience trust — which describes the majority of affiliate marketing — a micro-creator with a deeply engaged, niche-aligned audience will frequently outperform a macro-creator with a broader but shallower relationship with their audience. Micro-creators with aligned niches achieve up to 28% better conversion rates than macro creators in equivalent product categories, according to 2026 affiliate benchmarks.

The Cost Efficiency Angle

Beyond conversion rates, there is a pure cost efficiency argument for smaller, well-matched creators. Micro-creators typically cost 60–70% less per campaign than macro-creators. When that cost advantage is combined with equal or superior conversion rates, the ROI differential becomes substantial. A brand that allocates budget across 20 precisely matched micro-creators rather than 2 macro-creators is spreading risk, increasing creative diversity, and often achieving better aggregate performance — assuming the matching is rigorous.
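
The cost argument ultimately reduces to arithmetic: cost per conversion equals total fees divided by expected conversions. The hypothetical `Plan` structure below lets you compare a micro-creator roster and a macro-creator roster side by side. Every input (fee, click volume, conversion rate) is an assumption you would replace with your own program's data.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    creators: int
    cost_per_creator: float    # hypothetical fee per creator per campaign
    clicks_per_creator: int    # hypothetical expected affiliate clicks per creator
    conversion_rate: float     # expected share of clicks that become purchases

    def total_cost(self) -> float:
        return self.creators * self.cost_per_creator

    def conversions(self) -> float:
        return self.creators * self.clicks_per_creator * self.conversion_rate

    def cost_per_conversion(self) -> float:
        expected = self.conversions()
        # Guard against plans that are expected to convert nobody.
        return self.total_cost() / expected if expected else float("inf")
```

For example, `Plan(creators=2, cost_per_creator=10_000, clicks_per_creator=20_000, conversion_rate=0.01)` works out to $50 per conversion. Whether a 20-creator micro roster beats that depends entirely on the fees, click volumes, and conversion rates you feed in.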

This is where AI becomes necessary rather than optional. Manually identifying 20 high-quality micro-creator matches, vetting their content history, assessing audience behavior, and building collaboration briefs is operationally intensive. AI matching systems make that process viable at scale, which is why the shift toward micro-creator programs is accelerating alongside the adoption of AI matching tools.

When Macro Still Wins

AI matching analysis has not invalidated macro-creator partnerships — it has clarified when they make sense. For product launches where awareness generation is the primary goal, where the product has broad demographic appeal, or where brand safety and production quality are paramount, macro-creators retain clear advantages. The error that AI analysis corrects is the assumption that macro is always better. The right answer is situational, and AI systems are increasingly capable of recommending creator tier as part of the match output, not just individual creator selection.

Beyond Follower Count: The New Data Hierarchy for Affiliate Decisions

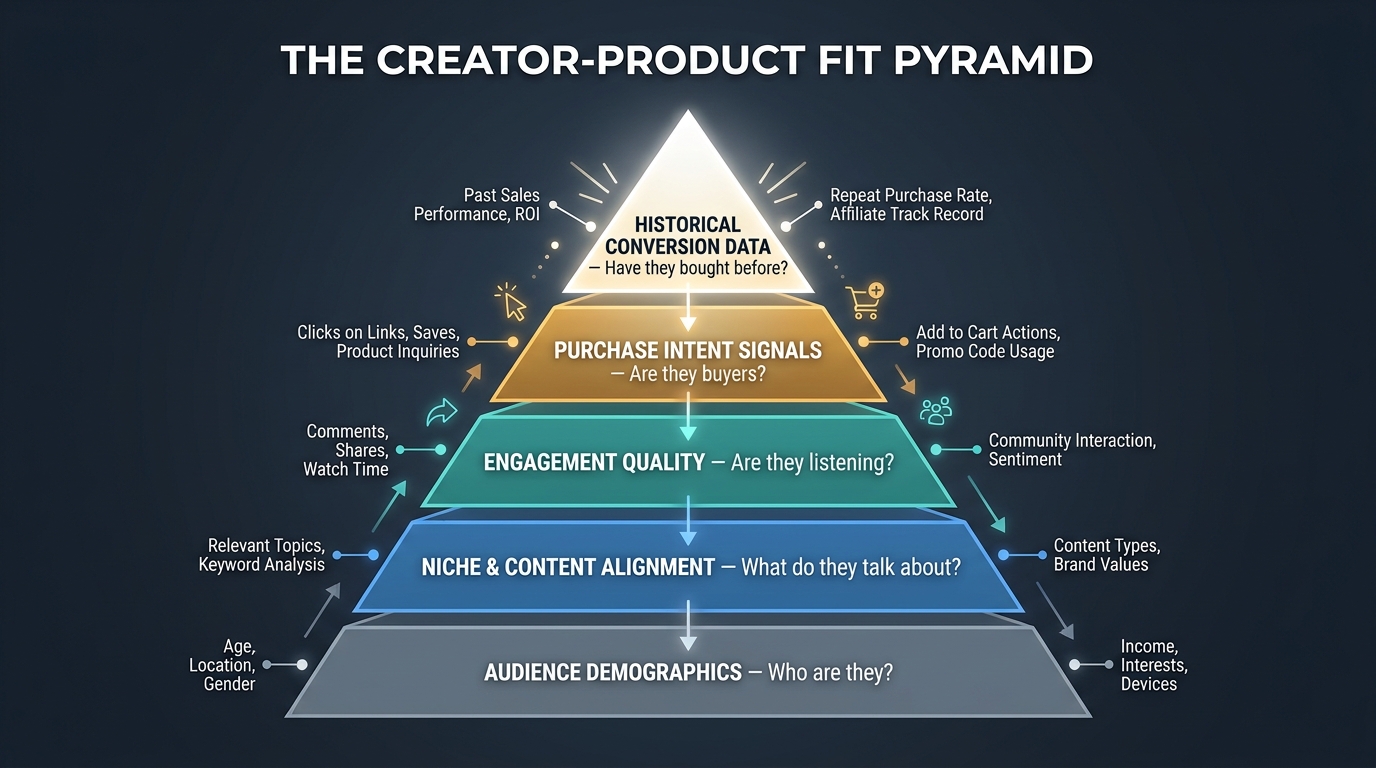

If follower count is no longer the primary matching signal, what replaces it? The emerging consensus from AI matching platforms, performance data, and affiliate marketing research points to a clear hierarchy of signals ordered by their predictive power for conversion outcomes.

Tier 1: Historical Conversion Data

This sits at the top of the hierarchy because it is the most direct evidence of what a creator’s audience will actually do. A creator who has driven measurable conversions for products in adjacent categories — even at modest volume — is a far better match candidate than a large-following creator with no conversion track record in any affiliate context. Historical conversion data tells you whether the relationship between creator and audience has the commercial dimension that affiliate marketing requires.

The challenge is that this data is often siloed within platforms, programs, or brand-specific tracking systems. AI matching platforms that can aggregate across these sources have a significant competitive advantage in prediction accuracy.

Tier 2: Purchase Intent Signals from Audience Behavior

Second in predictive power are real-time and historical signals of purchase intent within a creator’s audience. This includes data on what the audience clicks on when affiliate links are present, how they engage with product review content versus entertainment content, and behavioral patterns that suggest an active shopping mindset rather than passive content consumption.

A creator whose audience consistently demonstrates active shopping behavior — even if their overall follower count is modest — is fundamentally more valuable for affiliate purposes than one whose audience is highly engaged with entertainment content but shows low commercial activation.

Tier 3: Engagement Quality and Community Depth

Engagement quality — as distinct from engagement quantity — ranks third. The key signals here are comment authenticity, reply patterns, the degree to which audience members reference creator recommendations in their own comments, and whether the community shows signs of reciprocal trust rather than one-way consumption. These are the signals that indicate whether a creator’s recommendation power is real or merely superficial.

Tier 4: Niche and Semantic Content Alignment

The depth of semantic alignment between a creator’s actual content and a product’s category and use case ranks fourth. This is where coherence scoring lives. A creator who discusses the specific problem a product solves — not just the broad category — represents a stronger natural match than one who covers the category at a surface level.

Tier 5: Audience Demographics and Psychographics

Demographic matching — age, gender, income, location — sits at the base of the hierarchy. It is necessary but not sufficient. A creator whose audience is demographically correct but behaviorally mismatched (they watch but do not buy) will underperform against a creator whose demographics are slightly off but whose audience is commercially activated and niche-aligned. Demographics are a filter, not a predictor.
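
One way to put this hierarchy into practice is a weighted score. Demographics act as a pass/fail gate, and the remaining four tiers contribute in descending order of weight. The weights below are hypothetical and only encode the ordering described above. Each input signal is assumed to be pre-normalized to the 0 to 1 range. When a signal is missing, such as conversion history for a new creator, the sketch drops it and renormalizes the remaining weights rather than treating the gap as a zero.

```python
from typing import Optional

# Hypothetical weights that only preserve the tier ordering described above.
WEIGHTS = {
    "historical_conversion": 0.4,
    "purchase_intent": 0.3,
    "engagement_quality": 0.2,
    "semantic_alignment": 0.1,
}


def match_score(signals: dict[str, Optional[float]], demographics_ok: bool) -> float:
    """Combine normalized [0, 1] signals into one score.
    Demographics are a filter, not a weighted predictor."""
    if not demographics_ok:
        return 0.0
    # Skip missing signals (None) and renormalize over what remains.
    available = {k: w for k, w in WEIGHTS.items() if signals.get(k) is not None}
    if not available:
        return 0.0
    total_weight = sum(available.values())
    return sum(w * signals[k] for k, w in available.items()) / total_weight
```

A creator with strong conversion and intent signals but middling engagement outranks one with the reverse profile, which mirrors the point that demographics and surface engagement are weaker predictors than commercial behavior.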

How Affiliates Can Signal Better Fit to AI Systems

Most conversations about AI matching are written from the brand or platform perspective — how to use AI to find better creators. But creators who understand how these systems work can actively position themselves to appear in the right match results, receive better-fit product offers, and build the track record that AI systems increasingly rely on for high-confidence recommendations.

Niche Depth Over Niche Breadth

The single most impactful thing a creator can do to improve their AI match visibility for relevant products is to go deeper into their niche rather than broader. A creator who covers general “personal finance” generates embedding vectors that are diffuse and hard to match with precision. A creator who specifically covers “debt payoff strategies for households in the $50,000–$100,000 income range” generates much sharper embedding signals that match clearly with specific financial products targeting that demographic.

This runs counter to some creators’ instincts, which push them toward broader content to maximize potential audience size. But in a world where AI matching systems are increasingly gatekeeping which affiliate opportunities reach which creators, niche depth is a matchability asset that directly translates into better partnership opportunities and higher conversion rates when those partnerships materialize.

Build a Trackable Conversion History

Creators who have affiliate links but have never actively tracked or shared their conversion data are invisible to AI systems that depend on conversion history for high-confidence matching. Even participating in small affiliate programs and generating modest but real conversion data starts building the signal that platforms need to assign a meaningful predictive score.

Practically, this means accepting lower-commission partnerships with well-matched products early in a creator’s career, treating those campaigns as data-building exercises rather than pure revenue events. The conversion history created by those early campaigns feeds into future matching algorithms and can substantially improve the quality of offers received over time.

Make Your Audience Behavior Readable

AI systems match based on what they can observe. Creators whose audience behavior is concentrated on a platform with rich behavioral data — where audience members click affiliate links, engage with product content, and show purchase signals — are more matchable than creators who spread their audience thinly across multiple platforms with limited shared data.

This does not mean ignoring multi-platform strategies. It means ensuring that the platform where affiliate content is primarily distributed has strong enough behavioral data collection that matching systems can actually build an accurate picture of the creator’s audience purchasing behavior.

Consistency Compounds

AI matching systems are pattern-recognition engines at their core. A creator who produces consistent content in a clearly defined niche, with predictable posting frequency and stable audience engagement patterns, is far more matchable than one whose content is erratic, whose audience varies dramatically in engagement, or whose niche signals are inconsistent week to week.

Consistency does not mean repetitiveness — it means the algorithmic representation of your content and audience is stable enough for a matching system to make a reliable prediction. That stability is itself a competitive advantage in an ecosystem where matching quality determines which partnership opportunities a creator sees.

The Risks Nobody Talks About When AI Matching Goes Wrong

AI matching systems are powerful and, when working correctly, represent a genuine improvement over legacy manual processes. But they carry specific failure modes that brands and affiliates both need to understand before relying on them uncritically.

Overfitting to Historical Data

The most common failure mode in AI matching is overfitting: the system becomes so optimized toward the patterns of past top performers that it systematically fails to identify new creator types who could be highly effective but do not yet look like historical winners. This creates a kind of algorithmic conservatism that reinforces existing winner categories and makes it harder for creators in emerging niches or formats to break through.

For brands, this means AI matching should be treated as a starting filter, not a final decision system. Human review of match recommendations — especially for creators in adjacent or emerging niches — remains important for catching high-potential opportunities that algorithmic systems may be slow to recognize.

Data Quality Problems Upstream

AI matching is only as good as the data flowing into it. When historical conversion data contains fraud (invalid affiliate clicks, incentivized traffic, bot-generated engagement), that contaminated data can produce confident-looking but fundamentally incorrect match recommendations. Platforms that do not have robust fraud detection at the data ingestion layer will produce matching scores that look precise but are calibrated against corrupted signals.
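
Here is a minimal sketch of the kind of ingestion-layer filtering described above, applied before any conversion rate feeds a match score. It uses three rules: self-declared bot user agents, too many clicks from one IP address, and conversions recorded implausibly soon after the click. These rules, the `Click` record, and the thresholds are all hypothetical; real fraud detection uses far richer signals.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Click:
    ip: str
    user_agent: str
    converted: bool
    seconds_to_convert: Optional[float] = None


BOT_MARKERS = ("bot", "crawler", "spider")  # hypothetical user-agent markers


def clean_clicks(clicks: list[Click], max_clicks_per_ip: int = 3, min_seconds: float = 5.0) -> list[Click]:
    """Drop records matching simple, hypothetical fraud heuristics."""
    per_ip = Counter(c.ip for c in clicks)
    kept = []
    for c in clicks:
        if any(marker in c.user_agent.lower() for marker in BOT_MARKERS):
            continue  # self-declared automated traffic
        if per_ip[c.ip] > max_clicks_per_ip:
            continue  # one address producing an implausible number of clicks
        if c.converted and c.seconds_to_convert is not None and c.seconds_to_convert < min_seconds:
            continue  # purchase recorded implausibly soon after the click
        kept.append(c)
    return kept


def conversion_rate(clicks: list[Click]) -> float:
    """Share of clicks that converted (0.0 for an empty list)."""
    return sum(c.converted for c in clicks) / len(clicks) if clicks else 0.0
```

Comparing `conversion_rate(clicks)` with `conversion_rate(clean_clicks(clicks))` shows how much of a creator's apparent performance survives the filter. A large gap is a reason to distrust any match score built on the raw figure.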

Brands using AI matching platforms should ask specifically about data quality controls, fraud detection in historical conversion data, and how the system handles creators whose past performance data is thin or inconsistent before trusting high-confidence match scores at face value.

The Diversity Risk

AI matching systems optimize for fit — and in doing so, can inadvertently narrow the creator pool that a brand works with. If the IPP is built from existing top performers and the system only recommends lookalikes, the program will tend to converge toward an increasingly homogeneous creator base over time. This creates fragility: if that creator archetype falls out of favor with audiences, the entire program is exposed simultaneously.

Intentional diversity in creator recruitment — across content style, audience demographics, and creator size — is a risk management strategy as much as a values statement. The best AI-assisted affiliate programs use algorithmic matching for efficiency while preserving human judgment for strategic diversification.
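
A simple way to keep algorithmic matching from converging on one archetype is to reserve a fixed share of roster slots for creators outside the archetypes already covered. The sketch below is a hypothetical illustration. Each candidate carries an archetype label assumed to be assigned by a human reviewer, and `reserve_share` is a judgment call rather than a recommended value.

```python
def build_roster(candidates: list[tuple[str, str, float]], slots: int, reserve_share: float = 0.2) -> list[str]:
    """candidates: (name, archetype, match_score), names assumed unique.
    Fills most slots by score, then reserves a share for archetypes the
    top picks do not already cover."""
    ranked = sorted(candidates, key=lambda c: c[2], reverse=True)
    reserved = int(slots * reserve_share)
    core = ranked[: slots - reserved]
    covered = {archetype for _, archetype, _ in core}
    roster = [name for name, _, _ in core]
    # Reserved slots go to the best-scoring creator of each uncovered archetype.
    for name, archetype, _ in ranked[slots - reserved:]:
        if len(roster) == slots:
            break
        if archetype not in covered:
            roster.append(name)
            covered.add(archetype)
    # If too few new archetypes exist, fill any remaining slots by score.
    for name, _, _ in ranked:
        if len(roster) == slots:
            break
        if name not in roster:
            roster.append(name)
    return roster
```

The algorithm still does the ranking; the reserve simply guarantees that human-defined diversity goals get a fixed share of slots instead of competing head to head with lookalike scores.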

The Signal Gaming Problem

As AI matching becomes more central to who receives affiliate partnership offers, some creators will attempt to game the signals — artificially inflating engagement, using keyword-stuffed content to appear in semantic matches, or misrepresenting audience demographics to matching systems. This is the affiliate equivalent of SEO keyword stuffing, and it creates the same problem: short-term visibility gains at the cost of long-term trust and performance credibility.

Platforms are aware of this risk and are increasingly giving more weight in their matching algorithms to signals that are harder to game, such as actual historical conversion data and behavioral patterns observed over extended periods. But gaming attempts will likely accelerate as AI matching becomes more consequential, so brands need detection capabilities alongside matching capabilities.
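A rough heuristic for one common gaming pattern, engagement that rises without any matching commercial response, assuming weekly engagement and conversion-rate series are available per creator. The window length and both ratios are hypothetical defaults; real platforms may weigh these signals very differently, and a flag here is a prompt for review, not proof of manipulation.

```python
from statistics import mean


def engagement_conversion_divergence(
    weekly_engagement: list[float],
    weekly_conversion_rate: list[float],
    recent_weeks: int = 4,
    spike_ratio: float = 2.0,
    min_conversion_lift: float = 1.2,
) -> bool:
    """Flag when recent engagement jumps but conversion rate does not follow.

    Compares the mean of the last `recent_weeks` against the baseline before it.
    Returns True if engagement rose by at least `spike_ratio`x while conversion
    rate rose by less than `min_conversion_lift`x.
    """
    if len(weekly_engagement) != len(weekly_conversion_rate):
        raise ValueError("series must cover the same weeks")
    if len(weekly_engagement) <= recent_weeks:
        raise ValueError("need baseline weeks before the recent window")
    base_eng = mean(weekly_engagement[:-recent_weeks])
    recent_eng = mean(weekly_engagement[-recent_weeks:])
    base_cvr = mean(weekly_conversion_rate[:-recent_weeks])
    recent_cvr = mean(weekly_conversion_rate[-recent_weeks:])
    engagement_spiked = recent_eng > 0 and recent_eng >= base_eng * spike_ratio
    conversions_followed = recent_cvr > 0 and recent_cvr >= base_cvr * min_conversion_lift
    return engagement_spiked and not conversions_followed


# Hypothetical creator: engagement triples in the last 4 weeks, conversion rate flat at 2%.
print(engagement_conversion_divergence([1000.0] * 8 + [3000.0] * 4, [0.02] * 12))  # True
```

Leaning on a long baseline and on conversions, rather than engagement alone, mirrors the harder-to-game signals described above: inflating likes is cheap, but inflating real purchases over months is not.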

Building a Creator-Product Matching Framework You Can Actually Use

For brands and affiliate program managers who do not yet have access to a fully automated AI matching platform — or who want to supplement platform recommendations with their own analytical layer — there is a practical framework that captures the most important matching signals without requiring sophisticated technology infrastructure.

Step 1: Define Your Ideal Converter, Not Your Ideal Creator

Most affiliate programs start by describing what they want in a creator: follower count, engagement rate, content quality. The more useful starting point is describing the customer who has the highest lifetime value, the lowest churn rate, and the strongest retention signals in your existing data. What content do they consume? What problems were they trying to solve when they found your product? What language do they use to describe their purchase decision?

That customer profile is your real matching target. You are looking for creators whose audiences contain large concentrations of people matching that profile — not creators who superficially look appealing based on reach or aesthetics.
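If customer records can be tagged with traits (the `traits` field and the example tags below are hypothetical), Step 1 reduces to a lift calculation: which traits are much more common among retained, high-lifetime-value customers than among customers overall? A minimal sketch, assuming lifetime value and churn status are already known per customer:

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Customer:
    lifetime_value: float
    churned: bool
    # Hypothetical tags, e.g. content they consume or the problem they cited at purchase.
    traits: set[str] = field(default_factory=set)


def ideal_converter_traits(
    customers: list[Customer], top_fraction: float = 0.2, min_lift: float = 1.5
) -> dict[str, float]:
    """Traits over-represented among the highest-value retained customers.

    lift = (share of top customers with the trait) / (share of all customers with it)
    """
    retained = sorted(
        (c for c in customers if not c.churned),
        key=lambda c: c.lifetime_value,
        reverse=True,
    )
    top = retained[: max(1, int(len(retained) * top_fraction))]
    if not top:
        return {}
    overall = Counter(t for c in customers for t in c.traits)
    in_top = Counter(t for c in top for t in c.traits)
    lifts = {}
    for trait, n in in_top.items():
        lift = (n / len(top)) / (overall[trait] / len(customers))
        if lift >= min_lift:
            lifts[trait] = round(lift, 2)
    return dict(sorted(lifts.items(), key=lambda kv: kv[1], reverse=True))


customers = (
    [Customer(900, False, {"home_workouts", "injury_recovery"}),
     Customer(800, False, {"home_workouts", "injury_recovery"})]
    + [Customer(100, False, {"home_workouts"}) for _ in range(3)]
    + [Customer(100, False, {"fashion"}) for _ in range(5)]
)
print(ideal_converter_traits(customers))  # {'injury_recovery': 5.0, 'home_workouts': 2.0}
```

High-lift traits, such as the invented `injury_recovery` tag here, describe the audience you want creators to reach. A trait shared by nearly everyone (lift near 1) says little about who converts best.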

Step 2: Audit Content for Semantic Alignment

Once candidate creators are identified, conduct a semantic audit of their last 60–90 days of content. Are the core themes, vocabulary, and topics genuinely adjacent to your product’s value proposition? Do they reference the problems your product solves — not generically, but specifically? Do their audience members ask questions in comments that your product would answer?

This can be done manually with a structured content review checklist, or partially automated using AI content analysis tools that can scan a creator’s post history and generate a topic distribution summary. The objective is to produce a coherence assessment before investing in outreach or sample inventory.
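The partially automated version of this audit can be sketched with plain term counts: summarize a creator's most frequent topics, then compute cosine similarity against the product's value proposition. This is a deliberately simple bag-of-words stand-in, and the stopword list, posts, and product text are invented. Dedicated AI tools typically rely on embeddings, which would also recognize that "tracking" and "tracks" mean the same thing; this sketch does not.

```python
import math
import re
from collections import Counter

# Tiny illustrative stopword list; a real audit would use a fuller one.
STOPWORDS = {"a", "an", "and", "the", "to", "of", "for", "in", "on", "my",
             "is", "it", "that", "this", "with", "why", "after"}


def term_counts(text: str) -> Counter:
    """Lowercased word counts with stopwords removed (no stemming)."""
    words = re.findall(r"[a-z']+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def coherence_report(posts: list[str], value_prop: str, top_n: int = 5) -> dict:
    """Topic distribution of a creator's posts plus its similarity to a value proposition."""
    creator_terms = Counter()
    for post in posts:
        creator_terms.update(term_counts(post))
    return {
        "top_topics": [term for term, _ in creator_terms.most_common(top_n)],
        "coherence": round(cosine(creator_terms, term_counts(value_prop)), 3),
    }


value_prop = "A wearable that tracks sleep stages and recovery"
sleep_creator = [
    "My sleep routine for better sleep",
    "Tracking sleep and recovery after late nights",
    "Why deep sleep matters for recovery",
]
style_creator = ["Outfit ideas for spring", "Thrift haul and styling tips"]
print(coherence_report(sleep_creator, value_prop)["coherence"])  # 0.516
print(coherence_report(style_creator, value_prop)["coherence"])  # 0.0
```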

Step 3: Score on Commercial Activation, Not Just Engagement

Pull any available data on a creator’s history with affiliate or commercial content. Do their product-related posts perform differently from their editorial content — positively or negatively? Have they received comments from followers indicating actual purchases driven by recommendations? Are their affiliate links (if publicly visible) generating click data that suggests commercial activation?

Commercial activation — the tendency of an audience to take purchasing action in response to a creator’s recommendations — is distinct from general engagement. A creator with 50,000 highly engaged followers who never click on anything commercial is less valuable as an affiliate partner than a creator with 30,000 followers whose audience consistently responds to product recommendations with purchase behavior.
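The difference between engagement and commercial activation becomes concrete once both are normalized by audience size. A minimal sketch with made-up numbers (none of these figures come from real creators), assuming purchases can be attributed to each creator, for example through tracked affiliate links:

```python
from dataclasses import dataclass


@dataclass
class CreatorHistory:
    followers: int
    attributed_purchases: int  # purchases traced to the creator's recommendations
    commercial_engagement: float  # average engagement rate on product posts
    editorial_engagement: float  # average engagement rate on non-commercial posts


def purchases_per_thousand_followers(h: CreatorHistory) -> float:
    """Commercial activation normalized by audience size."""
    return 1000 * h.attributed_purchases / h.followers


def commercial_engagement_ratio(h: CreatorHistory) -> float:
    """Below 1.0 means the audience engages less when content turns commercial."""
    return h.commercial_engagement / h.editorial_engagement


# Hypothetical figures echoing the 50,000 vs 30,000 follower comparison above.
broad = CreatorHistory(50_000, 20, 0.02, 0.08)
niche = CreatorHistory(30_000, 90, 0.06, 0.07)
print(purchases_per_thousand_followers(broad))  # 0.4
print(purchases_per_thousand_followers(niche))  # 3.0
```

On these invented numbers the smaller creator drives 7.5 times as many purchases per thousand followers, and their audience's engagement barely dips on product posts (a ratio of about 0.86, versus 0.25 for the larger creator).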

Step 4: Start with a Structured Test Before Full Commitment

Regardless of how confident a match assessment is, the cost of a false positive is real: product samples, briefing time, campaign spend, and the opportunity cost of deploying budget on a partnership that underperforms. A structured pilot approach — a single campaign with clear KPIs and a defined decision threshold — before committing to a long-term partnership reduces this risk significantly.

Define in advance what a successful test looks like in terms of conversion rate, click-through rate, and content coherence quality. Review the results against those thresholds before scaling. The test-first discipline is not a lack of confidence in the matching assessment; it is the mechanism by which the matching system generates the conversion data that makes future predictions more accurate.
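Writing the decision rule down before launch is what keeps a pilot honest. A minimal sketch, where the threshold values, the 100-click minimum, and the three-way scale/stop/extend outcome are illustrative assumptions rather than recommended benchmarks:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotThresholds:
    min_conversion_rate: float  # conversions / clicks
    min_click_through_rate: float  # clicks / impressions
    min_coherence: float  # reviewer's 0-1 content coherence score


@dataclass(frozen=True)
class PilotResult:
    impressions: int
    clicks: int
    conversions: int
    coherence: float


def pilot_decision(
    r: PilotResult, t: PilotThresholds, min_clicks: int = 100
) -> tuple[str, list[str]]:
    """Return ("scale" | "stop" | "extend", list of thresholds missed)."""
    if r.clicks < min_clicks:
        return "extend", []  # not enough clicks to judge conversion rate either way
    checks = {
        "conversion_rate": r.conversions / r.clicks >= t.min_conversion_rate,
        "click_through_rate": r.impressions > 0
        and r.clicks / r.impressions >= t.min_click_through_rate,
        "coherence": r.coherence >= t.min_coherence,
    }
    missed = [name for name, passed in checks.items() if not passed]
    return ("scale" if not missed else "stop"), missed


thresholds = PilotThresholds(0.02, 0.01, 0.7)
print(pilot_decision(PilotResult(20_000, 400, 10, 0.8), thresholds))  # ('scale', [])
print(pilot_decision(PilotResult(20_000, 400, 4, 0.8), thresholds))  # ('stop', ['conversion_rate'])
```

Returning the list of missed thresholds, not just a verdict, records why a pilot failed, which is exactly the information Step 5 needs.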

Step 5: Feed Results Back Into the System

The final and most important step is closing the loop. The campaign data generated by each creator partnership — conversion rates, audience response patterns, content performance — needs to feed back into the matching framework to improve future predictions. This is true whether the “system” is a sophisticated AI platform or a well-maintained spreadsheet model.

Programs that track and analyze what made their top-performing partnerships successful, and systematically apply those insights to future recruiting, compound their matching accuracy over time. Programs that treat each campaign as a standalone event lose the institutional learning that drives continuous improvement.
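Even a spreadsheet-scale system can close the loop with a standard Bayesian technique: keep a Beta-Binomial estimate of each creator archetype's conversion rate and update it after every campaign. The archetype keys and the 1% prior are hypothetical. The conjugate update itself is textbook, offered here as one reasonable choice rather than what any particular platform does.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ArchetypeBelief:
    """Beta(alpha, beta) belief about a creator archetype's conversion rate."""

    alpha: float = 1.0  # prior pseudo-conversions
    beta: float = 99.0  # prior pseudo-non-conversions (prior mean = 1%)

    def update(self, clicks: int, conversions: int) -> None:
        """Fold one campaign's results into the belief (conjugate Beta-Binomial update)."""
        if not 0 <= conversions <= clicks:
            raise ValueError("conversions must be between 0 and clicks")
        self.alpha += conversions
        self.beta += clicks - conversions

    @property
    def expected_rate(self) -> float:
        """Posterior mean conversion rate."""
        return self.alpha / (self.alpha + self.beta)


beliefs = defaultdict(ArchetypeBelief)  # hypothetical archetype name -> belief
beliefs["sleep_micro"].update(clicks=500, conversions=20)
beliefs["sleep_micro"].update(clicks=300, conversions=9)
beliefs["lifestyle_macro"].update(clicks=2_000, conversions=16)
print(round(beliefs["sleep_micro"].expected_rate, 4))  # 0.0333
print(round(beliefs["lifestyle_macro"].expected_rate, 4))  # 0.0081
```

The prior keeps a single lucky campaign from dominating, while archetypes with more campaign history earn estimates that rest on their own data rather than on the prior.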

Matching Is Now a Competitive Moat — If You Build It Deliberately

The affiliate marketing industry is in the early stages of a genuine structural change. The question is no longer whether to use data and AI in creator-product matching — it is how quickly brands and creators adapt to a world where the quality of the match is the primary determinant of affiliate program performance, not the volume of creator relationships or the size of the commission structure.

The data makes the direction clear. AI-matched creator placements achieve conversion rates 2–4 times higher than unvetted open collaborations. Coherence-scored placements generate nearly 7 times the earnings of random assignments. Micro-creators with deep niche alignment outperform macro-creators by 28% in conversion rates while costing 60–70% less per placement. These are not marginal refinements; they are the kind of performance differentials that separate programs that grow sustainably from those that plateau or quietly dissolve.

What This Means for Brands

Brands that invest in matching quality — whether through platform partnerships, internal data infrastructure, or rigorous manual frameworks — are building a compounding advantage. Every well-matched campaign generates conversion data that improves the next round of matching. Every performance review that feeds back into the IPP refines the model. Over 12–18 months, a brand operating with a data-driven matching system and one operating with legacy intuition-based recruiting will have affiliate program performance profiles that look completely different, even if they started from the same place.

The investment required to build this capability is front-loaded. The infrastructure, the data habits, and the analytical discipline are not trivial to establish. But the programs that do establish them are building something that is genuinely difficult for competitors to replicate quickly — because the real advantage is not the technology itself but the institutional learning and data accumulation that the technology enables.

What This Means for Affiliates

For creators, the implications are equally significant. The era of broadcasting affiliate links broadly and hoping something converts is being replaced by a world where the creators who are findable by AI matching systems — because they have sharp niche signals, consistent content, and trackable conversion history — will receive a disproportionate share of the best partnership opportunities. The affiliate ecosystem is becoming merit-based in a new sense: not merit as measured by follower count, but merit as measured by the depth and quality of creator-audience-product alignment.

Creators who understand this and actively build toward it — by deepening their niche, building their conversion track record, and making their audience behavior readable to the systems that allocate partnership opportunities — are positioning themselves well. Those who continue optimizing primarily for reach and follower count without attention to commercial activation and niche coherence will find themselves increasingly invisible to the programs that offer the most favorable terms.

The Honest Caveat

AI matching is not a solved problem. The systems are improving rapidly, but they still carry the failure modes described above: overfitting to historical winners, vulnerability to data quality problems, risk of creator pool homogenization, and exposure to gaming as the stakes of matching rise. Human judgment remains essential — not to override data, but to ask the questions that data alone cannot answer: Is this creator genuinely excited about this product? Does the partnership make narrative sense for where this creator is going? Is there creative chemistry that a coherence score cannot capture?

The best affiliate programs in 2026 will be the ones that treat AI matching as a powerful tool operating within a human strategic framework — not as a replacement for the human judgment that understands context, creative potential, and long-term relationship value. The signal matters. So does the person reading it.

Actionable Takeaways

- Audit your current affiliate roster for coherence, not just reach. How many of your active creator partnerships would score above 80% on a content-product coherence assessment? The ones that would not are costing you more than you realize.

- Build your Ideal Partner Profile from your best converters, not your highest-traffic referrers. Identify the shared signals — content focus, audience behavior, posting patterns — and use those to drive future recruitment.

- If you are a creator, spend the next 90 days going deeper into your niche rather than broader. The sharpness of your content signal is the primary factor that determines how well AI matching systems can find you for the right partnerships.

- Treat every small affiliate campaign as data infrastructure. The conversion data you generate now is the training signal that improves your match predictions — and your match visibility — in every future program you participate in.

- Implement a coherence check as a mandatory step before confirming any creator collaboration. If you cannot articulate in two sentences why this creator’s specific content history makes them a natural fit for this specific product, the match has not been validated yet.

- Ask your matching platform about data quality controls. The confidence of an AI match score is only meaningful if the historical data behind it is clean. Know what fraud detection, data validation, and anomaly handling your platform is running before trusting its outputs at scale.
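The first takeaway translates into a simple roster audit. A minimal sketch, assuming you already hold a 0-1 coherence score for each partnership (the scores, spend figures, and creator names below are invented). Reporting the share of spend tied up in partnerships that do not score above 80% puts a number on "costing you more than you realize".

```python
from typing import NamedTuple


class Partnership(NamedTuple):
    creator: str
    coherence: float  # 0-1 content-product coherence score from your own assessment
    monthly_spend: float  # commissions, fees, and samples committed to the partnership


def coherence_audit(
    roster: list[Partnership], threshold: float = 0.80
) -> tuple[list[str], float]:
    """Creators not scoring above `threshold`, and the share of spend they absorb."""
    flagged = [p for p in roster if p.coherence <= threshold]
    total_spend = sum(p.monthly_spend for p in roster)
    flagged_spend = sum(p.monthly_spend for p in flagged)
    share = flagged_spend / total_spend if total_spend else 0.0
    return sorted(p.creator for p in flagged), share


roster = [
    Partnership("creator_a", 0.91, 1_200),
    Partnership("creator_b", 0.55, 800),
    Partnership("creator_c", 0.78, 1_000),
]
names, spend_share = coherence_audit(roster)
print(names, spend_share)  # ['creator_b', 'creator_c'] 0.6
```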

The signal behind the sale has always existed. What has changed is our ability to read it, systematize it, and act on it before a single piece of content is created. That capability, built deliberately and improved continuously, is what will separate the affiliate programs that thrive over the next three years from those still running on the same matching logic as the previous decade.