Every brand team has a version of the same story. Someone on the marketing side spends three weeks scrolling through Instagram, building a spreadsheet of potential creators, eyeballing follower counts, and watching a handful of videos before making a gut-call decision. A deal is struck. Content goes live. The results land somewhere between disappointing and disastrous — and nobody can quite explain why.

That story is now expensive enough to matter. The influencer marketing industry reached $32.55 billion in spend in 2025, up 35.6% year-over-year, with projections for 2026 pushing even higher as the broader creator economy approaches an estimated $214–$314 billion in total ecosystem value. At that scale, guessing which creators fit which products isn’t just inefficient — it’s financially reckless.

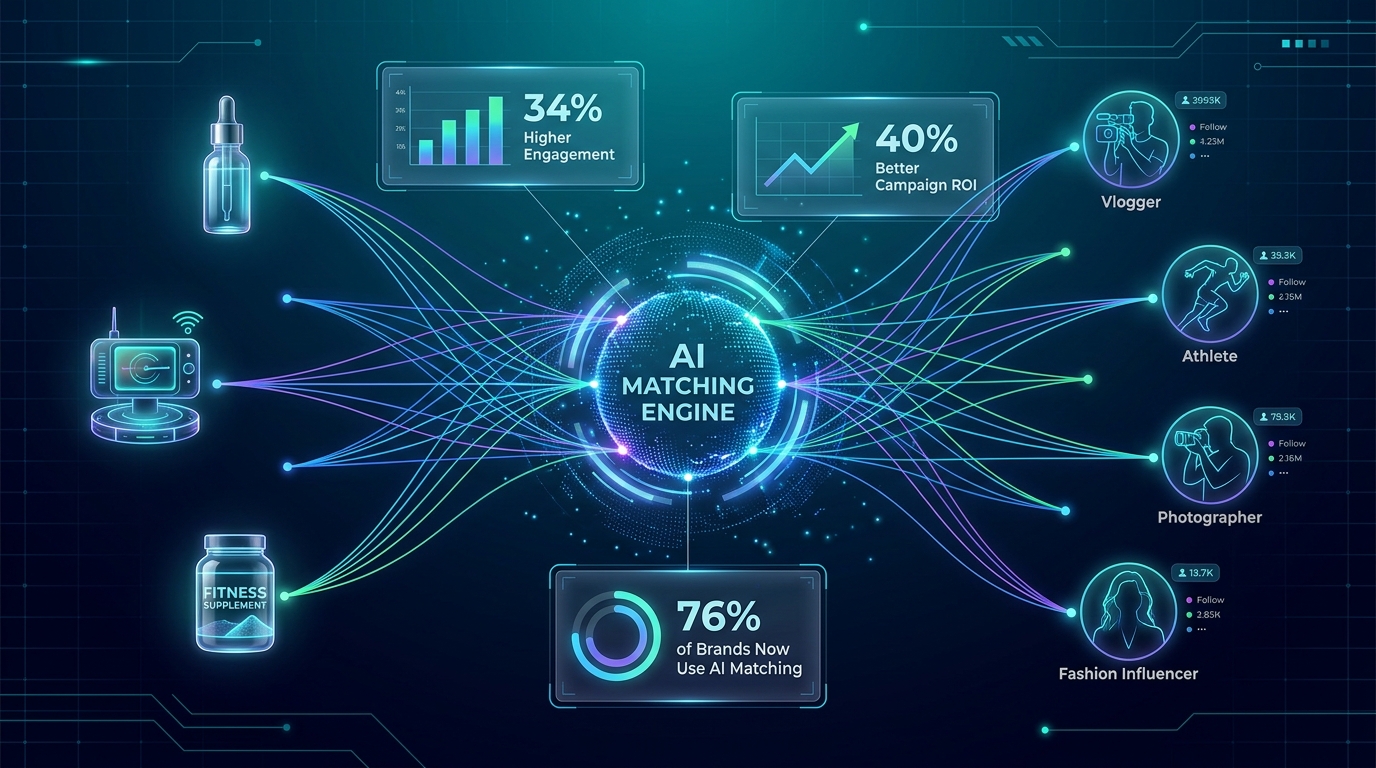

AI-powered creator-product matching has moved from a niche capability to a core operational function. 76% of brands now use some form of automated creator matching, and those campaigns are delivering measurable performance advantages over manually curated partnerships. But the interesting story isn’t simply “AI is better than humans.” It’s why the old system was structurally broken, what the algorithms are actually measuring, where they still fall short, and how brands that understand the technology at a deeper level are pulling ahead of those treating it as a black box.

This article breaks all of that down — from the data signals being processed to the attribution problems finally being solved, to the genuine tensions that still require human judgment.

Why Follower Count Was Always the Wrong Signal

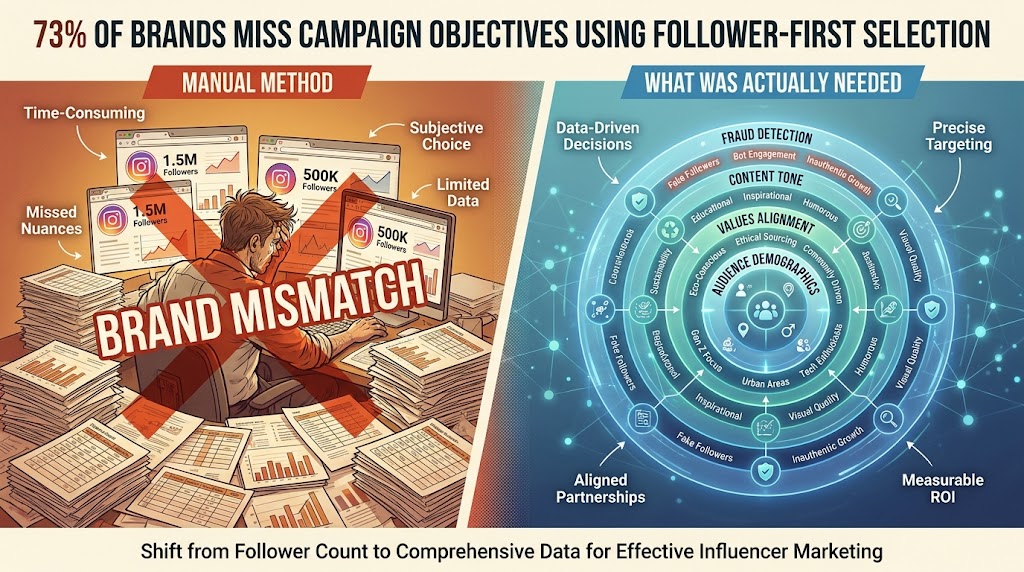

To understand why AI matching works, you first need to understand exactly how badly manual selection methods performed — and why they failed in predictable, structural ways rather than by accident.

The traditional influencer selection process revolved around two primary filters: category and follower count. A brand selling fitness supplements would search for fitness creators with large followings, review their content briefly for obvious red flags, and make a decision. This approach seemed logical. It was not.

The Follower Count Illusion

Follower count is a measure of who once clicked “follow” — not who actively engages, not who resembles your target customer, and certainly not who is likely to convert on your specific product. A creator with 800,000 followers in the “fitness” category might have built that audience on powerlifting content. If your product is a low-calorie snack for casual gym-goers, that audience is largely irrelevant — and the campaign will reflect that in its numbers.

Keyword-based category filters compound the problem. A creator who posts primarily about “clean living” and “wellness” might appear in fitness searches, but their audience might skew heavily toward middle-aged women in suburban markets who are more interested in stress reduction than performance nutrition. The category label tells you almost nothing about audience composition.

Real Brand Safety Costs

Manual vetting also failed catastrophically on brand safety. The Morphe Cosmetics collapse offers an extreme but instructive example — the brand’s heavy reliance on James Charles as a primary creator partner left it dangerously exposed when his controversies erupted, contributing to reputational and financial damage that the brand never fully recovered from. Tarte Cosmetics faced a different kind of mismatch: exclusive influencer trips that generated significant backlash for lacking diversity, a values-alignment failure that a content history analysis would have flagged. Boots UK’s Zoella advent calendar debacle — in which influencer marketing drove enormous hype for a product that customers felt was overpriced and under-delivered — eroded consumer trust in a way that outlasted the campaign by years.

These aren’t edge cases. According to Influencer Marketing Hub, 72% of brands experienced at least one influencer-related brand safety incident in 2025. The majority of those incidents trace back to insufficient vetting of creator audience alignment, content history, or values compatibility — exactly the things a manual process struggles to assess at scale.

The Bandwidth Ceiling

There’s a harder, more operational problem with manual selection: it doesn’t scale. Reviewing a creator thoroughly (auditing their last six months of content, cross-referencing audience demographics, checking engagement authenticity) takes time that marketing teams simply don’t have. The result is either thorough vetting of a handful of creators or surface-level vetting of a larger pool. Neither approach produces consistently good outcomes. The process selects for visibility (who’s easy to find) rather than fit (who will actually perform).

What the Algorithm Actually Measures

Modern AI matching systems don’t operate on a single signal. They synthesize multiple data streams simultaneously, weight them according to campaign objectives, and produce a ranked list of creators whose profiles align with a specific brand and product context. Understanding the individual components reveals why this produces qualitatively different outcomes from manual selection.

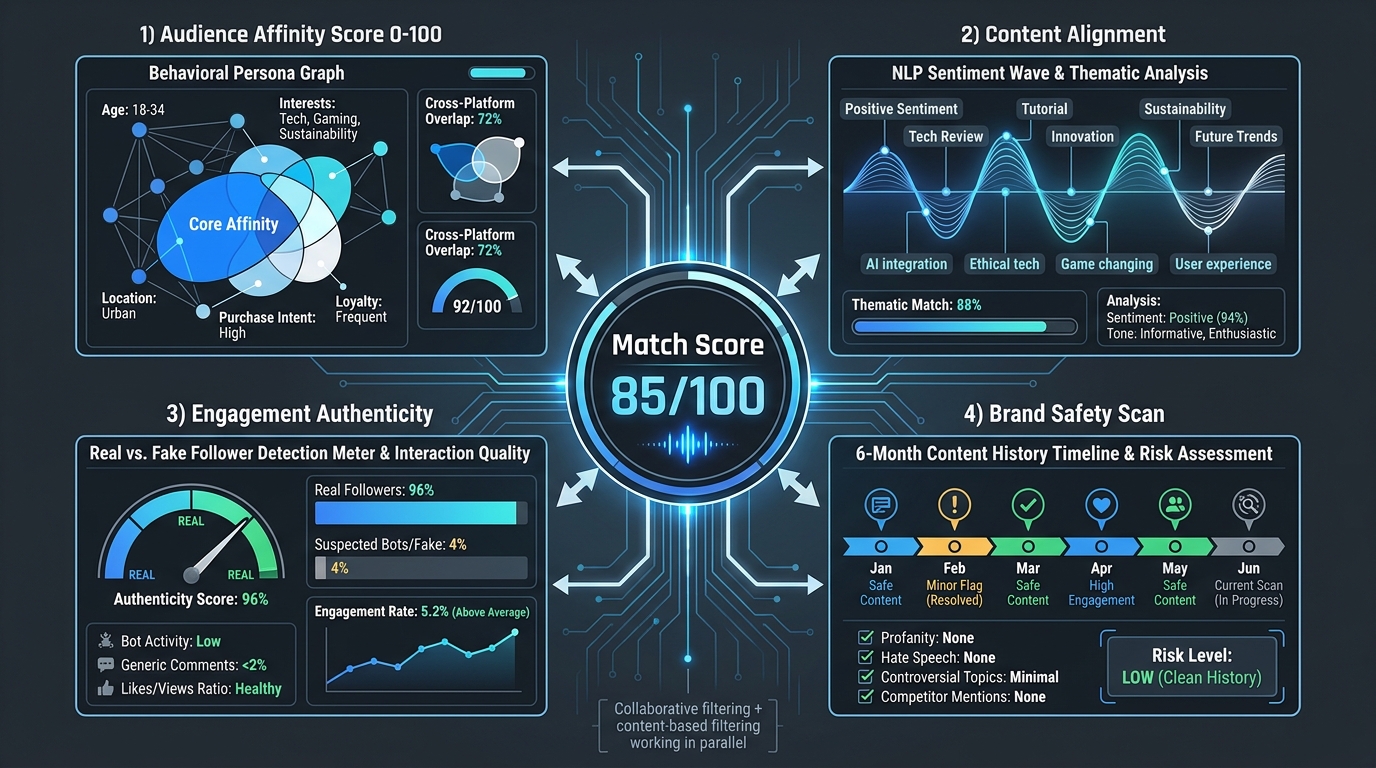

Audience Affinity Scoring

This is the signal that manual processes miss most completely. Audience affinity scoring evaluates the behavioral alignment between a creator’s followers and a brand’s target customer profile. Rather than relying on demographic labels alone, advanced systems like Simon AI’s Affinity Agent build real-time behavioral personas — scored from 0 to 100 on opportunity fit — using semantic matching against first-party brand data and cross-category behavioral patterns.

The distinction matters enormously. Two creators in the same category with similar follower demographics can have radically different audience affinity scores for the same product, because their followers’ actual behavioral patterns — what they buy, what content they engage with most deeply, how they respond to product recommendations — diverge significantly. Traditional filters can’t see this. Affinity scoring can.
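As a rough illustration of the idea (not a description of any vendor’s method), affinity can be sketched as the similarity between a creator’s audience-interest profile and a brand’s target-customer profile, scaled to 0–100. The interest labels, the weights, and the choice of cosine similarity below are all assumptions made for the example.

```python
import math


def cosine_similarity(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity between two sparse interest vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty profile has no measurable affinity
    return dot / (norm_a * norm_b)


def affinity_score(audience: dict[str, float], target: dict[str, float]) -> int:
    """Scale the similarity to a 0-100 score (weights are non-negative)."""
    return round(100 * cosine_similarity(audience, target))


# Hypothetical profiles: how strongly each group's behavior ties to an interest.
target_customer = {"casual_gym": 0.6, "low_calorie_snacks": 0.8}
powerlifting_audience = {"powerlifting": 0.9, "protein_powder": 0.4, "casual_gym": 0.1}
casual_audience = {"casual_gym": 0.7, "low_calorie_snacks": 0.5, "meal_prep": 0.3}

print(affinity_score(powerlifting_audience, target_customer))
print(affinity_score(casual_audience, target_customer))
```

Both creators would carry the same “fitness” category label, yet the casual-gym audience scores far higher against this target profile than the powerlifting audience does.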

Collaborative Filtering

Borrowed from recommendation engine architecture (the same logic that powers Netflix and Spotify recommendations), collaborative filtering for creator matching identifies patterns from past successful partnerships. If brands with a similar customer profile, price point, and product category achieved strong results with a specific set of creators, the algorithm uses those patterns to surface new creators with similar profile characteristics — even if they’ve never worked with a comparable brand before.

This is particularly useful for emerging creators who don’t yet have extensive collaboration history. The algorithm can identify structural similarities between a new creator’s audience and established patterns from proven partnerships, enabling brands to act on those signals before competitors do.
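The pattern described above can be sketched as user-based collaborative filtering over past campaign outcomes. This is a minimal sketch under stated assumptions: the brand names, creator names, and outcome scores are hypothetical, and real systems would also draw on creator profile features rather than outcome overlap alone.

```python
import math
from collections import defaultdict

# Hypothetical history: brand -> {creator: campaign outcome score in [0, 1]}
history = {
    "brand_a": {"creator_1": 0.9, "creator_2": 0.8, "creator_3": 0.2},
    "brand_b": {"creator_1": 0.8, "creator_2": 0.9, "creator_4": 0.7},
    "brand_c": {"creator_3": 0.9, "creator_5": 0.8},
}


def brand_similarity(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity of two brands' outcome vectors."""
    shared = a.keys() & b.keys()
    if not shared:
        return 0.0
    dot = sum(a[c] * b[c] for c in shared)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


def recommend(brand: str, history: dict, top_n: int = 3) -> list[str]:
    """Rank creators the brand has not used by similarity-weighted outcomes."""
    own = history[brand]
    scores: dict[str, float] = defaultdict(float)
    for other, outcomes in history.items():
        if other == brand:
            continue
        sim = brand_similarity(own, outcomes)
        for creator, outcome in outcomes.items():
            if creator not in own:
                # Results from similar brands count for more.
                scores[creator] += sim * outcome
    ranked = sorted(scores, key=lambda c: scores[c], reverse=True)
    return ranked[:top_n]


print(recommend("brand_a", history))
```

Here `brand_a` and `brand_b` had similar results with the same creators, so `brand_b`’s other successful partner is ranked ahead of a creator who only worked with the dissimilar `brand_c`.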

Content-Based Filtering via NLP and Image Analysis

Content alignment goes beyond “does this creator post about fitness?” to “does this creator’s content tone, vocabulary, aesthetic, and narrative style match the brand’s voice?” Natural language processing analyzes captions, comments, and scripted content to assess sentiment, topic clustering, and communication style. Computer vision models review visual content for aesthetic alignment — color palette, production quality, framing — and flag content that includes competing brands or category-adjacent products that could create confusion.

The values-alignment layer is particularly valuable for brands in sensitive product categories. A supplement brand partnering with a creator who regularly posts content that glorifies extreme restriction or disordered eating patterns faces obvious risks. NLP can surface these signals from content history in ways that a human reviewer, operating under time pressure and reviewing a curated portfolio, frequently misses.

Engagement Authenticity Analysis

Influencer fraud remains a meaningful problem. Bot-inflated follower counts, purchased engagement, and comment pods that artificially spike interaction metrics all distort the signals that manual reviewers depend on. AI systems trained on authentic engagement patterns can identify statistical anomalies — follower growth spikes inconsistent with organic growth curves, engagement-to-follower ratios that fall outside normal distributions, comment patterns suggesting coordinated activity rather than genuine response.
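One simple way to flag the statistical anomalies mentioned above is a robust outlier test. The sketch below uses the modified z-score (median and median absolute deviation) with the conventional 3.5 cutoff; it is an illustrative stand-in, not how any particular platform detects fraud, and the sample numbers are invented.

```python
from statistics import median


def robust_outliers(values: list[float], threshold: float = 3.5) -> list[int]:
    """Return indices whose modified z-score exceeds the threshold.

    Uses median and median absolute deviation (MAD), so a single large
    spike does not distort the baseline it is compared against.
    """
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread, nothing can be called anomalous
    return [
        i for i, v in enumerate(values)
        if abs(0.6745 * (v - med) / mad) > threshold
    ]


# Hypothetical daily new-follower counts for one creator.
daily_new_followers = [120, 135, 110, 128, 9500, 131, 118]
print(robust_outliers(daily_new_followers))

# Hypothetical engagement-to-follower ratios across peer creators.
peer_engagement_ratios = [0.031, 0.028, 0.035, 0.030, 0.190, 0.033]
print(robust_outliers(peer_engagement_ratios))
```

A flagged index is a prompt for closer review rather than proof of fraud: a genuine viral post can produce the same spike as a purchased follower batch.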

Predictive Performance Modeling: Pricing the Outcome Before You Pay for It

One of the most significant structural shifts in AI-powered creator matching is the move from post-campaign analysis to pre-campaign forecasting. The old model was essentially: select a creator, define deliverables, pay a fee, run the campaign, and measure results afterward. If the results were poor, you’d have data to inform the next campaign — but you’d already lost the budget on the current one.

Predictive performance modeling inverts this logic. Before a contract is signed, AI systems generate projected outcomes for a specific creator-product pairing: estimated reach, projected engagement rate, expected click-through rates, and — increasingly — forecasted conversion rates and cost-per-acquisition figures. These forecasts are generated by running the creator’s historical performance data, audience affinity profile, and content alignment scores through models trained on thousands of comparable partnerships.

From “Pay and Pray” to Pre-Campaign Projections

The practical implication is that brands can now compare expected ROI across multiple creator candidates before making any commitments. Instead of asking “who seems like a good fit?” the question becomes “which creator, for this specific product, in this specific audience segment, projects the strongest return at this budget level?” That’s a fundamentally different and more disciplined decision framework.

Platforms like CreatorIQ and Traackr have built performance forecasting into their core product offering, generating pre-campaign projections that account for seasonality, product category norms, creator growth trajectories, and audience engagement patterns. The accuracy of these models improves continuously as more campaign outcome data feeds back into the training pipeline.

Look-Alike Creator Analysis

A particularly useful application of predictive modeling is look-alike creator analysis — identifying creators whose audience and content profile closely mirrors that of a proven performer, even if those creators have never run a campaign for your brand or category. This is how brands can scale their creator programs without simply running back to the same handful of proven partners on every campaign. The algorithm surfaces structurally similar profiles across a much wider creator pool, enabling portfolio diversification without proportional increases in manual research time.

Dynamic Re-Scoring During Campaigns

Prediction doesn’t stop at the contract stage. Active campaigns feed real-time performance data back into the matching model, adjusting creator scores dynamically based on how actual results compare to projections. A creator whose early content dramatically outperforms projections gets flagged for increased investment. One whose content is underperforming gets flagged for creative review before the next deliverable. This continuous feedback loop between performance data and matching intelligence is something no manual workflow can replicate at speed.
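The feedback loop described above can be sketched as two small functions: one that flags a creator based on actual versus projected results, and one that nudges the match score accordingly. The 25% tolerance band, the 0.3 adjustment rate, and the flag names are hypothetical values chosen for the example.

```python
def review_flag(projected: float, actual: float, tolerance: float = 0.25) -> str:
    """Compare an observed metric with its pre-campaign projection."""
    if projected <= 0:
        raise ValueError("projected must be positive")
    ratio = actual / projected
    if ratio >= 1 + tolerance:
        return "increase_investment"
    if ratio <= 1 - tolerance:
        return "creative_review"
    return "on_track"


def rescore(score: float, projected: float, actual: float, rate: float = 0.3) -> float:
    """Move a 0-100 match score part of the way toward observed performance."""
    if projected <= 0:
        raise ValueError("projected must be positive")
    ratio = actual / projected
    updated = score * (1 + rate * (ratio - 1))
    return max(0.0, min(100.0, updated))  # keep the score in range


# Hypothetical engagement rates: projected 4%, early content delivers 6%.
print(review_flag(projected=0.04, actual=0.06))
print(rescore(score=80.0, projected=0.04, actual=0.06))
```

Applying only part of the gap on each update (the `rate` parameter) keeps one unusually strong or weak post from swinging the score too far.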

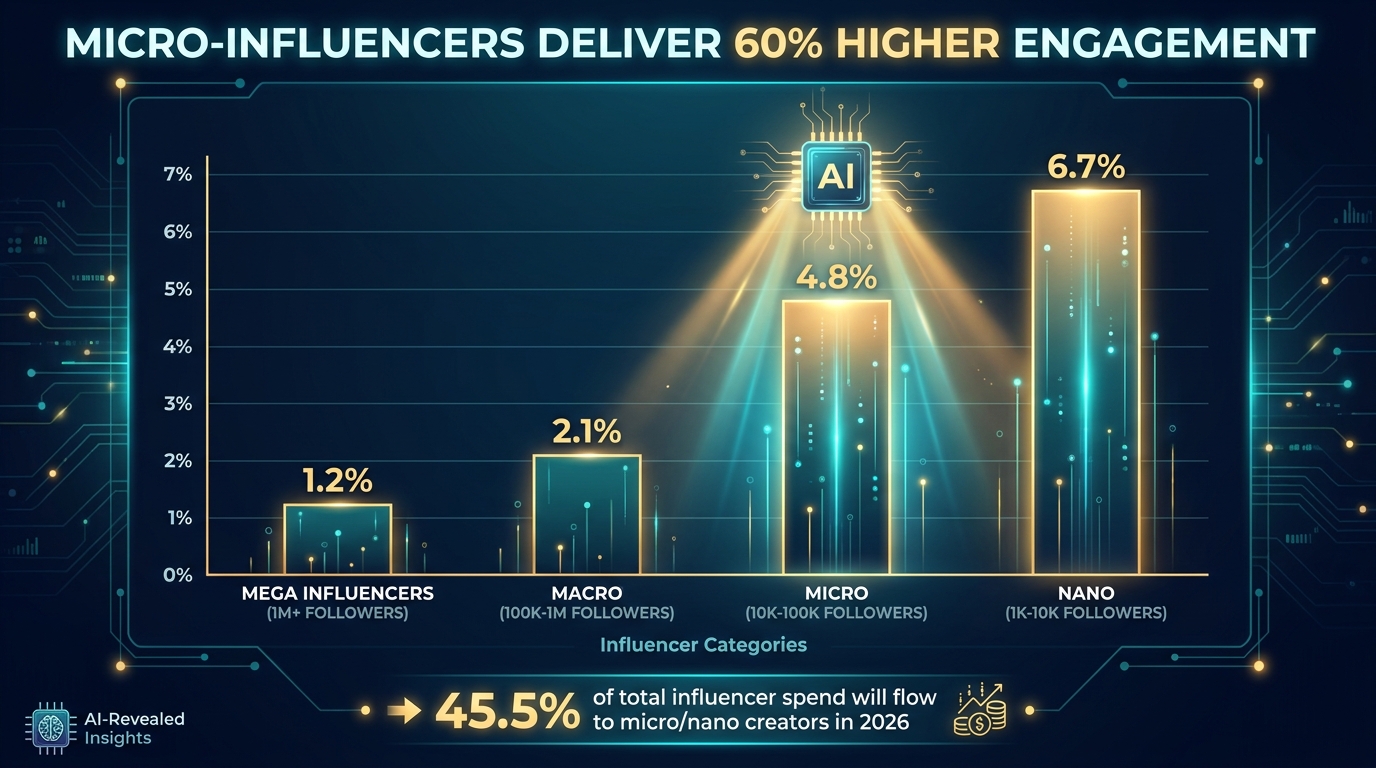

The Micro-Influencer Advantage AI Found First

One of the most consequential findings to emerge from data-driven creator analysis is the systematic undervaluation of micro and nano-influencers in traditional selection processes. When brands were selecting creators manually, the bias toward large follower counts was nearly universal — bigger audiences seemed to mean more reach, which seemed to mean more value. AI analysis revealed that this logic was inverted in many campaign contexts.

The Engagement Inversion

Micro-influencers (10,000–100,000 followers) yield approximately 60% higher engagement rates than macro-influencers, according to Influencer Marketing Hub data. Nano creators (1,000–10,000 followers) frequently outperform even that benchmark, with engagement rates that can reach 6–8% versus the 1–2% typical of mega-influencers. The mechanism is straightforward: smaller creators have built tighter, more trusting communities. Their recommendations land as genuine peer endorsements rather than celebrity advertisements. Their audiences tend to be more tightly clustered around specific interests, making audience-product alignment easier to achieve.

Why Manual Processes Couldn’t Scale Micro-Influencer Programs

The reason brands historically under-invested in micro-influencers wasn’t ignorance of their advantages. It was operational. Running an effective micro-influencer program requires working with dozens or hundreds of creators simultaneously, which is unmanageable through manual workflows. Identifying, vetting, contracting, briefing, managing, and tracking performance for 200 micro-creators is a logistical operation that a typical marketing team cannot run on spreadsheets and DMs.

AI matching platforms solve this problem at both ends. Discovery — finding the right micro-creators within a specific niche — becomes a minutes-long search rather than a weeks-long manual trawl. And once identified, automated campaign management workflows handle outreach sequences, contract templates, brief delivery, and performance tracking without proportionally increasing human workload.

The 2026 Budget Shift

The market has followed the data. Micro and nano-influencers are projected to account for 45.5% of total influencer marketing spend in 2026 — a share that has grown steadily as AI tooling made scale-level micro-influencer programs operationally feasible for the first time. This isn’t a trend driven by budget constraints. Brands running sophisticated creator programs are actively reallocating spend from a few mega-creator partnerships toward broader micro-influencer portfolios precisely because the performance data supports that allocation.

Multi-Signal Matching: Beyond the Single-Dimension Filter

Early AI matching tools were essentially sophisticated search filters — better than manual Instagram scrolling, but still operating primarily on category tags, follower ranges, and basic demographic overlays. The current generation of platforms operates on a fundamentally different architecture: true multi-signal matching that synthesizes dozens of independent data streams into a single compatibility score.
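To make the idea of a single compatibility score concrete, here is a minimal sketch that combines four signals with objective-specific weights. The signal names, weights, and creator values are hypothetical, and a weighted average is only one of several ways a platform might combine signals.

```python
from dataclasses import dataclass


@dataclass
class CreatorSignals:
    name: str
    audience_affinity: float        # each signal normalized to 0-1
    content_alignment: float
    engagement_authenticity: float
    geo_overlap: float


# Hypothetical weight profiles for two campaign objectives.
CONVERSION_WEIGHTS = {
    "audience_affinity": 0.5, "content_alignment": 0.2,
    "engagement_authenticity": 0.2, "geo_overlap": 0.1,
}
REGIONAL_LAUNCH_WEIGHTS = {
    "audience_affinity": 0.2, "content_alignment": 0.2,
    "engagement_authenticity": 0.1, "geo_overlap": 0.5,
}


def compatibility(creator: CreatorSignals, weights: dict[str, float]) -> float:
    """Weighted average of the signals, scaled to 0-100."""
    total = sum(weights.values())
    weighted = sum(getattr(creator, key) * w for key, w in weights.items())
    return 100 * weighted / total


def rank(creators: list[CreatorSignals], weights: dict[str, float]) -> list[CreatorSignals]:
    """Order creators from most to least compatible."""
    return sorted(creators, key=lambda c: compatibility(c, weights), reverse=True)


creators = [
    CreatorSignals("creator_a", 0.9, 0.6, 0.8, 0.3),
    CreatorSignals("creator_b", 0.5, 0.8, 0.9, 0.9),
]
print([c.name for c in rank(creators, CONVERSION_WEIGHTS)])
print([c.name for c in rank(creators, REGIONAL_LAUNCH_WEIGHTS)])
```

The same two creators swap places when the weights change, which is the practical meaning of weighting signals by campaign objective.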

Geographic and Demographic Precision

For brands with region-specific campaigns, demographic targeting, or seasonal considerations, geographic and audience demographic matching becomes critical. A regional food brand launching in the Pacific Northwest needs creators whose audiences are concentrated there — not just creators who are based there, but creators whose followers actually match that geographic footprint. An AI system can cross-reference audience location data at a granularity that manual review cannot approach.

Demographic overlays extend to income levels, education, household composition, and purchasing behavior clusters. Luxury product launches require creators whose audience demographics index strongly on discretionary spending capacity. Mass-market products need different audience profiles. The ability to specify these parameters and have the system return only creators whose actual audience composition meets those thresholds dramatically improves first-round selection quality.

Cross-Platform Unified Creator Profiles

Creators are rarely single-platform entities. A creator might have a primary TikTok presence, a secondary YouTube channel, and a growing Instagram following — with meaningfully different audience compositions across each. Platforms like Favikon have built unified cross-platform creator profiles that aggregate data across Instagram, TikTok, YouTube, and LinkedIn, enabling brands to evaluate a creator’s total cross-platform footprint and identify which platform offers the best fit for a specific campaign objective.

This cross-platform view also surfaces creators who are dominant on one platform but represent significant untapped partnership value on others. A YouTube creator with strong tutorial content might be an excellent fit for a product launch campaign that needs depth and demonstration, even if their Instagram engagement is modest. A single-platform filter would miss that opportunity entirely.

Content History and Brand Safety Layering

The brand safety layer deserves particular attention because it addresses one of the most expensive failure modes in creator partnerships. AI systems can conduct retroactive content audits across a creator’s full publishing history — scanning for language patterns, visual content, associations with competing brands, or topic areas that could create brand alignment problems. A creator who posted offensive content two years ago and has since cleaned up their feed will still trigger flags in a thorough content audit. A creator who regularly promotes a direct competitor’s products in their non-sponsored content will surface in a brand affinity scan even if those posts aren’t immediately visible to a casual viewer.

Only 4% of brands currently conduct comprehensive brand safety vetting during creator selection, according to Influencer Marketing Hub research — a striking gap given that 72% have experienced brand safety incidents. AI-powered content auditing offers a way to close that gap systematically without requiring manual review teams to watch hours of creator content before every partnership.

Real-Time vs. Predictive Matching: Two Distinct Strategic Modes

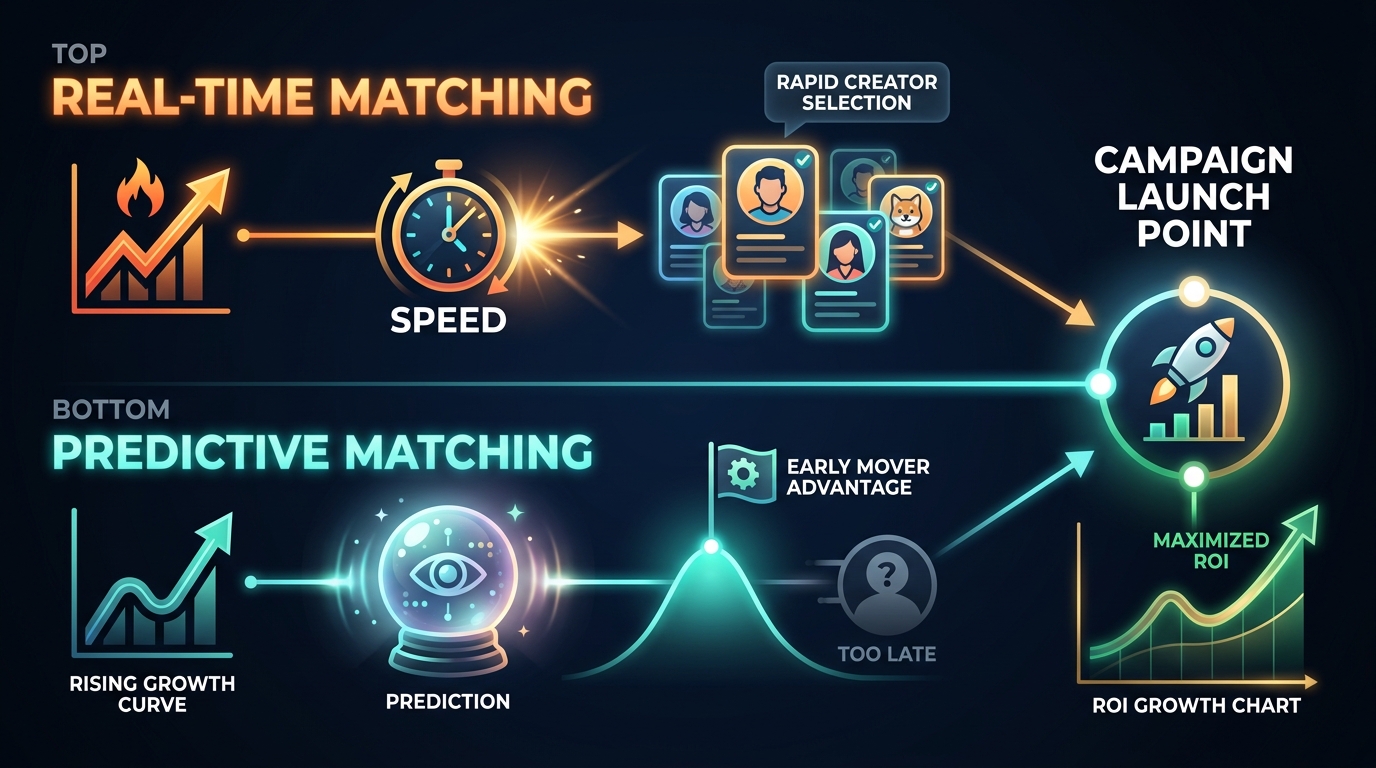

Not all AI creator matching operates on the same time horizon. There are two distinct matching modes that serve different strategic objectives, and brands that conflate them end up using the right tool for the wrong purpose.

Real-Time Matching: Speed to Relevance

Real-time matching systems continuously process current creator data — trending topics, recent content performance, platform algorithm signals, engagement velocity — and surface creators who are performing well right now for a given topic area. This mode is optimized for agility: capitalizing on a cultural moment, responding to a trending conversation, or launching a time-sensitive campaign where relevance to current discourse is the primary selection criterion.

A beverage brand responding to a sudden summer heat wave trend, a tech brand wanting to partner with creators who are riding the momentum of a specific viral conversation, or a fashion brand looking to activate around a cultural moment — these are real-time matching use cases. The selection window may be days rather than weeks. Speed and current relevance take priority over deep long-term fit analysis.

Predictive Matching: The Early Mover Advantage

Predictive matching operates on a longer time horizon and serves a fundamentally different objective: identifying creators who are on a growth trajectory before they reach peak demand and peak pricing. By analyzing early-stage engagement growth rates, audience composition trends, content evolution patterns, and platform algorithm signals that typically precede breakout moments, predictive systems can surface emerging creators months before they become obvious choices — and before competitor brands have claimed them.

The economics of early-mover positioning in creator partnerships are significant. A creator with 50,000 engaged followers in a high-affinity niche might command a partnership rate of $2,000–$5,000 per deliverable. That same creator at 500,000 followers — where manual selection processes would finally surface them as a compelling option — commands ten times that. Predictive matching enables brands to build authentic early relationships with creators who are heading toward that profile, at a fraction of the eventual market rate.

Knowing Which Mode to Deploy

The practical guidance is relatively straightforward. Real-time matching serves campaign activations with defined windows and topical hooks. Predictive matching serves long-term creator roster building and brand ambassador development. Most sophisticated brands run both modes simultaneously — using real-time matching for short-term campaign activations while continuously building a pipeline of emerging creator relationships through predictive discovery. The two strategies complement rather than compete with each other.

The Attribution Problem AI Is Finally Solving

Creator partnerships have historically been evaluated on engagement metrics — likes, comments, shares, saves — which are relatively easy to measure but only loosely correlated with actual business outcomes. The attribution problem in influencer marketing is this: how do you draw a reliable line between a creator’s content and a downstream purchase, subscription, or lead?

Why Traditional Attribution Failed

The traditional toolkit for influencer attribution was blunt: unique promo codes, custom UTM-tracked links, and post-campaign sales lift studies. Promo codes and UTM links capture the conversions that happen to use those specific touchpoints — but they miss the substantial portion of influenced purchases that happen through organic search after a viewer sees a creator’s content, through brand awareness that manifests in later direct purchases, and through the multi-touchpoint paths that characterize most modern consumer purchase journeys.

This measurement gap systematically understated influencer marketing’s contribution to revenue, making it difficult to justify budget allocations and impossible to optimize spend across a creator portfolio with any precision.

Multi-Touch Attribution Models

AI-powered attribution modeling addresses this by integrating creator campaign data with broader digital touchpoint data — ad impressions, organic search behavior, direct site visits, email engagement, and purchase events — into a unified model that assigns credit across the full customer journey. Rather than asking “did this consumer use the promo code?” the question becomes “what was this creator’s contribution to this consumer’s path to purchase, weighted appropriately against all other touchpoints?”

The results are materially different from last-touch attribution. Creators who appeared earlier in a consumer’s discovery journey — building awareness and initial consideration before other touchpoints drove the final conversion — receive appropriate credit in multi-touch models. This often reveals significant misallocation in creator budgets, where brands have been systematically overpaying for bottom-funnel conversion creators while underfunding top-of-funnel creators who generate substantial downstream value.
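The difference between last-touch and multi-touch credit can be shown with three simple rule-based models. This is a sketch for intuition only: the touchpoint names and the 40/20/40 position-based split are assumptions, and the data-driven models that platforms use would typically learn the weights rather than fix them.

```python
from collections import defaultdict


def last_touch(path: list[str], revenue: float) -> dict[str, float]:
    """All credit goes to the final touchpoint before purchase."""
    return {path[-1]: revenue}


def linear(path: list[str], revenue: float) -> dict[str, float]:
    """Credit is split evenly across every touchpoint."""
    credit: dict[str, float] = defaultdict(float)
    for touch in path:
        credit[touch] += revenue / len(path)
    return dict(credit)


def position_based(path: list[str], revenue: float,
                   first: float = 0.4, last: float = 0.4) -> dict[str, float]:
    """First and last touches get fixed shares; the middle splits the rest."""
    credit: dict[str, float] = defaultdict(float)
    if len(path) == 1:
        credit[path[0]] += revenue
    elif len(path) == 2:
        credit[path[0]] += revenue / 2
        credit[path[1]] += revenue / 2
    else:
        middle_share = (1 - first - last) / (len(path) - 2)
        credit[path[0]] += revenue * first
        credit[path[-1]] += revenue * last
        for touch in path[1:-1]:
            credit[touch] += revenue * middle_share
    return dict(credit)


# Hypothetical journey ending in a $100 purchase.
journey = ["creator_video", "organic_search", "email", "paid_ad"]
for model in (last_touch, linear, position_based):
    print(model.__name__, model(journey, 100.0))
```

Under last-touch the creator video receives nothing, while both multi-touch models assign it a share of the sale, which is the top-of-funnel value the paragraph above describes.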

What Better Attribution Unlocks

The business implication of improved attribution is straightforward: when you can measure a creator’s actual contribution to revenue, you can price their partnerships accurately, optimize your creator portfolio based on performance data rather than engagement metrics, and make the case for creator marketing budget with the kind of financial rigor that CFOs require. Reports from platforms integrating multi-touch attribution show brands achieving $5.78 in return for every $1 invested in AI-matched creator campaigns — a figure that was essentially impossible to calculate reliably under traditional attribution frameworks.

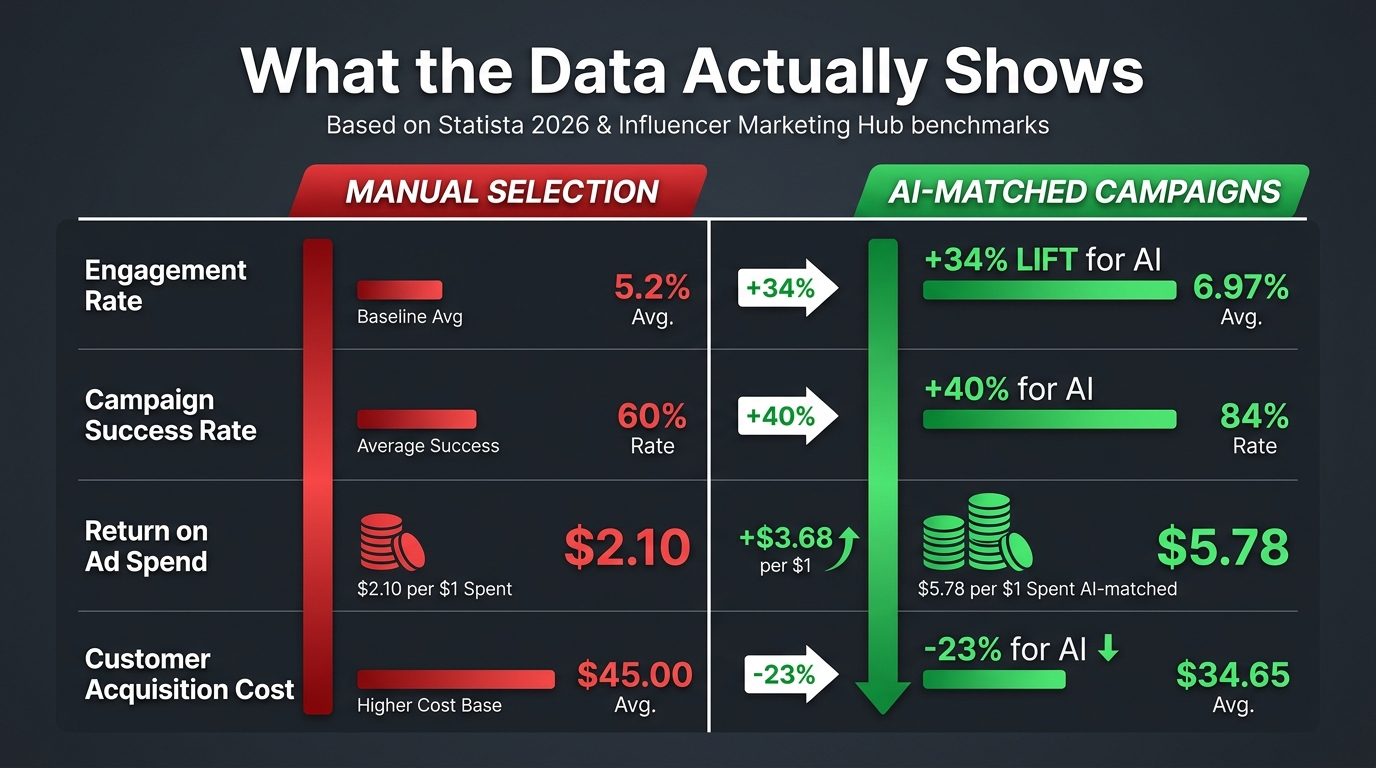

What the Numbers Actually Show

Performance claims in marketing technology are frequently inflated, selectively cited, and poorly sourced. Here is what the current evidence base actually supports, with source context included.

Engagement and Campaign Outcomes

Campaigns using AI-matched creators achieve 34% higher engagement rates than manually selected creator campaigns, according to Statista data. AI-matched partnerships also show a 40% higher campaign success rate, a broader measure that includes engagement, conversion, and brand lift metrics. These figures come from aggregate campaign data across platforms with significant sample sizes, though it’s worth noting that the exact definition of “campaign success” varies by platform and measurement methodology.

ROAS and Acquisition Economics

Return on ad spend for AI-matched creator campaigns has been reported at 25–30% above comparable manually-selected campaigns, with top-performing AI-matched programs achieving the $5.78/$1 ROAS benchmark. Customer acquisition costs have declined by 23% for brands that have transitioned from manual to AI-assisted creator selection, reflecting both better audience-product fit and improved attribution that eliminates spending on low-performing partnerships that manual processes couldn’t identify in advance.
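For readers who want to reproduce these ratios on their own campaigns, the arithmetic is straightforward. The spend, revenue, and customer counts below are hypothetical numbers chosen only so that the output lines up with the $5.78 ROAS and 23% CAC figures cited above.

```python
def roas(attributed_revenue: float, spend: float) -> float:
    """Return on ad spend: revenue attributed to the campaign per dollar spent."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return attributed_revenue / spend


def cac(spend: float, new_customers: int) -> float:
    """Customer acquisition cost: spend per newly acquired customer."""
    if new_customers <= 0:
        raise ValueError("new_customers must be positive")
    return spend / new_customers


def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100


# Hypothetical campaign: $10,000 spend, $57,800 attributed revenue.
print(round(roas(57_800, 10_000), 2))

# Hypothetical CAC before and after switching selection methods.
manual_cac = cac(10_000, 200)
ai_assisted_cac = cac(7_700, 200)
print(round(percent_change(manual_cac, ai_assisted_cac), 1))
```

Both ratios depend on how revenue and new customers are attributed, so a change in attribution method will move them even if the campaigns themselves are unchanged.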

Adoption and Scale

The adoption figures are equally significant. Approximately 59–60% of marketers now use AI for creator discovery and matching, with 63% planning expanded AI use for improved matching precision. Creator discovery and audience matching account for 36.67% of AI applications in influencer marketing, making it the single largest category of AI use, ahead of content creation assistance, performance reporting, and campaign management automation.

What These Numbers Don’t Tell You

The performance improvements attributed to AI matching reflect a combination of better creator selection and better measurement, and the two are difficult to fully disentangle. A campaign that uses both AI matching and better attribution tools will appear to outperform manual selection by a margin that includes the attribution improvement as well as the selection improvement. Brands evaluating AI matching platforms should ask specifically how performance benchmarks were constructed, what the comparison baseline was, and whether attribution methodology was held constant across the comparison.

Choosing a Platform: What Actually Differentiates Them

The creator matching platform landscape has expanded significantly, with options ranging from free entry-level tools to enterprise platforms charging tens of thousands annually. The marketing language across these tools is nearly identical — every platform claims superior matching intelligence, the largest creator database, and the most accurate performance prediction. The actual differentiators are more specific.

Database Depth and Freshness

The quality of matching output is directly constrained by the quality of the creator data being analyzed. Platforms with larger, more frequently updated creator databases produce better matches. Ask specifically: how frequently is creator data refreshed? Are engagement metrics pulled in real-time or on a delay? Does the platform cover the specific platforms and creator tiers most relevant to your campaigns?

InfluenceFlow leads as the most capable free-tier option in 2026 for brands with limited budgets, offering genuine AI-powered matching including audience demographic analysis, engagement authenticity scoring, and content alignment assessment. For B2B campaigns requiring LinkedIn integration, Favikon ($99/month) offers the strongest cross-platform coverage for that specific use case. Enterprise-tier platforms like GRIN and Traackr provide the most sophisticated matching and attribution integration but require significant budget commitments that make sense only at scale.

Fraud Detection Rigor

Not all fraud detection is equal. Evaluate platforms on their specific methodology: do they analyze follower growth velocity, engagement distribution patterns, comment authenticity, and audience-to-engagement ratio together? Or do they use simpler follower audit scores that can be gamed by sophisticated fraud operations? Ask for specifics on false negative rates — how often does the platform fail to detect inauthentic followers — rather than accepting generic claims about “advanced fraud detection.”
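If a vendor shares results on a labeled audit sample, the false negative rate can be checked directly. The sketch below assumes you have ground-truth labels for a sample of accounts alongside the platform’s flags; the sample data is invented for illustration.

```python
def false_negative_rate(is_fake: list[bool], flagged: list[bool]) -> float:
    """Share of known-inauthentic accounts that the detector failed to flag."""
    if len(is_fake) != len(flagged):
        raise ValueError("inputs must be the same length")
    missed = sum(1 for fake, flag in zip(is_fake, flagged) if fake and not flag)
    caught = sum(1 for fake, flag in zip(is_fake, flagged) if fake and flag)
    if missed + caught == 0:
        raise ValueError("sample contains no inauthentic accounts")
    return missed / (missed + caught)


# Hypothetical audit sample of 10 accounts: 4 known fakes, 6 genuine.
is_fake = [True, True, True, True, False, False, False, False, False, False]
flagged = [True, True, True, False, False, False, True, False, False, False]

print(false_negative_rate(is_fake, flagged))
```

Note that the genuine account flagged in error does not affect this number; it belongs to the false positive rate, which is worth requesting as well.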

Attribution Integration

Unless a platform can connect creator performance data to your downstream conversion and revenue data, you’re still measuring in proxies. Evaluate whether the platform integrates with your existing analytics stack — Google Analytics, Shopify, your CRM — and whether it supports the attribution models that match your actual campaign objectives. A platform that measures clicks but not conversions is useful for awareness campaigns and essentially useless for performance marketing.

The Build vs. Buy Question

For brands with significant scale and unique matching requirements, building proprietary creator intelligence layers on top of first-party customer data is increasingly viable. Brands with rich purchase behavior data, detailed customer segmentation, and the data engineering resources to build matching models have a significant advantage: they can use their own customer profiles as the target audience input rather than relying on the generic demographic models that platform tools use. This approach produces audience affinity scoring tailored to your actual buyer, not a category approximation of them.

What AI Still Can’t Do — And Why That Matters

Honest assessment of AI creator matching requires acknowledging its genuine limitations. The technology has made a material difference in selection quality and campaign performance, but there are aspects of creator partnerships where algorithmic scoring systematically underperforms human judgment.

The Authenticity Tension

Consumers are perceptive about the difference between genuinely organic creator partnerships and algorithmically optimized placements. The irony of AI matching is that a system optimized purely for alignment data points can produce technically well-matched partnerships that nevertheless feel engineered rather than genuine — because the creator and brand connected via a database score rather than through any authentic relationship.

36.7% of marketers worry that AI-powered matching and AI-assisted influencer programs lack authenticity, and that concern is not unfounded. The best creator partnerships, the ones that generate disproportionate organic reach and long-term brand equity, tend to emerge from creators who are genuine enthusiasts of the products they represent, regardless of whether an algorithm identified the fit. AI can identify candidates for authentic partnerships, but it cannot manufacture the authenticity itself.

Creative Brief Quality Remains Human Work

Matching the right creator to the right product is only half the equation. What you ask that creator to make — the creative brief, the campaign concept, the narrative framework — determines whether a well-matched partnership actually performs. AI systems can suggest creative directions based on what has worked for similar pairings, but the craft of briefing a creator in a way that produces content that feels native to their voice while serving a brand’s objectives remains primarily human work.

Brands that automate matching but invest insufficiently in creative direction frequently find that AI-selected creators still underperform, because the selection was good but the activation was generic. The algorithm identified the right person. The brief told them to do something uninspiring.

Relationship Management Doesn’t Automate Well

Long-term creator relationships — the kind that produce the most valuable ambassador-style partnerships over time — require human investment that automated tools can support but not replace. Creators who feel genuinely valued, communicated with directly, and supported creatively tend to produce better content and maintain partnerships longer. Fully automated creator relationship management, where every interaction is a templated system message, produces transactional relationships that rarely develop into the high-value long-term partnerships that drive compounding returns.

The most effective creator programs use AI to handle the discovery, vetting, and operational management that would otherwise consume all available bandwidth — freeing up human relationship investment for the partnerships most likely to generate durable value.

Making the Transition: Where to Start

For marketing teams currently operating primarily through manual creator selection, the transition to AI-assisted matching doesn’t require a wholesale system replacement. There’s a logical progression that allows teams to capture the primary benefits of algorithmic matching without organizational disruption.

Start with Discovery, Not Selection

Use AI matching tools initially for creator discovery rather than final selection. Run your existing manual vetting process against an AI-generated shortlist rather than against a manually assembled one. The improvement in the quality of your starting pool will be immediately visible — more precise audience fit, fewer obvious brand safety concerns, better category depth — even before you fully trust the matching scores for final decision-making.

Build Performance Baselines Before Comparing

To genuinely evaluate whether AI matching is improving your results, you need documented baselines from your current manual process: average engagement rates, conversion rates per campaign, ROAS by creator tier. Without those benchmarks, comparative performance data is meaningless. Set up tracking infrastructure before switching tools so you’re comparing like-for-like performance data.
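
As a rough illustration of what a documented baseline can look like, the sketch below aggregates campaign records by creator tier and computes the three figures named above. The record fields, the sample numbers, and the choice to define engagement rate as engagements divided by impressions are all assumptions made for the example; substitute whatever definitions your team already reports on.

```python
from collections import defaultdict

# Hypothetical campaign records from a manual-selection period.
# Field names and values are illustrative only, not real data.
campaigns = [
    {"tier": "micro", "impressions": 40_000, "engagements": 2_400,
     "clicks": 900, "orders": 45, "revenue": 2_700.0, "spend": 900.0},
    {"tier": "micro", "impressions": 60_000, "engagements": 3_600,
     "clicks": 1_100, "orders": 55, "revenue": 3_300.0, "spend": 1_100.0},
    {"tier": "macro", "impressions": 500_000, "engagements": 10_000,
     "clicks": 4_000, "orders": 80, "revenue": 8_000.0, "spend": 5_000.0},
]

SUMMED_FIELDS = ("impressions", "engagements", "clicks", "orders", "revenue", "spend")


def ratio(numerator, denominator):
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def baseline_by_tier(records):
    """Sum raw counts per tier, then derive rates from the tier totals."""
    totals = defaultdict(lambda: dict.fromkeys(SUMMED_FIELDS, 0.0))
    for record in records:
        for field in SUMMED_FIELDS:
            totals[record["tier"]][field] += record[field]

    return {
        tier: {
            # Assumed definition: engagements per impression.
            "engagement_rate": ratio(t["engagements"], t["impressions"]),
            # Assumed definition: orders per click.
            "conversion_rate": ratio(t["orders"], t["clicks"]),
            # Return on ad spend: attributed revenue per unit of spend.
            "roas": ratio(t["revenue"], t["spend"]),
        }
        for tier, t in totals.items()
    }


baselines = baseline_by_tier(campaigns)
for tier, metrics in baselines.items():
    print(tier, metrics)
```

Computing the ratios from tier totals rather than averaging per-campaign rates keeps one small campaign from skewing the baseline. Either choice is defensible as long as the same one is applied to the AI-matched campaigns later.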

Metrics Worth Tracking

Beyond the standard engagement metrics, track:

- Audience-product fit scores against actual conversion rates, to validate the platform’s predictions.

- Content audit coverage: the percentage of creator history scanned versus the instances of brand safety issues flagged.

- Discovery speed: the hours required to produce a qualified shortlist of 20 creators versus your current manual process.

- Creator retention rate: whether AI-matched creators produce stronger long-term partnership outcomes than manually selected ones.
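
One way to check the first of those metrics is a rank correlation between the platform’s fit scores and the conversion rates you actually observed. The sketch below computes a Spearman correlation in plain Python; the function names and the five sample values are hypothetical, and a real validation would need far more campaigns than this before the number means much.

```python
from statistics import mean


def ranks(values):
    """Return 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of equal values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(xs, ys):
    """Pearson correlation; returns None if either series has no variation."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return None
    return cov / (var_x * var_y) ** 0.5


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    if len(xs) != len(ys):
        raise ValueError("series must be the same length")
    return pearson(ranks(xs), ranks(ys))


# Hypothetical data: platform fit score and observed conversion rate per creator.
fit_scores = [0.91, 0.84, 0.77, 0.62, 0.55]
conversion_rates = [0.048, 0.051, 0.030, 0.022, 0.025]

rho = spearman(fit_scores, conversion_rates)
print(f"Spearman correlation between fit score and conversion: {rho:.2f}")
```

A value near 1 suggests the scores order creators roughly the way conversions do; a value near 0 suggests the score is not telling you much about your own customers, whatever the platform’s aggregate benchmarks say.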

Red Flags in Platform Claims

Be skeptical of platforms that cite engagement rate improvements without specifying the comparison baseline. Be wary of database size claims without freshness data. Question performance prediction accuracy claims that don’t come with confidence intervals or error rate disclosures. And be cautious of any platform that implies its matching is so precise that human judgment can be removed from the selection process entirely — that claim is not supported by current technology capabilities.
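
To see why an accuracy claim without an interval says little, it can help to compute one yourself. The sketch below uses the Wilson score interval, a standard way to put bounds on a proportion; the scenario (a vendor reporting that 85 of 100 predictions landed within its stated tolerance) is invented for illustration.

```python
from math import sqrt


def wilson_interval(successes, trials, z=1.96):
    """Return (low, high) bounds for a proportion; z=1.96 is roughly 95%."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    z2 = z * z
    denom = 1 + z2 / trials
    center = (p + z2 / (2 * trials)) / denom
    half_width = z * sqrt(p * (1 - p) / trials + z2 / (4 * trials * trials)) / denom
    return center - half_width, center + half_width


# Hypothetical vendor claim: 85 of 100 predictions counted as accurate.
low, high = wilson_interval(85, 100)
print(f"85% on 100 campaigns: plausible range {low:.1%} to {high:.1%}")

# The same headline rate measured on 2,000 campaigns is a much tighter claim.
low_big, high_big = wilson_interval(1700, 2000)
print(f"85% on 2,000 campaigns: plausible range {low_big:.1%} to {high_big:.1%}")
```

The headline figure is identical in both cases, which is the point: without the sample size or the interval, there is no way to tell a well-supported claim from a thin one.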

What This Shift Means for the Creator Economy

AI creator-product matching is not just a workflow improvement for brand marketing teams. It is reshaping the economics and dynamics of the creator economy in ways that will compound over the next several years.

The most significant structural shift is the democratization of discovery. When manual processes dominated, visibility was the primary selection criterion. Creators with the largest followings, the most prominent platform placement, and the strongest industry relationships got the most partnership opportunities, regardless of whether they were actually the best fit for specific brands. AI matching changes that by ranking creators on alignment rather than visibility. A creator with 15,000 highly engaged followers in a specific niche can surface as the top recommendation for a brand that fits their audience perfectly, competing directly with creators who have ten times their following but less precise audience alignment.

For brands, the practical result is access to a vastly larger effective creator pool. The number of creators who could meaningfully serve a campaign was previously bounded by discovery capacity — you could only evaluate what you could find manually. AI systems remove that boundary, expanding the effective selection pool from dozens to thousands while improving rather than degrading selection quality.

For creators, the shift creates both opportunity and pressure. Opportunity, because performance data and audience affinity scores can surface smaller creators who were previously invisible to brand partners. Pressure, because the same data systems that enable discovery also enable rigorous performance accountability — creators whose campaigns consistently underperform their predicted metrics will find that algorithmic scoring reflects that track record in future matching recommendations.

The creator economy was already large. At a projected $214–$314 billion in total ecosystem value in 2026, it’s becoming a major media category. AI matching is the infrastructure layer that makes it possible to deploy capital into that category with the precision and accountability that serious marketing investment requires. The guesswork isn’t eliminated, because no algorithm predicts human behavior perfectly. But the systematic, structural errors of follower-count-first selection, shallow vetting, and narrow discovery are increasingly inexcusable for brands with the tools to do better.

Final Takeaways

AI creator-product matching has crossed from early-adopter novelty to operational standard. The performance data is consistent enough, the tool accessibility broad enough, and the cost of manual selection errors high enough that continued reliance on gut-call creator selection is increasingly difficult to defend on business grounds.

The most important things to carry forward from this analysis:

- Audience affinity scoring is the single most valuable signal that AI matching provides — the behavioral alignment between a creator’s followers and your actual target customer. No manual process produces this at scale.

- Predictive matching enables early-mover positioning on emerging creators before they reach peak pricing. This is where the most asymmetric value lies for brands willing to invest in creator relationship development.

- Attribution integration is non-negotiable for performance marketing use cases. A matching platform that can’t connect to your revenue data is measuring the wrong things.

- Micro-influencer programs are the primary beneficiary of AI discovery tooling. The operational barrier that made them impractical at scale has been substantially lowered.

- AI identifies candidates for authentic partnerships — it doesn’t create them. The creative brief, the relationship investment, and the human judgment about what to ask a creator to do remain critical to outcomes.

- Brand safety auditing is severely under-deployed. Only 4% of brands conduct comprehensive vetting. This is a solvable problem with available technology, and the downside risk of not solving it is significant.

The brands performing best in creator marketing in 2026 are not the ones who have automated everything. They’re the ones who have automated the right things — discovery, fraud detection, performance prediction, attribution — and freed up human judgment for the parts of the process where it actually adds irreplaceable value.