Most enterprises have been “doing automation” for years. They have RPA bots running in finance, chatbots fielding tier-1 support tickets, and maybe a low-code workflow tool that HR uses for onboarding requests. On paper, that looks like progress. In practice, it’s a collection of isolated islands — each one solving a narrow problem, none of them talking to each other, and collectively creating a patchwork that’s expensive to maintain and nearly impossible to scale.

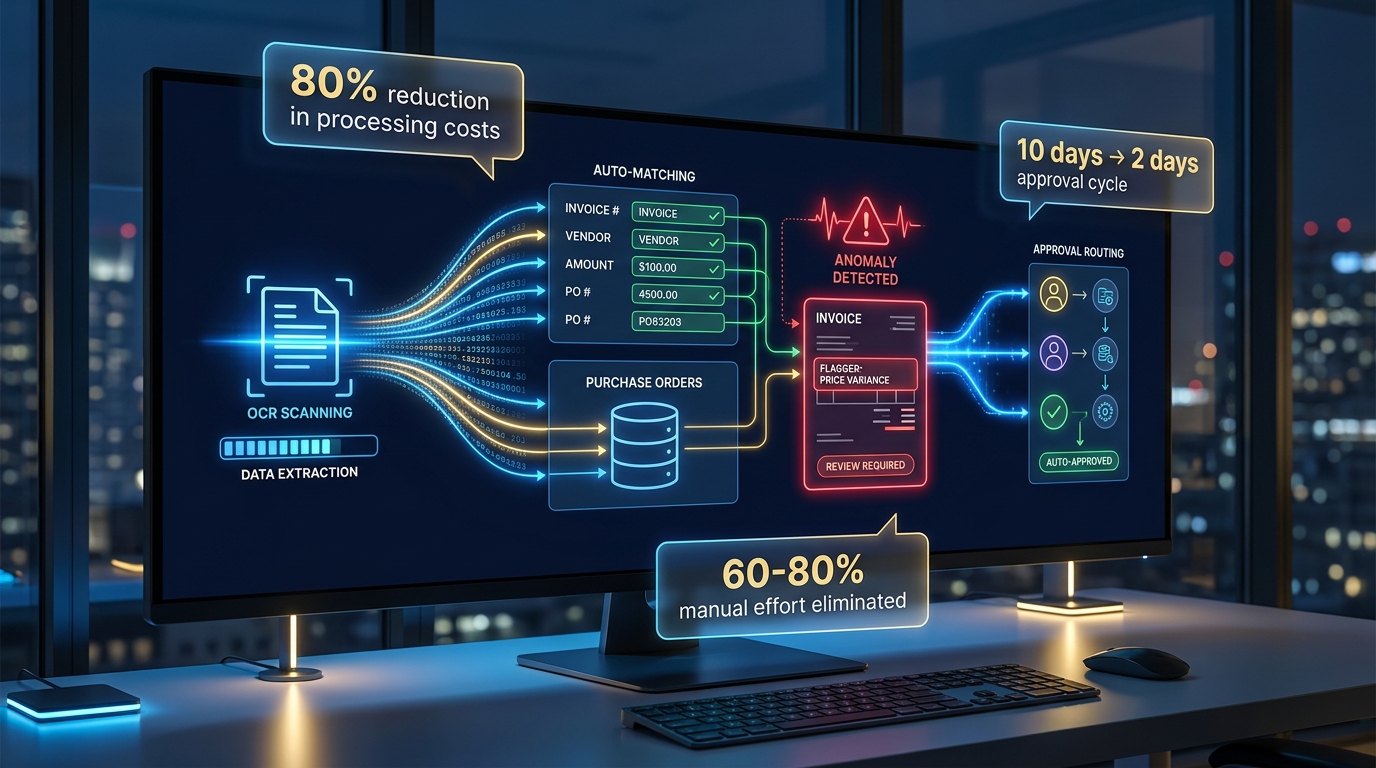

Hyperautomation is a fundamentally different bet. It isn’t a product you buy or a bot you deploy. It’s an architectural commitment to connecting intelligence, automation, and orchestration across the entire enterprise — so that workflows don’t just execute faster, they adapt, improve, and operate continuously without constant human intervention.

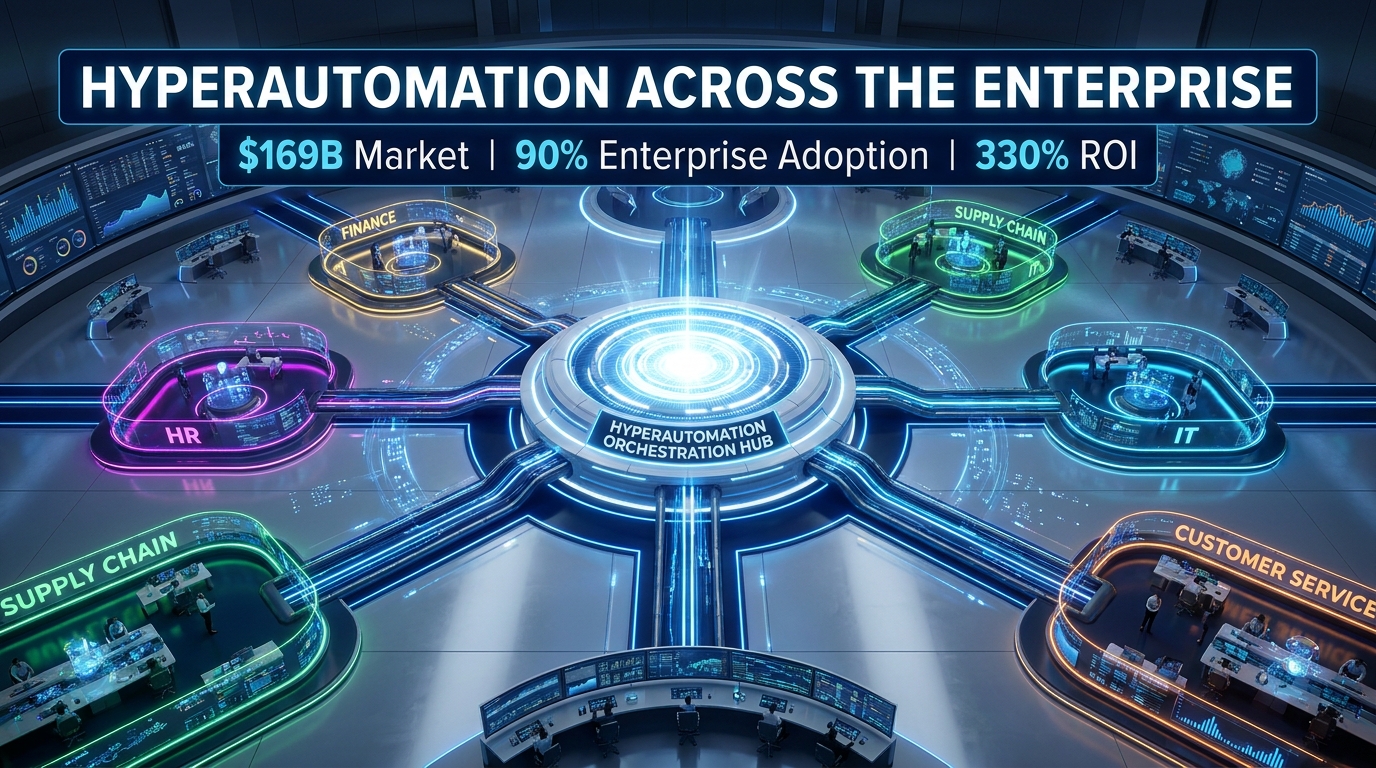

The numbers behind this shift are no longer speculative. The hyperautomation market sits at an estimated $65–169 billion in 2026 (estimates vary by methodology, but all point to massive scale), with forecasts projecting growth to $235–306 billion by 2034. Ninety percent of large enterprises now list hyperautomation as a strategic priority, and organizations reporting mature implementations cite average ROI figures north of 300%. But here’s the uncomfortable counterpoint: 70% of automation projects still fail. The gap between strategic priority and operational reality is enormous — and it’s almost never a technology problem.

This article is about closing that gap. Not with theory, but with department-by-department mechanics: what hyperautomation actually looks like in finance, HR, supply chain, IT, and customer service; what the companies that got it wrong missed; and how to build a roadmap that survives contact with your actual organization.

What Hyperautomation Actually Is — And What It’s Not

The term was coined by Gartner in 2019, but its meaning has evolved considerably. In 2026, hyperautomation is best understood as a technology stack and an operating philosophy — not a single tool or vendor category.

The Technology Stack

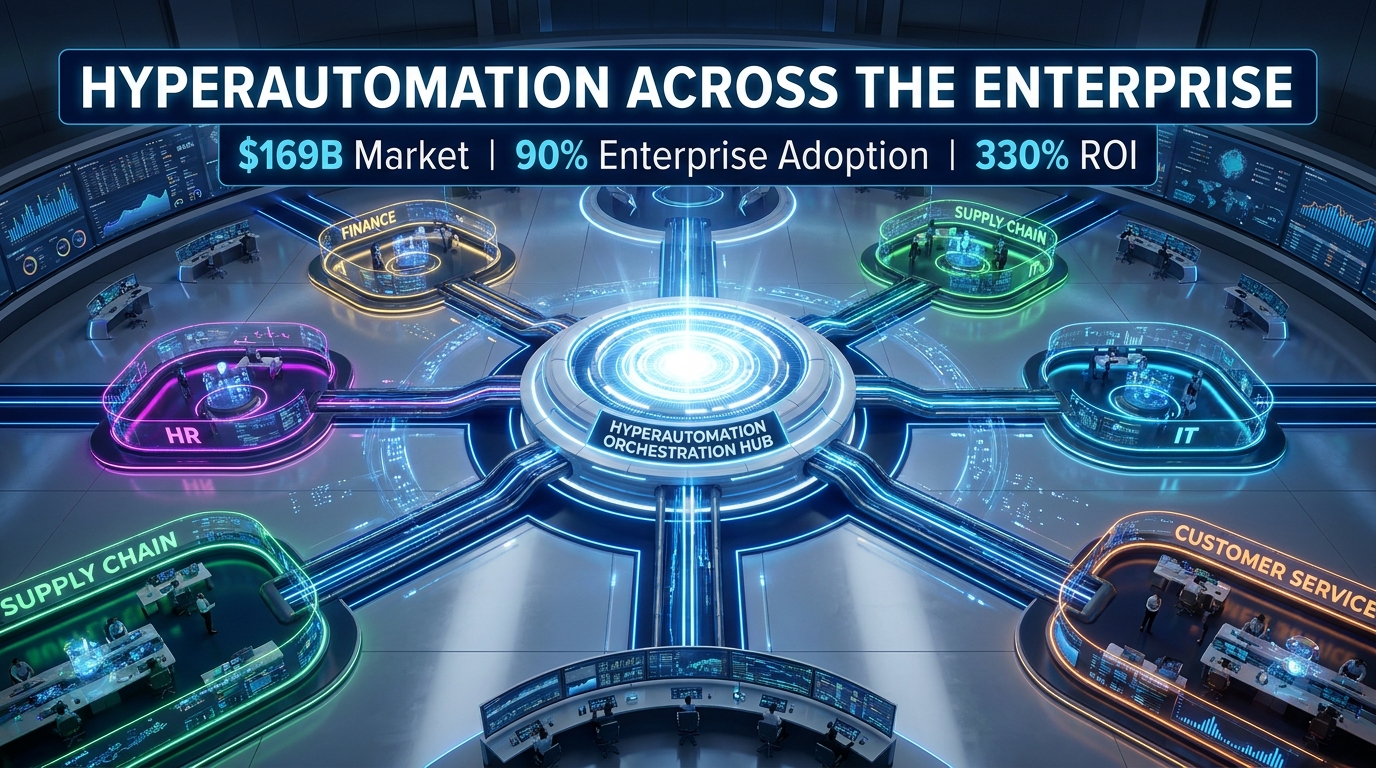

Hyperautomation combines multiple complementary technologies into a unified automation fabric:

- Robotic Process Automation (RPA): The execution layer for rule-based, repetitive tasks. RPA is still the foundation, but it’s no longer the ceiling.

- AI and Machine Learning: Brings pattern recognition, predictive analytics, anomaly detection, and adaptive decision-making to processes that change over time or involve ambiguous inputs.

- Natural Language Processing (NLP) and Intelligent Document Processing (IDP): Enables automation to handle unstructured data — emails, contracts, PDFs, scanned forms — not just clean spreadsheets.

- Process Mining and Task Mining: AI-driven discovery tools that analyze event logs from ERP, CRM, and other systems to identify bottlenecks, deviations, and automation opportunities before a single bot is built.

- Agentic AI: Autonomous AI agents capable of executing multi-step workflows, making decisions, coordinating with other systems, and handling exceptions without pre-scripted rules for every scenario. Gartner projects that 40% of enterprise applications will incorporate task-specific AI agents by the end of 2026, up from less than 5% in 2025.

- Low-Code/No-Code Platforms: Democratize automation by letting business users build and modify workflows without deep engineering resources.

- Orchestration Layers: Central coordination platforms that govern bots, AI agents, APIs, and human-in-the-loop steps across the entire workflow — the connective tissue that makes everything work together.

The Philosophy Difference

Traditional automation asks: “What repetitive task can we hand to a bot?” Hyperautomation asks: “What is the end-to-end process we’re trying to optimize, and how do we wire intelligence into every step of it?”

The practical implication is enormous. Traditional automation fails the moment it encounters an exception — an invoice in an unexpected format, an employee request that doesn’t match a template, a logistics disruption that wasn’t in the rule set. Hyperautomation is designed to handle exceptions as part of normal operation, either by routing them to AI decision engines or escalating to a human with full context already populated.
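One way to picture "exceptions as part of normal operation" is a small router: items that fit the rules go to a bot, items an AI model can classify confidently go to a decision engine, and everything else is escalated with its context attached. This is a minimal sketch only. The `WorkItem` fields, the `ai_confidence` score, and the 0.85 threshold are assumptions made for illustration, not values from any specific platform.

```python
from dataclasses import dataclass, field

# Hypothetical threshold: how confident the AI step must be to act on its own.
AI_CONFIDENCE_THRESHOLD = 0.85


@dataclass
class WorkItem:
    item_id: str
    matches_template: bool   # True when rule-based automation can handle it
    ai_confidence: float     # assumed score from an upstream AI model, 0..1
    context: dict = field(default_factory=dict)


def route(item: WorkItem) -> dict:
    """Return a routing decision instead of failing on the first exception."""
    if item.matches_template:
        return {"item_id": item.item_id, "route": "rpa_bot"}
    if item.ai_confidence >= AI_CONFIDENCE_THRESHOLD:
        return {"item_id": item.item_id, "route": "ai_decision_engine"}
    # Escalate with the context already populated so the reviewer does not start from zero.
    return {"item_id": item.item_id, "route": "human_review", "context": item.context}
```

The design point is that the fallback branch is an ordinary return value, not an error path, so unusual inputs never halt the workflow.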

That distinction is what separates the many enterprises that merely have automation from the minority achieving genuinely compounding returns on it.

The $169 Billion Reality Check: What the Market Scale Tells You

Before diving into department specifics, it’s worth pausing on the economics of hyperautomation in 2026. Multiple research organizations place the market value between $65 billion and $169 billion depending on methodology, but the directional signal is consistent: this is no longer an emerging technology experiment. It’s an operating infrastructure layer.

Who’s Leading Adoption

Large enterprises hold roughly 30% of the market share, and the Banking, Financial Services, and Insurance (BFSI) sector leads vertical adoption at approximately 15% of the total market. Manufacturing follows at 8%, with healthcare at 4%. The concentration in regulated, data-intensive industries is no accident: these are environments where process errors carry high costs and where audit-trail requirements, compliance monitoring, and high transaction volumes make automation ROI most immediate.

The ROI Distribution Problem

Averages are misleading here. Organizations cite first-year ROI ranging from 30% to 330%, with the high end concentrated among enterprises that made three specific investments simultaneously: governance infrastructure, process discovery before deployment, and workforce change management. Companies that bought technology first and figured out the rest later consistently land in the bottom quartile of returns.

A Fortune 500 manufacturer that implemented intelligent automation for predictive maintenance and quality control reported €1.98 million in annual savings and a 300% ROI within eight months. A mid-size firm automating invoice reconciliation cut cost-per-invoice by 80% and reduced approval cycles from ten days to two. These aren’t outliers — they’re the result of deliberate, structured implementation, not just deploying tools.

The SME Opening

While large enterprises hold the larger share, SMEs account for roughly 18% of adoption and are growing faster on a percentage basis. The rise of Hyperautomation-as-a-Service (HaaS), meaning pay-as-you-go cloud access to automation infrastructure, has dramatically lowered the entry barrier. A mid-market company no longer needs to build an automation center of excellence from scratch to access process mining, AI orchestration, and RPA tooling. The infrastructure can be licensed and layered in.

Process Mining First: The Step Most Organizations Skip

The most common implementation mistake in hyperautomation isn’t choosing the wrong technology — it’s automating the wrong process. Organizations are remarkably bad at knowing how their processes actually run, as distinct from how they’re supposed to run. Documented procedures and actual workflows almost never match.

What Process Mining Does

Process mining tools analyze event logs from existing enterprise systems — ERP, CRM, ITSM, finance platforms — to reconstruct the actual flow of work, including every deviation, exception, rework loop, and bottleneck. The output isn’t a flowchart someone drew in a workshop. It’s a data-accurate picture of how a process actually behaves across thousands of real executions.

This matters for hyperautomation in two ways. First, it identifies which processes have the most automation value — high frequency, high cost of exceptions, high variability. Second, it reveals process debt: the accumulated inefficiencies that, if automated without first being fixed, will simply be executed faster and at greater scale. Automating a broken process doesn’t fix it. It compounds it.
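To make the idea concrete, here is a toy sketch of the core step in process mining: rebuilding each case's actual path from an event log, then counting the distinct paths and spotting rework loops. The log format, activity names, and sample data are invented for illustration. Commercial process mining tools do far more than this.

```python
from collections import Counter, defaultdict
from datetime import datetime

# Toy event log: (case_id, activity, ISO timestamp). All values are made up.
EVENT_LOG = [
    ("inv-1", "receive", "2026-01-05T09:00"), ("inv-1", "match_po", "2026-01-05T10:00"),
    ("inv-1", "approve", "2026-01-06T10:00"), ("inv-1", "pay", "2026-01-07T10:00"),
    ("inv-2", "receive", "2026-01-05T09:30"), ("inv-2", "match_po", "2026-01-05T11:00"),
    ("inv-2", "fix_data", "2026-01-08T11:00"), ("inv-2", "match_po", "2026-01-09T11:00"),
    ("inv-2", "approve", "2026-01-10T11:00"), ("inv-2", "pay", "2026-01-11T11:00"),
    ("inv-3", "receive", "2026-01-06T08:00"), ("inv-3", "match_po", "2026-01-06T09:00"),
    ("inv-3", "approve", "2026-01-07T09:00"), ("inv-3", "pay", "2026-01-08T09:00"),
]


def build_traces(log):
    """Group events by case and order them by time to recover each case's actual path."""
    cases = defaultdict(list)
    for case_id, activity, ts in log:
        cases[case_id].append((datetime.fromisoformat(ts), activity))
    return {cid: [a for _, a in sorted(evts)] for cid, evts in cases.items()}


def variant_counts(traces):
    """Count how many cases follow each distinct path (a 'variant')."""
    return Counter(tuple(t) for t in traces.values())


def rework_cases(traces):
    """Cases where any activity occurs more than once, i.e. a rework loop."""
    return sorted(cid for cid, t in traces.items() if len(set(t)) < len(t))


traces = build_traces(EVENT_LOG)
variants = variant_counts(traces)
```

On this toy log, two of the three cases follow the same four-step path, while `inv-2` loops back through `match_po` after a `fix_data` step. That loop is the kind of process debt worth fixing before any bot is built.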

Task Mining as a Complement

Where process mining analyzes system logs, task mining uses screen recording and user interaction data to understand what individual employees actually do on their computers — which applications they switch between, which steps they perform manually that could be captured by a bot, where time is lost to copy-paste work between systems. Combined, process mining and task mining give a complete picture of where automation effort should go before a single bot is commissioned.

The Discovery-to-Deployment Pipeline

Leading organizations treat process discovery as a continuous function, not a one-time exercise. Process intelligence platforms run in the background of production systems, surfacing new automation candidates as business conditions change, monitoring deployed bots for performance drift, and flagging processes that have evolved past their original automation configuration. This creates a self-reinforcing feedback loop: the automation estate gets smarter over time rather than degrading.

Finance and Accounting: From Invoice Hell to Intelligent Processing

Finance was the first major department to adopt RPA at scale, which means it’s also the department most burdened with legacy automation debt. Finance teams in large enterprises often manage dozens of individual bots, each handling a narrow slice of a workflow, with human intervention required at every handoff point. Hyperautomation in finance means replacing that bot sprawl with end-to-end intelligent processing.

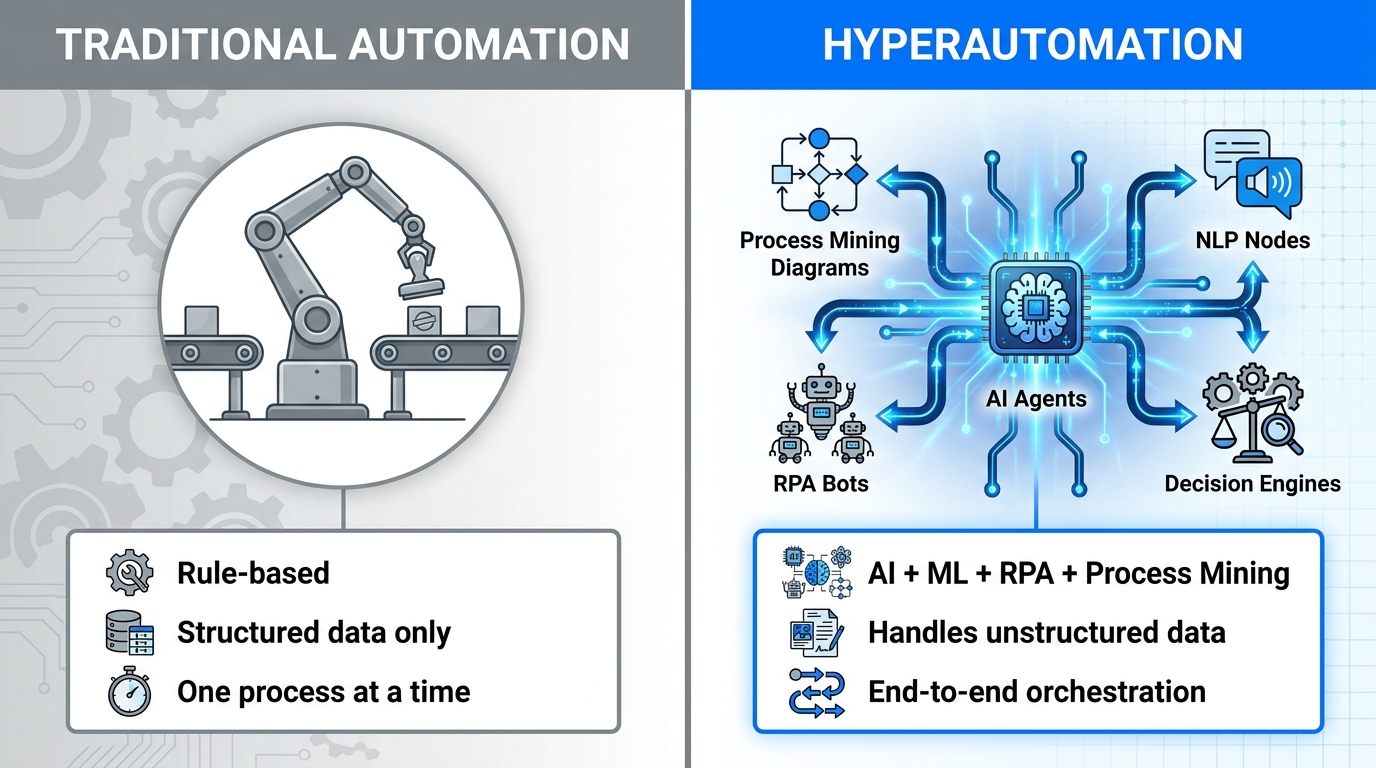

Accounts Payable and Invoice Processing

The AP workflow is the canonical hyperautomation finance use case, and for good reason. Invoice processing is high-volume, multi-format, exception-prone, and deeply consequential for vendor relationships and cash flow management. A hyperautomated AP workflow layers OCR and IDP to extract data from invoices in any format — PDF, scan, email attachment, EDI — followed by ML-driven matching against purchase orders and contracts, anomaly detection for duplicate invoices or out-of-range amounts, and automated routing for approval or payment.
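The decision logic that sits after extraction can be sketched in a few lines. The example below uses plain rules as a stand-in for the ML-driven matching described above. The `Invoice` fields, the 2% tolerance, and the outcome labels are assumptions for illustration, not any vendor's schema.

```python
from dataclasses import dataclass

AMOUNT_TOLERANCE = 0.02  # assumed: allow a 2% difference between invoice and PO totals


@dataclass(frozen=True)
class Invoice:
    vendor: str
    invoice_no: str
    po_number: str
    amount: float


def process_invoice(inv, open_pos, seen):
    """Return 'pay', 'duplicate', or 'review' for one extracted invoice.

    open_pos maps PO number -> expected amount; seen holds (vendor, invoice_no) keys.
    """
    key = (inv.vendor, inv.invoice_no)
    if key in seen:
        return "duplicate"
    seen.add(key)
    expected = open_pos.get(inv.po_number)
    if expected is None:
        return "review"   # no purchase order to match against
    if abs(inv.amount - expected) > AMOUNT_TOLERANCE * expected:
        return "review"   # out-of-range amount
    return "pay"
```

In a production pipeline the `review` branch would carry the reason and the extracted fields along to the approver, so the human step starts with full context.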

The quantified results are consistent across organizations: a 60–80% reduction in processing costs, with approval cycle times dropping from an average of 10 days to 2 days. One mid-size firm cut cost-per-invoice by 80% across approximately 500 monthly invoices. For enterprise-scale AP departments processing tens of thousands of invoices monthly, the same percentage saving applies to a far larger cost base.

Anti-Money Laundering and Compliance Monitoring

Financial services organizations are deploying hyperautomation specifically to handle compliance workloads that traditional rule-based systems struggle with. AML screening is a strong example: AI models screen transactions in real time, detect behavioral patterns that deviate from baseline, and flag high-risk activity for human review — all while maintaining complete audit trails for regulatory examination. The speed improvement over manual review is significant, but the reduction in false positives (which plague rule-based AML systems) is arguably more valuable, because false positives consume analyst time and create alert fatigue that causes real risks to be missed.
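"Deviates from baseline" can be illustrated with the simplest possible baseline: a customer's own transaction history. The sketch below flags an amount by its z-score against that history. This is a deliberately simplified stand-in for the behavioral models real AML systems use, and the threshold of 3.0 is an assumption.

```python
from statistics import mean, stdev

Z_THRESHOLD = 3.0  # assumed cut-off; real programs tune this against false-positive rates


def flag_transaction(history, amount, z_threshold=Z_THRESHOLD):
    """Flag an amount that deviates strongly from the customer's own baseline."""
    if len(history) < 2:
        return {"flag": True, "reason": "insufficient history"}
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        z = None
        flagged = amount != mu
    else:
        z = (amount - mu) / sigma
        flagged = abs(z) > z_threshold
    # The returned record doubles as an audit-trail entry for later review.
    return {"flag": flagged, "baseline_mean": mu, "z_score": z}
```

Raising or lowering `z_threshold` is the lever that trades missed risk against false positives, which is exactly the tuning problem described above.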

Financial Reporting and Reconciliation

Month-end close — the painful ritual of reconciling accounts, consolidating data from multiple systems, and producing management reports — is another prime target. Hyperautomated close processes use RPA to pull data from ERP systems, AI to identify reconciling items and suggest journal entries, and workflow orchestration to route exceptions to the appropriate finance staff with full context already attached. Organizations report reducing close cycles from five to seven days down to one to two days.
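Identifying reconciling items is, at its core, a matching problem. A minimal sketch, assuming both sides can be reduced to `(reference, amount_in_cents)` pairs, is shown below. Real close processes also need fuzzy matching, date windows, and many-to-one matches, which this example leaves out.

```python
from collections import Counter


def reconcile(ledger, bank):
    """Match entries on (reference, amount in cents); return unmatched items per side."""
    ledger_left = Counter(ledger)
    bank_left = Counter(bank)
    matched = ledger_left & bank_left   # multiset intersection
    ledger_left -= matched
    bank_left -= matched
    return {
        "matched": sum(matched.values()),
        "ledger_only": sorted(ledger_left.elements()),
        "bank_only": sorted(bank_left.elements()),
    }
```

Everything left in `ledger_only` and `bank_only` is a reconciling item, and that is the short list an orchestration layer would route to finance staff.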

Loan and Credit Processing

For financial institutions, loan origination has historically been a multi-day, document-intensive process requiring staff to manually verify income, employment, credit history, and collateral across multiple external sources. Hyperautomated lending workflows use AI to automate extraction and verification, ML models to run initial credit assessments, and automated routing to move applications to the appropriate underwriter or issue instant approvals for straightforward cases. Processing time drops from days to hours for standard applications.

HR and People Operations: Automating the Full Employee Lifecycle

HR is a department that generates enormous volumes of structured data — employee records, payroll inputs, benefits elections, compliance filings — alongside significant volumes of unstructured interaction: emails, performance notes, accommodation requests, exit interviews. That combination makes it an ideal hyperautomation target, because you can apply RPA to the structured data flows and NLP/AI to the conversational and document-heavy processes.

Talent Acquisition and Recruitment

Hyperautomated recruitment workflows use NLP to screen resumes and job applications against role requirements at a speed no human team can match, AI to score candidates and identify the highest-probability fits, and automated scheduling tools to coordinate interview logistics without recruiter intervention. The impact isn’t just speed — it’s consistency. AI-driven screening applies identical criteria to every application, reducing the variability that creates both legal risk and missed talent in manual processes.

The important caveat: recruitment AI requires active governance. Bias in training data produces biased screening outcomes. Organizations that have succeeded with hyperautomated recruiting maintain human oversight at the point of initial shortlisting and conduct regular audits of screening outcomes across demographic groups.
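A regular audit of screening outcomes can start with something as simple as comparing shortlisting rates across groups. The sketch below computes each group's rate relative to the highest-rate group and lists the groups that fall below a chosen ratio. The 0.8 default echoes the commonly cited "four-fifths" rule of thumb and is used here as an assumption. It is not a legal standard for any particular jurisdiction.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, shortlisted: bool). Returns rate per group."""
    totals, selected = defaultdict(int), defaultdict(int)
    for group, shortlisted in outcomes:
        totals[group] += 1
        selected[group] += int(shortlisted)
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's rate relative to the highest-rate group (1.0 = parity)."""
    top = max(rates.values())
    return {g: (r / top if top else 1.0) for g, r in rates.items()}


def groups_to_review(outcomes, min_ratio=0.8):
    """Groups whose shortlisting rate falls below min_ratio of the top group's rate."""
    ratios = impact_ratios(selection_rates(outcomes))
    return sorted(g for g, r in ratios.items() if r < min_ratio)
```

A flagged group is a prompt for human investigation of the screening criteria, not an automatic verdict.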

Onboarding

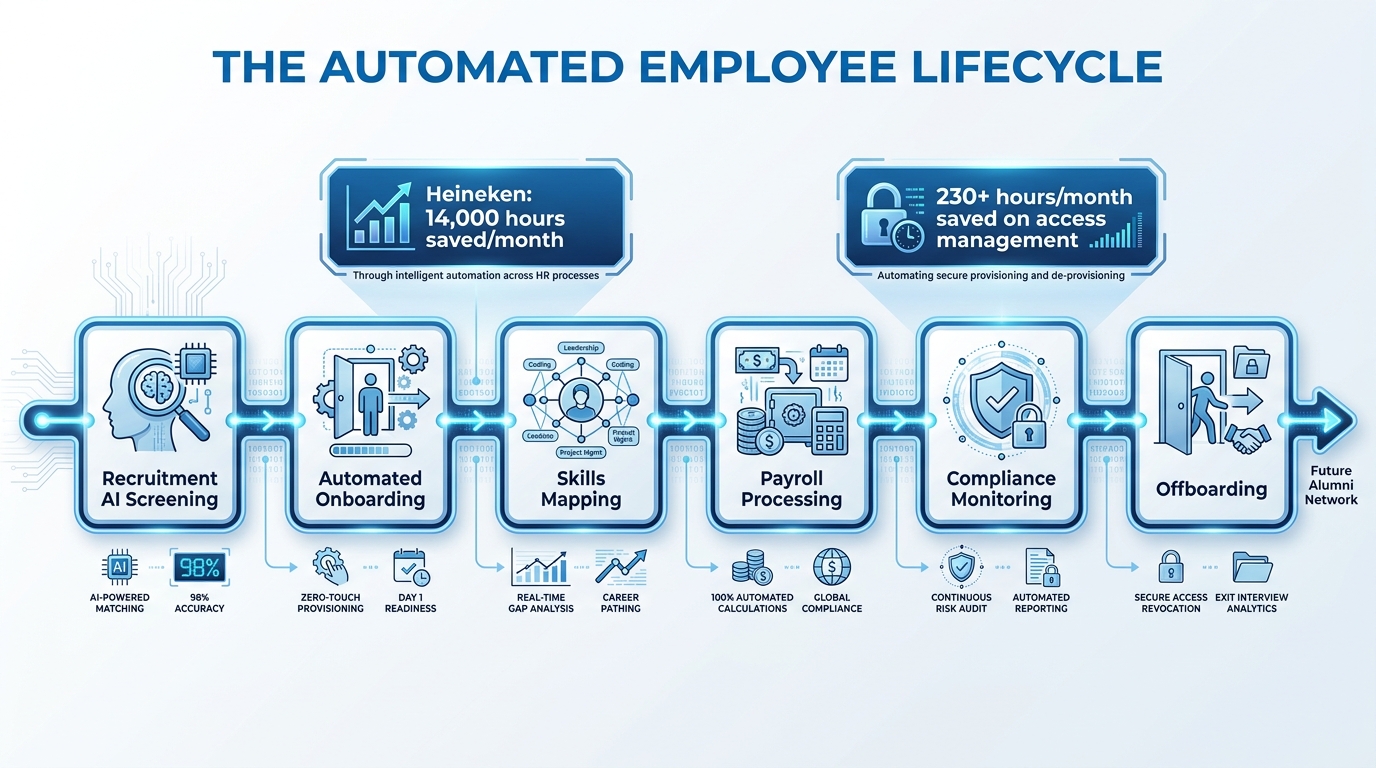

New employee onboarding involves dozens of discrete tasks across HR, IT, facilities, payroll, and legal — and most organizations handle this with a combination of spreadsheets, email chains, and manual checklists. A hyperautomated onboarding process orchestrates all of it: system access provisioning, equipment requests, benefits enrollment, policy acknowledgment workflows, and manager notification — all triggered automatically when a hire is confirmed in the ATS, and all tracked in a central dashboard.
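Orchestration of this kind is essentially a dependency graph that gets walked when the trigger fires. The sketch below uses Python's standard-library `graphlib` to turn a task graph into waves of tasks that can run in parallel. The task names, their dependencies, and the `on_hire_confirmed` entry point are hypothetical.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical task graph: each task maps to the set of tasks it depends on.
ONBOARDING_TASKS = {
    "create_hr_record": set(),
    "provision_accounts": {"create_hr_record"},
    "order_equipment": {"create_hr_record"},
    "enroll_benefits": {"create_hr_record"},
    "policy_acknowledgment": {"provision_accounts"},
    "notify_manager": {"provision_accounts", "order_equipment"},
}


def plan_waves(tasks):
    """Return lists of tasks that can run in parallel, in dependency order."""
    sorter = TopologicalSorter(tasks)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


def on_hire_confirmed(employee_id):
    """Entry point an ATS webhook might call; here it only builds the plan."""
    return {"employee_id": employee_id, "waves": plan_waves(ONBOARDING_TASKS)}
```

Tasks in the same wave have no dependencies on each other, so IT, facilities, and benefits work can proceed at the same time instead of waiting on an email chain.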

A particularly clear benchmark: an enterprise managing approximately 7,000 monthly employee access card events via RPA and ServiceNow integration saved over 230 hours per month — essentially 1.5 FTEs — while simultaneously improving security and achieving 24/7 processing capability. The access events that previously required manual intervention now route, provision, and close without human touch.

Payroll and Benefits Administration

Payroll processing is one of the most well-established RPA use cases, but hyperautomation extends this significantly. Beyond data extraction and calculation, AI models now handle exception management — identifying anomalies in hours data, flagging discrepancies between timesheet entries and scheduling systems, and automatically resolving common exception types without payroll staff involvement. Cognizant’s integration of RPA, AI, and BPM across payroll, expenses, and data reconciliation produced substantial time savings and measurably increased accuracy in reporting — without increasing headcount.

Compliance and Employee Records

HR compliance — tracking certifications, ensuring mandatory training completion, managing documentation retention, and responding to audit requests — is largely a hyperautomation-ready workload. Intelligent workflows monitor expiry dates on certifications, trigger renewal reminders, pull completion data from LMS systems, and generate compliance reports automatically. For organizations in regulated industries managing complex workforce compliance obligations, this reduces both risk and the manual overhead that has traditionally consumed significant HR bandwidth.
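The expiry-monitoring piece is straightforward to sketch. The function below sorts certification records into expired, expiring, and current buckets. The 30-day reminder window and the record layout are assumptions, and a real workflow would read these records from an LMS or HRIS.

```python
from datetime import date, timedelta

REMINDER_WINDOW = timedelta(days=30)  # assumed lead time before expiry


def compliance_report(certifications, today):
    """Classify each (employee, cert, expiry_date) as expired, expiring, or current."""
    report = {"expired": [], "expiring": [], "current": []}
    for employee, cert, expires in certifications:
        if expires < today:
            status = "expired"
        elif expires - today <= REMINDER_WINDOW:
            status = "expiring"
        else:
            status = "current"
        report[status].append((employee, cert))
    return report
```

The `expiring` bucket is what would trigger renewal reminders, and the full report is the artifact an auditor would ask for.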

Heineken’s Federated Model

Heineken’s hyperautomation program is one of the most-cited enterprise examples for good reason. By implementing a federated model (a central CoE providing standards and infrastructure, with automation capability distributed to individual business units), the company scaled to 140+ automated processes across IT, HR, and procurement. The result: 14,000 hours saved per month, or about 168,000 hours a year at that rate, with projections of 1 million hours annually as the program continues to scale. The federated model is particularly instructive because it solved the tension between standardization and business-unit autonomy that paralyzes many enterprise automation programs.

Supply Chain and Operations: Predictive, Connected, Self-Adjusting

Supply chain is where hyperautomation becomes genuinely exciting — and where the gap between traditional automation and the hyperautomated version is most dramatic. A traditional automated supply chain can execute a pre-programmed reorder when inventory hits a threshold. A hyperautomated supply chain senses disruption, models response options, reroutes logistics, adjusts procurement, and updates demand forecasts — all faster than a human operations team could convene a meeting to discuss the problem.

Predictive Maintenance

Industrial IoT sensors generate continuous data on equipment performance — temperature, vibration, pressure, cycle counts — which AI models analyze to detect patterns that precede failure. This moves maintenance from reactive (fix it when it breaks) and scheduled (maintain it on a calendar) to predictive (intervene precisely when data indicates imminent failure). Toyota’s implementation of IoT and AI-driven predictive maintenance achieved a 25% reduction in downtime and €8.63 million in savings. A separate Fortune 500 manufacturer reported a 75% efficiency boost and €1.98 million in annual savings within eight months of implementation.
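A very small version of "intervene when the data says so" is a rolling average compared against a healthy baseline. The sketch below is illustrative only: the baseline value, the window size, and the 25% drift ratio are assumptions, and production systems typically use trained models over many sensor channels, not a single threshold.

```python
from collections import deque
from statistics import mean


class VibrationMonitor:
    """Flags a machine when the recent average drifts above its healthy baseline."""

    def __init__(self, baseline, window=5, drift_ratio=1.25):
        self.baseline = baseline         # assumed healthy vibration level (mm/s)
        self.drift_ratio = drift_ratio   # assumed: alert at 25% above baseline
        self.readings = deque(maxlen=window)

    def add(self, value):
        """Record a reading; return True when maintenance should be scheduled."""
        self.readings.append(value)
        if len(self.readings) < self.readings.maxlen:
            return False                 # not enough data for a stable average
        return mean(self.readings) > self.baseline * self.drift_ratio
```

Averaging over a window means a single noisy reading does not trigger a work order, while a sustained upward drift does.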

The economics of predictive maintenance are compelling precisely because unplanned downtime is disproportionately expensive. A single unexpected production line shutdown in a high-throughput manufacturing environment can cost more than months of predictive maintenance infrastructure investment.

Demand Forecasting and Inventory Intelligence

Traditional demand forecasting relies on historical sales data run through statistical models that assume the future will largely resemble the past. Hyperautomated forecasting integrates external signals — social media trends, weather patterns, economic indicators, competitor pricing, supplier lead time changes — into ML models that continuously update forecasts based on real-world conditions rather than historical averages.

Unilever’s integration of AI, RPA, and IoT for inventory management and demand forecasting delivered enhanced efficiency, cost savings, and real-time visibility across its supply network. Poloplast’s deployment of AI-driven forecasting via Dynamics 365 eliminated data silos and produced measurably more precise predictions — reducing both overstock and stockout events that had previously required manual expediting.

Logistics and Disruption Response

DHL’s hyperautomation program in logistics automation and shipment tracking improved delivery accuracy and enabled AI-powered demand planning that measurably reduced waste. More significantly, AI-driven logistics systems can now respond to disruptions — port delays, weather events, carrier capacity constraints — in real time, automatically rerouting shipments, notifying customers, and adjusting downstream inventory positioning without waiting for a logistics coordinator to notice the problem and begin manual intervention.

Digital Twins and Simulation

The emergence of digital twins — virtual replicas of physical supply chain assets and flows, updated continuously from real sensor and transaction data — has created a new layer of hyperautomation capability. Organizations can now model the impact of proposed changes (a new supplier, a facility closure, a product launch) before executing them, running simulations against the digital twin rather than finding out what happens in the real supply chain. This is particularly valuable in manufacturing-intensive industries where supply chain reconfiguration decisions carry significant capital risk.

IT and AIOps: The Department That Has to Eat Its Own Cooking

IT occupies an unusual position in the hyperautomation landscape. It’s simultaneously the department most capable of implementing automation and the department most responsible for supporting everyone else’s automation efforts. And in many organizations, IT’s own operations remain surprisingly manual — network monitoring, incident management, software provisioning, and infrastructure scaling are often still handled by teams that receive alerts and then manually investigate and resolve them.

AIOps: From Reactive to Predictive Operations

AIOps platforms apply AI and ML to IT operational data — logs, metrics, events, traces — to detect anomalies, correlate incidents across systems, and identify root causes faster than any human analyst could. The result is a shift from reactive operations (a user reports a problem, IT investigates) to predictive operations (IT’s systems detect a developing problem before users experience it and initiate automated remediation).

The Stonebranch Global IT Automation Report for Q1 2026 found that 93% of enterprises now have centralized automation teams supporting more than 200 self-service users across development, cloud operations, data engineering, and business functions. Eighty-eight percent run hybrid IT environments that require orchestration across on-premise and cloud infrastructure — which means IT automation is no longer optional. The complexity of modern hybrid IT simply cannot be managed manually at scale.

Incident Management and Self-Healing Infrastructure

Hyperautomated IT operations implement automated playbooks that trigger in response to specific alert patterns, such as restarting services, clearing queues, scaling resources, or isolating affected network segments, without waiting for human intervention. Telefonica O2’s experience is instructive here. Their RPA-based system required four full-time staff members just to monitor and manually restart bots when they failed, while handling approximately 20,000 daily interactions. After switching to an AI-orchestrated system with automated failure recovery, they scaled to over 100,000 daily interactions and 1.4 million monthly requests. That is a fivefold increase over the original daily volume, achieved with the same headcount, now redirected to higher-value work.
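The playbook idea can be sketched as a table that maps alert patterns to ordered remediation steps, with escalation as the fallback. The patterns, step names, and the `execute` callback below are all hypothetical. A real implementation would call into the organization's own tooling at that point.

```python
import re

# Hypothetical playbook table: alert pattern -> ordered remediation steps.
PLAYBOOKS = [
    (re.compile(r"service .* not responding"), ["restart_service", "verify_health"]),
    (re.compile(r"queue depth .* exceeded"), ["clear_dead_letters", "scale_consumers"]),
    (re.compile(r"cpu .* above \d+%"), ["scale_out"]),
]


def remediate(alert_message, execute):
    """Run the first matching playbook; escalate when nothing matches or a step fails.

    execute is a callable(step_name) -> bool supplied by the caller.
    """
    for pattern, steps in PLAYBOOKS:
        if pattern.search(alert_message.lower()):
            for step in steps:
                if not execute(step):
                    return {"status": "escalated", "failed_step": step}
            return {"status": "resolved", "steps": steps}
    return {"status": "escalated", "failed_step": None}
```

Unknown alerts and failed steps both end in escalation, so automated recovery never silently swallows a problem it cannot fix.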

Software Development and DevOps Automation

Hyperautomation in the software development lifecycle means automated testing pipelines, AI-assisted code review, automated security scanning, and intelligent deployment orchestration that routes releases through appropriate approval gates based on risk profile. The effect is compressed cycle times — from code commit to production deployment — with higher quality gates rather than lower ones.

Network Provisioning and Cloud Operations

Gartner predicted that by 2026, 30% of enterprises would automate more than half of their network activities, up from less than 10% in mid-2023. Network automation handles VLAN provisioning, firewall rule updates, bandwidth allocation, and infrastructure scaling based on real-time demand signals. Cloud cost optimization is a related growth area: AI systems continuously analyze resource utilization and rightsizing opportunities, automatically adjusting instance types, shutting down idle resources, and shifting workloads to optimal availability zones. Organizations using this approach report cloud spending reductions of 15–30% without any impact on service performance.
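The rightsizing logic can be illustrated with a rule-based stand-in for the AI analysis: classify each instance from its CPU utilization samples. The thresholds and instance names below are assumptions, not vendor recommendations, and real tools also weigh memory, network, and cost data.

```python
def rightsizing_actions(instances, idle_cpu=5.0, low_cpu=30.0):
    """Suggest an action per instance from CPU utilization samples (percent).

    Thresholds are illustrative assumptions, not vendor recommendations.
    """
    actions = {}
    for name, samples in instances.items():
        avg = sum(samples) / len(samples)
        if avg < idle_cpu:
            actions[name] = "stop"       # effectively idle
        elif avg < low_cpu and max(samples) < 2 * low_cpu:
            actions[name] = "downsize"   # low average and no large spikes
        else:
            actions[name] = "keep"
    return actions
```

Checking the peak as well as the average keeps a spiky workload from being downsized just because it is quiet most of the time.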

Customer Service: Hyperautomation at the Moment of Truth

Customer service is the department where hyperautomation most directly intersects with revenue. A poorly handled customer interaction doesn’t just create support cost — it creates churn, negative word-of-mouth, and lost expansion revenue. The stakes are high, which is why the evolution from simple chatbots to genuinely intelligent service automation matters so much.

From Chatbots to Agentic Customer Service

First-generation chatbots could handle simple FAQ responses and route tickets to the right queue. Agentic AI customer service systems can now handle complex, multi-step service interactions: checking order status across backend systems, processing a return and initiating a refund, troubleshooting a technical problem by querying product databases and running diagnostic scripts, and escalating to a human agent with a complete interaction summary already populated — all within a single conversation thread.

ABANCA, a Spanish bank, combined generative AI, OCR, and RPA to handle customer request processing at a scale that produced the equivalent of 150,000 human workdays of document processing, with 60% faster response times. Bank of America’s hyperautomated back-end processes reduced operational costs while enabling higher interaction volumes without proportional headcount growth — the key economic unlock of intelligent customer service automation.

Proactive Service and Predictive Engagement

Hyperautomation enables a shift from reactive to proactive customer service — one of the most significant capability changes available to customer-facing organizations. Rather than waiting for a customer to contact support about a shipping delay, an AI system detects the delay, identifies affected customers, generates personalized proactive outreach with updated ETAs and, where appropriate, automatic compensation offers — all before the customer knows there’s a problem. The CSAT and churn impact of proactive versus reactive service communication is well-documented and substantial.

Omni-Channel Consistency

A persistent customer service challenge is context loss when customers switch channels — calling after a chat session and having to repeat everything, or emailing after a phone call to a representative who has no record of the conversation. Hyperautomated customer service architectures maintain a unified customer context layer that follows the interaction across channels, giving every agent and AI touchpoint the same view of the customer’s history, current issue status, and prior interactions. This reduces resolution time and eliminates the experience fragmentation that customers consistently rank as their most frustrating service problem.

Governance, Centers of Excellence, and Why Runaway Automation Is a Real Risk

This is the section that doesn’t make vendor brochures, but it should be the first thing any organization reads before launching a hyperautomation program. Governance isn’t a compliance checkbox. It’s the structural difference between automation that compounds value and automation that compounds risk.

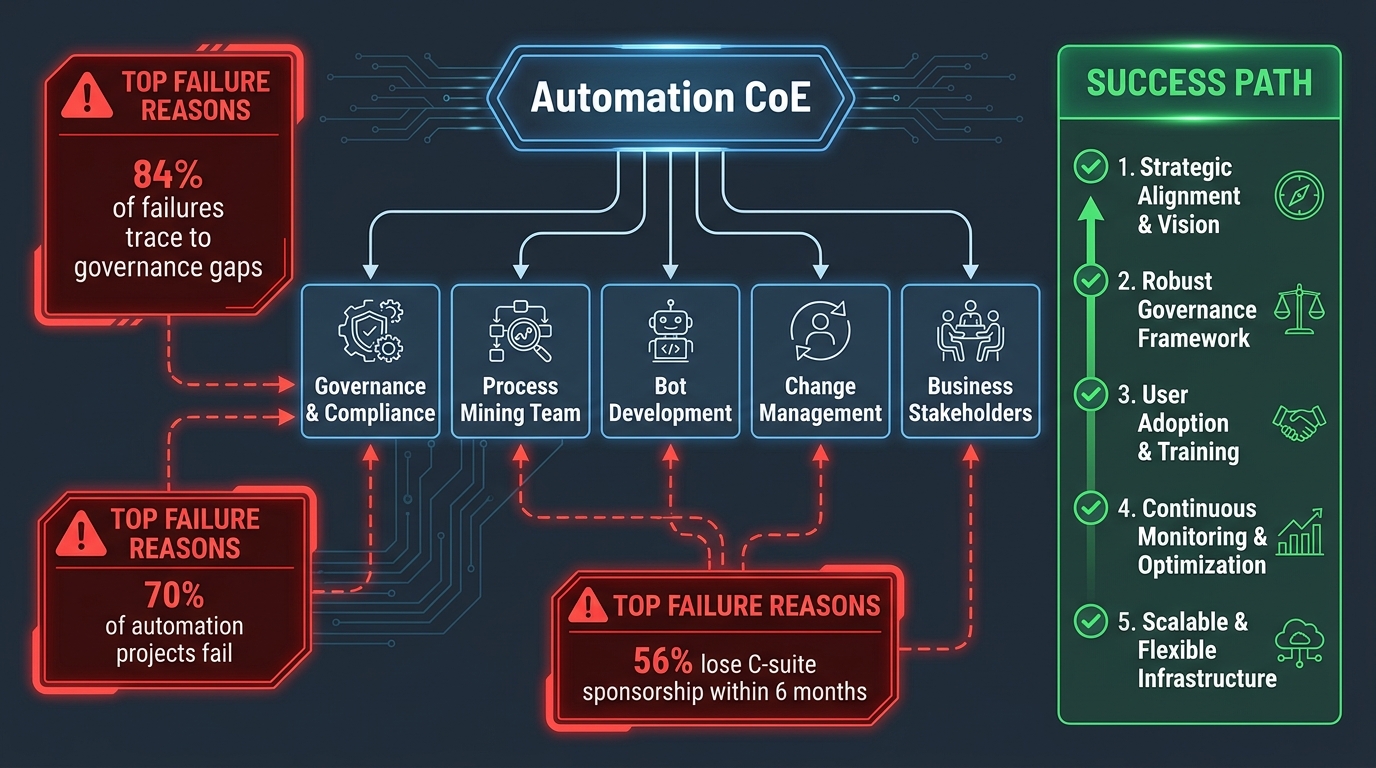

The Failure Statistics Are Uncomfortable

Seventy percent of automation projects fail. Leadership misalignment drives the majority of enterprise automation failures, and in 56% of failed implementations, executive sponsorship has evaporated within six months. Organizations frequently lack dedicated business owners with C-suite accountability for automation outcomes. And when governance is implemented after deployment — rather than being built in from the start — the organization is left with compliance exposure, audit trail gaps, and automation that operates outside of any meaningful oversight structure.

The specific failure modes from real implementations are instructive. Emerson’s pilot failed SLA commitments because its proprietary RPA tool lacked connectors for Oracle ERP; custom Python workarounds introduced fragility that caused delays and integration mismatches. The lesson isn’t that Emerson chose the wrong ERP — it’s that no one mapped integration requirements before committing to a tool. Newell’s implementation wasted infrastructure through single-threaded architecture that saturated servers, blocking scaling despite significant investment. These aren’t technology failures. They’re planning and governance failures that technology got blamed for.

What an Effective Automation CoE Does

A well-structured Automation Center of Excellence performs five distinct functions:

- Process Discovery and Prioritization: Maintains a continuously updated pipeline of automation candidates, scored against value, complexity, and risk criteria.

- Standards and Architecture: Defines technology standards, integration patterns, and development methodologies to prevent bot sprawl and technical debt accumulation.

- Governance and Compliance: Ensures automated workflows maintain audit trails, comply with regulatory requirements, and have defined accountability for outcomes.

- Performance Monitoring: Tracks bot health, process performance, and business outcomes across the entire automation estate — not just at deployment.

- Change Management and Business Engagement: Partners with business units to identify opportunities, manage rollouts, and handle the human side of process change.
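The scoring mentioned under Process Discovery and Prioritization can be as simple as a weighted sum. The sketch below ranks candidates on value, complexity, and risk using 1-to-5 ratings. The weights, the rating scale, and the candidate names in the usage example are assumptions that a real CoE would calibrate with its stakeholders.

```python
from dataclasses import dataclass

# Assumed weights; a real CoE would calibrate these with its stakeholders.
WEIGHTS = {"value": 0.5, "complexity": 0.3, "risk": 0.2}


@dataclass
class Candidate:
    name: str
    value: int        # 1 (low) to 5 (high) expected benefit
    complexity: int   # 1 (simple) to 5 (hard)
    risk: int         # 1 (low) to 5 (high)


def score(c):
    """Higher is better: reward value, penalize complexity and risk."""
    return round(
        WEIGHTS["value"] * c.value
        + WEIGHTS["complexity"] * (6 - c.complexity)
        + WEIGHTS["risk"] * (6 - c.risk),
        2,
    )


def prioritize(candidates):
    """Return candidates ordered from highest to lowest score."""
    return sorted(candidates, key=score, reverse=True)
```

For example, a high-value, low-complexity candidate such as `Candidate("invoice matching", 5, 2, 2)` ranks ahead of a harder, riskier one such as `Candidate("contract drafting", 4, 5, 4)`.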

The CoE model that works in 2026 is federated, not centralized. A central team owns standards, governance, and shared infrastructure. Business units own specific automation implementations with dedicated product ownership. This prevents the two failure modes that plague CoE programs: the ivory tower CoE that builds automation the business doesn’t use, and the fragmented approach where every department builds independently without shared standards.

AI Governance Is a Distinct Requirement

As GenAI components enter production automation workflows, a new governance requirement emerges: validating AI outputs before they affect real business processes. GenAI hallucinations — plausible-sounding but incorrect outputs — entering production workflows without validation layers have caused errors and regulatory exposure in early-adopter organizations. The governance response is structured validation checkpoints for any AI-generated output that triggers a consequential action, combined with monitoring systems that detect statistical shifts in AI behavior over time. Human-in-the-loop design isn’t a concession to AI immaturity — it’s a permanent architectural feature for high-stakes decision workflows.
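A validation checkpoint can be sketched as a gate that every AI-proposed action must pass before anything executes. The field names, the allowed actions, and the 500 auto-approval limit below are hypothetical. The point is the shape of the control, not the specific rules.

```python
def validate_ai_output(output, allowed_actions, max_amount):
    """Check an AI-proposed action before it can trigger anything consequential.

    Returns (approved, reasons). Field names here are hypothetical.
    """
    reasons = []
    if output.get("action") not in allowed_actions:
        reasons.append("unknown action")
    amount = output.get("amount")
    if not isinstance(amount, (int, float)) or amount < 0:
        reasons.append("invalid amount")
    elif amount > max_amount:
        reasons.append("amount exceeds auto-approval limit")
    if not output.get("source_document_id"):
        reasons.append("no source document cited")
    return (not reasons, reasons)


def checkpoint(output, allowed_actions=("refund", "credit"), max_amount=500):
    """Route a proposed action to execution or to human review."""
    approved, reasons = validate_ai_output(output, allowed_actions, max_amount)
    return {"route": "execute" if approved else "human_review", "reasons": reasons}
```

Requiring a cited source document is one simple guard against plausible-sounding but unsupported outputs, and the collected reasons give the human reviewer a head start.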

The Workforce Equation: Change Management That Actually Works

Every hyperautomation initiative eventually runs into the same human reality: the people whose work is being changed didn’t design the change and often weren’t adequately consulted about it. The technical implementation can be flawless and still fail if the workforce transformation isn’t handled with the same rigor as the technology deployment.

The Data on Employee Resistance

Seventy-five percent of workers expect their roles to shift significantly as automation expands. Ninety-two percent of technology roles are already evolving. Yet a Paylocity survey of HR leaders conducted in late 2025 and early 2026 found that while 98% of respondents recognized automation’s high impact on their workforce, only 15% had a defined change management plan and fewer than 40% had allocated budget and timeline for the transition. The gap between acknowledged impact and actual preparation is staggering.

What Successful Change Management Looks Like

Organizations that have successfully navigated hyperautomation workforce transitions share several practices:

Involve employees in process discovery. The people who perform a process know where the actual friction is, where exceptions occur, and what the automation really needs to handle. Excluding them from discovery produces automation that works in theory and breaks in practice. Including them creates stakeholders who have shaped the solution and are invested in its success.

Communicate outcomes, not just changes. “Your job is changing” produces anxiety. “Here’s what the new version of your role focuses on, here’s why it’s better, and here’s what support you’ll receive” produces engagement. The communication architecture matters enormously.

Invest in micro-learning, not classroom training. Leading organizations in 2026 embed AI literacy and automation tooling education directly into daily workflows rather than scheduling periodic training sessions. Continuous skill development, delivered in the context of actual work, produces higher retention and faster capability development than classroom approaches.

Design roles, not just reductions. The organizations that have achieved the best outcomes from hyperautomation didn’t approach it as a headcount reduction exercise. They approached it as a role redesign: what do we want our finance team doing when they’re not manually reconciling invoices? What do we want our HR team doing when onboarding is orchestrated automatically? The answer to those questions — judgment-intensive, relationship-intensive, strategic work — becomes the foundation of the workforce transformation narrative.

Use natural attrition where appropriate. For departments where automation will meaningfully reduce headcount requirements over time, natural attrition is a far less disruptive path than reactive workforce reduction. Building this into the hyperautomation roadmap from the beginning allows organizations to let positions evolve without the morale damage of visible layoffs tied to automation initiatives.

Building Your Hyperautomation Roadmap — Phase by Phase

Most organizations that stall in hyperautomation do so because they tried to do too much at once, or because they started building before they understood what they were building toward. A phased approach that builds capability systematically — rather than deploying technology across all departments simultaneously — consistently outperforms the big-bang approach.

Phase 1: Foundation (Months 1–4)

The foundation phase is about learning before building. Key activities:

- Deploy process mining across two to three high-value processes to establish a fact base for prioritization decisions.

- Stand up CoE governance structures — not a large team, but defined roles, standards documentation, and decision-making protocols.

- Conduct a technology audit of existing automation investments to identify what can be extended versus what needs to be replaced.

- Identify and train three to five automation champions in target business units — the people who will bridge technical teams and business stakeholders.

- Select a pilot process in one department — high-volume, well-defined, non-critical enough that learning from failure is acceptable.
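
To make the "fact base" from process mining concrete, here is a minimal sketch of its core calculation: grouping an event log into process variants and comparing their frequency and cycle time. The event log, case IDs, and activity names are invented for illustration, and a real process mining tool does far more than this.

```python
from collections import Counter, defaultdict

# Hypothetical event log: (case_id, activity, hours_since_case_start).
EVENT_LOG = [
    ("INV-1", "receive", 0), ("INV-1", "match_po", 2),
    ("INV-1", "approve", 5), ("INV-1", "pay", 24),
    ("INV-2", "receive", 0), ("INV-2", "match_po", 1),
    ("INV-2", "approve", 4), ("INV-2", "pay", 20),
    ("INV-3", "receive", 0), ("INV-3", "match_po", 3),
    ("INV-3", "manual_fix", 30), ("INV-3", "match_po", 31),
    ("INV-3", "approve", 40), ("INV-3", "pay", 72),
]


def summarize_variants(events):
    """Group events by case, then report count and mean cycle time
    for each distinct activity sequence (variant)."""
    cases = defaultdict(list)
    for case_id, activity, hours in events:
        cases[case_id].append((hours, activity))

    counts = Counter()
    total_hours = defaultdict(float)
    for steps in cases.values():
        steps.sort()  # order each case's events by timestamp
        variant = tuple(activity for _, activity in steps)
        counts[variant] += 1
        total_hours[variant] += steps[-1][0] - steps[0][0]

    # Most frequent variants first.
    return [
        (variant, n, total_hours[variant] / n)
        for variant, n in counts.most_common()
    ]


for variant, n, avg_hours in summarize_variants(EVENT_LOG):
    print(f"{n} case(s), {avg_hours:.0f}h avg: {' > '.join(variant)}")
```

Even on toy data, the rework loop through `manual_fix` stands out as the slow variant, which is the kind of evidence that should drive pilot selection.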

Phase 2: Pilot and Prove (Months 4–10)

Execute the pilot with production-level rigor: governance documentation, performance monitoring, and a set of success metrics defined before deployment. Use the pilot to surface integration challenges, exception patterns, and workforce response, all of which will inform the scaling approach.

Expand to two or three additional processes in the same department or an adjacent one. By the end of this phase, the organization should have:

- Demonstrated ROI from at least two live automations.

- A functional bot monitoring and maintenance capability.

- A clear sense of where integration complexity sits — your ERP’s API limits, your legacy systems’ data quality, your orchestration platform’s real-world performance.

- A workforce change management playbook validated by actual experience.
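
One way to keep the "prove" step honest is to write the success criteria down as data before go-live and evaluate the pilot against them mechanically. The sketch below assumes a simple ROI definition (net benefit divided by cost) and uses made-up metric names, thresholds, and measurements; none of these figures come from a real program.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    """One success criterion, fixed before the pilot goes live."""
    name: str
    threshold: float
    higher_is_better: bool


def roi_percent(annual_benefit: float, total_cost: float) -> float:
    """Simple ROI: net benefit as a percentage of cost."""
    return (annual_benefit - total_cost) / total_cost * 100


def evaluate_pilot(targets, observed):
    """Return {metric name: True/False} for each pre-agreed target."""
    results = {}
    for t in targets:
        value = observed[t.name]
        if t.higher_is_better:
            results[t.name] = value >= t.threshold
        else:
            results[t.name] = value <= t.threshold
    return results


# Hypothetical targets and measurements for an invoice-processing pilot.
targets = [
    Target("roi_percent", 100.0, higher_is_better=True),
    Target("cycle_time_hours", 24.0, higher_is_better=False),
    Target("exception_rate", 0.05, higher_is_better=False),
]
observed = {
    "roi_percent": roi_percent(annual_benefit=400_000, total_cost=100_000),
    "cycle_time_hours": 18.0,
    "exception_rate": 0.08,
}
results = evaluate_pilot(targets, observed)
print(results)
```

In this invented example the pilot clears its ROI and cycle-time targets but misses on exception rate, which is exactly the kind of finding that should shape the scaling plan rather than be averaged away.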

Phase 3: Scale Across Departments (Months 10–24)

With a proven foundation, the scaling phase expands automation across departments in priority order: typically finance and HR first (high ROI, well-understood processes), then supply chain and operations (higher complexity but transformative impact), then customer service and IT (both require sophisticated AI components that benefit from the organizational learning accumulated in earlier phases).

Key discipline in this phase: resist the temptation to customize every implementation. The organizations that scale fastest maintain a library of reusable automation components — pre-built integrations, validated AI models, tested workflow templates — that business units can deploy and configure without rebuilding from scratch each time.

Phase 4: Optimize and Orchestrate (Month 24+)

The mature hyperautomation state is characterized by continuous optimization rather than discrete projects. Process intelligence platforms surface improvement opportunities automatically. Agentic AI systems handle increasingly complex exception management. The CoE shifts from primarily building new automations to managing a self-improving automation estate — monitoring performance, governing AI behavior, and identifying where the next wave of process intelligence should be applied.

Gartner projects that 30% of enterprise process automation will be operating in this self-optimizing mode by the end of 2026. For the organizations that get there, the operational advantage compounds in ways that competitors running conventional automation simply cannot match.

What Separates the Organizations That Win

After examining the data, the case studies, and the failure patterns, a clear picture emerges of what distinguishes hyperautomation programs that deliver compounding value from those that plateau at isolated efficiencies.

They start with process intelligence, not tools. The first question is always “what are we automating and why?” — answered with data from process mining, not assumptions from an executive workshop. The technology selection follows the process understanding.

They treat governance as infrastructure, not overhead. The CoE exists before the first bot goes live. Standards, monitoring, accountability, and audit trail requirements are designed into automations from day one, not retrofitted when a compliance question arises.

They invest in the human transition explicitly. The workforce change management budget is line-itemed alongside the technology budget, not treated as an afterthought. Role redesign happens in parallel with process automation, not after it.

They federate without fragmenting. The best hyperautomation programs operate on a centralize-and-distribute model: central standards and infrastructure, distributed business ownership. This maintains coherence while preserving the agility that makes automation valuable at the department level.

They measure outcomes, not activity. The measure of a hyperautomation program isn’t how many bots are running or how many processes have been automated. It’s the business outcomes those automations enable: reduction in operating costs, improvement in process cycle times, increase in audit compliance rates, reduction in customer resolution times. When measurement focuses on outcomes rather than activity, the organization builds automations that matter rather than automations that just exist.

The market estimates of up to $169 billion, the 330% ROI averages, the headline case studies: these are real, but they don’t happen by accident. They happen in organizations that made deliberate architectural choices, invested in the governance and workforce infrastructure to support them, and stayed committed long enough to move past the pilot phase into compounding scale. That’s the actual story. Everything else is just technology shopping.

Key Takeaways for 2026

“The organizations achieving 300%+ ROI from hyperautomation aren’t doing it because they bought better software. They’re doing it because they built better systems — of governance, discovery, and change management — around the software they deployed.”

- Hyperautomation is a stack, not a product. RPA + AI/ML + process mining + agentic AI + orchestration, working together, is fundamentally different from any of those components deployed independently.

- Process mining is non-negotiable. Automating processes without first understanding how they actually run — not how they’re documented — produces fast, expensive mistakes at scale.

- Finance and HR offer the fastest ROI. High-volume, well-structured processes with measurable outcomes make them the best starting points for building organizational capability to scale.

- Supply chain hyperautomation is where the highest-magnitude outcomes live. Predictive maintenance, demand forecasting, and disruption response automation create advantages that compound at an enterprise scale few other initiatives can match.

- Governance must precede scale. The 70% failure rate isn’t a technology problem. It’s a governance problem that technology gets blamed for.

- Workforce transformation is part of the deliverable. An automation program that succeeds technically but fails humanly hasn’t succeeded. Role redesign, upskilling, and change communication are core workstreams, not supporting activities.

- The federated CoE model outperforms both centralized control and decentralized chaos. Central standards, distributed ownership — Heineken’s model is the template.

- Phase your approach. Foundation → Pilot → Scale → Optimize. Organizations that skip phases spend more and get less.