There’s a question that every enterprise AI team eventually faces, usually around month eight of a project that was supposed to take three: why is this so hard? The pilot worked. The demo impressed the board. The vendor’s benchmarks were solid. The proof-of-concept delivered exactly what the team hoped for. And yet — here you are, stalled.

This is not an unusual experience. According to Forrester and Anaconda’s 2026 research, 88% of AI pilots never reach production. Not 20%. Not 40%. Eighty-eight percent. Only 1 in 8 AI prototypes ever becomes an operational system delivering measurable business value. The Deloitte 2026 State of AI report, surveying 3,235 leaders across 24 countries, found that 79% of enterprise AI projects fail to reach production with measurable ROI. S&P Global tracked a sharp rise in AI project abandonment — 42% of companies scrapped most of their AI initiatives in 2025, up from just 17% the year before.

The gap isn’t technological. The models are better than they’ve ever been. The gap is structural, financial, and organizational, and it’s getting wider. BCG’s landmark 2025 research found that 60% of companies are generating no material AI value, while a small group of “future-built” leaders is opening a lead that may be impossible to close.

This article is about the specific, measurable reasons why pilots stall before they become assets — and exactly what the companies in the successful 12% are doing differently. Not theory. Not vendor sales material. The actual math, the real bottlenecks, and the frameworks that convert pilots into enterprise ROI.

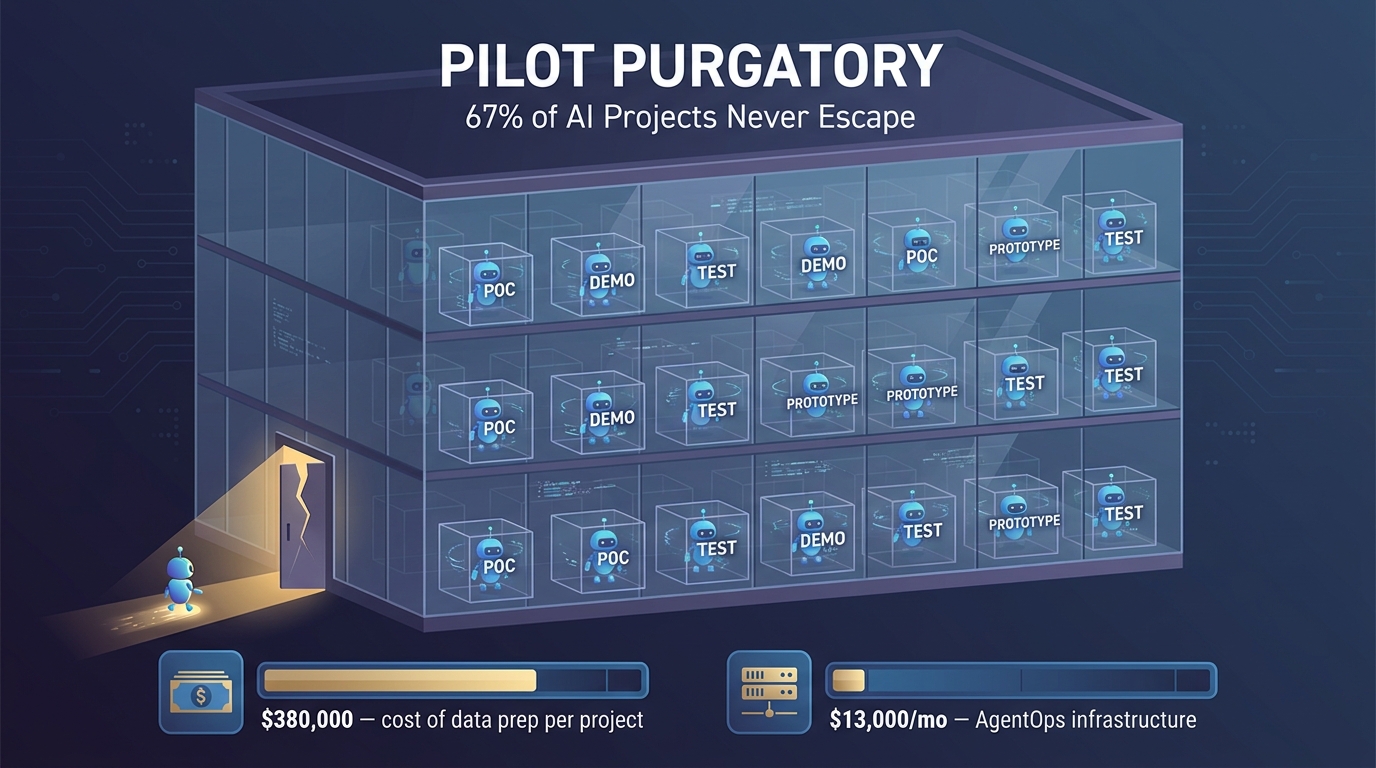

What “Pilot Purgatory” Actually Costs the Enterprise

The term “pilot purgatory” describes a specific organizational dysfunction: the enterprise that is perpetually running AI proofs-of-concept, generating impressive demos, circulating optimistic slide decks — but never actually deploying anything that moves the business. McKinsey estimates that nearly two-thirds of organizations are currently stuck here. Gartner predicted that 30% of GenAI projects would be abandoned post-POC by the end of 2025, and 60% of AI projects lacking adequate data support would be scrapped entirely through 2026.

What makes this particularly expensive is that pilot purgatory doesn’t feel like failure from the inside. Every project is “promising.” Every roadblock is “temporary.” The cost, however, is anything but temporary.

The Direct Financial Drain

Data preparation alone costs between $100,000 and $380,000 per project, according to AgentMarketCap’s 2026 cost analysis. Production-grade AgentOps infrastructure runs $3,200 to $13,000 per month. When a pilot drags on for six to twelve months before being quietly shelved, those costs are simply written off — with nothing to show for them. Across a portfolio of ten to fifteen pilots (a number that’s common in mid-market enterprises), the cumulative burn is significant.

IDC’s late-2025 survey of 318 enterprises with more than 1,000 employees found that 96% reported AI deployment costs running higher than expected. Not slightly over — meaningfully over. And 71% of those enterprises admitted they lacked visibility into where the costs were actually coming from. This is the “hidden AI tax”: a distributed, poorly tracked drain on the innovation budget that makes it look like AI isn’t working, when the real problem is that the financial model for AI deployment has never been properly designed.

The Opportunity Cost Nobody Tracks

Beyond direct spend, there’s the cost of engineering and leadership time. When senior technical staff spend eighteen months cycling through pilots that never ship, the opportunity cost is enormous — both in terms of what they could have been building and in terms of organizational morale. RAND’s 2024 study of 65 enterprise AI programs found consistent evidence of team fatigue and disillusionment among technical staff caught in recurring pilot cycles. The talent implications alone should concern any enterprise running more pilots than production systems.

The compound effect is a loss of institutional momentum. Teams that have been burned by stalled pilots become risk-averse. They scope subsequent projects smaller, build in more review checkpoints, and require longer internal approval cycles — all of which further slows the path from pilot to production. Pilot purgatory, left unchecked, is self-reinforcing.

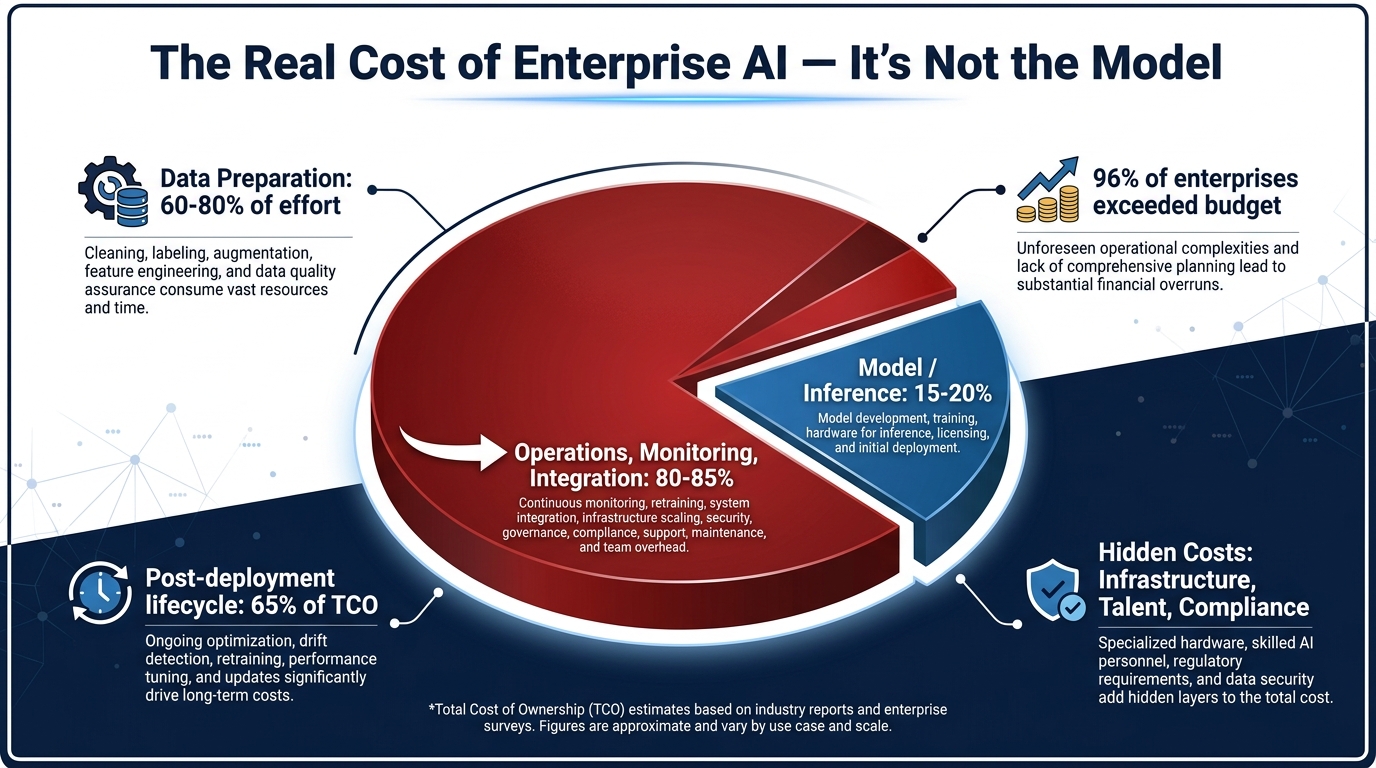

The Real Math: Why the Model Is Only 15–20% of Your Total AI Cost

One of the most persistent and damaging myths in enterprise AI is that the primary cost driver is the AI model itself — the inference, the API calls, the compute. When executives build business cases around AI investments, they typically anchor on model licensing, vendor fees, and GPU costs. This is the wrong frame, and it’s a significant reason why so many projects overrun their budgets and underdeliver on their ROI projections.

The actual math is almost the reverse of what most teams expect:

- Model and inference costs: 15–20% of total AI TCO

- Operations, integration, monitoring, and maintenance: 80–85% of total AI TCO

According to Maiven’s 2026 analysis citing IDC data, the operational layer — data pipelines, security and compliance infrastructure, system integration, ongoing monitoring, human-in-the-loop review workflows, and post-deployment maintenance — consistently accounts for the majority of AI spending. CIO.com’s 2025 survey found that approximately 25% of enterprises underestimated their AI costs by 50% or more. Post-deployment lifecycle costs alone represent roughly 65% of total project lifetime spend, per Keyhole Software’s 2026 analysis.
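As a rough illustration of what that split implies for budgeting, the sketch below grosses up a model-only estimate into an implied total cost of ownership. The function name `implied_total_tco` and the $200,000 model budget are hypothetical; only the 15–20% share comes from the figures above.

```python
def implied_total_tco(model_cost: float, model_share: float) -> float:
    """Gross up a model-only budget to an implied total cost of ownership.

    model_share is the fraction of total TCO attributed to model and
    inference costs (0.15 to 0.20 in the figures cited above).
    """
    if not 0 < model_share <= 1:
        raise ValueError("model_share must be in (0, 1]")
    return model_cost / model_share


# Hypothetical business case that budgets only $200,000 for model and inference.
model_budget = 200_000
low_total = implied_total_tco(model_budget, 0.20)   # model is 20% of TCO
high_total = implied_total_tco(model_budget, 0.15)  # model is 15% of TCO

print(f"Implied total TCO: ${low_total:,.0f} to ${high_total:,.0f}")
```

Under these assumptions, a business case anchored on the model line item alone would be understating the full cost by a factor of five or more.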

Where the Budget Actually Goes

Data preparation is consistently cited as the most labor-intensive and under-budgeted phase of any AI deployment. It accounts for 60–80% of total project effort. This isn’t just cleaning spreadsheets — it means resolving schema conflicts between legacy systems, creating governance frameworks for data access, building audit trails for regulatory compliance, and, in many cases, discovering that the data the pilot used doesn’t actually exist at production scale in a usable form.

The three-year total spend on an enterprise AI deployment consistently runs 2–3x the initial development cost, according to Keyhole’s analysis. That means a system that costs $500,000 to build will cost $1–1.5 million in total over three years, with $500,000 to $1 million of that spent after the build is finished. Most business cases don’t model this. Most pilot proposals don’t mention it.
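The 2–3x figure can be worked through in a few lines. This is a minimal sketch that reads the multiple as total three-year spend divided by build cost; that reading, and the helper name `three_year_spend`, are assumptions rather than anything taken from Keyhole’s analysis.

```python
def three_year_spend(build_cost: float, multiple: float) -> dict[str, float]:
    """Split three-year total spend into build and post-build portions.

    Assumes `multiple` is total three-year spend divided by build cost.
    """
    total = build_cost * multiple
    return {"build": build_cost, "post_build": total - build_cost, "total": total}


# The $500,000 build from the example above, at both ends of the 2-3x range.
for multiple in (2.0, 3.0):
    spend = three_year_spend(500_000, multiple)
    print(
        f"{multiple:.0f}x: total ${spend['total']:,.0f}, "
        f"of which ${spend['post_build']:,.0f} comes after the build"
    )
```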

The Tool Sprawl Problem

A secondary cost driver that’s emerged specifically in 2026 is what Maiven calls the “hidden AI tax from tool sprawl.” As enterprises run multiple concurrent AI pilots, they tend to accumulate a fragmented ecosystem of different vendors, platforms, and infrastructure components, each with its own licensing, integration overhead, and operational requirements. Without centralized governance, this sprawl drives up operational costs quickly and creates fresh integration risk every time an individual component changes its API or pricing model.

The implication for budget planning is direct: any AI initiative that doesn’t account for full operational TCO in its ROI model is not modeling ROI at all. It’s modeling the cheapest possible version of the first six months.

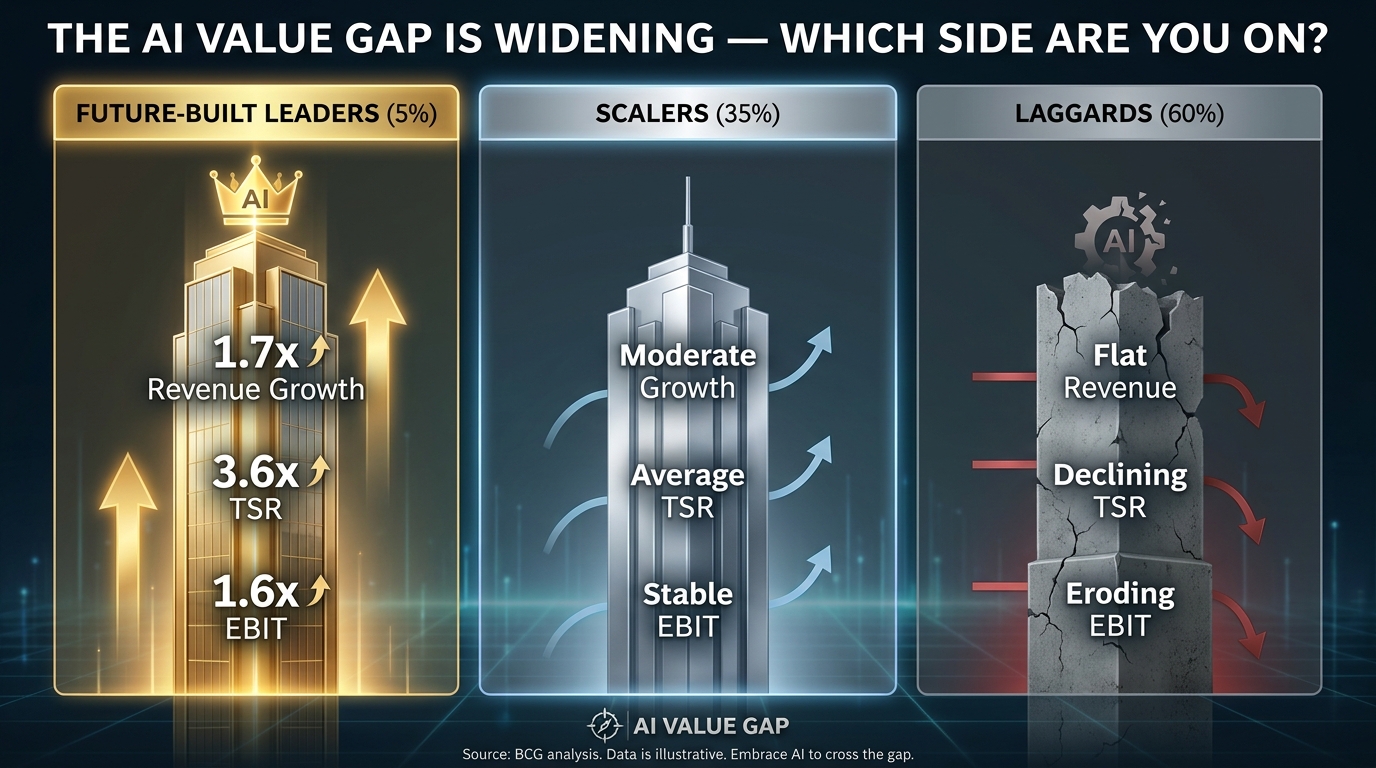

The BCG Value Gap: What the Top 5% Do That the Other 95% Don’t

BCG’s September 2025 survey of 1,250 executives across 9 industries and 25+ sectors produced what is arguably the most important data point in enterprise AI right now: the value gap between AI leaders and everyone else is not just large — it’s accelerating.

BCG categorized the surveyed companies into three groups:

- Future-built leaders (5%): Achieving 1.7x revenue growth, 3.6x three-year total shareholder return, and 1.6x EBIT margin versus laggards.

- Scalers (35%): Generating AI value, but at a slower pace and with lower returns.

- Laggards (60%): Minimal to no material AI value creation.

The performance gap between these groups is not primarily explained by which AI models they use or how much they spend on technology. It’s explained by how they approach the entire system around AI deployment.

What Leaders Actually Do Differently

The future-built leaders invest 2x more in AI than laggards — but they invest it differently. Rather than spreading investment across dozens of simultaneous pilots, they concentrate resources on a smaller number of high-impact use cases that are explicitly tied to P&L outcomes. BCG found that 70% of AI value for leaders comes from R&D, innovation, and marketing functions — not from back-office automation, which is where most laggards focus their first AI investments.

Leaders are also dramatically further ahead on agentic AI. In 2025, agentic AI drove 17% of total AI value among leading companies, with BCG projecting this to rise to 29% by 2028. Among leaders, 33% are actively using agentic AI in production. Among laggards, that number rounds to zero. This is a compounding advantage: agentic systems handle multi-step workflows with minimal human intervention, which means the operational leverage is significantly higher than single-purpose AI tools.

The Organizational Posture Difference

Perhaps the most important difference is structural. Leaders treat AI as a core business capability requiring dedicated investment, executive sponsorship, and cross-functional accountability. Laggards treat AI as an IT initiative with a technology budget. That distinction sounds abstract until you examine what it means in practice: leaders have named owners for AI products, those owners have budget authority, and AI outcomes are tied to executive compensation. Laggards have AI “projects” that live in IT roadmaps and compete for resources with every other technology initiative.

McKinsey’s November 2025 State of AI report confirmed this pattern: 88% of organizations use AI in at least one function, but fewer than 1 in 10 AI agents are past the pilot stage. Only 39% of organizations report any material EBIT impact from AI. The gap isn’t about starting — nearly everyone has started. It’s about the organizational capacity to convert a start into a sustained competitive asset.

The Measurement Trap: Why “Adoption” Is Not an ROI Metric

Ask most enterprise AI teams how they measure success, and the answer will typically include some version of: user adoption rates, engagement metrics, number of active users, queries per day, or time saved per user. These are all proxy metrics — and they are almost completely disconnected from the financial outcomes that executives and boards actually care about.

The Deloitte 2026 report found that 59% of organizations spend more than $1 million per year on AI, yet only 29% see significant ROI. The gap between spend and return isn’t primarily caused by bad technology — it’s caused by measuring the wrong things and therefore making decisions optimized for the wrong outcomes.

The Adoption-to-Value Disconnect

An AI tool can have 95% adoption and still deliver zero P&L impact. This happens regularly when the tool is solving for convenience rather than business outcome. If an AI writing assistant helps employees draft emails faster, but those employees are not in a bottleneck that was limiting revenue, the time saved never flows through to financial results. MIT’s 2025 study found that 95% of enterprise AI pilots deliver zero measurable P&L impact — not because they don’t work, but because they are never designed to connect to a specific financial outcome.

CloudGeometry’s 2026 framework articulates this precisely: the difference between a successful AI initiative and a failed one often comes down to whether the success metric is defined as “AI improves [specific metric] from [baseline] to [target] by [date]” — or whether it’s defined as something like “improve employee productivity” or “increase AI adoption.” The first is measurable and tied to outcomes. The second is a project management metric that proves nothing about business value.
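One way to make that template hard to dodge is to encode it as a small record that cannot be created without a metric, baseline, target, and date. The sketch below is illustrative only: the `SuccessMetric` class and the claims-processing numbers are hypothetical and are not part of CloudGeometry’s framework.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SuccessMetric:
    metric: str
    baseline: float
    target: float
    deadline: date

    def statement(self) -> str:
        """Render the metric in the 'from [baseline] to [target] by [date]' form."""
        return (
            f"AI improves {self.metric} from {self.baseline:g} "
            f"to {self.target:g} by {self.deadline.isoformat()}"
        )

    def progress(self, observed: float) -> float:
        """Fraction of the baseline-to-target distance covered so far."""
        span = self.target - self.baseline
        if span == 0:
            raise ValueError("target must differ from baseline")
        return (observed - self.baseline) / span


# Hypothetical example; the values are illustrative, not from any cited study.
claims = SuccessMetric(
    "average claims processing time (hours)", 48, 24, date(2026, 9, 30)
)
print(claims.statement())
print(f"{claims.progress(36):.0%} of the way to target")
```

Because progress is measured relative to the baseline-to-target span, the same calculation works whether the goal is to push a number up or down.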

What ROI Metrics Actually Look Like

Companies that successfully scale AI pilots define value at the outset in terms of one of the following:

- Revenue impact: New revenue enabled, conversion rate improvement, average order value increase

- Cost reduction: FTE-equivalent work eliminated, error rates reduced, processing time cut

- Risk reduction: Compliance failures avoided, fraud detected, downtime prevented

- Backlog clearance: Volume of work processed without headcount increase

The Olakai 2026 ROI playbook introduces a useful framework called SEE-MEASURE-DECIDE-ACT, which structures pilots as 30–45-day experiments with pre-committed KPIs. The DECIDE phase uses those KPIs to make explicit scale/sunset decisions, not to find reasons to continue regardless of outcome. Top-performing firms using this approach return $10.30 per dollar invested, versus $3.70 for the average enterprise deploying AI without a structured measurement framework.

Data Readiness: The Bottleneck Nobody Wants to Admit

In virtually every post-mortem analysis of a failed AI scaling project, data readiness appears as a primary or contributing factor. Gartner’s February 2025 research found that 63% of enterprises lack AI-ready data practices. Gartner also projected that 60% of AI projects lacking adequate data support would be abandoned through 2026. HiveMQ’s 2026 manufacturing survey found 68% of manufacturers stuck in pilots or POCs, with 48% citing legacy system integration and data silos as the primary blocker.

The pattern is consistent across industries: pilots use curated, cleaned datasets that are carefully prepared for the demonstration. Production environments use real data — which is messy, incomplete, distributed across siloed systems, and governed by access controls that weren’t designed with AI in mind.

The Curated Data Fallacy

When an AI system is developed on a curated dataset, the team is essentially training and testing a system in conditions that will never exist in production. The model performs well, the pilot succeeds, and the organization moves toward scaling — only to discover that the production data environment looks nothing like what the model was built on. This is not a failure of the AI. It’s a failure of the pilot design.

Successful enterprises address this by running “data readiness audits” before any pilot begins — not during the scaling phase. This audit maps every data source the production system will need to access, identifies schema conflicts and access restrictions, and estimates the engineering effort required to normalize and connect those sources. Projects that invest in this upfront work have dramatically higher production conversion rates.

The IDC-Lenovo Finding on Scale

IDC and Lenovo’s joint research produced a striking statistic: for every 33 AI proofs-of-concept an enterprise runs, only 4 reach production. That is roughly 1 in 8, or about 12%. But when enterprises disaggregate this data by data readiness score, the conversion rate for high data readiness organizations is 3–4x higher than for those with poor data infrastructure. The bottleneck isn’t AI capability. It’s the enterprise’s ability to feed AI systems with the data they need to function at scale.

Governance Without Bureaucracy: The Hybrid Model That Scales

Enterprise AI governance has a reputation problem. In many organizations, “governance” has become synonymous with “committee approval processes that take six months” — which effectively kills the agility required to move AI projects from pilot to production within any commercially relevant timeframe. The result is a paradox: governance exists to manage risk, but poorly designed governance creates so much friction that it generates a different kind of risk — the risk of being outpaced by competitors who are willing to move faster.

The governance models that work in 2026 share a common architecture: central standards, decentralized execution.

The Hybrid Governance Structure

StackAI’s November 2025 analysis of successful enterprise AI deployments found that hybrid governance consistently outperforms both fully centralized and fully decentralized models. The structure works as follows:

- A central AI governance body sets non-negotiable standards: data access protocols, model transparency requirements, regulatory compliance checkpoints, and security review gates. This body operates on fixed review cycles and uses automated tooling to reduce review time.

- Domain-level teams (business units, functions) are empowered to make all decisions within those standards autonomously. They don’t need central approval to deploy an AI system that meets the established criteria. They only escalate decisions that require exceptions.

This structure gives the center what it needs (consistent risk management) and gives business units what they need (speed). It also creates institutional knowledge: when domain teams operate within a consistent framework, their learnings are transferable. A successful deployment in the finance function becomes a replicable pattern for HR, then operations, then customer service.

Shadow AI: The Governance Failure Mode to Watch

The governance failure mode that emerged most prominently in 2026 is “shadow AI” — employees using unapproved AI tools because the official approval process is too slow. ETR’s February 2026 technology trends report identified shadow AI as a significant and growing source of compliance and security risk in enterprises that have centralized governance without adequate speed. When employees route around governance, the organization gets the worst of both worlds: ungoverned AI use and the false impression that governance is working.

The solution isn’t stricter enforcement. It’s faster approval. Enterprises that have reduced their AI tool approval cycle to under two weeks — through pre-approved vendor lists, automated security scans, and pre-cleared use case templates — have largely eliminated shadow AI while maintaining genuine governance. Speed and rigor are not mutually exclusive.

The ROI Conversion Framework: Making the Go/No-Go Decision Rigorous

One of the clearest distinctions between enterprises that scale AI successfully and those that don’t is the quality of their pilot-to-production decision process. In many organizations, this decision is essentially a political one: does the project have a champion with enough influence to push it through? In successful enterprises, it’s an analytical one: does the evidence warrant the investment required to scale?

The elements of a rigorous go/no-go decision include:

Pre-Committed Success Criteria

Before a pilot begins, the scaling criteria should already be defined: what specific performance threshold, measured how, over what time period, would justify a production investment? This forces honest conversations upfront about what “success” actually means and prevents the common failure mode where success criteria shift during the pilot to match whatever the pilot happened to achieve.

Gartner’s 2026 Agentic AI Pulse survey found that only 41% of agent deployments achieve positive ROI within 12 months, with 19% never reaching payback. The organizations in the 41% winning group consistently had pre-defined ROI models — they knew what payback looked like before they started.
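Pre-commitment is easier to enforce when the criteria are written down in a form that can be evaluated mechanically at the end of the pilot. The following is a minimal sketch of that idea; the `Criterion` class, the `decide` function, and the customer-service thresholds are all hypothetical examples rather than a published standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    threshold: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


def decide(criteria: list[Criterion], observed: dict[str, float]) -> str:
    """Return 'scale' only if every pre-committed criterion is met.

    A missing measurement counts as a failure, so a pilot cannot pass
    by simply not reporting a metric.
    """
    for criterion in criteria:
        if criterion.name not in observed:
            return "sunset_or_redesign"
        if not criterion.met(observed[criterion.name]):
            return "sunset_or_redesign"
    return "scale"


# Hypothetical criteria, fixed before the pilot starts.
criteria = [
    Criterion("resolution_rate", 0.80),
    Criterion("avg_handle_minutes", 6.0, higher_is_better=False),
]

print(decide(criteria, {"resolution_rate": 0.84, "avg_handle_minutes": 5.5}))
print(decide(criteria, {"resolution_rate": 0.84, "avg_handle_minutes": 7.2}))
```

The design choice worth noting is that the thresholds live in data defined up front, so moving the goalposts mid-pilot requires an explicit, visible change rather than a quiet reinterpretation.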

Portfolio-Level Thinking

Individual pilots are almost always evaluated in isolation. This is a mistake. CloudGeometry’s 2026 framework recommends managing AI initiatives as a portfolio — with explicit decisions about which pilots to promote to production, which to sunset, and which to hold for future cycles. The portfolio mindset introduces two disciplines that single-project evaluation misses: comparative resource allocation (which initiative has the highest marginal return on the next dollar?) and structured killing of underperformers (projects that are consuming resources without demonstrating traction are ended, not extended).

This is uncomfortable organizationally. Killing a pilot that a senior executive championed requires both analytical rigor and cultural maturity. But organizations that can do it — that can sunset underperformers without political fallout — dramatically accelerate their overall AI ROI by concentrating resources on the initiatives that are actually working.
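The two portfolio disciplines can be sketched as a simple ranking exercise. The example below is a deliberately naive greedy allocation, not a method taken from CloudGeometry’s framework: the `Initiative` fields, the `allocate` function, the 1.0 cutoff, and every dollar figure are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Initiative:
    name: str
    next_investment: float         # next funding increment requested
    expected_annual_return: float  # projected annual benefit of that increment

    @property
    def marginal_return(self) -> float:
        return self.expected_annual_return / self.next_investment


def allocate(initiatives, budget, min_return=1.0):
    """Fund the highest marginal return first; sunset anything below min_return.

    Greedy and therefore not guaranteed optimal, but it makes the
    promote / hold / sunset decision explicit for each initiative.
    """
    funded, held, sunset = [], [], []
    ranked = sorted(initiatives, key=lambda i: i.marginal_return, reverse=True)
    for item in ranked:
        if item.marginal_return < min_return:
            sunset.append(item.name)
        elif item.next_investment <= budget:
            funded.append(item.name)
            budget -= item.next_investment
        else:
            held.append(item.name)
    return funded, held, sunset


# Hypothetical portfolio and budget.
portfolio = [
    Initiative("claims triage", 300_000, 900_000),
    Initiative("contract review", 200_000, 400_000),
    Initiative("email assistant", 150_000, 60_000),
    Initiative("demand forecast", 400_000, 600_000),
]
funded, held, sunset = allocate(portfolio, budget=600_000)
print("Promote:", funded)
print("Hold:   ", held)
print("Sunset: ", sunset)
```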

The Production Readiness Checklist

Successful enterprises have developed explicit checklists for production readiness that go beyond technical functionality. Key criteria typically include:

- Data pipeline stability demonstrated over 60+ days of production-quality data

- Security and compliance review completed for all data accessed by the system

- Monitoring and alerting infrastructure in place, not planned

- Human escalation paths defined for model failures and edge cases

- Business owner (not IT owner) identified and committed to ongoing accountability

- ROI measurement infrastructure in place — not retrospective reporting, but real-time dashboards tied to business metrics
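A checklist like this only works as a gate if a missing item actually blocks promotion. As a minimal sketch, the six criteria above can be encoded as named checks, with anything unanswered treated as not done; the key names and the `readiness_gaps` helper are hypothetical.

```python
# One key per checklist item above; the names are illustrative.
READINESS_CHECKS = [
    "data_pipeline_stable_60_days",
    "security_compliance_review_done",
    "monitoring_alerting_live",
    "human_escalation_paths_defined",
    "business_owner_committed",
    "roi_dashboards_live",
]


def readiness_gaps(status: dict[str, bool]) -> list[str]:
    """Return the checks that are not yet satisfied.

    A check that is absent from `status` is treated as unmet, so
    "planned" or unreported items cannot slip through the gate.
    """
    return [check for check in READINESS_CHECKS if not status.get(check, False)]


# Hypothetical pilot: monitoring is still only planned, and ROI dashboards
# were never reported on.
status = {
    "data_pipeline_stable_60_days": True,
    "security_compliance_review_done": True,
    "monitoring_alerting_live": False,
    "human_escalation_paths_defined": True,
    "business_owner_committed": True,
}
gaps = readiness_gaps(status)
print("Ready for production" if not gaps else f"Blocked by: {gaps}")
```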

Case Studies: What Actually Scaled and Why

Theory is useful. Real examples are more useful. The following case studies represent AI projects that moved from pilot to measurable enterprise production — and the specific factors that made scaling possible.

Klarna: Customer Service at Scale ($60M in Documented Savings)

Klarna’s AI customer service deployment became one of the most cited enterprise AI case studies of 2025–2026, and for good reason. By Q3 2025, Klarna’s AI agents were handling work equivalent to 853 full-time employees, generating approximately $60 million in documented cost savings. The system handles a significant proportion of all customer service interactions, with resolution rates and satisfaction scores that match or exceed human agents for the majority of contact types.

What made this scalable? Several factors stand out. First, Klarna chose a high-volume, well-defined workflow — customer service for a fintech product — where the data was abundant and the success metrics were clear (resolution rate, handle time, customer satisfaction). Second, they invested heavily in the data infrastructure before scaling: conversation histories, resolution patterns, and escalation triggers were all systematically documented before the AI was trained. Third, the system was designed with explicit escalation paths — the AI knew when to hand off to humans, which maintained quality while building organizational confidence in the system.

Walmart: $75 Million in Annual Logistics Savings

Walmart’s logistics optimization AI reduced fuel consumption, improved truck utilization, and generated approximately $75 million in annual savings while cutting 72 million pounds of CO₂ emissions. Recognized by INFORMS for operational excellence, this is a case study in patient, data-intensive scaling.

The project required years of route, load, and utilization data before the model had enough signal to make meaningfully better decisions than existing optimization methods. The pilot didn’t try to solve everything at once — it focused specifically on truck utilization and route efficiency within a defined network of distribution centers before expanding. This scoped pilot approach gave the team control over data quality and allowed them to demonstrate ROI on a manageable scale before seeking the investment required for enterprise-wide deployment.

JPMorgan: 450+ Agentic AI Use Cases in Daily Production

JPMorgan’s AI deployment breadth is arguably the most impressive in financial services. With more than 450 distinct agentic AI use cases running in daily production as of 2026, the bank has developed a scaling infrastructure that most enterprises haven’t yet built. Key to their approach: a centralized AI platform that allows individual business units to deploy new use cases without rebuilding the underlying infrastructure each time. The governance model is exactly the hybrid structure described earlier — central standards, decentralized execution — and it’s what has allowed them to scale horizontally across hundreds of use cases without proportionally increasing governance overhead.

Salesforce: $5 Million in Legal Cost Reduction

Salesforce’s AI-driven contract automation reduced legal department costs by $5 million through automated contract review, clause extraction, and risk flagging. The key to scaling this from pilot to production was the availability of thousands of historical contracts that provided training signal, combined with a structured human review process that kept lawyers in the loop for high-risk decisions. The system didn’t replace legal judgment — it eliminated the routine categorization and review work that was consuming attorney time.

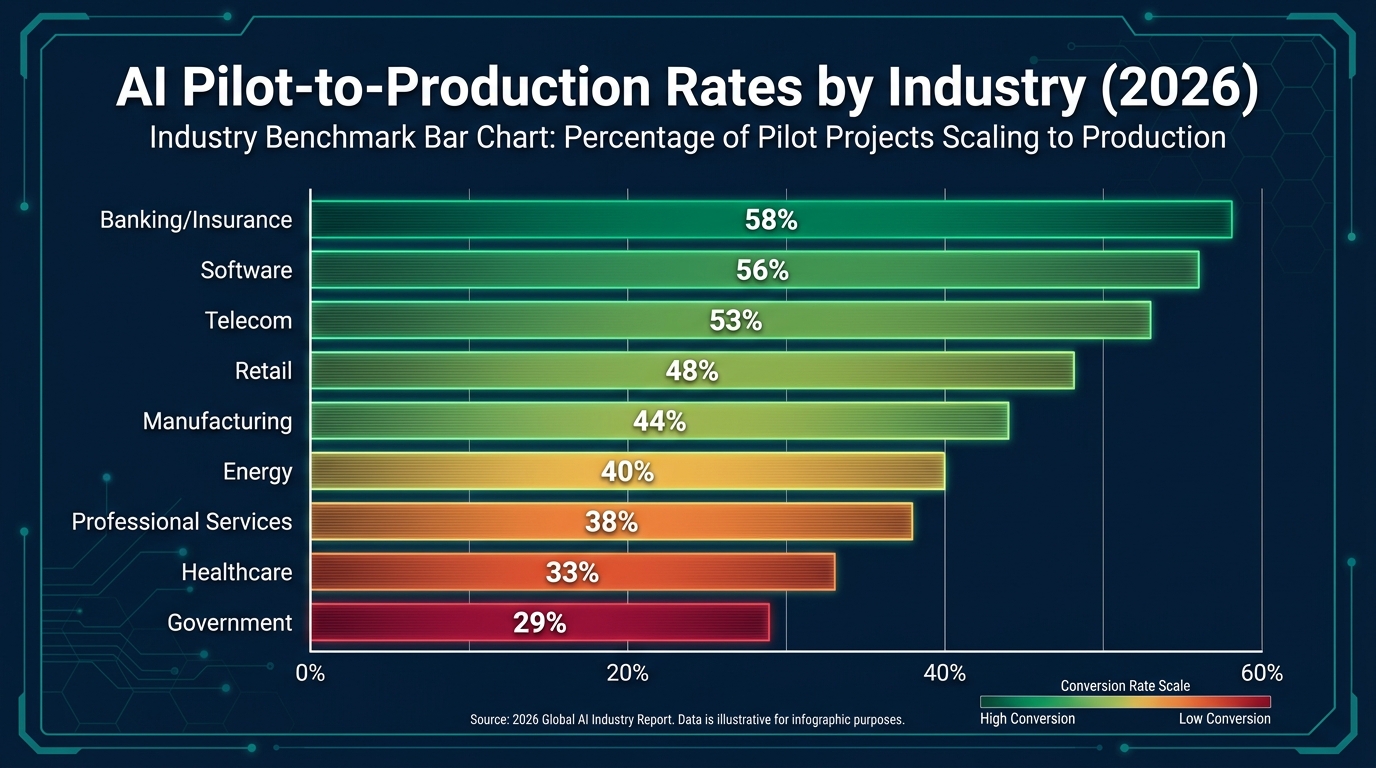

The Industry Conversion Gap: Why Banking Scales at 58% and Government at 29%

The industry-level pilot-to-production conversion data from Digital Applied’s 2026 analysis is striking in its variation:

- Banking & Insurance: 58% conversion

- Software: 56%

- Telecom: 53%

- Retail: 48%

- Manufacturing: 44%

- Energy: 40%

- Professional Services: 38%

- Healthcare: 33%

- Government: 29%

A nearly 30-percentage-point gap between top and bottom industries demands explanation — because it’s not primarily about data sophistication or technology budget.

Why Financial Services Leads

Banking and insurance have several structural advantages. First, they operate in high-volume, structured data environments. Transaction records, policy documents, claim histories, and customer communication logs are abundant, relatively clean, and already subject to governance frameworks. Second, the ROI metrics are unambiguous: cost per transaction, fraud detection rate, claims processing time. There’s no ambiguity about whether an AI system is delivering value. Third, regulatory pressure has actually helped — because financial services companies had to build compliance infrastructure around AI earlier than most industries, they developed the governance muscle that enables scaling.

Why Government Trails

Government agencies face the mirror-image set of conditions. Data is siloed across agencies and systems, often subject to complex access restrictions, and inconsistently formatted across jurisdictions. ROI metrics are harder to define in contexts where the goal is service delivery rather than profit. Procurement processes add months to vendor evaluation cycles. And political risk makes executives reluctant to deploy AI systems that might fail publicly. None of these are insurmountable, but they create a compounding set of headwinds that explains the 29% conversion rate.

The Healthcare Paradox

Healthcare’s 33% conversion rate is particularly notable given the enormous volume of interest in healthcare AI. The paradox is that healthcare has abundant, high-quality data — but that data is governed by HIPAA and other regulatory frameworks that make it genuinely difficult to use for training and deployment. The pilot-to-production path in healthcare requires navigating compliance requirements that don’t exist in most other industries, and many healthcare organizations lack the legal and compliance expertise to move efficiently through that process. This is a solvable problem — but solving it requires investment in compliance infrastructure, not just AI infrastructure.

The 12-Month ROI Playbook: A Practical Timeline

For enterprises that want to move specific AI initiatives from pilot to production ROI within a 12-month cycle, the following timeline reflects the patterns of organizations that have consistently achieved positive returns. The median ROI for enterprises deploying agentic AI at production scale is 171% globally (192% for US enterprises), with payback typically occurring within 7–9 months. The top quartile of deployments exceeds 540% ROI over 18 months, according to Digital Applied’s 2026 analysis.
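For readers who want to check their own projections against these benchmarks, the sketch below shows one common way to compute ROI and payback. The source does not state which formula Digital Applied used, so the definitions here (ROI as net benefit over cost, payback as the first month cumulative benefit covers the upfront cost) are assumptions, as are the function names and cash-flow figures.

```python
from typing import Optional


def roi_percent(total_benefit: float, total_cost: float) -> float:
    """ROI as net benefit divided by cost, expressed as a percentage."""
    return (total_benefit - total_cost) * 100 / total_cost


def payback_month(upfront_cost: float, monthly_benefit: list[float]) -> Optional[int]:
    """First month (1-indexed) in which cumulative benefit covers the upfront cost.

    Returns None if payback is not reached within the period given.
    """
    cumulative = -upfront_cost
    for month, benefit in enumerate(monthly_benefit, start=1):
        cumulative += benefit
        if cumulative >= 0:
            return month
    return None


# Under this definition, 171% ROI means $2.71 returned per $1.00 spent.
print(roi_percent(271, 100))

# Hypothetical deployment: $400,000 upfront, $50,000 net benefit per month.
benefits = [50_000] * 18
print(payback_month(400_000, benefits))
print(roi_percent(sum(benefits), 400_000))
```

In the hypothetical deployment, payback lands in month 8, inside the 7–9 month range cited above, while the 18-month ROI comes out at 125%.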

Months 1–2: ROI Definition and Data Audit

This phase is entirely non-technical in focus. The goal is to answer two questions before a line of code is written: what specific financial outcome are we targeting, and does our data environment support that target? The ROI definition process produces a written document specifying the baseline metric, the target improvement, the measurement methodology, and the criteria that would trigger a scale or sunset decision. The data audit maps every data source the system needs, assesses quality and access, and produces a realistic estimate of data preparation work.

Organizations that skip this phase are almost always the ones still in pilot purgatory twelve months later.

Months 3–5: Scoped Pilot with Production-Quality Data

The pilot runs on a sample of production data — not curated data prepared for the demo, but actual messy, real-world data from the systems the production deployment will use. This is deliberately uncomfortable. It forces the team to solve the data normalization and integration problems during the pilot phase, when the cost of those discoveries is low, rather than during scaling, when they become production-blocking issues.

The pilot scope should be narrow: one workflow, one business unit, a defined user population. Success criteria from the Phase 1 document are evaluated rigorously at the end of this phase. If they’re not met, the pilot is either redesigned or killed. No extensions without new evidence.

Months 6–8: Production Deployment and Measurement

The system moves to production — which means real users, real workflows, and real accountability for the business metrics. This is also when the ROI measurement infrastructure is validated: are the dashboards capturing the right data? Are the business metrics actually moving? Are there failure modes that weren’t visible in the pilot?

Organizations that achieve the 7–9 month payback benchmark typically reach this phase having already solved the major data and integration problems. Those that discover infrastructure issues here typically see payback extend to 12–18 months or longer.

Months 9–12: Scale Decision and Portfolio Allocation

At this point, there should be enough production data to make a rigorous scale/sunset decision. The questions are: is the ROI model tracking against projections? Are there adjacent use cases that can leverage the infrastructure already built? What would the next increment of investment return? Organizations with portfolio-level AI governance use this review to reallocate resources dynamically — doubling down on high performers, sunsetting underperformers, and seeding the next cohort of pilots.

The Companies That Will Win in AI Aren’t the Ones With the Best Models

The headline finding from all of this research is counterintuitive: the winners in enterprise AI are not the companies with the best AI technology. They’re the companies with the best AI operating systems — the combination of data infrastructure, governance architecture, measurement discipline, and organizational accountability that allows them to convert AI capabilities into sustained business outcomes.

The models are largely commoditizing. The frontier models available in 2026 are remarkably capable, and the gap between them is shrinking faster than the gap between organizations that can deploy AI effectively and organizations that can’t. The competitive moat, for any enterprise serious about AI, is operational, not technological.

What the 12% Do That the 88% Don’t

Distilled from the research, the distinguishing characteristics of the successful 12% of AI initiatives that reach production and deliver ROI are:

- They define ROI before they define the technology. Success criteria are financial and specific, set before the pilot begins, and enforced without political exceptions.

- They treat data infrastructure as a prerequisite, not a follow-on. Data readiness audits happen before pilot design, and data preparation is budgeted explicitly as a major project phase.

- They budget for the full TCO, not the model cost. Business cases include operational costs, data engineering, compliance infrastructure, and post-deployment maintenance — the 80–85% that most plans omit.

- They build hybrid governance. Central standards enable speed at the edge. Shadow AI is reduced not through enforcement but through fast-track approval processes.

- They kill pilots that aren’t working. Portfolio discipline — the ability to sunset an underperforming pilot without a political crisis — is one of the strongest predictors of overall AI program success.

- They concentrate on high-impact use cases. BCG’s research found that 70% of AI value for leaders comes from R&D, innovation, and marketing functions, not from the back-office automation that laggards prioritize.

- They move to agentic systems deliberately. The top-quartile deployments exceeding 540% ROI aren’t simple AI tools. They are multi-agent systems that handle entire workflows, which requires more infrastructure investment upfront but delivers disproportionate returns.

The Compounding Advantage

Perhaps the most important long-term implication is compounding. Every AI system that reaches production generates data, learnings, and infrastructure that make the next deployment faster and cheaper. Organizations currently in pilot purgatory are not just losing the value of their current initiatives — they’re falling further behind organizations that are accumulating production experience and proprietary data assets that will be increasingly difficult to replicate.

BCG’s data makes this concrete: the top 5% of AI leaders plan to invest 2x more in AI in 2026 than laggards, while expecting 2x more revenue uplift and 40% more cost savings. The compounding gap between these two groups is not going to close on its own. Every month of pilot purgatory widens it.

Three Actionable Starting Points

For organizations that recognize themselves in the 88% — running pilots, circulating promising demos, watching budgets accrete without production deployments — the path forward starts with three concrete actions:

- Audit your current pilot portfolio. For every active AI initiative, write down the specific financial metric it is designed to move, the baseline, the target, and the criteria for a scale or sunset decision. If you can’t write that down, the initiative doesn’t have a valid business case.

- Commission a data readiness assessment for your top three candidates for production deployment. Don’t start with the technology. Start with whether the data infrastructure exists to support a production system. If it doesn’t, budget the data work explicitly as Phase 1.

- Redesign your governance process around speed without sacrificing standards. If your AI tool approval cycle takes more than four weeks, it’s generating shadow AI risk and slowing your path to ROI. Pre-approved vendor lists and automated compliance checks can cut review cycles dramatically without lowering the bar.

The 12% aren’t smarter than the 88%. They aren’t using better models, and while the leaders do spend more, spending alone doesn’t explain the gap. They’ve simply resolved to treat the operational, financial, and organizational dimensions of AI deployment with the same rigor they applied to the technology itself, and that discipline, consistently applied, is what converts pilots into assets.